Your potential customer just asked ChatGPT which tools solve their exact problem. Your brand came up, but the description was completely wrong. Wrong positioning. Wrong use case. Maybe it even gave you credit for a competitor's feature. And just like that, the prospect moved on without ever visiting your site.

This isn’t a hypothetical horror story anymore. It's happening to SaaS brands right now, and most of us won’t even know until the damage is done. I've learned to see AI search as a brand risk problem first and foremost, not a content format problem.

The founders who win this next chapter aren’t the ones sprinkling in some FAQ schema and calling it a day. They’re the ones building a system. That means having a clear source of truth, making it technically easy to extract, monitoring it consistently, and having just enough governance to keep it all on track. Let’s get into how you can build this, even if your “team” is just you and a laptop.

AI search isn’t “search”—it’s a new layer of brand interpretation

Traditional search shows a link to your page. AI search summarizes your entire brand for the user. That single shift changes everything.

When someone asks a question on Perplexity, ChatGPT, or Gemini, they aren't browsing links. They're getting a synthesized answer that might blend your homepage, a competitor's blog, a G2 review from 2021, and some random forum thread. No click required. No chance for your beautifully designed homepage to set the record straight.

Where the redefinition happens in the journey (awareness → shortlist → evaluation)

I used to think a confused buyer would reach out with questions. They don't. They reach out after they’ve already put you in a box. They form their entire opinion, comparison set, and trust signal from a three-paragraph AI answer they got during their initial research. By the time they finally land on your site, they've already decided if you're "a tool for enterprise teams" or "something for scrappy startups." That framing came from the AI, not from you.

What “brand drift” looks like in practice

Brand drift in AI answers usually follows a few painful patterns. I’ve seen them all. Your product gets described with a competitor's key differentiator. An outdated pricing model is cited as fact. The AI thinks you're "for developers" when you actually serve growth teams. Or a limitation you fixed a year ago is presented as a current weakness. These aren't bugs that get patched automatically. They stick around until the underlying sources change.

Why AI gets your brand wrong (root causes you can control)

This is where most founders get stuck. We assume AI hallucinations are totally random, but they’re not. There are specific, fixable reasons why these answer engines distort your brand, and you have more control than you think.

Ambiguous messaging creates ambiguous answers

In our early days, we were so guilty of this. Our homepage said we "supercharge your workflow," our blog called us an "AI writing assistant," and our LinkedIn bio said we were a "content operations platform." When an AI model tried to make sense of it, what could it do? It averaged the three signals into something vague or just picked the one that seemed most confident. Inconsistent positioning across your own properties is one of the biggest own goals you can score here.

Fragmented sources beat your website when you don’t provide an extractable “truth”

Here's the uncomfortable truth: a forum thread from 18 months ago can carry more weight than your carefully crafted product page. Why? It might be easier for the AI to parse, or it might be corroborated by a few other sources. If you don't have clean, extractable pages that clearly define what your product is, what it does, and what it doesn't do, the model fills the gaps with whatever junk it can find.

Many teams, including mine, fix this by centralizing their positioning and product facts into a structured source of truth. We use DeepSmith's Deep IQ feature for this. It stores all our product details, voice guidelines, and messaging guardrails so every piece of content we publish is grounded in the same accurate context. Over time, that consistency is what AI answer engines tend to reflect back.

Technical unreadability (including JavaScript rendering) makes “good content” invisible

You can have the clearest positioning on the internet, but it won't matter if AI crawlers can't read it. JavaScript-heavy sites, especially ones that render content on the client side, are a huge blind spot. A bot shows up, sees a nearly empty HTML shell, and just moves on. Your content never gets indexed, extracted, or cited. It’s a technical problem that looks like a visibility problem.

Personalization and context change the answer (even with the same prompt)

Even if you do everything right, you have to accept one hard truth. There is no single "AI answer" about your brand. The user's location, their conversation history, and the platform they're using can all produce different responses to the same prompt. Your goal isn't to control one single answer. It's to create enough consistent, authoritative signals that most answers trend toward being accurate.

Build an AI-readable “source of truth” that answer engines can’t easily distort

The work here isn't about writing more content. It's about writing it in a specific way that gives AI systems clean, citable material to work with. Think of it as creating a brand instruction manual for the robots.

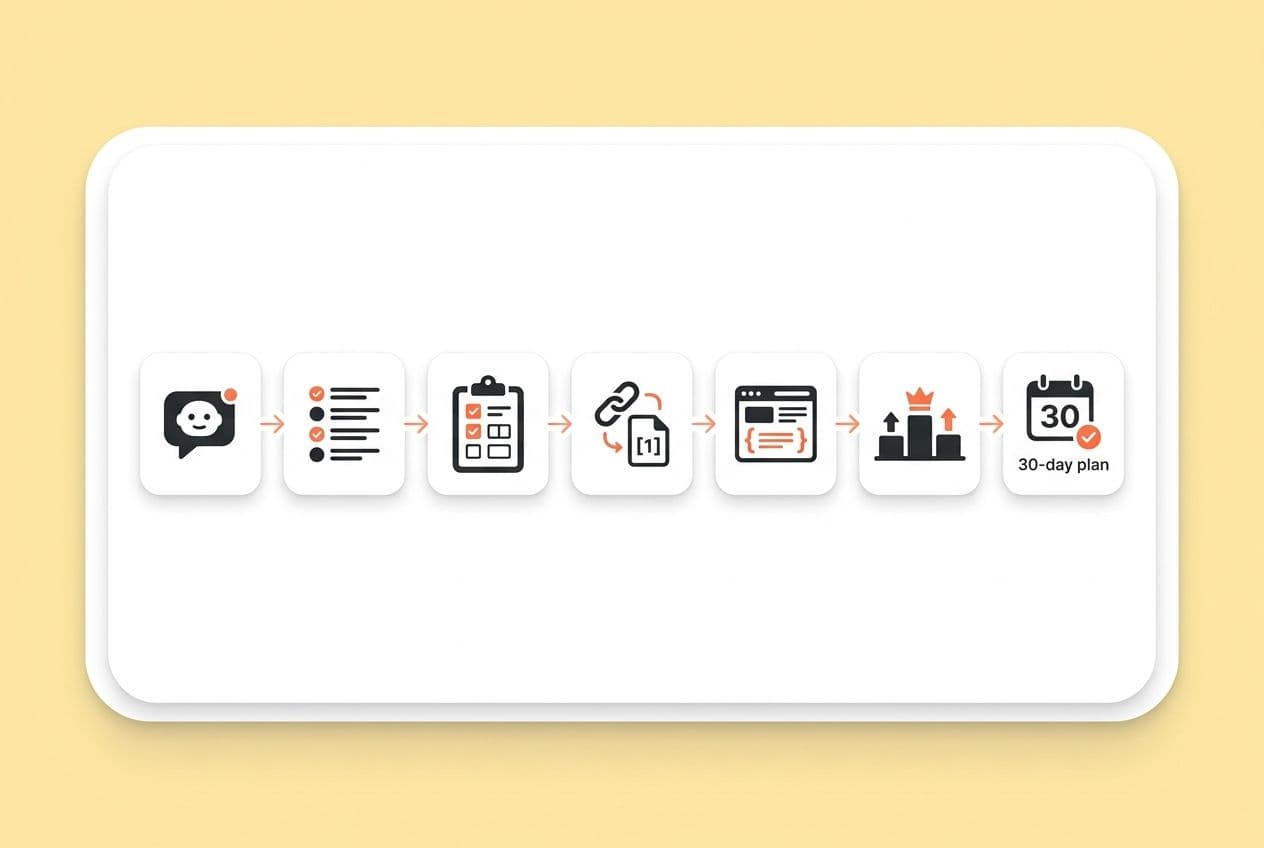

The 6 page types that reduce misrepresentation fastest

This is the playbook we run. It’s a set of six core pages that give an AI the clearest possible picture of who we are.

- Positioning page: A standalone page for "what we are and who we're for," written in plain language. It gives the AI a primary source for your identity.

- Product facts page: A list of specific capabilities, integrations, and tech specs. This is written for machines to read and provides structured data on what your product does.

- Use case pages: Pages for each persona or problem that show who gets value and how. These help the AI correctly match your tool with the right customer problems.

- Comparison pages: Your side of the story on how you differ from alternatives. If you don't publish your own, the AI will just use what your competitors say about you (and trust me, it’s never flattering).

- FAQ content: Real questions from your buyers, answered in your own words. This lets you shape the narrative around common objections.

- "We don't do this" guardrails: Explicit statements about what you don't do. This stops the AI from getting creative and assigning you features you don’t have.
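Here's a minimal Python sketch of the "single source of truth" idea. It assumes you keep your approved facts in one structured record and generate the product facts page from that record. The `SourceOfTruth` class, its fields, and the `ExampleApp` values are hypothetical placeholders. They are not DeepSmith's format or any standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class SourceOfTruth:
    """Hypothetical single record of approved positioning and product facts."""

    name: str
    category: str  # include the article, e.g. "a content operations platform"
    audience: str  # who the product is for, in plain language
    capabilities: list[str] = field(default_factory=list)
    integrations: list[str] = field(default_factory=list)
    we_dont_do: list[str] = field(default_factory=list)  # explicit guardrails

    def summary(self) -> str:
        # One self-contained sentence an answer engine could quote without context.
        return f"{self.name} is {self.category} for {self.audience}."

    def render_facts_page(self) -> str:
        # Plain-text page: summary first, then capabilities, integrations, guardrails.
        lines = [self.summary(), "", "What it does:"]
        lines += [f"- {item}" for item in self.capabilities]
        if self.integrations:
            lines += ["", "Integrations: " + ", ".join(self.integrations)]
        lines += ["", "What we don't do:"]
        lines += [f"- {item}" for item in self.we_dont_do]
        return "\n".join(lines)


facts = SourceOfTruth(
    name="ExampleApp",
    category="a content operations platform",
    audience="B2B growth teams",
    capabilities=["Plans and drafts long-form content", "Checks drafts against approved messaging"],
    integrations=["Google Docs"],
    we_dont_do=["We are not a general-purpose chatbot", "We don't offer an on-premise version"],
)
print(facts.render_facts_page())
```

Every page renders from the same record. A positioning change therefore happens in one place instead of drifting across your homepage, blog, and LinkedIn bio.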

“Citation-ready” writing patterns (without sounding robotic)

Just write like you're explaining it to a smart friend. Lead with the answer, then explain it. Use specific nouns instead of vague pronouns. Avoid internal jargon. Write in short, complete statements that can be lifted as standalone quotes. At the top of your key pages, add a one- or two-sentence summary that an AI can use without needing the rest of the page for context.

Guardrails for accuracy (what you should explicitly say “we don’t do”)

It felt really weird at first to advertise our limits, but it was a game-changer. If an AI thinks you have a feature you don't, it's probably because you never said you didn't. Add a "What we don't do" or "Who this isn't for" section to your key pages. This kind of explicit negative framing makes it much harder for an AI to overgeneralize what you can do.

Handling language and cultural variation for conversational queries

If you sell in multiple markets, translating keywords is not enough. Someone in France phrases a problem differently from someone in Spain. You have to localize the intent, not just the language. That means building locale-specific versions of your core pages with phrasing that feels natural in each market, not just text run through a translation tool.

Technical foundations for being readable, extractable, and trustworthy

Think of this as the floor. Without these technical basics, all your great content work won't matter.

Structured data that matters (FAQ/HowTo/Speakable) and when to use it

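Schema like FAQ, HowTo, and Speakable helps search engines (and AI systems) understand your content. Use FAQ schema on your actual Q&A pages. Use Speakable for your main "this is who we are" statements.

Keep your expectations realistic. Google now shows FAQ rich results only for a narrow set of authoritative government and health sites, and it has retired HowTo rich results entirely. The payoff is machine-readable structure, not a fancy search snippet.

And don't treat schema as a magic bullet. It amplifies good content; it can't rescue weak content.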
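Here's what FAQ and Speakable markup can look like, sketched in Python that emits JSON-LD (the structured-data format Google recommends). The type and property names (`FAQPage`, `Question`, `acceptedAnswer`, `SpeakableSpecification`, `cssSelector`) come from schema.org. The helper functions, the `.brand-summary` selector, and the example URL and Q&A text are hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def speakable_jsonld(url: str, css_selectors: list[str]) -> dict:
    """Point at the page sections (by CSS selector) that say who you are."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": url,
        "speakable": {"@type": "SpeakableSpecification", "cssSelector": css_selectors},
    }


def as_script_tag(data: dict) -> str:
    # Escape "</" so answer text can never close the <script> element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


faq = faq_jsonld([
    ("Does ExampleApp offer an on-premise version?", "No. ExampleApp is cloud-only."),
    ("Who is ExampleApp for?", "B2B growth teams that publish content at scale."),
])
print(as_script_tag(faq))
print(as_script_tag(speakable_jsonld("https://www.example.com/about", [".brand-summary"])))
```

Paste the output into the page's HTML. Validate it with Google's Rich Results Test or the Schema Markup Validator before shipping.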

Crawlability basics that disproportionately affect answer engines

Your pages need to be indexable, load fast, and use clean HTML. AI extractors like content they can read in a straight line. Put your most important claims in simple <p> tags and headings, not buried in complex code or behind interactive elements. And do a quick check of your robots.txt file to make sure you aren't accidentally blocking the AI crawlers.
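You don't have to read robots.txt rules by hand. Python's standard-library `urllib.robotparser` can evaluate them for you.

This sketch assumes a list of AI-related user-agent tokens: OpenAI's GPTBot, OAI-SearchBot, and ChatGPT-User; PerplexityBot; Anthropic's ClaudeBot; and Google-Extended. Vendors add and rename these, so treat the list as a starting point and confirm it against each vendor's documentation. The sample robots.txt is made up.

```python
from urllib.robotparser import RobotFileParser

# Assumed AI-related user-agent tokens; confirm against each vendor's docs.
AI_USER_AGENTS = (
    "GPTBot", "OAI-SearchBot", "ChatGPT-User",
    "PerplexityBot", "ClaudeBot", "Google-Extended",
)


def blocked_ai_agents(robots_txt: str, url: str, agents: tuple[str, ...] = AI_USER_AGENTS) -> list[str]:
    """Return the agents that this robots.txt would stop from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Made-up robots.txt: blocks GPTBot site-wide, everyone else only from /admin/.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /admin/

User-agent: GPTBot
Disallow: /
"""

print(blocked_ai_agents(SAMPLE_ROBOTS, "https://www.example.com/product"))
```

To check your live file, call `parser.set_url(...)` with your robots.txt URL and then `parser.read()` instead of `parse()`. Keep in mind that `urllib.robotparser` uses simple prefix matching and ignores mid-path wildcards, so treat its answer as a sanity check, not a guarantee of how every crawler behaves.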

Practical approaches to reduce JavaScript rendering risk

For a non-technical founder, this can sound intimidating. Here’s the simple version: ask your developer to server-render your most critical pages, especially your positioning and product facts pages. If that's not possible, ask them to set up prerendering for those key pages. The test is simple: if you "view source" on a page and can't find your key messages in the raw HTML, the AI crawlers probably can't either.
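If you'd rather script the "view source" test, here's a minimal Python sketch that uses only the standard library's `html.parser`. It assumes you've already fetched the raw HTML with a plain HTTP request. No browser is involved, so no JavaScript runs, which is roughly what a non-rendering crawler sees. The function names and example messages are hypothetical.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect text from raw HTML, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def _normalize(text: str) -> str:
    # Collapse whitespace and lowercase so formatting differences don't matter.
    return " ".join(text.split()).lower()


def missing_messages(raw_html: str, key_messages: list[str]) -> list[str]:
    """Return the key messages that do not appear in the page's raw HTML text."""
    extractor = _TextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    page_text = _normalize(" ".join(extractor.parts))
    return [m for m in key_messages if _normalize(m) not in page_text]


KEY_MESSAGES = ["ExampleApp is a content operations platform for B2B growth teams."]
server_rendered = (
    "<html><body><h1>ExampleApp</h1>"
    "<p>ExampleApp is a content operations platform for B2B growth teams.</p></body></html>"
)
client_rendered = (
    '<html><body><div id="root"></div>'
    '<script>render("ExampleApp is a content operations platform for B2B growth teams.")</script>'
    "</body></html>"
)
print(missing_messages(server_rendered, KEY_MESSAGES))
print(missing_messages(client_rendered, KEY_MESSAGES))
```

A message that appears only inside a `<script>` tag counts as missing here, and that's the point: it exists only after JavaScript runs. The check is an exact-phrase match, so use the wording you actually publish.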

Monitoring + mitigation: an “AI visibility” early warning system for lean teams

Spot-checking ChatGPT once a quarter isn't monitoring. It's wishful thinking. Brand drift happens gradually, so you need a rhythm to catch it before it snowballs.

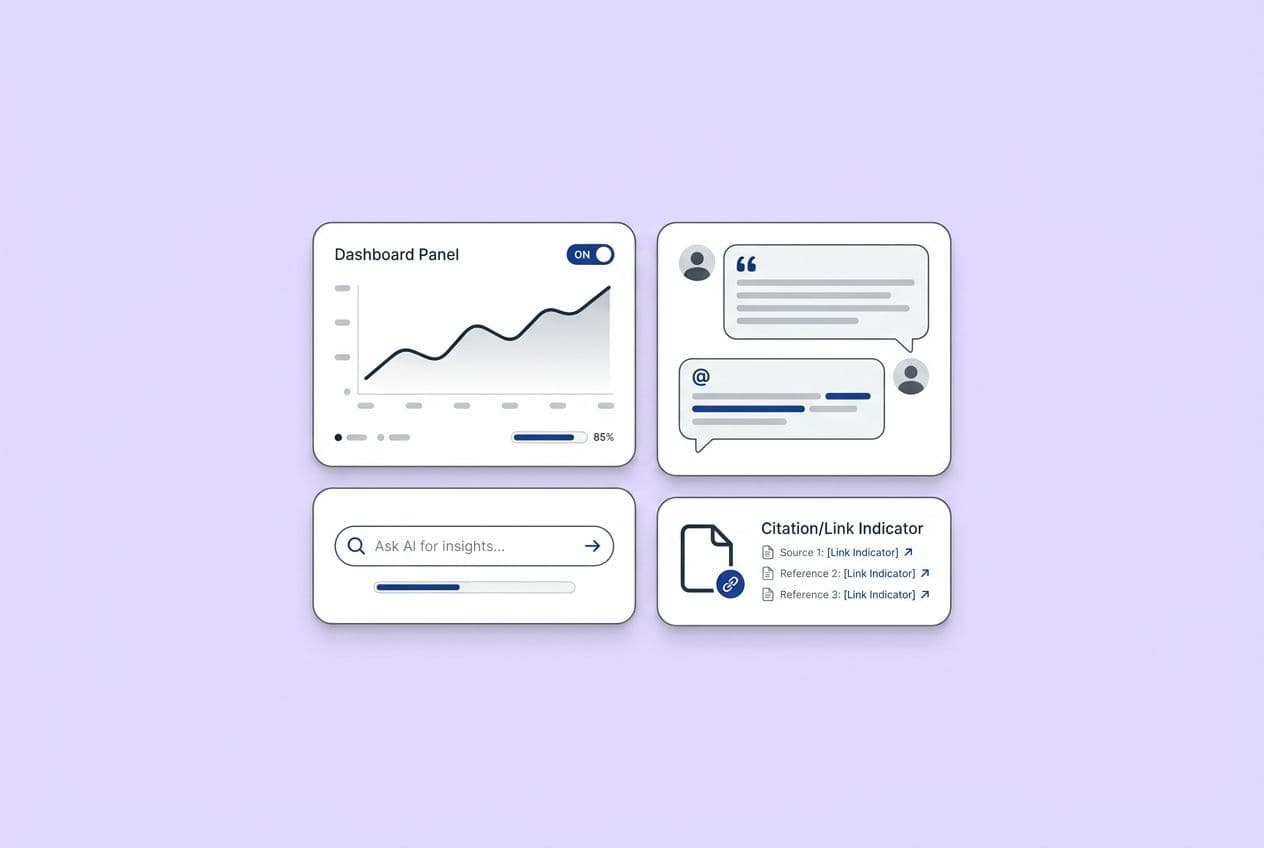

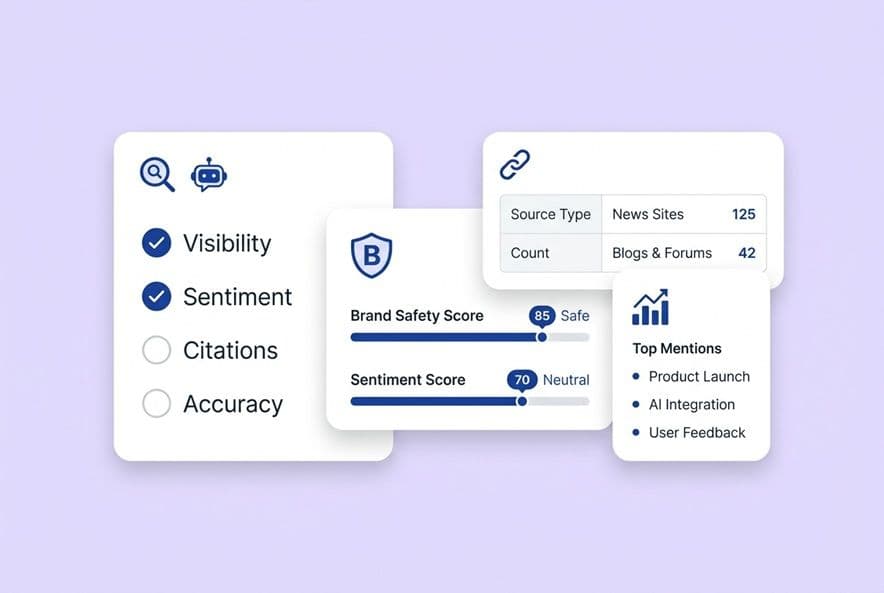

What to monitor (prompts, citations/mentions, competitor comparisons, sentiment)

Track a consistent set of prompts across a few platforms. I recommend category questions ("What tools help with X?"), comparison prompts ("How does [your product] compare to [competitor]?"), and direct brand queries ("What is [your product]?"). Keep an eye on whether you're being cited, if the descriptions are right, and whether competitors are showing up where you should be.
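A fixed prompt set matters more than a clever one, because you're comparing answers over time. This Python sketch builds the three prompt types above for each platform you track. The templates, brand, problems, competitors, and platform names are placeholders to swap for your own.

```python
# Placeholder templates; keep them fixed so week-to-week answers are comparable.
PROMPT_TEMPLATES = {
    "category": "What tools help with {problem}?",
    "comparison": "How does {brand} compare to {competitor}?",
    "brand": "What is {brand}?",
}


def build_prompt_set(
    brand: str, problems: list[str], competitors: list[str], platforms: list[str]
) -> list[dict[str, str]]:
    """Return one row per (platform, prompt) pair for your monitoring log."""
    prompts = [("category", PROMPT_TEMPLATES["category"].format(problem=p)) for p in problems]
    prompts += [
        ("comparison", PROMPT_TEMPLATES["comparison"].format(brand=brand, competitor=c))
        for c in competitors
    ]
    prompts.append(("brand", PROMPT_TEMPLATES["brand"].format(brand=brand)))
    return [
        {"platform": platform, "type": kind, "prompt": text}
        for platform in platforms
        for kind, text in prompts
    ]


rows = build_prompt_set(
    brand="ExampleApp",
    problems=["content planning", "keeping messaging consistent"],
    competitors=["CompetitorA", "CompetitorB"],
    platforms=["ChatGPT", "Perplexity"],
)
for row in rows:
    print(row["platform"], "|", row["type"], "|", row["prompt"])
```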

A lightweight monitoring rhythm (weekly, monthly, quarterly)

This doesn't have to be another thing that burns you out. Keep it simple.

- Weekly: Run 5-10 core prompts on a couple of platforms. Log the answers. Flag anything that's wrong.

- Monthly: Look for trends. Are your citations going up? Is one specific misrepresentation popping up again and again?

- Quarterly: Do a full audit. Update your source pages and check that everything is still technically accessible.
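A small script over your logged answers can do a first pass on the weekly "flag anything that's wrong" step. It can also handle the monthly "is it popping up again?" check.

This is a rough Python sketch. It assumes you paste answers into a log and maintain a hypothetical list of phrases tied to known misrepresentations. Plain substring matching will miss paraphrases, so the script narrows what a human reviews rather than replacing the review.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class LoggedAnswer:
    week: str  # e.g. "2025-W14"
    platform: str
    prompt: str
    answer: str


# Hypothetical phrases that signal known misrepresentations of your brand.
KNOWN_WRONG = {
    "outdated pricing": ["free plan only", "$9/month"],
    "wrong audience": ["for developers"],
}


def flag_answer(entry: LoggedAnswer, brand: str, known_wrong: dict = KNOWN_WRONG) -> list[str]:
    """Weekly check: flag answers that omit the brand or repeat a known error."""
    text = entry.answer.lower()
    flags = []
    if brand.lower() not in text:
        flags.append("not mentioned")
    for label, phrases in known_wrong.items():
        if any(p.lower() in text for p in phrases):
            flags.append(label)
    return flags


def recurring_issues(log: list[LoggedAnswer], brand: str) -> Counter:
    """Monthly view: count how often each flag shows up across the log."""
    return Counter(flag for entry in log for flag in flag_answer(entry, brand))


log = [
    LoggedAnswer("2025-W14", "ChatGPT", "What is ExampleApp?", "ExampleApp is an AI writing tool for developers."),
    LoggedAnswer("2025-W14", "Perplexity", "What tools help with content planning?", "Popular options include ToolA and ToolB."),
    LoggedAnswer("2025-W15", "ChatGPT", "What is ExampleApp?", "ExampleApp is a content operations platform for developers."),
]
print(recurring_issues(log, "ExampleApp"))
```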

This process can feel like a chore. Tools with built-in answer engine optimization (AEO) capabilities, like the ones in DeepSmith, can help turn this into a repeatable workflow instead of something that only happens when you find a spare afternoon.

What to do when AI is wrong (correction playbook)

- First, go update your own source pages with clearer, more extractable language.

- Publish a new piece of content that directly corrects the error (like a new FAQ or comparison post).

- Strengthen your third-party signals. Ask happy customers to update their reviews or refresh your G2/Capterra profiles.

- Wait a few weeks, then re-run the prompts to see if things have improved.

Comprehensive monitoring without an enterprise stack

You don't need a ten-tool stack to get started. All you need is a shared doc to log prompts and a spreadsheet to track citations over time. Manual is fine when you're small. As you grow, you can look into systems that centralize this work so it's not all on your shoulders.
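Once the spreadsheet has a few weeks of rows, a citation rate per month is the simplest trend line to watch. This Python sketch assumes you export the sheet as CSV with hypothetical columns `date`, `platform`, `prompt`, and `cited` (yes/no). The sample rows are made up.

```python
import csv
import io
from collections import defaultdict


def monthly_citation_rate(csv_text: str) -> dict[str, float]:
    """Share of logged prompt runs per month in which your site was cited.

    Assumes hypothetical columns: date (YYYY-MM-DD), platform, prompt, cited (yes/no).
    """
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for row in csv.DictReader(io.StringIO(csv_text)):
        month = row["date"][:7]  # "YYYY-MM"
        totals[month] += 1
        if row["cited"].strip().lower() == "yes":
            cited[month] += 1
    return {month: cited[month] / totals[month] for month in sorted(totals)}


SAMPLE_LOG = """\
date,platform,prompt,cited
2025-03-03,ChatGPT,What is ExampleApp?,yes
2025-03-03,Perplexity,What tools help with content planning?,no
2025-04-07,ChatGPT,What is ExampleApp?,yes
2025-04-07,Perplexity,What tools help with content planning?,yes
"""
print(monthly_citation_rate(SAMPLE_LOG))
```

For a real export, read the file with `open(path, newline="")` and pass its contents to the function.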

Proving ROI when clicks disappear: measurement that maps to pipeline

When AI answers questions directly, your referral traffic can flatline. If your board is anything like mine, they don't love hearing "attribution is fuzzy." That doesn't mean the work isn't paying off; it just means you need a new way to measure it.

The metrics ladder: visibility → consideration → conversion signals

Here’s how I tell the story of our progress.

- Leading indicators: Are we getting cited? How often? This shows we exist.

- Mid-tier signals: Is our branded search traffic growing? Are our sales cycles getting shorter for some customers?

- Lagging indicators: The big ones, like win rate, CAC, and pipeline velocity. These take time to move, but they show the real business impact.

Tracking indirect conversions (what to look for in branded demand and sales cycles)

Add this one question to every demo form: "How did you hear about us?" A rising number of "ChatGPT" or "Google AI" responses is your most direct signal. Also, watch impressions for branded queries in Google Search Console. When people hear about you in an AI answer, they often search for you by name afterward. That search-after-discovery pattern is strong evidence of impact, even if the original click isn't trackable.
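Free-text answers to "How did you hear about us?" are messy, so a simple keyword match is a reasonable first pass. This Python sketch assumes a hypothetical list of AI-assistant keywords. It computes the share of non-empty responses that mention one of them. Expect some false positives and misses, and spot-check the raw responses.

```python
# Hypothetical keywords; extend with whatever your buyers actually type.
AI_SOURCE_KEYWORDS = ("chatgpt", "perplexity", "gemini", "claude", "copilot", "ai overview", "google ai")


def is_ai_sourced(response: str) -> bool:
    """True if a free-text answer mentions any assumed AI-assistant keyword."""
    text = response.lower()
    return any(keyword in text for keyword in AI_SOURCE_KEYWORDS)


def ai_source_share(responses: list[str]) -> float:
    """Share of non-empty responses that credit an AI assistant."""
    answered = [r for r in responses if r.strip()]
    if not answered:
        return 0.0
    return sum(is_ai_sourced(r) for r in answered) / len(answered)


responses = ["ChatGPT recommended you", "Google search", "A friend", "Saw you in a Google AI Overview", ""]
print(f"{ai_source_share(responses):.0%} of answered forms credit an AI assistant")
```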

Where paid search fits (and how to coordinate messaging)

This work doesn't replace paid search, especially if you need pipeline tomorrow. It reduces your dependence on it over time. Run paid ads to capture buyers who are ready now. Run this AEO playbook to shape their perception earlier, so they come to you already warmed up. Just make sure your message is consistent. If your ads say one thing and AI says another, you’re creating confusion right when you need to be building trust.

Governance that prevents brand damage (without slowing a startup to a crawl)

This isn't about creating a bunch of corporate red tape that suffocates your team. It's about putting a lightweight system in place to protect your brand.

The minimum roles and responsibilities (even if it’s 2 people)

Even with a two-person team, you just need clear ownership. One person owns the source-of-truth content (probably the founder or growth lead). One person owns technical accessibility (a dev or fractional SEO). Add a quick review step for anything that touches pricing or legal claims. That's it. Don't over-engineer it.

A simple “single source of truth” workflow for updates

Every time you ship a big product update, trigger a three-step action: (1) update your core source-of-truth pages, (2) push out a new piece of content about the change, and (3) re-run your monitoring prompts. This connects your product roadmap to your AI visibility and prevents your messaging from getting stale.

When you centralize your content process, accuracy checks become part of the workflow, not another founder fire drill. Systems like DeepSmith are built for this, running QA and grounding every asset in your approved product context.

Vendor / agency questions to ask if you outsource any of this

If you hire an agency, ask them these questions: How do you monitor AI citation accuracy, not just old-school rankings? How do you check for technical extractability? How do you show pipeline influence when referral traffic isn't the whole story? If they can't answer, they're doing 2022 SEO, not 2025 brand visibility work.