You Googled your competitors last week and saw it. They're everywhere in AI answers. ChatGPT recommends them. Perplexity cites their blog. Google AI Overviews are quoting their product page. Meanwhile, your brand gets a vague mention at best. Or worse, it’s described in a way that would make your best customer wince. I’ve been there.

Here’s the thing I had to learn: this isn't an SEO problem you solve by just publishing more content. AI search readiness is a leadership and operating system problem. It’s about knowing what AI platforms say about you right now, understanding why they say it, and building a repeatable workflow to improve it. This isn't a one-time audit you can just move on from.

Founders who treat AI visibility like ongoing governance will compound their authority over time. Everyone else will chase vanity scores and wonder why nothing sticks.

These five questions are the ones I use to figure out where we actually stand.

Why "AI search readiness" is a business risk now (not an SEO nice-to-have)

Buyers aren’t just Googling your category anymore. I see it every day. They're asking ChatGPT which tools to shortlist. They're checking Perplexity for comparisons. They're reading Gemini summaries before they ever land on your website. The entire discovery layer has changed.

The companies that show up confidently in those AI answers are shaping what "good" looks like in your category, whether they deserve to or not.

What's changing vs. classic SEO

Classic SEO rewarded you for ranking in a list of ten blue links. The buyer still had to do the work. They had to click, evaluate, and decide. AI-generated answers do the evaluation for them. A buyer might never see your site if an AI has already told them you’re a secondary player, overpriced, or missing a key feature (accurately or not). The stakes for how you're described just went through the roof.

Mentions vs. citations (the readiness split most teams miss)

This is a distinction that took me a while to get, but it’s critical. A mention is when an AI answer references your brand name, like "tools like Acme exist in this space." A citation is when the AI points to a specific page you published as a source of truth.

Mentions build familiarity. Citations build authority. You need both, but they come from different playbooks. Mentions come from broad brand presence and third-party coverage. Citations come from content that's structured for extraction and trustworthy enough to anchor an answer. Most teams optimize for neither. They also can't tell the two apart, because they rely on anecdotal spot-checks. That's why you need a system that tracks both.

Question 1 — Do we know what AI platforms say about us today (and is it accurate)?

Before you try to optimize anything, you need a baseline. This is the painful part, I know. But pull up ChatGPT, Gemini, Claude, Perplexity, and Google AI Overviews. You don't need a fancy tool to start.

A simple baseline audit you can do in 60 minutes

I still run this playbook myself. Run each of these prompt types on every platform, and log the answers in a shared doc (a simple spreadsheet works fine):

- Category prompts: "What are the best tools for [your category]?"
- Comparison prompts: "How does [your brand] compare to [top competitor]?"
- Use-case prompts: "What's the best tool for [specific job your product does]?"
- Brand-direct prompts: "[Your brand name]: what is it, who is it for, and what does it cost?"

For each answer, ask yourself: Are we mentioned? Are we cited? What’s the framing? What sources does the AI reference? And, maybe most importantly, which competitors appear in answers where we don't? That last column is your visibility gap.
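If you'd rather generate the log than build it by hand, here's a minimal Python sketch. It assumes you fill in the answer columns manually after each run. It expands the four prompt types across all five platforms and writes a CSV with one column per question above. The fill-in values (`Acme`, the hypothetical `RivalCo`, the category and job) are placeholders, and the column names are my own.

```python
import csv
import io
from itertools import product

# Platforms and prompt templates from the audit; fill-in values are placeholders.
PLATFORMS = ["ChatGPT", "Gemini", "Claude", "Perplexity", "Google AI Overviews"]
PROMPT_TEMPLATES = {
    "category": "What are the best tools for {category}?",
    "comparison": "How does {brand} compare to {competitor}?",
    "use_case": "What's the best tool for {job}?",
    "brand_direct": "{brand}: what is it, who is it for, and what does it cost?",
}
# One column per question you ask of each answer (hypothetical names).
COLUMNS = ["platform", "prompt_type", "prompt", "mentioned", "cited",
           "framing", "sources", "competitors_missing_us"]


def build_audit_rows(brand, competitor, category, job):
    """Return one empty log row per (platform, prompt type) pair."""
    rows = []
    for platform, (ptype, template) in product(PLATFORMS, PROMPT_TEMPLATES.items()):
        # str.format ignores keyword arguments a template doesn't use.
        prompt = template.format(brand=brand, competitor=competitor,
                                 category=category, job=job)
        row = dict.fromkeys(COLUMNS, "")
        row.update(platform=platform, prompt_type=ptype, prompt=prompt)
        rows.append(row)
    return rows


def to_csv(rows):
    """Serialize rows to CSV text you can paste into a shared sheet."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


rows = build_audit_rows("Acme", "RivalCo", "invoice automation",
                        "matching invoices to purchase orders")
print(len(rows))  # 5 platforms x 4 prompt types
```

Each month you can regenerate the same grid. That keeps the prompts identical across runs, which matters once you start comparing answers over time.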

Red flags: inaccurate positioning, wrong comparisons, outdated features, negative framing

Bad visibility isn't just being absent. It's being wrong. The first time I did this, I found an AI claiming we had a feature we'd deprecated a year earlier. It’s maddening.

Watch for features you don't have, pricing that's out of date, comparisons that put you at a disadvantage based on old information, or vague negative signals like "may not be suitable for enterprise teams" with no basis. These inaccuracies are conversion killers. A buyer who gets a misleading AI answer isn't going to dig through your site to fact-check it. They’ll just move on.

Question 2 — Do we know which prompts/questions actually drive pipeline for our category?

This is where most founders either guess or freeze. "Track prompts" sounds obvious until you realize there are hundreds of possibilities for any category. I've wasted a lot of time chasing the wrong ones. You need a system to find what matters.

Build a "prompt map" (category → jobs-to-be-done → comparison → implementation)

We map our prompts across four layers of the buying journey. It brings much-needed clarity.

- Category-level: "What tools help with [problem]?" This is top-of-funnel awareness.
- Jobs-to-be-done: "How do I [specific task your product handles]?" This is mid-funnel, high-intent stuff.
- Comparison: "Is [you] better than [competitor] for [use case]?" This is late-funnel and directly affects shortlists.
- Implementation: "How do I set up [your feature]?" This is post-awareness and drives trial confidence.

Most teams only track category prompts and miss the high-intent ones where the real pipeline lives. Start by mapping 5–10 prompts for each layer.

How to prioritize prompts (a founder-friendly scoring model)

You can't go after 40 prompts at once with a lean team, trust me. We score each prompt from 1 to 3 on four criteria:

- Buyer impact: How much would winning this prompt influence a purchase?
- Winnability: How realistic is it to gain a citation here in the next 90 days?
- Credibility gap: Does the AI currently recommend a competitor we’re actually better than?
- Content readiness: Do we have a page that could answer this with some work?

Start with the prompts where impact and winnability are both a 3. Those are your targets for the next 30 days.
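Here's a minimal sketch of that scoring model in Python. The four criteria and the "impact and winnability both 3" rule come from above. Using the sum of all four scores as a tiebreaker is my own assumption, and the example prompts are hypothetical.

```python
from dataclasses import dataclass

CRITERIA = ("buyer_impact", "winnability", "credibility_gap", "content_readiness")


@dataclass
class PromptScore:
    prompt: str
    layer: str              # category, jobs-to-be-done, comparison, or implementation
    buyer_impact: int       # each criterion is scored 1-3
    winnability: int
    credibility_gap: int
    content_readiness: int

    def __post_init__(self):
        # Reject anything outside the 1-3 scale.
        for name in CRITERIA:
            value = getattr(self, name)
            if value not in (1, 2, 3):
                raise ValueError(f"{name} must be 1, 2, or 3, got {value}")

    @property
    def total(self):
        # Assumed tiebreaker: the sum of all four criteria.
        return sum(getattr(self, name) for name in CRITERIA)


def next_30_day_targets(scores):
    """Prompts where buyer impact and winnability both score 3, highest total first."""
    targets = [s for s in scores if s.buyer_impact == 3 and s.winnability == 3]
    return sorted(targets, key=lambda s: s.total, reverse=True)


scores = [
    PromptScore("Is Acme better than RivalCo for small teams?", "comparison", 3, 3, 3, 2),
    PromptScore("What tools help with invoice automation?", "category", 3, 1, 2, 3),
    PromptScore("How do I match invoices to purchase orders?", "jobs-to-be-done", 3, 3, 1, 3),
]
for s in next_30_day_targets(scores):
    print(s.total, s.prompt)
```

The category prompt drops out even though it has high impact. Winning a citation there in 90 days isn't realistic, so it waits.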

Turn prompts into content specs (what the page must answer to earn citation)

Every high-priority prompt needs to map to a specific page on your site. For that page to earn a citation, it has to answer the prompt directly, not just dance around it. Define what the page needs. What's the exact question it must answer in the first 150 words? What proof does it need (steps, comparisons, data)? What related things (integrations, use cases) should be mentioned? This is the kind of work we use DeepSmith's Topic Explorer for. It helps us find these prompt opportunities and turn them into content specs without all the manual translation work.
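A content spec can live in a doc, but it's also easy to turn into a quick check. This sketch assumes a spec is four things: a prompt, a target page, a few terms the opening must contain, and the entities the page should name. The `ContentSpec` fields and the example URL are hypothetical. It's a crude substring test. It can tell you a term is missing from the first 150 words, but not whether the page actually answers the question.

```python
from dataclasses import dataclass, field


@dataclass
class ContentSpec:
    prompt: str
    page_url: str
    answer_terms: list       # terms the opening must contain to answer the prompt directly
    required_entities: list = field(default_factory=list)  # integrations, use cases, personas


def check_page(spec, page_text, opening_words=150):
    """Case-insensitive substring checks against the opening and the full page."""
    words = page_text.split()
    # Re-join with single spaces so line breaks don't split multi-word terms.
    opening = " ".join(words[:opening_words]).lower()
    body = " ".join(words).lower()
    return {
        "missing_from_opening": [t for t in spec.answer_terms if t.lower() not in opening],
        "missing_entities": [e for e in spec.required_entities if e.lower() not in body],
    }


spec = ContentSpec(
    prompt="How do I match invoices to purchase orders?",
    page_url="https://example.com/guides/po-matching",
    answer_terms=["match invoices", "purchase order"],
    required_entities=["QuickBooks", "NetSuite"],
)
page = ("To match invoices to a purchase order in Acme, open Matching and pick the PO. "
        "Works with QuickBooks.")
print(check_page(spec, page))
```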

Question 3 — Is our site technically "extractable" by AI systems (or are we invisible by default)?

AI systems can't cite what they can't read. This technical part isn't glamorous, but it’s the foundation for everything else.

Access basics: crawl permissions, robots.txt, sitemaps, HTTPS

I know you don't want to look at your robots.txt file. Just do it. Major AI crawlers, such as OpenAI's GPTBot and Anthropic's ClaudeBot, honor robots.txt rules. So if you blocked crawlers during a security update and forgot about it, you might be completely invisible to them. Confirm your key pages are crawlable, your XML sitemap is current, and your whole site is on HTTPS.
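You can check this with Python's standard-library `urllib.robotparser`. The sketch below parses a sample robots.txt, shown inline and hypothetical, and reports whether each AI user agent may fetch a given URL. For your live site you'd call `set_url()` and `read()` instead of `parse()`. It only evaluates robots.txt rules. It won't catch bot blocks at your CDN or firewall, so confirm those separately.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for the AI crawlers named above; add others you care about.
AI_CRAWLERS = ["GPTBot", "ClaudeBot"]

# Hypothetical robots.txt: a leftover rule that blocks GPTBot from everything.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawler_access(robots_txt, urls, agents=AI_CRAWLERS):
    """Map (agent, url) -> True if robots.txt lets that agent fetch that url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {(agent, url): parser.can_fetch(agent, url) for agent in agents for url in urls}


key_pages = ["https://example.com/pricing", "https://example.com/docs/setup"]
for (agent, url), allowed in crawler_access(SAMPLE_ROBOTS_TXT, key_pages).items():
    print(f"{agent:10} {'allowed' if allowed else 'BLOCKED'}  {url}")
```

Here GPTBot is blocked from every page. ClaudeBot falls through to the `*` group and can reach everything except `/admin/`.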

Structure basics: semantic HTML, clean heading hierarchy, schema markup

AI systems parse your page structure to find answers. If your blog post is a giant wall of text with no subheadings, or your heading structure is a mess (like using heading tags for styling), you're making their job harder. Use a clear H2/H3 hierarchy and add schema markup for things like FAQs, articles, and product pages. These signals work like signposts, pointing AI systems to the good stuff.
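FAQ schema is usually added as a JSON-LD script tag that uses schema.org's `FAQPage`, `Question`, and `Answer` types. Here's a minimal Python sketch that builds one from question-and-answer pairs. The helper names and bracketed sample content are hypothetical. Validate the output with a schema validator before shipping.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def script_tag(data):
    """Wrap JSON-LD in a script tag; escaping "</" keeps answer text from closing the tag early."""
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


faq = faq_jsonld([
    ("Does [your product] integrate with [tool]?", "[A direct, one- or two-sentence answer.]"),
    ("What does [your product] cost?", "[Your current pricing, stated plainly.]"),
])
print(script_tag(faq))
```

The questions should mirror the prompts you're targeting. The answers should match the visible text on the page, not a separate marketing version.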

What to fix first if engineering time is scarce

I know engineering time is gold. Here's the order of impact: (1) Fix crawl blocks for AI bots. Seriously, this is a five-minute fix. (2) Add FAQ schema to your most important pages. (3) Fix the heading hierarchy on your top 10 pages. Save the site-wide overhauls for later. Get the high-leverage wins first.
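For the heading fix, a quick audit script helps you find the worst offenders. This sketch uses Python's built-in `html.parser` to list heading levels in order. It flags skipped levels, such as an H2 followed directly by an H4, and pages without exactly one H1. The one-H1 rule is a common convention I'm assuming, not a hard requirement. The script also can't tell whether a heading tag is being used purely for styling, which still needs a human eye.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects h1-h6 levels in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html):
    """Return human-readable problems with a page's heading hierarchy."""
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    levels = parser.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {h1_count}")
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"skipped level: <h{prev}> followed by <h{cur}>")
    return issues


sample = "<h1>Pricing</h1><h2>Plans</h2><h4>Enterprise</h4><h2>FAQ</h2>"
print(heading_issues(sample))
```

Run it over the HTML of your top pages and fix whatever it lists, starting with the pages you picked as source pages.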

Question 4 — Are we publishing "citation-ready" content, or just more content?

Volume doesn't earn citations. Structure and specificity do.

The anatomy of citation-ready pages

Pages that get cited share a few traits. They answer a clear question in the first paragraph, without a long preamble. They use concrete claims like definitions, steps, and data, not vague marketing fluff. They name relevant entities (integrations, competitors, personas) so the AI knows what the page is about. And they're scannable.

Here’s a gut-check I use: If an AI system pulled just one paragraph from your page, would it stand alone as a useful, accurate answer? If the answer is no, the page has low answer density.

Build a small set of "AI source pages"

Pick 5–8 pages to be your citation anchors, the ones you want AI systems to treat as your official voice. Good candidates are your category definition page, a transparent pricing explainer, a competitor comparison page, an implementation guide, and your security page. These pages can earn citations across dozens of different prompts. Make them thorough, keep them current, and link to them internally.

Upgrade path: refresh vs. rewrite vs. consolidate

Before you create something new, audit what you already have.

- Refresh pages that cover the right topic but lack structure. Add clear sections and tighten the opening.
- Rewrite pages where the core message is wrong or outdated.
- Consolidate when you have three thin blog posts on the same topic. Combine them into one authoritative page that can actually earn a citation.

Question 5 — Do we have an authority and governance system to keep (and grow) visibility?

This is the question almost nobody asks. It’s the one that separates compounding authority from a few sporadic wins. This is about building an engine.

Earning authoritative citations (without a big PR budget)

You don't need a huge PR budget for this. AI models favor sources that other authoritative sources reference. That means getting your product featured in niche industry publications your buyers actually read. It means publishing original research (even simple surveys work) that others cite. It means earning listings and reviews on G2 and Capterra, and building relationships with newsletter writers who link to good work. This isn't about hiring an agency. It's about dedicating 20% of your content time, consistently.

Monitoring brand portrayal and correcting drift

Set a standing monthly task: run your baseline prompt map and compare the answers to last month. Are new inaccuracies showing up? Has a competitor swooped in on a prompt you were winning? When you find something wrong, the playbook is simple: (1) publish a clear, factual page that corrects the record, (2) get that page referenced by at least one third-party source, and (3) update your own source pages to make the accurate version unmissable. Corrections take time, but they do work.
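The month-over-month comparison is easy to script once your audit log is structured. This sketch assumes each month's snapshot maps a prompt to four things: whether you were mentioned, whether you were cited, which competitors appeared, and any inaccuracies a human reviewer logged. The `PromptResult` shape and the sample data are hypothetical. The script flags lost mentions, lost citations, new competitors, and new inaccuracies.

```python
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    mentioned: bool
    cited: bool
    competitors: set = field(default_factory=set)
    inaccuracies: set = field(default_factory=set)  # logged by a human reviewer


def compare_months(last, current):
    """Return alerts for anything that got worse between two monthly snapshots."""
    alerts = []
    for prompt, now in current.items():
        before = last.get(prompt)
        if before is None:
            alerts.append(f"new prompt tracked: {prompt}")
            continue
        if before.mentioned and not now.mentioned:
            alerts.append(f"lost mention: {prompt}")
        if before.cited and not now.cited:
            alerts.append(f"lost citation: {prompt}")
        for rival in sorted(now.competitors - before.competitors):
            alerts.append(f"new competitor {rival}: {prompt}")
        for claim in sorted(now.inaccuracies - before.inaccuracies):
            alerts.append(f"new inaccuracy '{claim}': {prompt}")
    return alerts


last = {"best tools for invoice automation": PromptResult(True, True, {"RivalCo"})}
current = {"best tools for invoice automation":
           PromptResult(True, False, {"RivalCo", "NewCo"}, {"says we lack SSO"})}
for alert in compare_months(last, current):
    print(alert)
```

If you track per platform, key the snapshots by platform and prompt together so a drop on one platform isn't hidden by another.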

The governance cadence: owners, checks, and triggers

Assign one owner for AI visibility, even if it’s a part-time role. Every week, they should check how your priority prompts are doing. Every month, they run the full audit. You also need triggers. When a competitor launches something new, or when you update your own product, you have to revisit your source pages immediately.

How to measure success (without fooling yourself)

You'll never perfectly attribute a signed deal to a single AI answer. But you can build a model that shows directional momentum, and that’s usually enough to justify the investment.

Leading indicators (the stuff you can control)

Track these monthly: How many of your target prompts mention your brand? Of those, how many include a citation? Is the quality of sources citing you going up? Is the framing around your brand positive, neutral, or negative? These metrics tell you if your playbook is working.
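The first two indicators are simple ratios, and framing is a tally. Here's a minimal sketch, assuming each target prompt's result is a dict with hypothetical `mentioned`, `cited`, and `framing` keys. Source quality depends on your own rating scheme, so it's left out.

```python
from collections import Counter


def leading_indicators(results):
    """Mention rate, citation rate among mentions, and framing tally across target prompts."""
    total = len(results)
    mentioned = [r for r in results if r["mentioned"]]
    cited = [r for r in mentioned if r["cited"]]
    # Framing only applies when the brand actually appears in the answer.
    framing = Counter(r["framing"] for r in mentioned)
    return {
        "mention_rate": len(mentioned) / total if total else 0.0,
        "citation_rate_of_mentions": len(cited) / len(mentioned) if mentioned else 0.0,
        "framing": dict(framing),
    }


results = [
    {"mentioned": True, "cited": True, "framing": "positive"},
    {"mentioned": True, "cited": False, "framing": "neutral"},
    {"mentioned": False, "cited": False, "framing": None},
    {"mentioned": True, "cited": False, "framing": "positive"},
]
print(leading_indicators(results))
```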

Lagging indicators (the results that follow)

Over a few quarters, watch for an increase in branded search volume and in referral traffic from publications citing you. In your CRM, flag deals where prospects say things like "I saw you recommended" or "I found you via ChatGPT." You won't track these perfectly. Just look for the patterns. Logging your prompts and tracking citation changes over time makes this progress auditable, not just anecdotal. That's why we use a prompt and citation tracker like DeepSmith's.
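Flagging those deals can start as a keyword scan over deal notes. This sketch assumes a CRM export where each deal is a dict with hypothetical `id` and `notes` fields. The patterns cover the two phrases above plus a few variants I made up. They will miss anything phrased differently, so treat the count as a floor.

```python
import re

# The two phrases from the text plus a few assumed variants; extend with your own.
AI_SOURCE_PATTERNS = [
    r"saw you recommended",
    r"found you (?:via|through|on) (?:chatgpt|perplexity|gemini|claude)",
    r"(?:chatgpt|perplexity|gemini|claude) (?:recommended|suggested|mentioned) you",
]
AI_SOURCE_RE = re.compile("|".join(AI_SOURCE_PATTERNS), re.IGNORECASE)


def flag_ai_sourced(deals):
    """Return IDs of deals whose notes name an AI assistant as the discovery source."""
    return [d["id"] for d in deals if AI_SOURCE_RE.search(d.get("notes", ""))]


deals = [
    {"id": "D-101", "notes": "Prospect said: I found you via ChatGPT last week."},
    {"id": "D-102", "notes": "Referral from a partner."},
    {"id": "D-103", "notes": "Perplexity recommended you for PO matching."},
]
print(flag_ai_sourced(deals))
```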

What to report to your board

Keep it tight. One slide, five bullets: (1) prompts won vs. monitored, (2) citation count change month-over-month, (3) source quality trend, (4) one big mention we got, and (5) one screenshot of a great AI answer that shows our positioning. It signals disciplined execution without taking 30 minutes to explain.

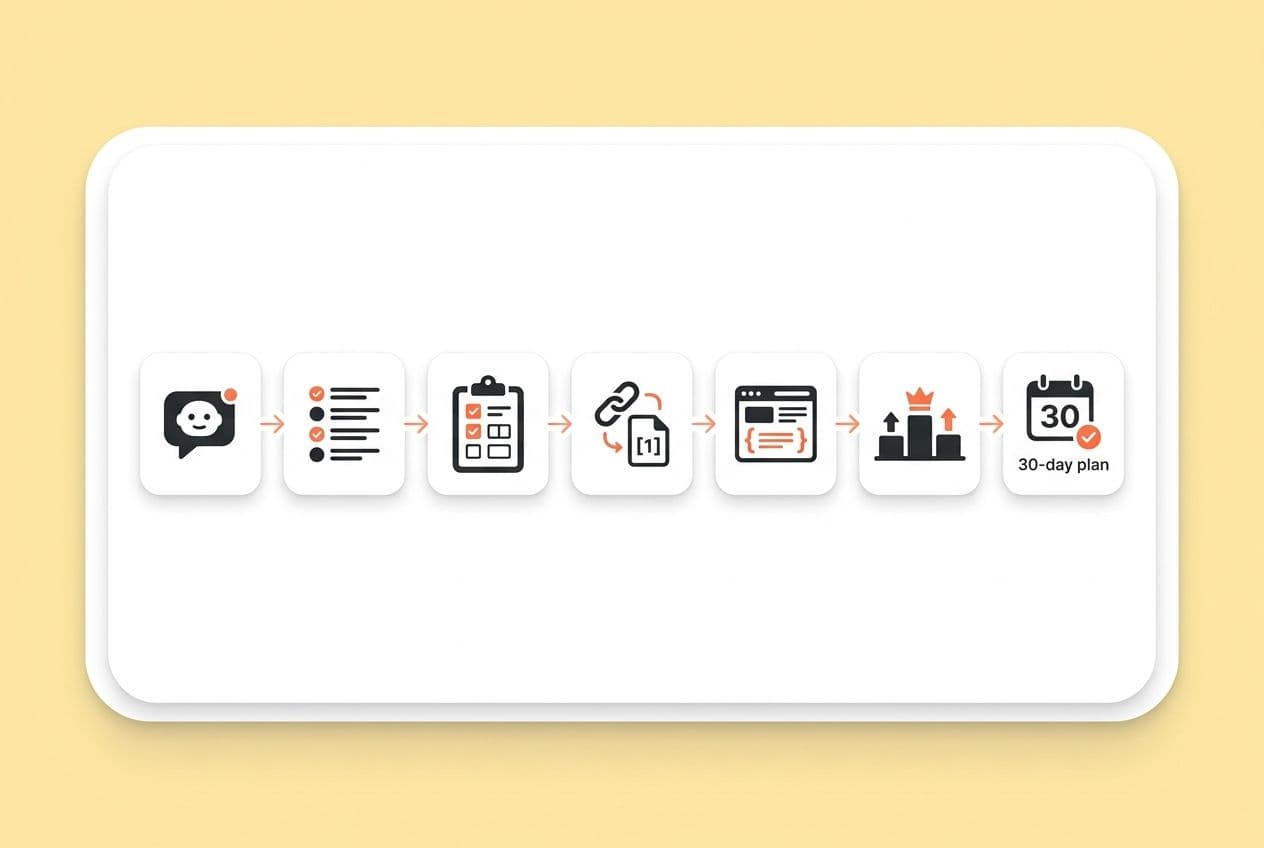

Your 30-day AI Search Readiness plan

Don't try to solve all five questions at once. You'll burn out. Here’s what to do in the next 30 days.

Week 1: Run the 60-minute baseline audit. Document what's right, wrong, and missing. Pick your top 5–8 pages to act as your "AI source pages."

Week 2: Build your prompt map. Score your top 20 prompts and identify your 3–5 highest-priority targets.

Week 3: Do the technical check. Fix crawl blocks, add FAQ schema to your source pages, and clean up the headings on your top 5 pages.

Week 4: Update your source pages. Make them "citation-ready." Publish or refresh one piece of content that directly targets one of your high-priority prompts.

Then, set your governance cadence. Weekly spot-check, monthly audit, one owner. And repeat. AI visibility compounds for the founders who treat it like a system, not a sprint.