I’ve been there. You open ChatGPT, you type in the exact problem your product solves, and you watch your competitor's name pop up in the answer. Twice. Your brand? Nowhere. Not even a hint.

It’s not a fluke. And it’s not because their product is better. It’s because AI models are making choices based on a specific set of inputs, and right now, your competitors are just better at feeding them.

The good news is you can fix this without hiring a whole content team. I promise. The uncomfortable news? You’re probably making at least one of three predictable mistakes that keep you invisible. Let’s walk through each one so you have a concrete path out.

The uncomfortable truth: AI "visibility" isn't rankings. It's being included in answers.

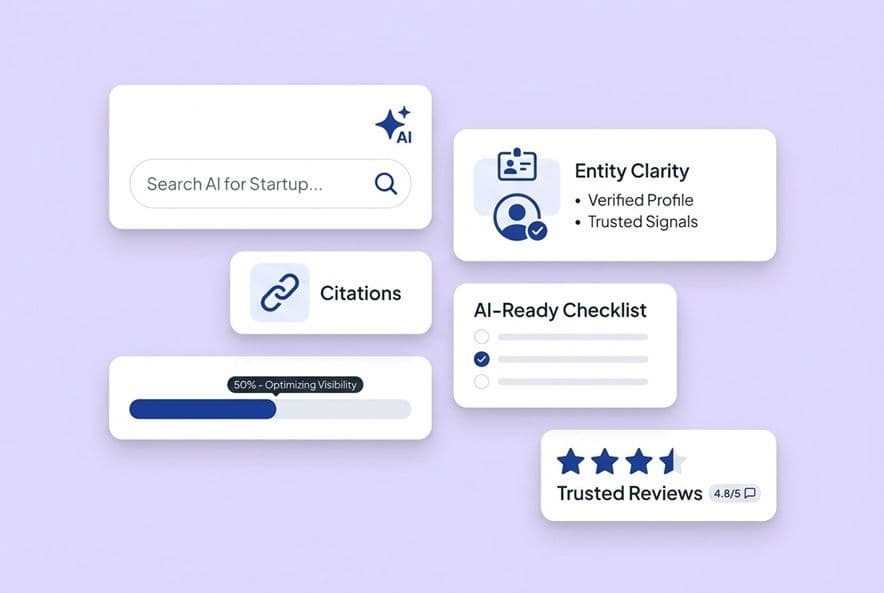

Forget everything you think you know about ranking number one. In AI search, there is no number one. You’re either included or you’re not. That's it. Systems like ChatGPT, Google AI Overviews, Perplexity, and Gemini synthesize answers from sources they find credible. They don't show you a list of links. They just talk.

What "winning" looks like in AI answers (and how it feels when you're losing)

When a competitor is winning, their brand name shows up in the answers to the prompts your buyers are actually typing. They get brand mentions, sometimes with a link and sometimes without, and over time, the AIs start recommending them by name. That's share of voice you're just handing them.

When you’re losing, you’re invisible. Or worse, the AI mentions a competitor and then says "alternatives include" without naming you. Remember that buyers tend to take these answers at face value. If you’re not in the answer, you might as well not exist.

The minimum set of platforms to care about (and why one-engine checks are a trap)

Here's a mistake I see so many lean teams make. They check one platform, usually ChatGPT, and call it a day. But each engine crawls the web with its own bot (GPTBot, ClaudeBot, PerplexityBot, Google's crawlers), and each one weighs trust signals and cites sources differently. A brand that shows up constantly in Perplexity might be a ghost in Google AI Overviews. You have to run your prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews at a minimum to get an honest read on where you stand.

Mistake #1 — You're measuring the wrong thing (so you can't prove ROI or prioritize)

Most founders I talk to who care about AI visibility are looking at one thing: "Did we get mentioned?" That’s not a measurement strategy. That’s just checking your pulse. Without a real way to measure, you can't prioritize work, you can't defend the investment to your board, and you have no idea if what you’re doing is actually working. It feels like shouting into the void.

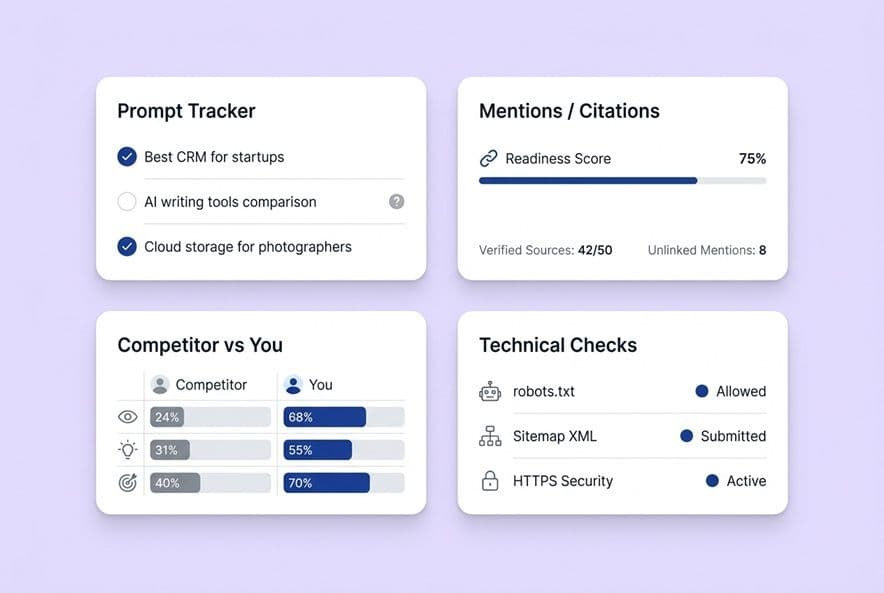

The 3 layers of measurement: visibility → influence → outcomes

I think about this in three connected layers.

Layer 1: Visibility. Are you being mentioned and cited at all? Track your brand mentions (linked and unlinked) across the platforms that matter. This tells you if the AI systems even know you exist. It’s the first step.

Layer 2: Influence. Are the right prompts surfacing you? This is where you need to track specific prompts. I always tell founders to map out the natural language queries their ideal buyers would type, then check which ones include their brand, which ones include competitors, and which ones have no one useful. The gaps you find are your new content plan.

Layer 3: Outcomes. Is this whole thing actually turning into business? You won't get perfect attribution, so let’s just accept that right now. But you can track proxies. Look at branded search volume trends, direct traffic, and demo request rates. When you see your brand mentioned more in AI answers, are those other numbers ticking up?

A founder-friendly ROI method when tracking is a mess

Stop waiting for a perfect attribution model. It’s not coming. Instead, run a simple correlation check once a month. When your AI brand mentions go up, do branded searches and direct conversions trend up too? That's correlation, not proof of causation, but it’s a pattern you can point to.
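If you want a number instead of eyeballing two columns, here's a minimal sketch of that monthly check. It assumes you've logged one mention count and one branded-search count per month. The figures below are made up for illustration, and `pearson` is just a helper name I picked. With only a handful of months, treat the result as a rough signal.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length series (-1.0 to 1.0)."""
    if len(xs) != len(ys) or len(xs) < 3:
        raise ValueError("need two series of equal length with at least 3 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a series with no variation has no defined correlation")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical monthly figures; replace with your own log.
ai_mentions = [4, 6, 5, 9, 12, 14]
branded_searches = [310, 340, 330, 400, 460, 495]

r = pearson(ai_mentions, branded_searches)
print(f"Correlation between AI mentions and branded searches: {r:.2f}")
```

A value near 1 means the two numbers are rising and falling together. A value near 0 means you can't point to a pattern yet.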

You should also add a qualitative signal. Ask your sales team if prospects are mentioning things they learned from an AI. It’s amazing how often buyers now show up to discovery calls already educated. That's a shorter sales cycle, which is a win you can track even if it just lives in your CRM notes.

What to track weekly vs. monthly (so this doesn't become your second job)

I know you don't have time for a whole new project. Here's how to keep it simple.

Weekly (15 minutes): Run 5–10 of your most important prompts across two or three platforms. Note who shows up. Log it in a simple spreadsheet. Done.

Monthly (1 hour): Review your visibility trend. Update your prompt list if you've launched new features or have new competitors. Refresh that correlation check against your conversion numbers, and set one single content priority for the next month based on where you're missing.

That’s the whole system. If it takes longer, you’ve made it too complicated.
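If your spreadsheet has one row per prompt and platform, the weekly log boils down to one number per platform: how often you were named. Here's a rough sketch, assuming a row layout of (prompt, platform, brands named in the answer). The prompts, brand names, and the `inclusion_rate` helper are all placeholders of mine.

```python
from collections import defaultdict

# Hypothetical log rows: (prompt, platform, brands named in the answer).
log = [
    ("best invoicing tools", "ChatGPT", ["CompetitorA", "CompetitorB"]),
    ("best invoicing tools", "Perplexity", ["CompetitorA", "YourBrand"]),
    ("how to automate invoices", "ChatGPT", []),
    ("how to automate invoices", "Perplexity", ["YourBrand"]),
]

def inclusion_rate(rows, brand):
    """Share of logged answers that name `brand`, broken out by platform."""
    seen = defaultdict(int)
    hits = defaultdict(int)
    for _prompt, platform, brands in rows:
        seen[platform] += 1
        if brand in brands:
            hits[platform] += 1
    return {platform: hits[platform] / seen[platform] for platform in seen}

print(inclusion_rate(log, "YourBrand"))    # {'ChatGPT': 0.0, 'Perplexity': 1.0}
print(inclusion_rate(log, "CompetitorA"))  # {'ChatGPT': 0.5, 'Perplexity': 0.5}
```

Run it for your brand and for each competitor, and the platform where you're furthest behind is easy to spot.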

Mistake #2 — Your competitors aren't "better," they're more citable (and you're not)

It took me too long to learn this. AI models don't cite brands because they're popular. They cite content that's easy for them to pull from, verify, and reuse in an answer. Your competitor might have a so-so product and just-okay content. But if their pages are structured so an AI can pull a clean, direct answer, they get the citation. You don't.

The citation readiness checklist (structure + clarity + verifiability)

Content that gets cited has specific traits. It answers questions directly, not after three paragraphs of throat-clearing. It uses clear definitions, labeled comparisons, and explicit framing like "use this when..." or "don't use this if..." It states verifiable facts, not vague marketing claims like "we're the industry leader."

Structurally, this means using H2s and H3s that sound like real questions. Put the answer right in the first sentence after the heading. Use short paragraphs. Tables and numbered lists are gold because AIs love to extract structured information.

Trust signals that change whether AI recommends you

AI models also look for trust signals. Things like verified reviews on third-party sites, expert endorsements, and consistent facts across the web matter a lot. If your product is described one way on your website, another on G2, and a third way in a press mention, the AI sees inconsistency and will likely ignore you.

The fix is straightforward. Audit how you're described in a few external places. Write a single, canonical product description, just one or two precise sentences, and make it your mission to get that language reflected everywhere your brand appears. To an AI, consistency looks like authority.
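One rough way to spot drift is to compare each external description against your canonical one and flag the ones that have wandered. This sketch uses Python's built-in `difflib` for a simple text-similarity ratio. The product name, the listings, and the 0.6 threshold are my assumptions. Text similarity is a blunt proxy, so still read the flagged ones yourself.

```python
from difflib import SequenceMatcher

def similarity(a, b):
    """Character-level similarity between two descriptions (0.0 to 1.0)."""
    return SequenceMatcher(None, a.lower(), b.lower(), autojunk=False).ratio()

def drifted_listings(canonical, listings, threshold=0.6):
    """Names of listings whose text falls below the similarity threshold."""
    return sorted(
        name for name, text in listings.items()
        if similarity(canonical, text) < threshold
    )

# Hypothetical canonical description and external listings.
canonical = "Acme Invoices is invoicing software that automates billing for freelancers."
listings = {
    "website": "Acme Invoices is invoicing software that automates billing for freelancers.",
    "review_site": "Acme is an all-in-one business platform for growing teams.",
}

for name, text in listings.items():
    print(f"{name}: {similarity(canonical, text):.2f}")
print("Needs a rewrite:", drifted_listings(canonical, listings))
```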

Prompt-level optimization tactics that actually move the needle

The prompts your buyers use fall into predictable buckets: "best [category] tools," "how to [solve problem]," "what's the difference between X and Y," and "should I use [tool A] or [tool B]." Your content has to address these directly.

Practically speaking, you should add a comparison section to pages about your category. Add a "who is this for?" section. Write explicit definitions of the problems you solve. I'm not talking about marketing fluff, but the kind of clean definition an AI would feel comfortable quoting.

Trying to track all these prompts across multiple engines by hand is a nightmare. Teams that get ahead of this usually find a system that tracks prompts and citations together, so they can see what's working and where they're still invisible. DeepSmith's AEO capabilities are built to do exactly this, tracking which prompts surface your brand and where citations land, so you're not just guessing what to fix.

Competitor teardown method: from "they're mentioned" to "here's what we publish next"

Here’s my exact workflow. Run 10–15 prompts that match how your buyer talks. For each one where a competitor appears and you don’t, open their cited page. Then answer three questions: What specific subtopic does this page cover? What format does it use (like a definition, comparison, or how-to)? What's the exact claim that probably got it cited?

This gives you a list of missing subtopics and formats. That’s a content backlog, not some vague to-do list. Prioritize the prompts that are closest to a buying decision first.
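To keep the teardown from turning back into a vague list, it helps to record the same fields for every gap and sort by how close the prompt is to a purchase. Here's a minimal sketch. The field names, the URLs, and the 1-to-3 buying-intent scale are my own assumptions.

```python
from dataclasses import dataclass

@dataclass
class Gap:
    prompt: str          # the buyer prompt where a competitor appeared and you didn't
    competitor_url: str  # the page the AI cited
    subtopic: str        # what specific subtopic that page covers
    content_format: str  # e.g. "definition", "comparison", "how-to"
    cited_claim: str     # the claim that probably earned the citation
    buying_intent: int   # hypothetical scale: 1 = researching, 3 = ready to buy

def prioritize(gaps):
    """Order the backlog so prompts closest to a buying decision come first."""
    return sorted(gaps, key=lambda gap: gap.buying_intent, reverse=True)

# Hypothetical teardown results.
backlog = prioritize([
    Gap("what is invoice automation", "https://example.com/glossary",
        "definition of invoice automation", "definition",
        "one-sentence definition in the first line", 1),
    Gap("should I use tool A or tool B", "https://example.com/compare",
        "side-by-side pricing", "comparison",
        "pricing table with per-seat costs", 3),
])

for gap in backlog:
    print(gap.buying_intent, gap.prompt, "->", gap.content_format)
```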

Doing this manually is slow. A tool that shows you keyword clusters, content gaps, and AEO prompt opportunities, like DeepSmith's Topic Explorer, can turn days of research into an afternoon. It can even push those insights into a production queue so the gap between finding a problem and publishing a fix almost disappears.

Mistake #3 — Technical and narrative drift blocks you (even if your content is good)

You can write the most perfect, citable content in the world and still be invisible if AI crawlers can't access it. You can also be blocked if the narrative the AI has built about you is quietly working against you. I've made this mistake, and it's a frustrating one.

Step-by-step: fix the most common AI visibility blockers

Check your robots.txt file first. Think of it as the bouncer at a club. Crawlers get in by default, so the danger is a rule that turns them away: a Disallow line aimed at GPTBot, ClaudeBot, or PerplexityBot, or a blanket block under User-agent: *. Make sure you haven't accidentally disallowed them.

Verify HTTPS is consistent. If some pages are on HTTP or have mixed content errors, crawlers might just skip them. A quick site audit in any standard SEO tool will find these.

Submit an updated sitemap. A clean XML sitemap is like giving crawlers a map to your house. It helps them find all your content. If pages are missing from your sitemap, they're much harder to discover.

Fix crawl errors. Broken pages (404s) and long redirect chains slow crawlers down and reduce the chances they'll index and use your content.
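For the robots.txt step, you can check the rules with Python's standard library instead of reading the file by eye. This is a minimal sketch. The sample rules and the `blocked_crawlers` helper are mine, and you'd paste in your own file's contents. Note that the parser treats a crawler with no matching rule as allowed.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

def blocked_crawlers(robots_txt, url="https://example.com/", agents=AI_CRAWLERS):
    """Return the crawlers that the given robots.txt text blocks from `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]

# Hypothetical robots.txt that shuts out one AI crawler by accident.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(sample))  # ['GPTBot']
```

An empty list means none of the three is being turned away at the door. Anything else is your first fix.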

Schema that helps AI understand and reuse your content (without going crazy)

You don't need every type of schema. Just focus on three schema.org types: FAQPage for any page with question-and-answer content (which is exactly what AIs love), HowTo for process pages, and Product for your main product pages. These tell a machine reader what kind of content it's looking at, which should make it easier to pull from.

Don't just add schema everywhere for the sake of it. Add it where the content genuinely fits the schema type.
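If you've never written schema by hand, here's roughly what FAQ markup looks like when generated from a list of questions and answers. It follows the schema.org FAQPage structure. The question and answer text is placeholder copy, and `faq_jsonld` is just a helper name I made up.

```python
import json

def faq_jsonld(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Placeholder copy; use the real questions and answers from the page.
markup = faq_jsonld([
    ("Who is this tool for?", "Freelancers who send fewer than 50 invoices a month."),
])

print(json.dumps(markup, indent=2))
```

The printed JSON goes inside a `<script type="application/ld+json">` tag on the page that actually contains those questions and answers.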

What to do when AI mentions you negatively (or inaccurately)

First, find the source. Run the prompt that gives you the bad answer across multiple platforms. See if there's a pattern. Usually, you can trace it back to a specific piece of content somewhere on the web.

Next, publish a correction. Write a clear, factual page that directly addresses the inaccuracy. Don't be defensive, just be authoritative. Make sure the correct information is in a format an AI can easily quote. Then, work on strengthening other pages, like third-party reviews or press mentions, that reflect the correct story.

Finally, monitor the situation. AI responses update as new content gets indexed. Re-check the problem prompts every few weeks and watch for the narrative to shift in your favor.

How to run an "AI search war room" in 60 minutes a week (SMB workflow)

The goal here is not to build a new department. It's to build a simple habit that compounds over time. Every week, you'll know a little more about where you stand and have one concrete thing to fix or publish.

The weekly loop: prompts → gaps → publish → check citations

Monday: Run your prompt list (10–15 queries across two or three platforms). Log where competitors appear and you don’t. Find one gap.

Wednesday: Assign that gap to your content queue. Write or brief one piece that directly addresses the prompt you identified.

Friday: Check if any content you published in the last month has started generating citations. Note what’s working.

That’s maybe 15 minutes on Monday, a quick brief on Wednesday, and 15 minutes on Friday. The rest of the week, you get to do your actual job.

The monthly loop: refresh winners, fix losers, expand your scope

Once a month, look at which of your pages are getting cited and which are duds. For the pages that are working, expand on them. Add new sections, update the data, or add a comparison table. For pages that aren't working, check them against the citation readiness checklist from Mistake #2 and fix the most obvious problem.

Then, expand your prompt list with five to ten new queries based on what you're hearing from buyers in sales calls or support tickets. This is how you build real coverage instead of just chasing one-off wins.

Consistency is almost always the bottleneck, not strategy. The founders who stay on top of this usually need a system that supports a steady cadence from brief to draft to publish without a lot of tool-hopping. That’s what Autowrite in DeepSmith is built for. It helps you keep the pipeline moving even when you’re being pulled toward product or fundraising.

Where to integrate this with the rest of marketing (so your message stays consistent)

This work shouldn't live in a silo. The prompts you optimize for should inform your paid search campaigns. The canonical product description you write for AI consistency should be the same language in your sales deck. The comparison content you publish for AEO is also great competitive enablement for your sales team.

The connection point is simple: any time you publish something for AI citation, make sure your social and email channels know about it. Content that earns engagement and links gets discovered and referenced in more places, so distribution can shorten the time it takes to start getting cited.

Vendor/tool evaluation questions (if you decide to use a tracker)

You don't need a tool to get started. But once your prompt list gets to 30+ queries across four platforms, spreadsheets become a real pain. If you decide to get a tool, here's how to figure out what's worth paying for.

Capabilities checklist: what matters vs. what's noise

Here are the non-negotiables: multi-engine support (ChatGPT, Perplexity, Gemini, Google AI Overviews at minimum), prompt-level tracking, citation context (what the AI said about you), competitor benchmarking, and historical trend data.

Nice-to-haves include automated gap identification and direct integration with your content workflow.

Skip any tool that only tracks one platform, only gives you an aggregate score without details, or doesn't show you the actual AI response text.

Questions to ask before you trust any "visibility score"

How is the score calculated? A score built on 20 generic prompts on one platform is basically useless.

How often is the data refreshed? AI responses change fast. You should expect weekly updates at a minimum.

Does the tool show you competitor scores for the same prompts, or just your own? Without competitive context, a score tells you very little about where to focus your effort.

Your Next Steps for Showing Up in AI Answers

Pick one thing from this article and do it today. The easiest start is the competitor teardown: run five prompts on two platforms and write down the first content gap you find. It'll take 30 minutes. From there, fix one technical blocker this week and publish one citation-ready page before the month is over. Then, put a 60-minute block on your calendar every week and protect it.

AI search visibility is built with small, consistent moves. The founders who are closing the gap aren't working harder than you are. They just started the loop a little earlier.