ChatGPT is amazing at getting words on the page fast. If you're staring at a blank doc, it's basically a defibrillator.

But if you've tried to publish what it gives you "as-is," you already know the problem: the output reads like content instead of something a real person would stake their name on.

That's not a knock on the tool. It's a mismatch of expectations.

ChatGPT is strongest when it's executing decisions you've already made (audience, angle, proof, boundaries, voice). When you don't provide those decisions it has to guess. And the safest guess is usually a generic first draft.

This article explains where the gap comes from and the workflow that consistently fills it.

What ChatGPT does well (and where it breaks down)

If you use ChatGPT like a slot machine you'll get a lot of "kind of fine" writing. If you use it like a thinking partner it becomes wildly more useful.

But first you need to know what it's actually good at and what it's not.

The speed advantage: structure and ideation

ChatGPT is a productivity multiplier for the parts of writing that are expensive in time, not necessarily expensive in judgment.

It's great at:

- Turning a messy topic into a coherent outline

- Brainstorming angles, counterpoints and subtopics

- Drafting starter paragraphs so you're not stuck

- Reformatting content (bullets, tightening, headline options)

- Producing variations quickly for different audiences or tones

In other words: it's excellent at converting intent into text. Once the intent is clear.

Why first drafts feel so… meh

When you give it a prompt like "Write a blog post about onboarding emails" you didn't actually give it a writing task. You gave it a guessing task.

It now has to infer:

- Who the reader is in their lived reality (not just a job title)

- What the reader already knows

- What you believe (your stance)

- What you've seen work (your experience)

- What claims are allowed vs. off-limits

- What examples would be credible

When it guesses it defaults to what I call the "professional internet voice." Polite, balanced, broadly correct. Mostly forgettable.

That's pattern completion at work (not real-world insight). So you get boilerplate writing that sounds like a summary of other summaries. It's not wrong. Just not publish-ready.

Why ChatGPT alone doesn't get you to published

Publishing isn't "drafting but longer." Publishing is drafting plus ownership.

Ownership means: the article sounds like someone real wrote it, the claims hold up and the reader leaves with something they can actually use.

What AI drafts usually miss

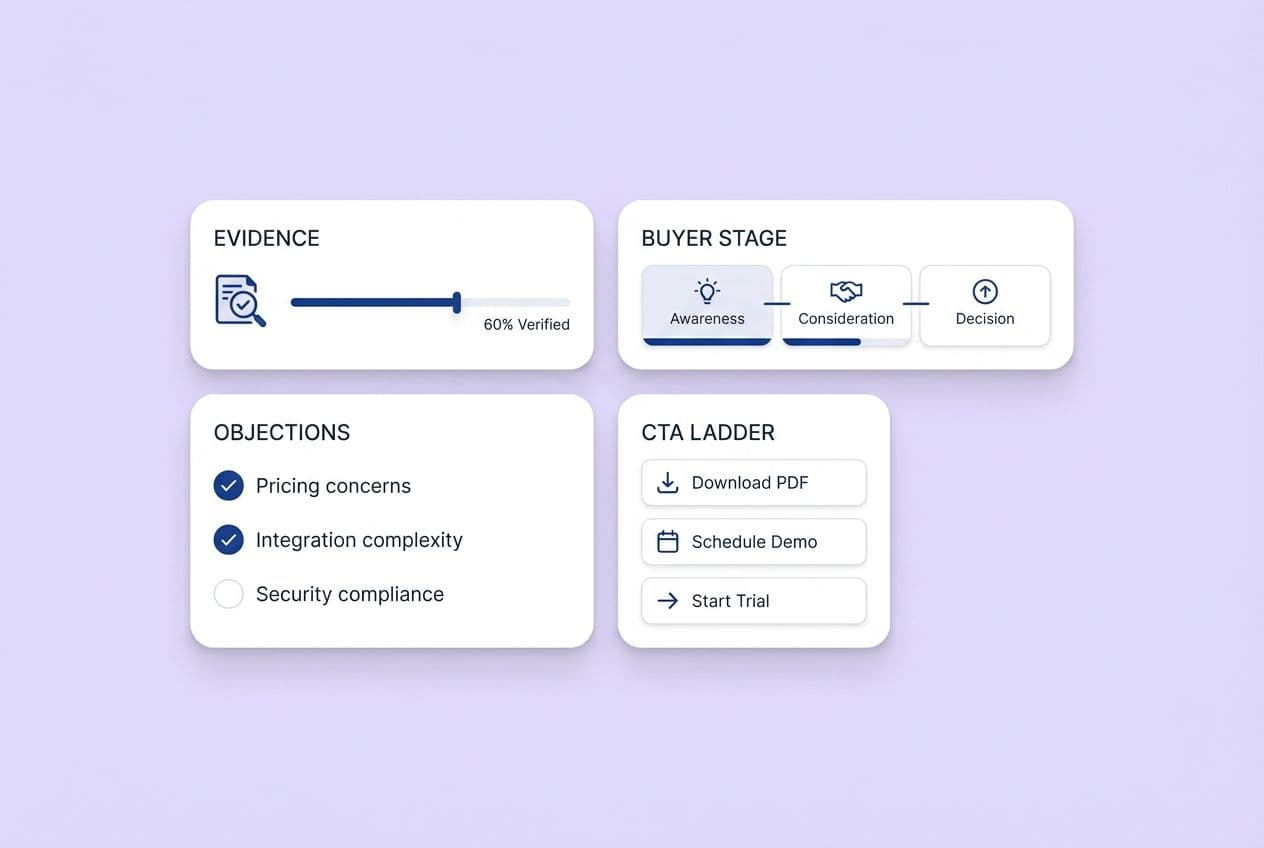

Most AI-generated content misses the same things:

- Reader context: It speaks to a role ("marketers") instead of a situation ("you're shipping weekly and your lifecycle emails are an afterthought")

- Voice: It's competent but doesn't sound like you. No edge, no taste, no opinion

- Proof: It makes points without mechanisms or examples that show the point is earned

- Claim discipline: It can overstate or imply certainty where you should be qualifying

- Specificity: It says what's true in general, not what matters in practice

- Originality: It recombines common patterns but doesn't originate ideas from lived experience

A publishable article usually contains proof elements (scenarios, templates, mechanisms or use-now assets). AI drafts often contain advice but not equipment.

Why editing weak drafts costs more than writing fresh

Here's the frustrating part: rewriting a weak AI draft can cost more than writing fresh.

Why?

You spend time untangling structure that looks organized but isn't aligned to your real argument. You end up doing line-by-line repairs because the tone is "almost right" but consistently off. You're forced into reactive editing instead of proactive thinking.

And you still have to fact-check and add proof. Which is the hard part anyway.

If your process is "generate → cringe → rewrite everything" you're using AI as a bad intern. The fix isn't a bigger prompt library. The fix is a workflow that prevents the bad draft in the first place.

A workflow that actually works

This is the workflow I keep coming back to because it's realistic. It doesn't require 40 prompts or a complicated system. It just forces the right decisions in the right order.

Brief → Structure → Draft → Voice pass → Proof pass → Use-now assets → Final skim

Start with a detailed brief (not a topic)

Briefing is where most people fail. And it's why the draft comes out generic.

A useful brief answers:

- Who this is for (their lived reality, not just their job title)

- What problem they're trying to solve this week

- What you want them to think or do differently after reading

- Your stance (what you agree or disagree with in the usual advice)

- Claim boundaries (what you will not claim and what you're unsure about)

- Proof requirements (what must include an example or template)

Here's a "good enough" briefing template:

- Reader context: [describe situation, constraints and what they've tried]

- Purpose: [thought leadership / SEO / content marketing]

- Angle: [your stance in one sentence]

- Must include: [3–5 points that must appear]

- Proof slots: [where you want examples or templates]

- Claim boundaries: [no stats unless provided, qualify uncertain claims]

- Voice rules: [short sentences, direct, no corporate filler]

Claim boundaries matter more than people think. They don't just reduce hallucinations; they force you to decide what you actually know versus what you're hand-waving.
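If you reuse the brief across articles, it can help to keep it as a small structure instead of retyping it. Here's a minimal sketch in Python, assuming you paste the rendered text ahead of your request. The `Brief` class and its field names are my own illustration of the template above, not part of any tool.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """Hypothetical container mirroring the briefing template fields."""
    reader_context: str
    purpose: str
    angle: str
    must_include: list[str]
    proof_slots: list[str]
    claim_boundaries: list[str]
    voice_rules: list[str]

    def missing_fields(self) -> list[str]:
        """Return the names of fields that were left empty."""
        return [name for name, value in vars(self).items() if not value]

    def to_prompt(self) -> str:
        """Render the brief as plain text to paste ahead of a request."""
        def bullets(items: list[str]) -> str:
            return "\n".join(f"  - {item}" for item in items)

        return "\n".join([
            f"Reader context: {self.reader_context}",
            f"Purpose: {self.purpose}",
            f"Angle: {self.angle}",
            "Must include:\n" + bullets(self.must_include),
            "Proof slots:\n" + bullets(self.proof_slots),
            "Claim boundaries:\n" + bullets(self.claim_boundaries),
            "Voice rules:\n" + bullets(self.voice_rules),
        ])
```

Calling `missing_fields()` before `to_prompt()` is the useful part: an empty claim-boundaries or proof-slots field is usually a decision you haven't made yet, not a formatting gap.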

Ask for structure before drafting

Don't ask for a full article first. Ask for structure.

Why this works: structure is where you decide what gets emphasized, what gets cut and how the argument flows. If you skip this step you'll be "editing with a chainsaw" later.

Prompts that work well:

- "Propose 3 possible outlines for this brief. Each outline should include where proof elements go."

- "Give me a structure that starts with the reader's problem, then a clear mechanism, then practical steps."

- "Include proof slots as placeholders like: [EXAMPLE], [SCENARIO], [TEMPLATE]."

Then you pick one outline and adjust it like an editor. Cut sections you won't support with proof, reorder to match how your reader thinks, add the one point you actually care about.

Draft with proof slots already in place

Now you draft, but with intent.

Instead of hoping ChatGPT invents good proof you tell it where proof must appear. Proof slots are placeholders that prevent the article from turning into pure abstraction.

Examples:

- [SCENARIO: founder with no content team trying to publish weekly]

- [MECHANISM: why topic-only prompts force guessing]

- [TEMPLATE: a briefing checklist the reader can reuse]

Experience signals are small details that communicate "this was written by someone who's been in it." A few safe signals you can add:

- The tradeoff you had to choose (speed vs precision)

- What usually goes wrong in practice

- A constraint (time, budget, approvals)

- The moment you realized the obvious advice didn't work

Simple rule: every major section should contain at least one proof element. If it doesn't it will read like boilerplate.
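That rule is easy to check mechanically if your outline uses bracketed placeholders. Here's a rough sketch, assuming the outline is stored as a mapping from section heading to section text. The function name and the list of slot labels are illustrative, so adjust them to whatever placeholders you actually use.

```python
import re

# Matches placeholders such as [EXAMPLE] or [SCENARIO: founder with no content team].
# The four labels are the ones used in this article; extend the list as needed.
PROOF_SLOT = re.compile(r"\[(EXAMPLE|SCENARIO|MECHANISM|TEMPLATE)(?::[^\]]*)?\]")


def sections_without_proof(outline: dict[str, str]) -> list[str]:
    """Return the headings of sections whose text contains no proof slot."""
    return [
        heading
        for heading, body in outline.items()
        if not PROOF_SLOT.search(body)
    ]


outline = {
    "Why drafts feel generic": "[MECHANISM: why topic-only prompts force guessing]",
    "The workflow": "Brief first, then structure, then draft.",
    "Briefing checklist": "Here is the checklist: [TEMPLATE]",
}
print(sections_without_proof(outline))
```

Any heading it returns is a section to either give a proof slot or cut. It only checks that a placeholder exists; whether the proof you eventually write is any good is still your call.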

Run a voice pass (consistency without adding new claims)

A voice pass is not "make it better." It's "make it sound like us."

Rules:

- Don't add new facts

- Don't add new claims

- Don't introduce fresh advice

You're only adjusting:

- Sentence rhythm (shorter, clearer)

- Vocabulary (your real terms)

- Confidence level (more direct where you're sure, more qualified where you're not)

- Removing filler (e.g. "In today's fast-paced landscape…")

If you mix voice with proof you'll lose track of what's been validated. Keep them separate.
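One cheap guard for the "no new facts" rule is to compare the numbers in the text before and after the voice pass. The sketch below is a heuristic under that narrow assumption: it only catches figures that appear in the edited version and not in the original, and it won't notice a new claim made in words. The function name is hypothetical.

```python
import re

# Integers, decimals and percentages, e.g. 3, 2.5, 1,200, 40%
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def new_numbers(before: str, after: str) -> set[str]:
    """Return numeric tokens present in `after` but absent from `before`."""
    return set(NUMBER.findall(after)) - set(NUMBER.findall(before))


before = "We publish weekly with 2 editors."
after = "We publish weekly with 2 editors and saw 40% more replies."
print(new_numbers(before, after))
```

If the result isn't empty, something factual slipped in during a pass that was supposed to change style only. Move it to the proof pass or remove it.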

Run a proof pass (fact-check and claim discipline)

The proof pass is where "publishable" is won or lost.

This pass is about credibility not style.

Checklist:

- Validate any factual statements you intend to keep

- Remove implied numbers ("most," "always") unless you can support them

- Add qualifiers where reality is messier

- Replace vague claims with mechanisms ("because X leads to Y") or cut them

- Ensure you didn't accidentally create a case study or statistic

When AI drafts sound confident it doesn't always mean they're correct. A proof pass is you taking back responsibility.
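Part of that checklist can be pre-screened before you read. Here's a small sketch, assuming the draft is plain text with one sentence or paragraph per line. It flags lines with implied numbers or literal figures so you know where to look. The word list starts from "most" and "always" above; the other words are my additions, and the function name is illustrative.

```python
import re

# "most" and "always" come from the checklist; the rest are assumed additions.
QUANTIFIERS = re.compile(r"\b(most|always|never|every|all)\b", re.IGNORECASE)
FIGURES = re.compile(r"\d")


def flag_for_proof_pass(draft: str) -> list[tuple[int, str]]:
    """Return (line number, reason) pairs for lines that need manual review."""
    flagged = []
    for number, line in enumerate(draft.splitlines(), start=1):
        reasons = []
        if QUANTIFIERS.search(line):
            reasons.append("implied number")
        if FIGURES.search(line):
            reasons.append("figure to verify")
        if reasons:
            flagged.append((number, ", ".join(reasons)))
    return flagged


draft = (
    "Most teams skip the brief.\n"
    "The brief takes time.\n"
    "Open rates rose 30% after the change."
)
print(flag_for_proof_pass(draft))
```

This doesn't validate anything. It just gives you a list of places where the draft is making a claim you need to support, qualify or cut.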

Add use-now assets

This is the part that turns "nice article" into "I'm bookmarking this."

Use-now assets are things the reader can apply immediately without extra tooling or research.

Examples:

- A one-page checklist

- A prompt template

- A rubric ("if your draft has these 3 symptoms it's still first-draft quality")

- A set of copy/paste placeholders (proof slots)

- A lightweight workflow guide

You don't need to write a novel. You just need to leave the reader with something that survives beyond the tab being closed.

Final skeptical read

This sounds goofy. It works.

In the final skim, read the piece like a skeptical reader who's busy and slightly annoyed.

You're looking for:

- Places you make a claim without earning it

- Sections that could be deleted with no loss

- Advice that's technically true but not actionable

- Jargon you wouldn't use in a real conversation

If a paragraph doesn't change what the reader understands or does it's probably fluff. Cut it.

Common mistakes that kill AI content quality

Most AI content problems are workflow problems (not model problems).

Mistake 1: Topic-only prompts

Topic-only prompts force the AI to guess everything that makes an article worth reading.

Fix: Always include reader context, angle, proof requirements and claim boundaries. A job title isn't enough. "Head of Growth at a Series A SaaS who needs content but has no writer" is closer.

Mistake 2: Confusing structure with substance

A clean outline can still produce a hollow article because structure is just containers. Substance is what you put inside: mechanisms, scenarios, templates, tradeoffs.

Fix: Add proof slots to the outline before drafting. Require at least one proof element per major section.

Mistake 3: Mixing voice with proof

If you edit tone and facts at the same time you'll miss problems in both.

Fix: Do a voice pass where you change style only. Do a proof pass where you validate claims. This separation sounds pedantic but it's how you keep your edits from turning into a messy rewrite spiral.

Mistake 4: Ignoring claim boundaries

AI loves confident language. Publishing loves accuracy.

Fix: Tell the model upfront what it's not allowed to claim. Remove superlatives unless you can support them. Replace hype with specificity ("here's when this works, here's when it doesn't").

Mistake 5: Overwriting instead of guiding upfront

If you find yourself constantly rewriting it usually means the AI wasn't given enough constraints early.

Fix: Spend more time on the brief and structure. Iterate prompts in smaller chunks (section by section). Ask for alternatives ("give me 3 intros with different stances") instead of accepting the first attempt.

Make AI your thinking partner (not a vending machine)

The biggest upgrade isn't a better model. It's treating the model differently.

If you treat ChatGPT like an "article vending machine" you'll be disappointed. If you treat it like a thinking partner that drafts after you decide you'll get leverage.

What AI is guessing when you provide limited input

When you provide limited input AI is guessing:

- What you mean by "best practices"

- What level of reader sophistication to assume

- What objections to address

- Whether you want thought leadership or SEO filler

- What examples would be believable

And when it guesses it tends to choose the safest middle. That's why a thin prompt produces content that feels like it was written for everyone and therefore no one.

Shared decision-making produces better content

Shared decision-making sounds fancy. It's simple:

- You make the high-level calls

- The AI accelerates execution

Practical ways to do this:

- Ask it to propose options then you choose ("3 angles, 3 outlines, 3 hooks")

- Tell it your stance and have it argue against you (to sharpen your position)

- Have it generate questions a skeptical reader would ask then answer them

- Use it to compress time between decisions and draft without outsourcing ownership

When you do this the output starts to feel like it has a brain behind it. Because it does: yours.

Practical next steps: how to start this week

If you want a better publish-ready hit rate starting this week do this:

- Stop asking for full articles from a topic-only prompt

- Write a one-page brief with reader context, angle, claim boundaries and proof requirements

- Ask for structure first with proof slots included

- Draft section-by-section instead of generating one massive first draft

- Run two separate editing passes: a voice pass then a proof pass

- Add at least one use-now asset (template, checklist, rubric) before publishing

- Do a final read-aloud skim as a skeptical reader and cut anything that doesn't earn its space

That's the gap-filler. Not "better prompts" but a better sequence.

Take one article you've been meaning to publish and run it through the workflow in this post. You'll feel the difference by the third step, drafting, because the draft will stop guessing what you mean.