Bootstrapped content marketing has one core problem: you don't have enough of anything.

Not enough time. Not enough people. Not enough cash. Not enough "we'll fix it later" bandwidth.

So if you try to do content like a well-funded company, you'll either burn out or quit after two weeks. The fix? Treat content like an experiment: tight scope, clear hypothesis, simple measurement, and aggressive reuse.

This post gives you a full 90-day strategy you can actually run with a micro-budget. No fluff. No "just publish 4x a week." Just a system you can execute.

What is a 90-Day Content Experiment?

A 90-day experiment is long enough to create signal but short enough to stay honest.

You're not "becoming a content brand." You're running a controlled test: If we publish X for Y audience, distributed through Z channels, we expect to see measurable movement in a few key metrics.

The core elements

A 90-day content experiment is a time-boxed content program with:

- A defined goal (not "get more traffic")

- A specific audience segment

- A small set of content types

- A distribution plan (content amplification)

- A measurement plan

- A cadence of review + iteration

The point is learning. Not perfection.

Done right, you finish 90 days with answers like:

- "Our mid-funnel (MOFU) content drives demo requests, but top-funnel list posts don't."

- "LinkedIn distribution beats X community for us."

- "Our audience engages with teardown posts, not opinion pieces."

- "We can produce one high-quality pillar per week if we batch it."

That's worth way more than a random pile of blog posts.

Why 90 days works when you're bootstrapped

Bootstrapped teams need fast feedback loops.

A year-long content plan sounds responsible, but it's usually fiction. Your positioning changes. Your ICP tightens. Your product changes. AI search behavior changes. Your time disappears.

A 90-day plan works because:

- It forces ruthless prioritization.

- It gives you enough reps to improve (writing, distribution, topic choice).

- It's short enough to pivot without ego.

- It fits how startups actually operate: sprints, launches, chaos.

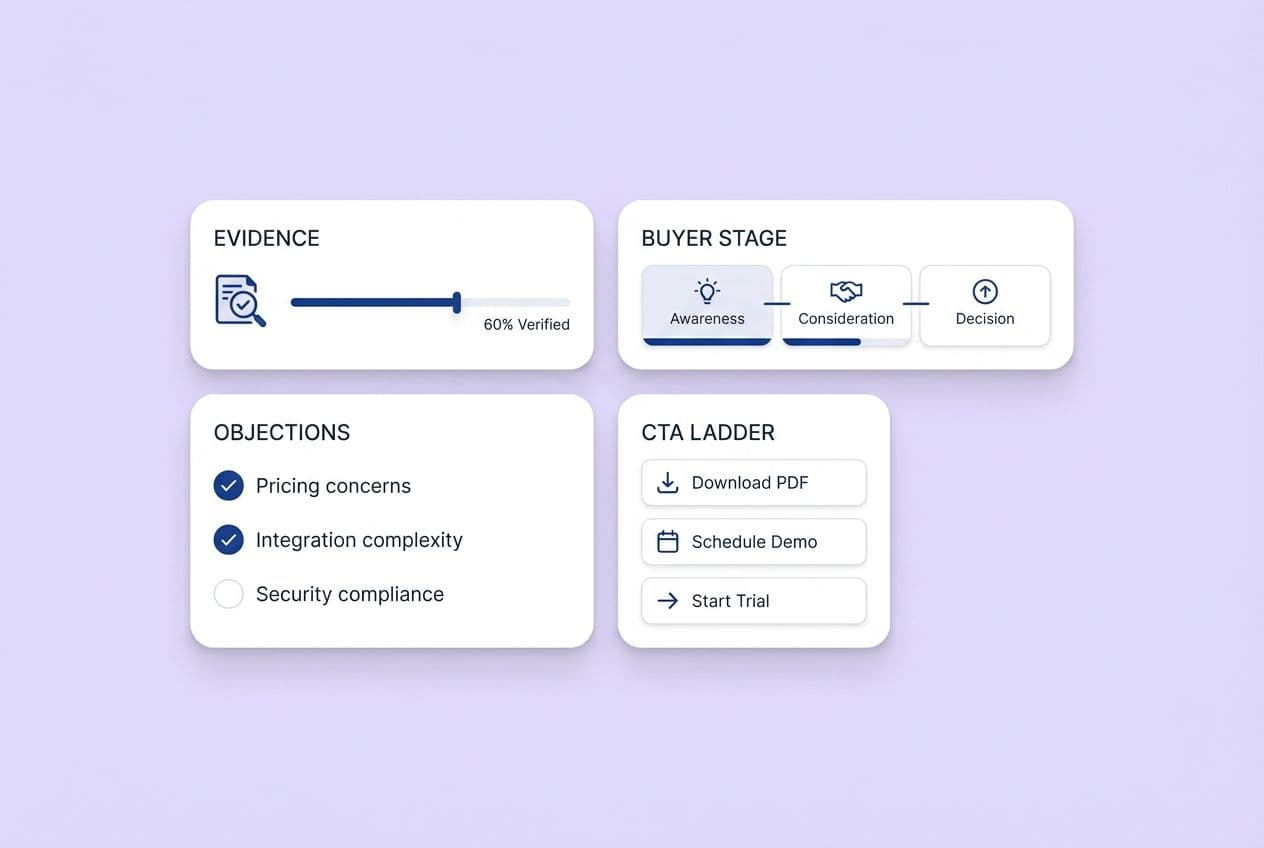

Also, search is shifting. With more top-funnel queries getting answered directly in AI interfaces, the content that often matters most is mid-funnel (MOFU) content. Trust-building stuff. Objection-handling stuff. "Help me choose" content. That stuff can move pipeline even before you're ranking #1 for big head terms.

Goals that match your stage

You need goals that match your stage. Pick one primary goal and two supporting goals.

Here are realistic options for bootstrapped teams:

Primary goal options

- Build a repeatable weekly publishing workflow you can sustain.

- Generate a small, consistent flow of qualified leads (even if it's single digits).

- Improve pipeline velocity by answering common objections with MOFU content.

- Build an email list you can nurture (owned channel beats rented attention).

- Increase AI citation frequency for a small set of prompts (Answer Engine Optimization, or AEO).

Supporting goal examples

- Publish 8–12 "main" pieces in 90 days.

- Ship 40–80 distribution assets (posts, snippets, emails).

- Improve conversions on one key page (e.g., demo request page) via internal linking.

- Run 2–3 marketing experiments (A/B tests or channel tests) with clear outcomes.

One warning: don't set a goal like "10,000 sessions in 90 days" unless you already have momentum. You'll either lie to yourself or make bad decisions chasing volume.

How to design your experiment on a shoestring budget

This is where most plans fail: they plan the content but not the experiment. You want to design it like a small science project.

Here's the simplest structure that works:

- Goal: what you want

- Hypothesis: what you believe will cause it

- Variables: what you'll change vs keep constant

- Cadence: what you'll publish and when

- Distribution: how people will actually see it

- Metrics: how you'll judge it
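If it helps to make the structure concrete, here is a minimal sketch of the six parts as a small Python record. The class name `ExperimentPlan`, its field names, and the example values are all hypothetical. The two sanity checks borrow the limits from the checklist in the appendix (1–2 channels, 3–5 KPIs).

```python
from dataclasses import dataclass


@dataclass
class ExperimentPlan:
    """One 90-day content experiment (illustrative structure, not a standard)."""
    goal: str
    hypothesis: str
    variable: str          # the one thing you change
    constants: list[str]   # what you hold steady
    cadence: str
    channels: list[str]    # distribution
    metrics: list[str]

    def warnings(self) -> list[str]:
        """Flag plans that are drifting toward 'too complex'."""
        issues = []
        if len(self.channels) > 2:
            issues.append("more than 2 distribution channels")
        if not 3 <= len(self.metrics) <= 5:
            issues.append("expected 3-5 metrics")
        return issues


# Hypothetical example values, for illustration only.
plan = ExperimentPlan(
    goal="Generate a small, consistent flow of qualified leads",
    hypothesis="If we publish one comparison post per week, demo clicks "
               "rise because active buyers look for comparisons",
    variable="distribution channel",
    constants=["content type", "primary CTA"],
    cadence="1 post/week",
    channels=["linkedin", "newsletter"],
    metrics=["page views", "newsletter replies", "demo clicks"],
)
print(plan.warnings())
```

An empty list means the plan stays inside those limits. Anything else is a prompt to cut scope before you start.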

What to prioritize when resources are limited

When you're bootstrapped, you can't do everything. So you need a priority system that favors leverage.

Use this rule:

- If it helps you learn faster, prioritize it.

- If it helps you ship faster, prioritize it.

- If it helps you convert faster, prioritize it.

In practice, that means your first 90 days should skew toward consideration-stage content (MOFU content) and "painkiller" topics. Things like:

- "Alternatives to X"

- "How to choose Y"

- "X vs Y"

- "Common mistakes when doing Z"

- "What to look for in a [category] tool"

- "Implementation guide / checklist"

- "Pricing and budgeting guides"

- "Security/compliance explainers" (if relevant)

Why? Because MOFU content matches active buyers. It also tends to perform better in AI-driven discovery environments because it's specific, comparative, and decision-oriented. (Plus, it actually helps people instead of just fishing for clicks.)

If you're stuck, prioritize topics using a simple scoring method. For each topic, score 1–5:

- Buyer intent (will this help a real buying decision?)

- Specificity (does it answer a narrow question clearly?)

- Differentiation (can you say something real from experience?)

- Reusability (can it become many downstream assets?)

- Effort (lower effort gets higher score)

Pick the top 8–12 topics and ignore the rest.
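A quick sketch of that scoring method in Python. It assumes the five scores are simply added with equal weight, which is a simplification. The topic names and scores are made up for illustration, and `rank_topics` is a hypothetical helper.

```python
CRITERIA = ("buyer_intent", "specificity", "differentiation", "reusability", "effort")


def total_score(scores: dict[str, int]) -> int:
    """Add up the five 1-5 scores. For effort, lower effort should already be a higher score."""
    for name in CRITERIA:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    return sum(scores[name] for name in CRITERIA)


def rank_topics(topics: dict[str, dict[str, int]], keep: int = 12) -> list[tuple[str, int]]:
    """Return the highest-scoring topics, best first."""
    ranked = sorted(
        ((title, total_score(scores)) for title, scores in topics.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return ranked[:keep]


# Made-up scores, for illustration only.
topics = {
    "X vs Y": dict(buyer_intent=5, specificity=4, differentiation=4, reusability=4, effort=3),
    "How to choose Y": dict(buyer_intent=4, specificity=4, differentiation=3, reusability=3, effort=4),
    "Industry trends roundup": dict(buyer_intent=2, specificity=2, differentiation=2, reusability=3, effort=4),
}
print(rank_topics(topics, keep=2))  # [('X vs Y', 20), ('How to choose Y', 18)]
```

If buyer intent matters more to you than effort, you could weight the criteria. Start with the plain sum and only add weights if the ranking looks wrong.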

Time vs. money tradeoffs

Bootstrapped budgeting is really time allocation disguised as money allocation.

Below is a practical way to allocate both.

The "bootstrapped 80/20" rule

A good default:

- 80% of your effort goes to proven channels and formats.

- 20% goes to experimentation (new channel, new format, new CTA, new distribution approach).

That 20% experimentation slice matters because it's how small teams discover unfair advantages. The split is a common rule of thumb in growth circles: it keeps you learning without derailing execution.

Micro-budget categories (cash)

Even if you spend close to $0, think in buckets:

- Tools (AI, scheduling, analytics helpers)

- Design (optional; templates help)

- Distribution (optional small boosts)

- Talent (optional fractional editing)

If you have some cash, one important note: AI-generated content can be significantly cheaper than fully human-written content per post. Ahrefs has published benchmarks showing AI content costing roughly 5x less than traditional approaches, and many companies keep AI tooling costs under a few hundred dollars a month. You still need human judgment, but AI can reduce the cost of drafts, outlines, repurposing, and first-pass edits.

Micro-budget categories (time)

Time is your real constraint. Here's a sane weekly baseline for a solo founder or one-person marketing team:

- 2–3 hours: research + outlining

- 2–3 hours: drafting + editing (with AI help)

- 1 hour: publishing (formatting, images, links)

- 1–2 hours: distribution + community engagement

- 30 minutes: measurement + notes

That's roughly 6.5–9.5 hours/week. Aggressive but survivable.
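Here is the arithmetic behind that range as a short Python sketch, so you can swap in your own numbers. The dictionary keys are just labels. The last function applies the 80/20 rule from earlier to the weekly total.

```python
# (low, high) hours per week for each activity in the baseline above
WEEKLY_HOURS = {
    "research + outlining": (2.0, 3.0),
    "drafting + editing": (2.0, 3.0),
    "publishing": (1.0, 1.0),
    "distribution + community": (1.0, 2.0),
    "measurement + notes": (0.5, 0.5),
}


def weekly_range(budget: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Sum the low ends and the high ends separately."""
    low = sum(lo for lo, _ in budget.values())
    high = sum(hi for _, hi in budget.values())
    return low, high


def experiment_hours(total: tuple[float, float], share: float = 0.20) -> tuple[float, float]:
    """Hours per week reserved for the experimentation slice."""
    return round(total[0] * share, 1), round(total[1] * share, 1)


total = weekly_range(WEEKLY_HOURS)
print(total)                    # (6.5, 9.5)
print(experiment_hours(total))  # (1.3, 1.9)
```

So the 20% slice works out to somewhere between one and two hours a week, which is about one small test.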

If that sounds impossible, cut cadence (not quality). One great post every other week beats four rushed posts you're embarrassed to share.

Picking bootstrapped-friendly content types

In a 90-day experiment, you want formats that are:

- Fast to produce

- Easy to repurpose

- Aligned with real buyer questions

- Not dependent on a big brand or huge audience

These are the best "high impact for low spend" types:

- MOFU blog posts: comparisons, selection guides, objection-handlers

- Checklists and templates: "copy/paste" assets people save and share

- Short newsletter (weekly): summarizing what you learned + linking to the week's post

- Founder-led LinkedIn posts: extracts from your main post (insight, not promotion)

- Customer-question posts: "I keep getting asked X, here's the answer"

If you already have product usage data, you can also do:

- "How we do X" playbooks

- Teardowns of common workflows

- "Mistakes we made" retrospectives

Those are naturally differentiated and don't require paid research.

Tools and workflows to accelerate content production

You don't need a massive stack. You need a workflow that reduces friction.

Your goals:

- One place to store ideas and drafts

- One repeatable process for producing a post

- One repeatable process for distribution

- One place to track results

Using AI for ideation and drafting

AI is most useful when you treat it like a junior assistant. Not a ghostwriter.

Use AI assistants for:

- Expanding an outline into sections

- Creating variants of hooks and headings

- Generating examples you can then correct and adapt

- Turning a blog post into social snippets and email drafts

- Editing for clarity and structure

Use ChatGPT prompts (or similar) that force specificity and prevent generic output. For example:

- "Ask me 10 questions to clarify the audience, pain, and desired action for this post. Then propose 3 angles."

- "Turn this outline into a first draft without adding statistics or claims. Mark any statements that need evidence."

- "Rewrite this section to a clear 8th-grade reading level, keep the voice direct, and remove fluff."

Two rules that keep AI-generated content from turning into mush:

- Provide real inputs (your positioning, your examples, your constraints).

- Do a human pass for accuracy, tone, and "would I actually say this?"

AI can speed you up. It can't own your credibility.

Low-cost tools for scheduling, internal linking, and distribution

You can run this experiment with mostly free tools. What matters is that you actually use them.

Here's a lean stack by job:

Planning and calendar

- Notion / Google Sheets / Trello (simple editorial calendar)

- A basic checklist template for every post

Writing and editing

- Google Docs (collaboration + version history)

- A grammar checker if you like (keep it lightweight)

Publishing

- Your CMS (WordPress/Webflow/Ghost)

- A simple reusable format: intro, sections, CTA, FAQ

Internal linking

- Manual: keep a "link bank" sheet (URL, topic, when to link)

- Automated: if your platform has automated internal linking, it can remove a surprising amount of manual work, especially when you're publishing quickly and don't want to maintain a spreadsheet of every old post.

Distribution

- Native scheduling inside LinkedIn / X where possible

- A free/freemium scheduler if you need it

- Email via whatever you already have (even a basic tool is fine)

- UTM builder (you can do this manually, just be consistent)

Worth noting: some platforms compress the entire content lifecycle (ideation, writing, QA, internal linking, and publishing) into one system. That can help when your bottleneck is management overhead rather than ideas. But the experiment design in this article stands regardless of your tooling choices.

Maintaining quality with minimal overhead

Quality is not "longer." Quality is:

- Accurate

- Clear

- Specific

- Useful

- On-brand

To maintain that without a big team, create a minimum quality bar you never break.

Here's a practical checklist you can reuse for every post:

- The post answers one clear question.

- The intro states who it's for and what they'll get.

- Every section has a point. No filler.

- At least 3 concrete examples, steps, or screenshots are included (when relevant).

- One primary CTA (newsletter signup, demo, or template download; pick one).

- 3–5 internal links to relevant pages/posts.

- One "truth check" pass: remove unsupported claims and vague statements.

If you outsource anything, outsource editing before writing. A good editor can turn your rough drafts into publishable pieces faster than a new writer can learn your product and voice.

Measuring ROI and optimizing during your 90-day experiment

Bootstrapped measurement should be simple enough that you'll actually do it.

If your dashboard feels like a second job, you'll stop looking at it. Then you'll stop learning. Then the experiment dies.

Metrics that matter for bootstrapped content experiments

Most teams obsess over top-funnel metrics because they're easy to see. Your job is to connect content to business reality.

Use a KPI ladder:

Level 1: Consumption (are people seeing it?)

- Page views (directional)

- Time on page (directional)

- Scroll depth (optional)

- Email opens (directional)

Level 2: Trust signals (do they care?)

- Replies to newsletter

- Comments and DMs

- Saves/bookmarks (on social)

- Returning visitors

Level 3: Conversion metrics (does it create opportunities?)

- Newsletter signups

- Demo/contact clicks

- Free trial starts (if relevant)

- "Assisted conversions" (content touched before signup)

Level 4: Revenue impact (later, but track it early)

- Influenced pipeline

- Pipeline velocity (are deals moving faster?)

- Closed-won influenced by content

Also worth tracking now: Answer Engine Optimization (AEO) signals. If you care about AI visibility, track:

- Which prompts/questions you're targeting

- Whether you're getting cited (AI citation frequency)

- Which pages seem to earn those citations

You don't need perfect data. You need consistent data.

Setting up lightweight tracking and reporting systems

Here's a bootstrapped setup you can build in an hour.

1) One tracking sheet

Columns:

- Publish date

- URL

- Topic

- Funnel stage (top/mid/bottom)

- Primary CTA

- Distribution channels used

- UTMs used (yes/no)

- 7-day page views

- 30-day page views

- Conversions (newsletter, demo, etc.)

- Notes (qualitative feedback)
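If you'd rather start the sheet from a script than by hand, here is a minimal sketch that writes those columns as CSV. The snake_case column names, the example row, and the `example.com` URL are placeholders, not a required format.

```python
import csv
import io

# One column per item in the list above
COLUMNS = [
    "publish_date", "url", "topic", "funnel_stage", "primary_cta",
    "channels", "utms_used", "views_7d", "views_30d", "conversions", "notes",
]


def sheet_to_csv(rows: list[dict]) -> str:
    """Render tracking rows as CSV text. Missing fields are left blank."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Placeholder row, for illustration only.
row = {
    "publish_date": "2025-01-13",
    "url": "https://example.com/blog/x-vs-y",
    "topic": "X vs Y",
    "funnel_stage": "mid",
    "primary_cta": "newsletter",
    "channels": "linkedin;newsletter",
    "utms_used": "yes",
    "notes": "two replies asking about pricing",
}
print(sheet_to_csv([row]))
```

The 7-day and 30-day view counts stay blank until you have them. Paste the output into Google Sheets or save it as a `.csv` file.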

2) Basic UTMs

Use UTMs for anything you control:

- Email links

- Social links

- Community posts

- Partner shares

Keep naming consistent:

- utm_source=linkedin

- utm_medium=social

- utm_campaign=90day_experiment_q1

- utm_content=post1_hookA
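To keep the naming consistent without thinking about it, you can build the links with a few lines of Python. This is a sketch using the standard library. The `add_utms` helper and the `example.com` URL are hypothetical, and lowercasing the source and medium is my assumption, on the grounds that analytics tools often treat "LinkedIn" and "linkedin" as different values.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utms(url: str, source: str, medium: str, campaign: str, content: str = "") -> str:
    """Append UTM parameters to a URL, keeping any query string it already has."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query["utm_source"] = source.strip().lower()
    query["utm_medium"] = medium.strip().lower()
    query["utm_campaign"] = campaign.strip()
    if content:
        query["utm_content"] = content.strip()
    return urlunsplit(parts._replace(query=urlencode(query)))


link = add_utms(
    "https://example.com/blog/x-vs-y",
    source="LinkedIn",
    medium="social",
    campaign="90day_experiment_q1",
    content="post1_hookA",
)
print(link)
# https://example.com/blog/x-vs-y?utm_source=linkedin&utm_medium=social&utm_campaign=90day_experiment_q1&utm_content=post1_hookA
```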

3) CRM tagging (if you have a CRM)

Add one field:

- "First content touch" or "Content source"

Or tag contacts that came from:

- Blog CTA

- Newsletter

- Specific post

If you don't have a CRM, don't panic. Track what you can and add lightweight structure later.

4) One weekly "scorecard"

Every week, answer:

- What did we publish?

- What performed best and why?

- What did people say (comments, replies, sales calls)?

- What's the one change we'll make next week?

That's it.

Using feedback loops to iterate and pivot

A 90-day strategy only works if you adapt.

Here's a simple review cadence that fits lean teams:

- Weekly: check distribution + quick performance

- Every 2 weeks: review topics + conversion signals

- Day 30 and Day 60: make a real pivot decision

- Day 90: decide scale, repeat, or stop
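If you want those checkpoints on the calendar up front, here is a small Python sketch that turns a start date into review dates. It assumes the start date is day 0 and that the weekly check happens once a week for 12 weeks. The function name and the example date are arbitrary.

```python
from datetime import date, timedelta


def review_schedule(start: date) -> dict[str, list[date]]:
    """Compute review dates for a 90-day experiment that begins on `start`."""
    weekly = [start + timedelta(days=7 * week) for week in range(1, 13)]
    return {
        "weekly": weekly,
        "every_2_weeks": weekly[1::2],  # weeks 2, 4, ..., 12
        "pivot_decisions": [start + timedelta(days=30), start + timedelta(days=60)],
        "final_decision": [start + timedelta(days=90)],
    }


schedule = review_schedule(date(2025, 1, 6))
print(schedule["pivot_decisions"])  # [datetime.date(2025, 2, 5), datetime.date(2025, 3, 7)]
print(schedule["final_decision"])   # [datetime.date(2025, 4, 6)]
```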

What counts as a pivot?

- Shift from top-funnel to MOFU content if conversions are weak

- Double down on one channel that actually drives clicks and replies

- Change CTAs (newsletter vs demo vs template)

- Change content type (from "ultimate guides" to "decision pages")

A pivot is not "we'll try TikTok." A pivot is "we learned X so we're changing Y."

Common mistakes to avoid when running bootstrapped content experiments

Most bootstrapped experiments fail for boring reasons: too big, too complex, too inconsistent.

Here's how to avoid the big ones.

Balancing content work with other business priorities

This is where founder fatigue shows up.

If content only happens when you "find time," it won't happen.

Use a few survival tactics:

- Batch work: do outlines for 4 posts in one sitting. Drafts in another sitting.

- Theme weeks: one problem, multiple angles (post + LinkedIn + email).

- Office hours: one fixed block per week that's "content only."

- Definition of done: publish when it meets the quality bar, not when it's "perfect."

Also: make content smaller before you make it more frequent.

One strong post with strong distribution beats three posts no one sees.

Don't overcomplicate the experiment

Bootstrapped teams love complexity because it feels like progress.

Don't do this in your first 90 days:

- 5 channels at once

- 8 content types at once

- A full SEO pillar + cluster buildout for 10 pillars

- A multivariate test matrix you'll never finish

Instead, keep constants and change one variable at a time.

Good first experiments:

- Same content type, different distribution channel

- Same distribution, different CTA

- Same CTA, different topic category (MOFU vs top-funnel, or TOFU)

If you can't explain the experiment in one sentence, it's too complex.

Keep your voice consistent when moving fast

Speed kills voice, especially when AI is involved.

To keep consistency:

- Write a one-page "voice sheet" (words you use, words you avoid, tone rules).

- Create 3–5 reusable intros/outros you can customize.

- Keep a "proof points" doc: your real stories, real examples, real opinions.

- Do a final pass reading out loud. If it sounds like a consultant wrote it, rewrite.

If you use AI heavily, anchor it with context. That's the difference between "generic AI-generated content" and content that sounds like you. Some teams do this by storing structured brand context and applying it to every draft, but you can also do it manually with a simple brand doc you paste into your prompt.
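The manual version can be as simple as a helper that puts the same brand doc in front of every request. This is a sketch only: `build_prompt` is a hypothetical function, the wording of the instructions is my own, and the brand doc text is a placeholder for yours.

```python
def build_prompt(brand_doc: str, task: str) -> str:
    """Prepend the same brand context to every request so drafts share one voice."""
    return (
        "Use the brand context below for voice and facts. "
        "Do not add statistics or claims that are not in it.\n\n"
        f"BRAND CONTEXT:\n{brand_doc.strip()}\n\n"
        f"TASK:\n{task.strip()}"
    )


# Placeholder brand doc, for illustration only.
brand_doc = """
Audience: solo founders running marketing themselves.
Tone: direct, plain words, short sentences.
Avoid: hype, buzzwords, unsupported numbers.
"""
prompt = build_prompt(brand_doc, "Rewrite this intro to match our voice: ...")
print(prompt)
```

Paste the result into whichever AI assistant you use. Since every draft starts from the same context, any change to your voice only needs to be made in the brand doc.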

Building on proven ROI: scaling beyond 90 days

If your experiment works, the goal isn't "publish more." The goal is "build a content engine you can sustain."

Sustainable means:

- repeatable workflow

- consistent quality

- distribution baked in

- measurement that doesn't require heroics

Repurposing content to maximize reach and impact

Repurposing is the bootstrapped superpower. It's content amplification without doubling your workload.

Here's a simple repurposing checklist for every "main" blog post:

- 3 LinkedIn posts (different angles: story, takeaway list, contrarian point)

- 1 email newsletter issue (summary + link + one extra insight)

- 5 short social snippets (quotes, steps, "do this not that")

- 1 internal enablement note (for sales/support: key points + links)

- 3 FAQ answers you can reuse later (website, future posts, sales replies)

No nested workflows. No fancy production. Just reuse the thinking.

This also helps with Answer Engine Optimization (AEO) because you're producing multiple clear question-answer style assets from one source, which can increase your chances of being surfaced or cited when people ask AI tools for help.

When to increase investment in content production

Don't scale because you feel motivated. Scale because the data says it's working.

Increase investment when:

- You have at least 2–3 posts that consistently drive conversions or qualified conversations.

- Your distribution channel is producing predictable engagement (comments, replies, clicks).

- You can describe your audience and winning topics clearly.

- You have a workflow that doesn't rely on one person having a "free weekend."

How to scale without blowing budget:

- Add a fractional editor (small retainer) to increase quality and speed.

- Increase distribution effort before increasing publishing volume.

- Create one "pillar" per month and repurpose aggressively.

- Add small paid boosts only to proven winners (not brand-new posts).

Building systems for sustainable growth

The system is the product.

Your sustainable setup should include:

- A rolling quarterly plan (always looking 90 days ahead)

- A single source of truth for topics and priorities

- A repeatable production checklist

- A distribution checklist

- A monthly content review ritual

If you want to reduce manual overhead further, this is where workflow tooling can help. Systems that automate steps like QA, internal linking, scheduling, and turning posts into distribution assets can remove the parts that cause you to stall. The key is not any specific tool; it's removing friction points that prevent consistent execution.

How to evaluate your 90-day experiment's success and next steps

At the end of 90 days you need a decision. Not vibes.

You're choosing one of three paths:

- Scale up (more volume, more distribution, maybe more budget)

- Repeat with a pivot (same effort, smarter direction)

- Stop (content isn't your lever right now)

Setting clear criteria for success

Define success before you start. Otherwise you'll move the goalposts.

Use both quantitative and qualitative measures.

Quantitative (pick 2–3)

- Number of published "main" pieces shipped

- Number of email subscribers gained

- Number of qualified leads attributed or assisted

- Number of demo/contact clicks from content

- Improvement in conversion rate on a key CTA

- AI citation frequency for 3–5 tracked prompts (if you're tracking AEO)

Qualitative (pick 2–3)

- Sales/support says content answers real objections

- You get replies like "this is exactly what I needed"

- People share the content unprompted

- You can clearly name your best-performing topic themes

A win doesn't have to be huge. It has to be real and repeatable.
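One way to keep yourself from moving the goalposts is to write the thresholds down as data before day 1, then grade against them on day 90. Here is a minimal Python sketch. The `Threshold` class is hypothetical and the subscriber numbers are placeholders. The "8 pieces" figure comes from the 8–12 supporting goal earlier in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Pre-registered cutoffs for one metric (illustrative structure)."""
    metric: str
    meh: float      # at or above this is at least "meh"
    success: float  # at or above this is "success"


def grade(threshold: Threshold, actual: float) -> str:
    """Classify a result against cutoffs that were set before the experiment."""
    if actual >= threshold.success:
        return "success"
    if actual >= threshold.meh:
        return "meh"
    return "failure"


# Placeholder cutoffs: decide your own before you start.
pieces = Threshold("main pieces shipped", meh=6, success=8)
subscribers = Threshold("email subscribers gained", meh=25, success=100)

print(grade(pieces, 9))        # success
print(grade(subscribers, 40))  # meh
print(grade(subscribers, 10))  # failure
```

The cutoffs are frozen on purpose. If you find yourself editing them in week 10, that's the goalpost-moving this section warns about.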

Running a post-experiment review

Even if it's just you, do a real review. Put it on the calendar.

Agenda:

- What did we ship? (be factual)

- What performed best? (and why?)

- What surprised us?

- What was harder than expected? (bottlenecks)

- What did the audience tell us? (comments, calls, emails)

- What should we stop doing immediately?

- What is the next hypothesis?

Document this. Your future self will forget.

Planning the next 90 days based on data and capacity

Your next quarterly plan should be a tighter version of the first.

Use what you learned to make obvious moves:

- Double down on the top 2 topic clusters.

- Drop the worst-performing channel.

- Keep one experiment slot (the 20%).

- Improve the workflow bottleneck (editing, distribution, publishing).

If capacity is the limit (it usually is), don't increase volume. Increase reuse and distribution consistency first.

Appendix: 90-day content experiment budget template and simple experiment planner

You can copy these tables into a doc or spreadsheet and adjust them in 15 minutes.

Sample budget allocation by experiment phase

Below are three examples: $0, micro-budget, and "tiny but real" budget. Pick the closest.

Option A: $0 cash budget (time-only)

| Phase | Time focus | What you actually do |
|---|---|---|
| Weeks 1–2 (Setup) | 6–10 hours total | Pick ICP, pick 8–12 topics, set up tracking sheet, write voice sheet |
| Weeks 3–10 (Execution) | 6–9 hours/week | Publish 1 post/week or every other week, share on 1–2 channels, send 1 email/week |
| Weeks 11–12 (Optimization + review) | 2–4 hours/week | Update top posts, improve internal links, tighten CTAs, run final review |

Option B: Micro-budget ($50–$200/month)

| Phase | Spend | What to spend it on |
|---|---|---|
| Setup | $0–$50 | A simple newsletter tool upgrade or domain/email setup if needed |
| Execution | $50–$200/mo | AI assistant subscription, lightweight scheduler, small design template purchase |
| Optimization | $0–$100 | Small boost to your best-performing post or a one-time edit pass |

Option C: Tiny but real budget ($200–$800/month)

| Phase | Spend | What to spend it on |
|---|---|---|
| Setup | $0–$100 | Templates, basic tooling |
| Execution | $200–$800/mo | AI + scheduling + a fractional editor (even a few hours/month) |
| Optimization | $50–$200 | Limited promotion of proven winners, landing page improvements |

No matter which option you choose, the bigger lever is usually distribution discipline, not more writing.

Experiment design checklist

Use this as your simple experiment planner.

- Define your primary goal (one sentence).

- Define your audience (ICP + "pain you solve").

- Write your hypothesis ("If we do X, we expect Y because Z").

- Choose 1–2 content types you'll commit to.

- Choose 1–2 distribution channels you'll commit to.

- Set your cadence (what you can sustain for 12 weeks).

- Choose 3–5 KPIs (mix of consumption, trust, conversion).

- Set up tracking (UTMs + one spreadsheet).

- Reserve 20% effort for experimentation (one variable at a time).

- Schedule review points (weekly, day 30, day 60, day 90).

- Define "success," "meh," and "failure" outcomes before you start.

- Decide your default next step (scale, pivot, stop).