Content can feel like the worst kind of marketing bet.

You publish consistently, track metrics religiously, and... nothing definitive happens. No spike. No flood of leads. Just a slow drip of "maybe this is doing something?"

So you stay in permanent "testing mode." Or you quit after three posts and move on to the next shiny thing.

This article charts the middle path: a clear set of signals that tell you when content is working well enough to commit, plus what "commit" actually means operationally.

Ground rules before we get tactical:

- Content is primarily a brand-building and inbound channel (not pure direct response). That changes how you measure "working."

- Attribution will be imperfect. Your goal isn't perfect measurement—it's confident performance evaluation.

- The right decision isn't "content or no content." It's whether to keep experimenting, scale up, or pivot away, based on evidence and team reality.

Understanding the experimentation phase in content marketing

Experimentation isn't "we wrote some blogs." It's GTM channel validation: you're learning if content can become a reliable, scalable customer acquisition path for your business.

In this phase you answer three questions:

- Can we consistently ship content without burning out?

- Does the market respond in ways that compound?

- Can we connect content to revenue well enough to justify more investment?

What does experimentation look like for content marketing?

A real content experiment has constraints. Without them it's just activity.

Effective content channel testing typically includes:

- One clear audience segment (single persona) you want to win

- One content motion to validate first—usually SEO content, sometimes editorial or technical

- A repeatable cadence (not heroic bursts)

- A small set of KPIs tied to business outcomes

- Documentation of what you did and what happened

What you typically test during experimentation:

- Topic types: pain-focused vs use-case vs comparison vs how-to

- Content formats: long-form guides, landing-page-style posts, templates, opinion pieces

- CTA styles: newsletter, demo, free tool, pricing page

- Distribution add-ons: LinkedIn, email list, founder-led sharing

- Conversion paths: direct signup vs lead gen vs email capture

One critical constraint: don't test 12 things at once. If you can't explain what changed from month to month, you can't learn.

How to measure progress during experimentation

During early experiments you're rarely measuring clean ROI. You're measuring momentum and tractability—whether you're seeing early signs of compounding and whether your team can sustain the effort.

Think in three buckets:

- Output health: Are we publishing? Is quality consistent?

- Market response: Are people engaging, returning, clicking deeper?

- Business pull-through: Any meaningful leads, signups, sales conversations or pipeline influence?

The trap is treating content like paid ads. Paid asks: "Did we spend $X and get $Y back this week?" Content asks: "If we keep doing this for 6–12 months, will the curve bend?"

That's why you need both quantitative and qualitative signals to decide when to commit.

Key quantitative signals indicating content marketing success

You want signals that are:

- Hard to fake

- Trend-based (not one-off spikes)

- Comparable over time

- Connected to business outcomes

Thresholds matter only when paired with your baseline. A seed-stage SaaS going from 200 visits/month to 800 is often a stronger "working" signal than a larger company going from 200k to 205k.

Core KPIs to track: traffic, engagement, conversions, and attribution

Here are the KPIs that tend to matter most in content marketing experiments.

1) Publishing cadence stability

Before traffic, before leads—can you ship consistently?

A strong signal: you've maintained cadence for 8–12 weeks without it becoming an emergency every time.

Why it matters: content is a compounding channel. If your process can't produce reliably, the channel can't compound.

A simple operational metric: planned posts vs published posts each week or month. If you're hitting around 80% of plan for two months, you've got something scalable.
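The planned-vs-published metric is simple arithmetic, so here is a minimal sketch of it. The function name `cadence_hit_rate` and the eight weeks of data are made up for illustration; only the 80% bar comes from the text above.

```python
def cadence_hit_rate(planned: list[int], published: list[int]) -> float:
    """Return published / planned across all periods, as a fraction."""
    if len(planned) != len(published):
        raise ValueError("planned and published must cover the same periods")
    total_planned = sum(planned)
    if total_planned == 0:
        raise ValueError("no posts were planned")
    return sum(published) / total_planned


# Hypothetical eight weeks with two posts planned per week.
planned = [2] * 8
published = [2, 2, 1, 2, 2, 1, 2, 1]

rate = cadence_hit_rate(planned, published)
print(f"Cadence hit rate: {rate:.0%}")
print("At or above the 80% bar:", rate >= 0.80)
```

Summing across the whole window, rather than averaging weekly rates, keeps one bad week from looking worse than it is.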

2) Organic traffic trend

Early on, absolute numbers can be depressing. Look for trends instead.

What matters:

- Organic sessions trending upward over at least 6–10 weeks (excluding one-off spikes)

- More pages beginning to rank

- More queries appearing in Search Console

- Fewer flatlined weeks

If the curve is jagged but the baseline is rising? That's real signal.
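One rough way to check for a rising baseline while ignoring one-off spikes is to compare the median of the earlier weeks with the median of the later weeks. This is an illustrative heuristic, not a standard analytics method; the function name and the weekly numbers are hypothetical.

```python
from statistics import median


def baseline_rising(weekly_sessions: list[int]) -> bool:
    """Compare the median of the later half of weeks with the earlier half.

    Medians are used instead of means so a single spike week
    does not drag the comparison in either direction.
    """
    if len(weekly_sessions) < 6:
        raise ValueError("need at least 6 weeks of data")
    half = len(weekly_sessions) // 2
    return median(weekly_sessions[half:]) > median(weekly_sessions[:half])


# Hypothetical eight weeks of organic sessions; week 3 is a one-off spike.
weekly = [110, 120, 480, 130, 150, 145, 170, 160]
print("Baseline rising:", baseline_rising(weekly))
```

A plain average of the first four weeks would be inflated by the spike and could hide the real improvement in the later weeks.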

3) Share of content earning search demand

A useful validation metric: What percentage of posts get meaningful impressions or clicks within 60–90 days?

In early-stage content a good sign is a growing portion of your content getting consistent impressions, even if clicks are modest. You're not relying on one hit.

If only 1 out of 20 posts gets traction, you're still guessing.
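Here is a small sketch of that validation metric. The `Post` record, the slugs, and the 300-impression bar are all assumptions for illustration; what counts as "meaningful" depends on your own baseline.

```python
from dataclasses import dataclass


@dataclass
class Post:
    slug: str
    impressions_90d: int  # impressions in the first 90 days after publishing


def traction_share(posts: list[Post], min_impressions: int) -> float:
    """Fraction of posts whose 90-day impressions reach the chosen bar."""
    if not posts:
        return 0.0
    earning = sum(1 for post in posts if post.impressions_90d >= min_impressions)
    return earning / len(posts)


# Hypothetical posts, with impression counts as you might read them off Search Console.
posts = [
    Post("pricing-comparison", 1200),
    Post("how-to-onboard", 340),
    Post("opinion-piece", 15),
    Post("template-roundup", 610),
]

share = traction_share(posts, min_impressions=300)
print(f"{share:.0%} of posts cleared the bar")
```

Tracking this share month over month matters more than the exact bar you pick, as long as you keep the bar fixed.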

4) Engagement indicating intent

Focus on actions suggesting a visitor might become a customer:

- Clicks to product pages

- Clicks to pricing

- Clicks to docs (for technical audiences)

- Newsletter signups

- Demo or contact form views

Track depth: visitors landing on content who then move into high-intent pages.

5) Conversion rate on content-to-next-step

You don't need content to convert like paid. You need it to contribute.

Track one or two conversion paths (content → email capture, signup or demo) and look for:

- Conversion rate stability (not "we got 3 demos last week")

- Conversion rate improvements after purposeful changes

Even if volume is low, a stable conversion rate gives you something to scale.

6) Lead quality signals

If content drives leads but they're garbage, that's not a win.

Lightweight ways to evaluate quality:

- Sales notes: "they mentioned X article"

- Qualification rate: percentage of content-sourced leads matching ICP basics

- Time-to-first-meeting: are these leads moving faster or slower?

If content leads consistently fit your ICP better than other channels? Major commit signal.

7) Impact-to-effort ratio

This is the KPI founders actually care about.

A simple version:

- Impact = conversions, qualified leads, pipeline influence, sales conversations started

- Effort = hours plus dollars (writers, tools, editing time, founder time)

You want to see impact rising faster than effort—or effort dropping while impact stays steady. This often happens when process improves or you add automation.

If impact stays flat and effort stays high for months, don't commit. Fix the engine or pivot.
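A minimal sketch of the ratio, under two assumptions that are not in the text above: impact is counted as qualified leads only, and hours are converted to dollars at a hypothetical hourly rate so that effort is a single number.

```python
def impact_to_effort(impact: float, hours: float, dollars: float,
                     hourly_rate: float = 100.0) -> float:
    """Impact per dollar of effort; hours are priced at hourly_rate (an assumption)."""
    effort = hours * hourly_rate + dollars
    if effort <= 0:
        raise ValueError("effort must be positive")
    return impact / effort


# Hypothetical months: (qualified leads, hours spent, dollars spent)
months = [(4, 60, 1500), (6, 55, 1500), (9, 50, 1500)]
ratios = [impact_to_effort(leads, hours, dollars) for leads, hours, dollars in months]

# True only when every month beats the one before it.
improving = all(later > earlier for earlier, later in zip(ratios, ratios[1:]))

for number, ratio in enumerate(ratios, start=1):
    print(f"Month {number}: {ratio * 1000:.2f} qualified leads per $1,000 of effort")
print("Improving month over month:", improving)
```

In this made-up data the ratio improves for both reasons named above: leads go up while hours come down.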

A practical threshold set (use together)

No single metric should decide. Use a bundle:

- You can publish consistently for 8–12 weeks

- Organic impressions and/or sessions show a clear upward trend over 2–3 months

- Multiple posts (not one) are earning impressions or clicks

- You can point to at least one measurable business outcome: email subs, signups, demos or sales mentions

- Your impact-to-effort ratio is improving month over month

If you've got 4 out of 5, you're not guessing anymore.
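The bundle can be sketched as a simple tally. The dictionary keys are shorthand invented here for the five signals; the answers are hypothetical.

```python
def past_guessing(signals: dict[str, bool], required: int = 4) -> bool:
    """True when at least `required` of the signals are met."""
    return sum(signals.values()) >= required


# Hypothetical answers for the five signals in the bundle.
signals = {
    "published_consistently_8_to_12_weeks": True,
    "organic_trend_up_2_to_3_months": True,
    "multiple_posts_earning_impressions": True,
    "at_least_one_business_outcome": True,
    "impact_to_effort_improving": False,
}

print("Signals met:", sum(signals.values()), "of", len(signals))
print("Past the guessing stage:", past_guessing(signals))
```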

Attribution challenges and practical workarounds

Attribution is where many teams get stuck. They say "we can't track it," which becomes permission to either quit too early or experiment forever.

Content is multi-touch by nature: someone reads an article, sees a founder post, gets referred, searches your brand, then converts. Forcing that into a single "source" is maddening.

Practical workarounds:

1) Decide what you're trying to attribute

Two different questions:

- Direct attribution: Did this piece lead to a conversion right now?

- Influence attribution: Did content appear in journeys that later converted?

At an early stage, influence is often the bigger story.

Pick one. If you're PLG, track content → signup and signup activation. If you're sales-led, track content touches in closed-won journeys (even just "they visited the blog").

2) Use "good enough" multi-touch

You don't need a complex attribution model to make a decision. You need consistency.

What "good enough" looks like:

- UTM parameters on links you control (newsletter, LinkedIn, partner shares)

- Consistent definition of "content-sourced" vs "content-influenced"

- Monthly review of assisted conversions or path exploration in analytics

Your aim is performance evaluation, not courtroom-grade proof.
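Tagging the links you control is easy to do consistently with a small helper. This sketch uses Python's standard `urllib.parse` module and the common `utm_source`, `utm_medium`, and `utm_campaign` parameters; the URL and the campaign values are placeholders.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return url with UTM parameters added, keeping any existing query string."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


# Placeholder URL and campaign values for a LinkedIn share.
link = add_utm("https://example.com/blog/guide?ref=home",
               source="linkedin", medium="social", campaign="q3_guides")
print(link)
```

Whatever naming scheme you choose, write it down once and reuse it, because inconsistent values split the same channel into several rows in your reports.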

3) Add a human layer

Add a field on signup or demo forms: "What prompted you to check us out?"

Review responses monthly. You'll see patterns like "Found your guide on X" or "Been reading your blog."

This is qualitative attribution but it's real signal—especially when it repeats.

4) Document experiments like a scientist

Most teams can't tell if content worked because they didn't log what they did.

A lightweight experiment log should include:

- Hypothesis

- Content produced (topics, formats)

- Dates published

- Distribution done

- KPI snapshot at 30/60/90 days

- Learnings and next action

Worth noting: this is boring operational work. It also makes the difference between learning and guessing.
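If a spreadsheet feels too loose, the log fields above map neatly onto a small record type. This is one possible shape, not a prescribed schema; the class name, field names, and sample values are illustrative.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ExperimentLogEntry:
    hypothesis: str
    content_produced: list[str]   # topics and formats
    dates_published: list[date]
    distribution: list[str]
    kpi_snapshots: dict[int, dict[str, float]] = field(default_factory=dict)
    learnings: str = ""
    next_action: str = ""

    def record_snapshot(self, day: int, kpis: dict[str, float]) -> None:
        """Store the KPI snapshot taken 30, 60, or 90 days after publishing."""
        if day not in (30, 60, 90):
            raise ValueError("snapshots are taken at 30, 60, or 90 days")
        self.kpi_snapshots[day] = kpis


# Hypothetical entry for one experiment.
entry = ExperimentLogEntry(
    hypothesis="Comparison posts bring more pricing-page clicks than how-tos",
    content_produced=["comparison: tool A vs tool B", "how-to: first-week setup"],
    dates_published=[date(2024, 3, 4), date(2024, 3, 11)],
    distribution=["LinkedIn", "newsletter"],
)
entry.record_snapshot(30, {"impressions": 800, "pricing_clicks": 12, "signups": 2})
print(entry.kpi_snapshots)
```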

Integrating qualitative and psychological cues in the commitment decision

Even with data, committing to content is emotional. It's a decision to play a longer game, say "no" to other channels temporarily and sustain effort when results are delayed.

Your gut matters, but in the "we can keep doing this without breaking" sense, not the "I feel like it" sense.

Founder and team alignment: assessing capacity and burnout risk

Founder-channel fit is real. If the channel doesn't match how your team works, consistency dies.

Ask honestly:

- Do we have someone who can own content weekly without constant negotiation?

- Do we have access to real expertise (founder knowledge, customer insights, product depth)?

- Does writing or editing energize anyone here?

- Are we shipping content calmly—or only in last-minute sprints?

Burnout shows up early in content because content is "never done." Overload is dangerously easy.

Operational warning signs you're not ready:

- Founder is the bottleneck for every draft

- Publishing depends on weekends

- Every post is a brand-new process

- Your team dreads content days

A commitment decision should include a capacity plan: who owns topic selection, drafting, review, distribution?

If the answer is "uh… me I guess" you're still experimenting.

Balancing data and gut: an integrated decision-making framework

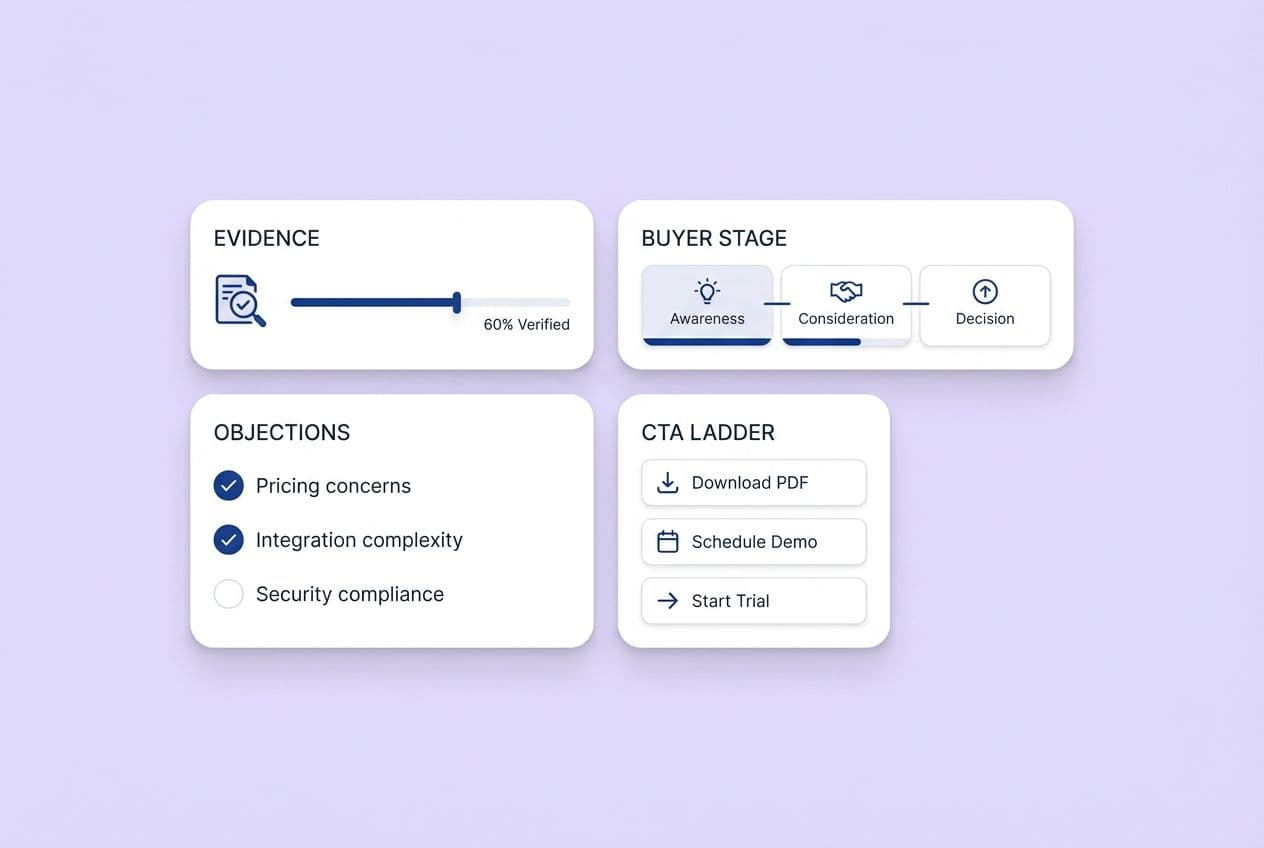

Score content on three dimensions:

- Performance: Is the market responding?

- Repeatability: Can we execute consistently?

- Strategic fit: Does content align with our GTM and strengths?

Use a 1–5 rating for each, consistently month to month.

What "commit" looks like:

- Performance: 4–5 (clear upward trends plus early conversions)

- Repeatability: 4–5 (cadence is stable, not heroic)

- Strategic fit: 4–5 (topics map to product value, sales cycle, ICP needs)

What "keep experimenting" looks like:

- Performance: 2–3 (some traction but inconsistent)

- Repeatability: 3–4 (you can ship but it's clunky)

- Strategic fit: 3–4 (close but positioning needs tightening)

What "pivot" looks like:

- Performance: 1–2 (flat for months despite effort)

- Repeatability: 1–2 (team can't sustain it)

- Strategic fit: 1–2 (audience doesn't buy this way or your sales motion needs outbound first)

Your gut is allowed to be a metric, as long as it's grounded in operational reality, not hope.
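The three score profiles above can be turned into a rough decision rule. The bands overlap (a repeatability score of 4 appears under both "commit" and "keep experimenting"), so this sketch makes a simplifying assumption: commit only when every dimension is 4 or higher, pivot only when every dimension is 2 or lower, and keep experimenting otherwise.

```python
def recommend(performance: int, repeatability: int, strategic_fit: int) -> str:
    """Map three 1-5 scores to a decision using a simplified rule (an assumption)."""
    scores = (performance, repeatability, strategic_fit)
    if any(not 1 <= score <= 5 for score in scores):
        raise ValueError("each score must be between 1 and 5")
    if all(score >= 4 for score in scores):
        return "commit"
    if all(score <= 2 for score in scores):
        return "pivot"
    return "keep experimenting"


# Hypothetical monthly scores.
print(recommend(performance=4, repeatability=5, strategic_fit=4))
print(recommend(performance=3, repeatability=4, strategic_fit=3))
print(recommend(performance=2, repeatability=1, strategic_fit=2))
```

Treating every mixed profile as "keep experimenting" is deliberately conservative: one weak dimension is enough to hold off on scaling.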

When and how to transition from experimentation to commitment

"Commitment" doesn't mean "we will publish forever no matter what."

It means:

- You stop treating content like a side quest

- You invest enough to learn faster and compound

- You build a simple content operating system

Most teams should commit in phases:

- Phase 1: Experimentation (prove early signal)

- Phase 2: Commitment (build consistency plus scale production)

- Phase 3: Optimization (improve conversion, refresh winners, expand clusters)

Common signals that it's time to scale content production

You're ready to commit when you see multiple signals together:

- Content cadence is stable enough that increasing volume won't collapse quality

- You're seeing compounding indicators: more pages ranking, more branded search, more returning visitors

- Content starts appearing in real revenue workflows: sales mentions, demo form notes, "I read your article on X"

- Your topic strategy is sharpening: you know which themes drive the best leads

- Internal linking and site structure start to matter because you have enough content

A simple "yes or no" moment: If you paused content for a month, would you expect your pipeline or inbound interest to dip in 1–3 months?

If the honest answer is "yeah, probably," you're already committed. You just haven't admitted it.

Avoiding common pitfalls in making the commitment

Most content commitments fail for boring reasons:

- Scaling production before you've nailed distribution

- Scaling volume while positioning is still fuzzy

- Chasing vanity metrics (traffic without intent, engagement without conversions)

- Ignoring channel category mismatch (measuring a brand-building channel like direct response)

- No documentation, so you repeat the same mistakes

- Siloed execution: writer doesn't know the product, SEO person doesn't know the ICP, founder has no time to review

- Endless "growth experiments" instead of building the base

At some point you need fewer experiments and more reps. Content rewards consistency more than cleverness.

Role of AI and automation in accelerating content commitment and scaling

AI can help you commit faster, if you treat it like an operations lever and not a magic content button.

The goal of AI-assisted workflows is to improve speed, consistency, coverage and repeatability. Not to remove humans entirely (especially in B2B where nuance and credibility matter).

How automation supports consistency and frequency without growing headcount

The real bottleneck in content isn't typing words. It's the workflow around the words.

Common time sinks: picking topics, creating briefs, editing for clarity and brand voice, adding internal links, formatting, publishing, repurposing for distribution.

Automation helps by turning content into a system: a repeatable pipeline from idea → draft → QA → publish, a consistent cadence so you don't rely on motivation, and a place to store positioning, ICP pains and brand voice so content doesn't drift.

This matters for commitment because your decision often hinges on: "Can we keep this up?"

If automation reduces effort without reducing quality your impact-to-effort ratio improves—one of the strongest commit signals.

Maintaining brand voice and quality with AI content

If you're using AI-generated content at scale, quality control can't be "I'll skim it when I have time." You need guardrails.

Practical quality rules:

- Every article must reflect a specific persona and stage of awareness

- Every article must make at least one non-obvious point (something your team actually believes)

- Every article must have a clear next step matching intent

- Every article must be reviewed by someone who understands the product and customer reality

AI is great at first drafts, variations, structure, summaries and repurposing. Humans are essential for truth checking, original POV, sharp positioning and making it not sound like everyone else.

Hybrid wins.

Platforms built around context-first generation—where your positioning, personas and voice rules are stored as a reusable source of truth—help output stay consistent even as volume increases. The principle: systematize your context so quality doesn't collapse when you scale.

Post-commitment monitoring and sustainable growth framework

Most teams think the hard part is deciding to commit. It's not.

The hard part is staying committed long enough for compounding returns—and knowing when the channel is getting tired.

Post-commitment, your job shifts from "Is this working?" to "What's working best?", "How do we compound it?" and "Are we still aligned with the market?"

Key KPIs and feedback loops to track after commitment

Post-commitment KPIs should help you protect what's working, improve conversion efficiency and spot decay early.

A practical monitoring set:

- Organic traffic trend by content cluster (not just whole site)

- Impressions and clicks for priority topics (Search Console is enough)

- Content → product page click-through rate

- Content → signup or demo conversion rate (pick one primary)

- New vs returning visitors (returning is a strong brand plus trust signal)

- Sales/customer feedback loop (monthly): what objections came up that content could address?

- Content production health: cadence hit rate, review time, bottleneck tracking

You also need a rhythm:

- Weekly: production plus light KPI check

- Monthly: content performance evaluation plus experiment planning

- Quarterly: strategy refresh (clusters, positioning, GTM alignment)

This prevents drifting into "we publish but we don't know why."

When to reassess or pivot away from content marketing

You don't pivot because one post flops. You pivot because the channel's return curve flattens and stays flat.

Signs it's time to reassess:

- Organic traffic is flat for 3+ months despite consistent publishing and basic SEO hygiene

- New content isn't earning impressions even after 60–90 days

- Your best-performing content is off-ICP (brings the wrong people)

- Conversion rates from content are declining as volume increases—quality drift

- The team is burning out and automation or process improvements aren't fixing it

Before pivoting entirely, try a focused reset: tighten ICP targeting and topic selection, refresh and consolidate older posts that are close to ranking, improve internal linking and conversion paths, and add distribution so content isn't relying on Google alone.

If you do that for a quarter and still see no movement, another channel category (outbound, partnerships, community-led growth) should likely take priority.

That's not failure. That's GTM channel validation working as intended.

Decision-support tools: commitment checklist and founder readiness self-assessment

You don't need more advice. You need a tool you can use on a Monday morning.

Commitment decision checklist

Use this to decide: keep experimenting, commit or pivot. Answer each yes or no.

- We've published consistently for 8–12 weeks (at least 80% of planned cadence)

- Organic impressions or sessions are trending up over 2–3 months

- More than one piece of content is performing (not a single outlier)

- We can identify at least one conversion path from content and track it consistently

- We have at least light attribution in place (UTMs, self-reported "how did you hear," or content-influenced tracking)

- Our impact-to-effort ratio is improving month over month

- We have a documented experiment log of what we tested and learned

- We know which 2–3 topic clusters best match our ICP and product value

- We have a clear owner for content operations (even if part-time)

- The team believes we can sustain this for the next 2 quarters without burnout

How to interpret:

- 8–10 yes: Commit and scale

- 5–7 yes: Keep experimenting but tighten the system (measurement, cadence, topic focus)

- 0–4 yes: Pause and redesign the approach or pivot channels
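The interpretation bands translate directly into a scoring helper. The function name is invented for illustration, and the sample answers are hypothetical; the bands are the ones listed above.

```python
def interpret_checklist(answers: list[bool]) -> str:
    """Map ten yes/no checklist answers to one of the three outcomes."""
    if len(answers) != 10:
        raise ValueError("the checklist has exactly 10 items")
    yes_count = sum(answers)
    if yes_count >= 8:
        return "commit and scale"
    if yes_count >= 5:
        return "keep experimenting, but tighten the system"
    return "pause and redesign the approach, or pivot channels"


# Hypothetical team answers, in checklist order.
answers = [True, True, True, True, False, True, False, True, True, False]
print(f"{sum(answers)} yes:", interpret_checklist(answers))
```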

Founder and team capacity self-assessment

Rate each 1–5.

- Ownership: We have a clear owner who can protect content time weekly

- Expertise: We can source real insights (customers, product, data, POV) regularly

- Workflow: Draft → review → publish is predictable and not chaotic

- Energy: Creating content is not actively draining the team every week

- Patience: We can commit for 6 months without panicking at week 3

- Cross-functional support: Sales, product or customer success can feed topics and feedback

- Tooling: We have a system to reduce busywork (planning, briefs, editing, distribution)

- Quality bar: We know what "good" means and can enforce it at speed

Interpretation:

- Mostly 4–5: You're operationally ready to commit

- Mostly 3: You can commit, but you need process fixes or automation to prevent stalls

- Mostly 1–2: Don't scale yet. Fix capacity and workflow first or content will become a recurring failure loop
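"Mostly" is left to judgment above. One way to make it concrete, purely as an assumption, is to read it as "more than half of the ratings." Mixed results that fit neither extreme fall into the middle outcome here, which is also an assumption.

```python
def readiness(ratings: dict[str, int]) -> str:
    """Interpret 1-5 self-assessment ratings, reading 'mostly' as more than half."""
    values = list(ratings.values())
    if not values or any(not 1 <= value <= 5 for value in values):
        raise ValueError("provide ratings between 1 and 5")
    high = sum(1 for value in values if value >= 4)
    low = sum(1 for value in values if value <= 2)
    if high > len(values) / 2:
        return "operationally ready to commit"
    if low > len(values) / 2:
        return "fix capacity and workflow before scaling"
    return "commit, but plan process fixes or automation"


# Hypothetical team ratings for the eight dimensions.
ratings = {
    "ownership": 4, "expertise": 5, "workflow": 3, "energy": 4,
    "patience": 4, "cross_functional_support": 3, "tooling": 2, "quality_bar": 4,
}
print(readiness(ratings))
```

Even when the overall result is positive, the lowest-rated dimensions (tooling and workflow in this example) are where the first fixes should go.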

Ready to confidently commit to content marketing? See how to apply a structured decision framework today

If you only do one thing after reading this, do this:

Copy the Commitment Decision Checklist into a doc, score it honestly with your team, and pick one outcome for the next 90 days: keep experimenting, commit and scale, or pivot.

Then make it real with a calendar: set a cadence you can actually hit, set one monthly measurement review, and set one feedback loop with sales or customers.

If you're finding that commitment is less about ideas and more about execution drag—briefs, edits, internal linking, publishing, repurposing—this is where an end-to-end content automation platform can help. DeepSmith is built for that "we need consistency without adding headcount" stage: context-first content generation anchored to your brand positioning and voice, a multi-agent production pipeline that handles research through publishing, and built-in distribution asset creation so the channel is easier to sustain once the signals say it's working.