Let me guess: your competitor just got cited in a Perplexity answer your buyer was reading right before they hit your comparison page. You didn't. And it stings, right? It wasn't because their product is better. It was because their page gave the AI engine something verifiable to work with, and yours just gave it marketing copy.

Look, this is a solvable problem. But it's not a quick "add a few outbound links" patch job. I've seen teams try that, and it never works. Making your pages truly citation-friendly for AI is an architecture decision. It's about building a repeatable system of claims, evidence, and honest disclosures right into your page templates. Then you have to maintain it, just like any other part of your technical SEO stack.

I'm handing you the system we built to solve this. It includes a claim taxonomy, placement maps for your pages, audit tools, and a maintenance workflow. The goal isn't to make your pages look like a journal article. It's to get pages that both convert and get cited.

What does "citation-friendly" mean for product and comparison pages (not blog posts)?

Blog posts have it easy. They get cited by being useful and well-organized. But commercial pages have a much harder job. They need to earn citations and close deals. That tension is real, and most of the advice I see online completely ignores it.

So what does citation-friendly mean in a commercial context? It's really about three things all working together.

The 3 requirements: verifiable claims, extractable formatting, and honest disclosure

Verifiable claims means that every fact a buyer might question (a performance benchmark, a compliance cert, a pricing tier) can be traced back to a source that has a name, a date, and a link. No more "leading analysts say" or "studies show." We have to do better than that.

Extractable formatting is how the AI engine finds the claim and the evidence without having to guess. This is about being really deliberate with your page structure. Think claim-first sentences, proof placed right next to the assertion, and a consistent HTML structure.

Honest disclosure means you're upfront about what's objective fact, what's just your positioning, and what's your opinion. Both AI engines and actual human buyers are (rightfully) skeptical of pages that disguise marketing fluff as hard research. When you label your opinion as opinion, you actually build trust.

How product/comparison pages fail AEO in the real world

I see the same mistakes over and over. The most common one is the uncited superlative. We've all written them: "the fastest," "the most secure," "enterprise-grade." They're everywhere, and they almost never have a shred of evidence. An AI can't cite a vague claim with no source to check.

Second, the proof is always buried. I once saw a page where the SOC 2 badge was in a footer-adjacent logo farm, totally disconnected from the security claims made way up in the hero section. An AI engine scanning for a claim-evidence pair is never going to connect those dots.

Third, comparison tables almost never explain their work. You put a checkmark under your column and an X under a competitor's. Why? How did you decide that? If there's no methodology, an AI has nothing to trust, and it will just ignore you.

Which types of claims on commercial pages need citations—and which don't?

This is where most teams get stuck. They either do too little (and cite nothing) or way too much (and turn their product page into a bibliography). What you need is a taxonomy, basically a clear decision rule for every type of claim you make.

"Must-cite" claims (performance, security, compliance, customer outcomes, market statements)

For these categories, there's zero tolerance for naked assertions. If you say it, you have to back it up with a source.

Performance claims: Stuff like "Reduces time-to-close by 40%" or "Processes 10,000 requests per second." These need a date, a clear methodology, and a link. That could be your own published benchmark, a case study, or a third-party audit.

Security and compliance claims: SOC 2 Type II, GDPR compliance, ISO 27001. Your B2B buyers are going to verify these anyway, so make it easy. AI engines give these a lot of weight, especially when they link directly to the certificate or trust documentation.

Customer outcome claims: "Teams using [Product] save 3 hours per week." You have to attribute this, even if it's just to your own case study. "According to a case study we published in 2024" is something an AI can audit. A random statistic floating on the page is not.

Market statements: "The SaaS security market is growing 22% annually." That data isn't yours. Cite the analyst report or research by name and date.

"Should-cite" claims (comparisons, best-for statements, methodology)

These are not quite at the "must" level, but they're close, especially on your comparison pages.

When you say your product is "best for mid-market teams," is that just your positioning (which is fine, just label it) or is it a conclusion from your analysis? If you're claiming it's based on a feature comparison, you need to show your work.

Your comparison criteria, things like "we evaluated tools on five dimensions: X, Y, Z," need a methodology section. It can be brief. An AI can cite a page that says "based on our evaluation of 12 tools using these criteria..." It can't do anything with "we think we're the best."

"Don't-cite" content (positioning, opinions, vision) and how to label it honestly

Not everything needs a source. Your company philosophy, your market vision, your take on the category: those are all opinions. And that's okay! Treating them like opinions is far more trustworthy than dressing them up with fake objectivity.

The fix is simple labeling. Just add "We believe..." before a vision statement. Or "Our perspective:" before you share your take on the market. This clarifies what kind of claim you're making, which is exactly what AI engines need to do their job.
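To make the taxonomy something a team can actually apply, here's a minimal sketch of it as a lookup table. The claim-type keys and the `citation_rule` function are hypothetical names I made up for illustration; the three tiers are the ones described above.

```python
from enum import Enum


class CitationRule(Enum):
    MUST_CITE = "must-cite"
    SHOULD_CITE = "should-cite"
    LABEL_AS_OPINION = "don't-cite, label as opinion"


# Hypothetical claim-type keys mapped to the three tiers described above.
CLAIM_RULES = {
    "performance": CitationRule.MUST_CITE,
    "security_compliance": CitationRule.MUST_CITE,
    "customer_outcome": CitationRule.MUST_CITE,
    "market_statement": CitationRule.MUST_CITE,
    "comparison": CitationRule.SHOULD_CITE,
    "best_for": CitationRule.SHOULD_CITE,
    "methodology": CitationRule.SHOULD_CITE,
    "positioning": CitationRule.LABEL_AS_OPINION,
    "opinion": CitationRule.LABEL_AS_OPINION,
    "vision": CitationRule.LABEL_AS_OPINION,
}


def citation_rule(claim_type: str) -> CitationRule:
    """Return the rule for a claim type, or raise if the type is not in the taxonomy."""
    try:
        return CLAIM_RULES[claim_type]
    except KeyError:
        raise ValueError(f"claim type not in taxonomy: {claim_type!r}") from None


print(citation_rule("performance").value)  # must-cite
print(citation_rule("vision").value)       # don't-cite, label as opinion
```

Raising on an unknown claim type is a design choice in this sketch: it forces someone to extend the taxonomy on purpose instead of letting a new kind of claim slip through unclassified.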

Source hierarchy: what counts as strong evidence for commercial claims

Not all sources are created equal. I've found this rough order of trust to be a good guide for my teams:

- Peer-reviewed or third-party audited data: Think Gartner, Forrester, government data, academic research, or independent security audits. This is the gold standard.

- Your own published primary research: This includes your own surveys, benchmarks, and case studies, as long as you name your methodology and sample.

- G2, Capterra, or similar review platforms: You can attribute aggregate ratings and the total number of reviews.

- Reputable trade publications: TechCrunch or other industry press are good sources. Just make sure you include the publication date.

- Competitor and third-party commercial pages: You can use these for factual claims (like their pricing or feature list), but you have to attribute it and include the date you verified it.

And a simple rule of thumb: if you'd be embarrassed to name the source, don't use it.
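If you want the hierarchy to be usable in tooling, it can be sketched as an ordered enum. This is only an illustration under my own assumptions: the tier names and the `strongest_source` helper are hypothetical, and the ordering simply follows the list above.

```python
from enum import IntEnum


class SourceTier(IntEnum):
    """Lower number = stronger evidence, following the order in the list above."""
    THIRD_PARTY_AUDITED = 1
    OWN_PRIMARY_RESEARCH = 2
    REVIEW_PLATFORM = 3
    TRADE_PUBLICATION = 4
    COMMERCIAL_PAGE = 5


def strongest_source(candidates: list[tuple[str, SourceTier]]) -> tuple[str, SourceTier]:
    """Return the candidate with the strongest tier (the first one wins on ties)."""
    if not candidates:
        raise ValueError("no candidate sources to choose from")
    return min(candidates, key=lambda candidate: candidate[1])


# Hypothetical candidates for a single security claim.
candidates = [
    ("Aggregate G2 rating", SourceTier.REVIEW_PLATFORM),
    ("Independent security audit", SourceTier.THIRD_PARTY_AUDITED),
    ("Trade press article", SourceTier.TRADE_PUBLICATION),
]
print(strongest_source(candidates)[0])  # Independent security audit
```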

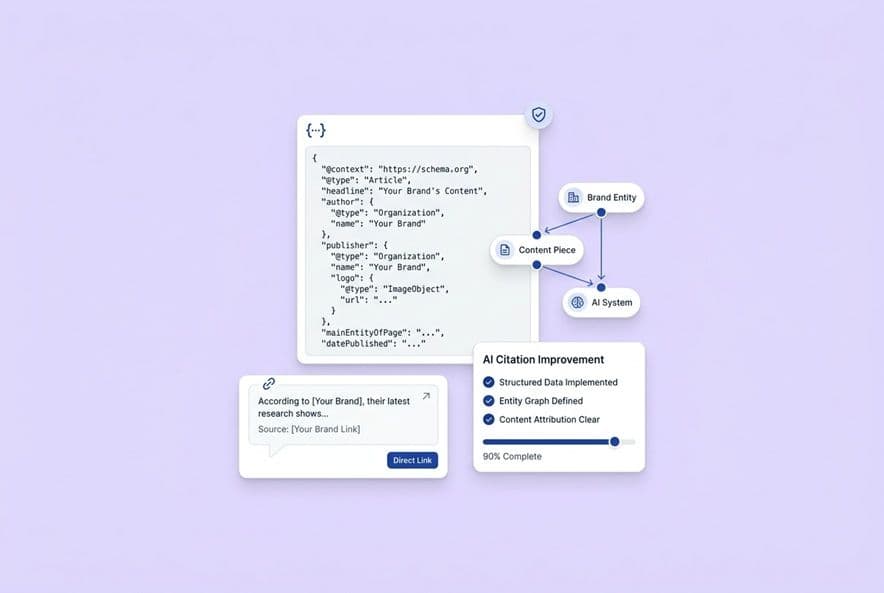

How do you structure citations so AI engines can actually extract and reuse them?

This is where your AEO efforts meet information architecture. It sounds technical, but it's really just about being organized. AI engines look for claim-evidence proximity, consistent structure, and clear attribution. If you give them that, they can cite you.

Write at the "claim level": one claim, one proof path

The easiest unit of content for an AI to understand is a single sentence that makes one specific claim and immediately shows its origin. The pattern is simple: claim → evidence → attribution.

Here's an example: "[Product]'s uptime averaged 99.97% in 2023 (based on our independently published status page data, January–December 2023)."

See that? One claim, one clear path to the proof. Everything an AI needs is in that one sentence. Now compare that to "Our platform is highly reliable." An engine can't do a thing with that. You need to apply this thinking at the sentence and paragraph level, not just the page level.
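The claim → evidence → attribution pattern is regular enough to template. Here's a rough sketch, assuming you store each claim as a small record; the `Claim` fields and the `render` function are hypothetical names, and the sentence format just mirrors the uptime example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    statement: str  # the specific, checkable claim
    evidence: str   # where the number comes from
    period: str     # the date or date range the evidence covers


def render(claim: Claim) -> str:
    """Render one claim with its proof path in the same sentence."""
    return f"{claim.statement} (based on {claim.evidence}, {claim.period})."


uptime = Claim(
    statement="[Product]'s uptime averaged 99.97% in 2023",
    evidence="our independently published status page data",
    period="January–December 2023",
)
print(render(uptime))
```

Because all three fields are required, a writer can't produce the sentence without supplying the evidence and the period, which is the whole point.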

Placement rules: keep evidence close to the claim (and consistent)

An AI engine won't reliably scroll to the bottom of a page, find a reference, and match it to a claim you made 800 words earlier. That's just not how these systems tend to work. The evidence has to live right next to the claim, either in the same paragraph or flagged with a numbered reference that points to a block on the same page.

Consistency is also huge. If you're using footnotes on one part of the page, inline links on another, and reference blocks somewhere else, you're just creating a mess for an extractor. This is a template decision, not something you should decide paragraph by paragraph. Pick one system and stick with it.

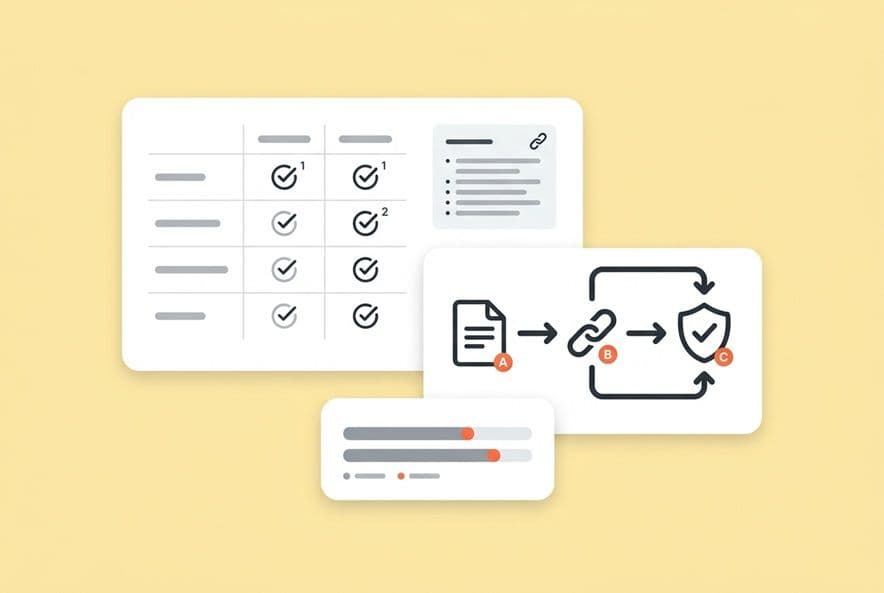

Citation format options for commercial pages (inline links vs. footnotes vs. endnotes)

Inline hyperlinks: These work best for claims where clicking is a natural next step, like linking to a G2 page, a certificate, or an analyst report. They're simple and don't really hurt conversion, but you can't add context like publication dates in the link itself.

Footnote-style markers (superscript or bracketed numbers): I love these for comparison tables and sections that are heavy with claims. They keep the reading experience clean, but you have to have a corresponding reference block on the same page that's easy to find.

Reference blocks / "Sources & methodology" sections: In my experience, this is the strongest signal you can send to an AI, as long as the claims on the page point to it with markers. It's a clearly labeled section, usually at the bottom, that lists every source with its name, date, URL, and the claim it supports. This pattern closely resembles the sourcing conventions of academic and research content, which AI engines appear to treat as trustworthy.
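A reference block is easy to generate from structured data. Below is a small sketch, assuming each source is stored with the four fields just listed (name, date, URL, supported claim). The `Source` record, the `render_reference_block` function, and the example.com URLs are all placeholders of mine, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    date: str
    url: str
    claim: str  # the on-page claim this source supports


def render_reference_block(sources: list[Source], label: str = "Sources & Methodology") -> str:
    """Render a plain-text numbered reference block under a simple label."""
    lines = [label]
    for number, source in enumerate(sources, start=1):
        lines.append(f"{number}. {source.name}, {source.date}, {source.url} (supports: {source.claim})")
    return "\n".join(lines)


# Hypothetical entries; the URLs are placeholders.
sources = [
    Source("SOC 2 Type II report", "2024", "https://example.com/trust", "SOC 2 Type II certified"),
    Source("Company Benchmark Report", "2023", "https://example.com/benchmark", "35% faster onboarding"),
]
print(render_reference_block(sources))
```

The numbers in this list are what your footnote markers would point to, so generate both from the same data if you can.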

Reference blocks that work: "Sources & methodology" sections that don't kill conversions

I hear this fear all the time: a "Sources" section looks too academic and will confuse buyers. In my experience, especially with B2B buyers, the opposite is true. It signals that your claims are real and that you're not afraid to show your work.

Placement and labeling are key. Put it below your main call-to-action. Label it something simple, like "Sources & Methodology" or "How We Built This Comparison." And keep it scannable with a numbered list format showing the source name, date, and link.

This is a pain point we ran into so often that we built a solution for it into our own tool, DeepSmith. Our SEO + AEO pipeline handles the structure and metadata during content creation, so the groundwork for this stuff is in place from the start, not left for a messy cleanup before you publish.

Where should citations live on a SaaS product page vs. a comparison page?

The layout of the page and the journey a buyer takes across it should dictate your citation strategy. You have to put the trust signals where they'll have the most impact.

Product page placement map (pricing claims, feature claims, security claims, customer proof)

- Hero section: Absolutely no citations here. Your headline is your positioning. Don't undermine it with a footnote.

- Feature claims section: Cite specific capabilities inline, for example, "supports up to 50,000 API calls per month (see pricing documentation)." But only do this for claims a skeptical buyer would actually want to verify.

- Security and compliance section: This is critical. Every single certification badge must link to the live certification document or a trust page. A "SOC 2 Type II" logo without a link is a red flag, not a proof point.

- Customer proof section: Link your testimonials to the original G2 review, case study, or press release. Attribution is what makes social proof credible.

- Pricing section: If you mention third-party pricing, you must date-stamp it: "As of [Month Year], based on publicly listed pricing."

- Reference block: This lives below your primary CTA and contains the sources for every cited claim on the page.

Comparison page placement map (category definitions, criteria, table cells, "why us" section)

- Category framing (intro section): If you're defining the category for the reader, either cite your source or explicitly label it as your own unique framing.

- Comparison criteria: Explain how you chose the criteria. This methodology disclosure is what separates a trustworthy comparison from a self-serving sales page.

- Comparison table: Every single cell with a factual claim (like pricing or feature availability) needs attribution. Use footnote markers inside the table.

- "Why us" / positioning section: This is your opinion. Label it as such. "Our perspective on where [Product] fits best:" works great.

- Reference block: This is required and should be even more detailed than on a product page. List every competitor source with the date you verified it and the URL.

How to cite inside comparison tables without turning them into link farms

This is a common worry, but the solution is simple. Use superscript or bracketed footnote numbers in the table cells. Then, create a numbered reference list directly below the table. This keeps the table itself clean and readable, while the reference list handles the attribution.

For a SaaS comparison, I also like to add a general "Methodology" note right above the table. Something like, "Feature data sourced from public documentation as of [date]." Then you only need to use footnote numbers for the specific claims that require a more detailed source, like proprietary data or a third-party audit.
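Here's a minimal sketch of that footnote approach, assuming each table cell is stored as a pair of text and an optional source. The function name and the sample cells are hypothetical. Cells without a source are treated as covered by the general methodology note, and cells that share a source share a number.

```python
from typing import Optional


def add_footnotes(cells: list[tuple[str, Optional[str]]]) -> tuple[list[str], list[str]]:
    """Return (cell texts with [n] markers, numbered reference lines).

    A source of None means the cell relies on the general methodology note
    and gets no marker. Cells with the same source reuse the same number.
    """
    numbers: dict[str, int] = {}
    marked: list[str] = []
    for text, source in cells:
        if source is None:
            marked.append(text)
            continue
        number = numbers.setdefault(source, len(numbers) + 1)
        marked.append(f"{text} [{number}]")
    references = [f"[{number}] {source}" for source, number in numbers.items()]
    return marked, references


# Hypothetical cells from one row group of a comparison table.
cells = [
    ("$49 per seat", "[Competitor] pricing page, verified [Month Year]"),
    ("SSO: yes", None),
    ("99.9% uptime", "Third-party audit, [Year]"),
    ("$99 per seat", "[Competitor] pricing page, verified [Month Year]"),
]
marked, references = add_footnotes(cells)
print(marked)      # ['$49 per seat [1]', 'SSO: yes', '99.9% uptime [2]', '$99 per seat [1]']
print(references)
```

Reusing one number per source is what keeps the list under the table short enough that nobody mistakes it for a link farm.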

How SaaS citation differs from physical product pages

The principles are the same (verifiable claims and clear attribution), but the evidence is different. A product page for a blender might cite UL safety certifications or ASTM materials testing standards. Your SaaS page has different proof points. You'll be focused on software-specific evidence: performance benchmarks (like uptime or API response time), security attestations (SOC 2, ISO 27001), integration docs, and customer outcomes from case studies. Don't just copy the citation patterns from a Wirecutter review. Your B2B buyer needs proof of business value and technical reliability.

UX best practices: disclosure labels, tooltip patterns, and "show sources" toggles (conceptually)

Here are a few patterns I've seen that keep pages skimmable while still providing the proof:

- Disclosure labels: A small line of gray text near a claim, like "Verified by [Source] as of [Date]." It's not intrusive, but an AI can read it.

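- Tooltip-style attribution: If your CMS can handle it, a hover state that reveals the source is a really clean way to avoid cluttering the layout. Just make sure the source text is present in the page's HTML rather than loaded only on hover, or a crawler may never see it.

- "Show sources" toggles: An expandable section for your reference block keeps the conversion elements front and center while still making all your sources accessible. The same caveat applies: the collapsed content should still be in the HTML.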

How do you balance citation density with conversion UX?

I get it. A product page with a footnote on every sentence reads like a legal deposition and probably kills conversions. Here's how you stay on the right side of that line.

The "minimum viable evidence" rule for each page section

For each section, focus on one primary citation anchor. It should be the strongest single source that validates the most important claim in that section. Ask yourself, "If a prospect challenged me on this section, what one source would shut them up?" That's the source that has to go in. Everything else is just optional depth.

As a general rule, proof-heavy sections like security and compliance need more citations because the stakes are higher. Feature sections can be lighter. Link when a claim is something a user would actually check, but skip it when it's self-evident from using the product.

What to do when a citation interrupts flow (rewrite patterns that keep the sentence clean)

- Parenthetical attribution: My favorite. It looks like this: "...reducing onboarding time by 35% (Company Benchmark Report, 2023)." It stays in-line and is minimally disruptive.

- Footnote marker: Just a superscript number¹ after the claim, with the full attribution in the reference block at the bottom. This is best for tables and really dense sections.

- Evidence block: This is a short, indented block that comes right after a paragraph. It might say: "Source: [Name], [Date], [URL]."

What not to do: overlinking, weak sources, and proof dumping

- Overlinking: Don't link every other phrase. It looks like spammy SEO manipulation, not genuine attribution.

- Weak sources: Citing a competitor's marketing page to prove their limitations isn't credible. But citing an aggregate G2 rating is. Know the difference.

- Proof dumping: Stacking five testimonials in one section without connecting them to specific claims is just noise. Evidence needs a claim to support.

How do you handle competitor citations, biased sources, and conflicting information?

If you handle them the right way, these tricky edge cases can actually build trust instead of eroding it.

Citing competitor pages and third-party commercial content safely

You absolutely can cite competitor pages for factual, checkable information, like their stated pricing, feature list, or compliance docs. You're not endorsing them; you're just using their own published data.

The rules are simple: verify the claim is accurate when you cite it, and include a verification date ("as listed on [Competitor] pricing page, [Month Year]"). The date stamp makes clear what was true when you checked, so you aren't on the hook for tracking their every update between your own refresh cycles.

One thing to avoid is citing competitor blogs for broad, category-level claims. "According to [Competitor's Blog]" is a weak source for an objective statement. It is, however, a perfectly fine source for "here's how [Competitor] describes their own approach."

When sources disagree: how to write "best available truth" without sounding evasive

So, you find two sources that disagree on a market size. Or one benchmark says onboarding takes 45 days, and another says 21. What do you do? You cite both and you explain the context.

"Estimates vary. [Source A] puts the average at 45 days for enterprise deployments, while [Source B] reports 21 days for mid-market segments. Our own customer data shows [X]." That's not being evasive; it's being accurate. And an AI can use it because it reflects the real, messy evidence.

Some language patterns I use for this kind of uncertainty are: "as reported by [Source] in [Year]," "varies by deployment model," or "our analysis of public data suggests."

Date-stamping and "as-of" disclosures for fast-changing product facts

In SaaS, facts change constantly. Pricing, features, integrations. Any claim that could be outdated in six months needs an "as of [date]" disclosure.

Our internal best practice is to store a "last verified" date next to each citation, separate from the source's own publication date. That verification date tells us if our evidence is still good.
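As a sketch of what that record might look like, here's one way to keep the two dates separate and derive the "as of" label from the verification date. The field and function names are hypothetical; adapt them to whatever your CMS or spreadsheet actually stores.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Citation:
    source_name: str
    published: date      # the source's own publication date
    last_verified: date  # when we last confirmed the claim still holds


def as_of_label(citation: Citation) -> str:
    """Build the on-page disclosure from the verification date, not the publication date."""
    return f"As of {citation.last_verified.strftime('%B %Y')}"


def days_since_verified(citation: Citation, today: date) -> int:
    """Number of days since someone last checked this citation."""
    return (today - citation.last_verified).days


# Hypothetical record for a competitor pricing claim.
pricing = Citation("[Competitor] pricing page", date(2023, 5, 1), date(2024, 3, 15))
print(as_of_label(pricing))                           # As of March 2024
print(days_since_verified(pricing, date(2024, 6, 13)))  # 90
```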

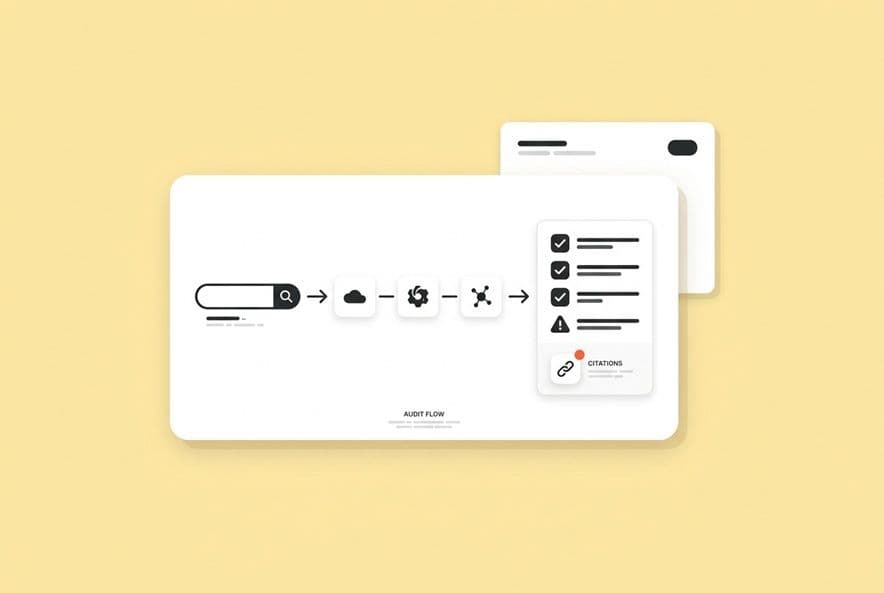

How do you audit and maintain citation-friendliness across a whole site?

Running an audit doesn't require a special tool to get started, but you do need to know where to look.

A citation-friendliness audit checklist (structure, sources, disclosures, freshness)

For each of your key product or comparison pages, check these four things:

- Structure: Does every "must-cite" claim have a source? Are the citations close to the claims? Is the format consistent across the page?

- Sources: Are your sources from the top of the hierarchy we talked about? Are you only using competitor sources for facts?

- Disclosures: Are opinions clearly labeled? Are there "as of [date]" disclosures on facts that change? Is your methodology documented?

- Freshness: Are any sources older than 18 months? Are any of the links broken?
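Parts of this checklist can be automated once claims are tracked as data. The sketch below covers three of the four checks (structure, disclosures, freshness); judging source quality still takes a human. The `ClaimRecord` fields and the `audit` function are hypothetical, and the 18-month threshold is the one from the checklist.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ClaimRecord:
    text: str
    must_cite: bool = False
    source_date: Optional[date] = None  # None means no source is attached
    is_opinion: bool = False
    labeled_as_opinion: bool = False


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from earlier to later."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1
    return months


def audit(claims: list[ClaimRecord], today: date, max_source_age_months: int = 18) -> list[str]:
    """Return one finding per problem, prefixed with the checklist area."""
    findings: list[str] = []
    for claim in claims:
        if claim.must_cite and claim.source_date is None:
            findings.append(f"Structure: no source for '{claim.text}'")
        if claim.is_opinion and not claim.labeled_as_opinion:
            findings.append(f"Disclosures: unlabeled opinion '{claim.text}'")
        if (claim.source_date is not None
                and months_between(claim.source_date, today) > max_source_age_months):
            findings.append(f"Freshness: source older than {max_source_age_months} months for '{claim.text}'")
    return findings


# Hypothetical claims from one product page.
claims = [
    ClaimRecord("Processes 10,000 requests per second", must_cite=True),
    ClaimRecord("The future of the category is agentic", is_opinion=True),
    ClaimRecord("Market growing 22% annually", must_cite=True, source_date=date(2023, 6, 1)),
    ClaimRecord("SOC 2 Type II certified", must_cite=True, source_date=date(2024, 9, 1)),
]
for finding in audit(claims, today=date(2025, 1, 15)):
    print(finding)
```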

Prioritization: which pages to fix first (high-intent, high-visibility, high-risk claims)

Fix your pages in this order: (1) the ones with the highest organic traffic, (2) pages that are already appearing in AI answers but aren't being cited, (3) pages with your highest-stakes claims (like pricing and security), and (4) your direct comparison pages.

The fastest way to prioritize is to know which pages are already getting attention from AI. This is another area where we built our own solution. DeepSmith's AI Visibility tools show us exactly which pages are earning AI citations and which competitor pages are winning. It lets us start the audit with real evidence instead of just a gut feel, so we can rank fixes by impact.
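The ordering above can be sketched as a sort. This is one possible reading, not a definitive rule: each page lands in the bucket of the first criterion it matches, and traffic breaks ties inside a bucket. The `Page` fields and the 10,000-visit cutoff for "highest organic traffic" are assumptions of mine, so pick a threshold that fits your site.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Page:
    url: str
    monthly_organic_visits: int
    in_ai_answers_but_uncited: bool = False
    has_high_stakes_claims: bool = False  # e.g. pricing or security claims
    is_comparison_page: bool = False


def priority_bucket(page: Page, high_traffic_threshold: int) -> int:
    """Bucket 1 is fixed first; a page takes the first criterion it matches."""
    if page.monthly_organic_visits >= high_traffic_threshold:
        return 1
    if page.in_ai_answers_but_uncited:
        return 2
    if page.has_high_stakes_claims:
        return 3
    if page.is_comparison_page:
        return 4
    return 5


def fix_order(pages: list[Page], high_traffic_threshold: int = 10_000) -> list[Page]:
    """Sort by bucket, then by traffic (highest first) within a bucket."""
    return sorted(
        pages,
        key=lambda page: (priority_bucket(page, high_traffic_threshold), -page.monthly_organic_visits),
    )


# Hypothetical pages.
pages = [
    Page("/vs/competitor-a", 900, is_comparison_page=True),
    Page("/security", 2_000, has_high_stakes_claims=True),
    Page("/product", 25_000),
    Page("/integrations", 4_000, in_ai_answers_but_uncited=True),
]
print([page.url for page in fix_order(pages)])
# ['/product', '/integrations', '/security', '/vs/competitor-a']
```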

Ongoing maintenance: link validation, refresh cycles, and change logs

Citation decay is a slow-motion trust killer. You have to set a maintenance cadence. We do quarterly link validation (automated checkers are great for this), a semi-annual review of all market data sources, and we keep a changelog entry for every single citation update. That changelog becomes your audit trail.
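For the link validation piece, a basic checker needs nothing beyond Python's standard library. Treat this as a sketch under assumptions: it sends HEAD requests, which some servers reject even when the page is fine, so expect a few false alarms to review by hand. The function names are mine.

```python
import urllib.error
import urllib.request
from typing import Callable


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status of a HEAD request, or 0 if the request fails outright."""
    try:
        request = urllib.request.Request(url, method="HEAD")
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # The server answered, but with an error status such as 404 or 500.
        return error.code
    except (OSError, ValueError):
        # DNS failure, timeout, refused connection, or a malformed URL.
        return 0


def broken_links(urls: list[str], get_status: Callable[[str], int] = http_status) -> list[str]:
    """Return the URLs whose status is not in the 2xx or 3xx range."""
    return [url for url in urls if not 200 <= get_status(url) < 400]
```

Calling `broken_links(citation_urls)` on the URLs from your reference blocks gives you the list to fix each quarter, and every fix becomes a changelog entry. The `get_status` parameter is there so you can swap in a fake lookup when testing, without touching the network.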

Dynamic/personalized pages: how to keep evidence stable when the page changes

If your pages show different content to different segments, you've got a governance headache. The claim you make to an enterprise buyer needs its own evidence trail, separate from the claim you make to an SMB buyer. The fix is just simple governance. Treat each content variant as its own version with its own citation rules. And label the dynamic sections in your internal docs so reviewers know to check each variant.
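One way to picture "each variant gets its own evidence trail" is a per-variant map of claims to citations, plus a check that flags gaps. This is only a sketch; the variant names, claims, and the `uncited_claims_by_variant` function are hypothetical.

```python
from typing import Optional

# variant name -> {claim text -> citation, or None if nothing is attached yet}
VariantClaims = dict[str, dict[str, Optional[str]]]


def uncited_claims_by_variant(variants: VariantClaims) -> dict[str, list[str]]:
    """Return, for each variant, the claims that have no citation of their own."""
    gaps: dict[str, list[str]] = {}
    for variant, claims in variants.items():
        missing = [claim for claim, citation in claims.items() if not citation]
        if missing:
            gaps[variant] = missing
    return gaps


# Hypothetical variants of one product page.
variants: VariantClaims = {
    "enterprise": {
        "Deploys in 45 days": "Enterprise case study, 2024",
        "Supports SSO and SCIM": None,
    },
    "smb": {
        "Deploys in 21 days": "Mid-market benchmark, 2024",
    },
}
print(uncited_claims_by_variant(variants))  # {'enterprise': ['Supports SSO and SCIM']}
```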

In practice, variant-level governance breaks down because ownership is often fragmented. We built DeepSmith's Deep IQ + Content Studio to store claim boundaries and positioning by product and persona. That way, writers aren't just inventing facts that later need citations tacked on. The claim integrity is built into the brief before a single word is written.

Building a team workflow for citation ownership

This can't be a last-minute task for one person. It has to be a cross-functional responsibility. A simple ownership model can prevent things from drifting:

- Content/Product Marketing (Accountable): Owns the page, the claims, and the final sourcing.

- Subject Matter Experts / Product Team (Consulted): Verifies the accuracy of performance, feature, and security claims.

- Legal/Compliance (Consulted): Reviews high-risk claims (competitive, security) and advises on the disclosure language.

- SEO Team (Consulted): Advises on linking strategy and helps track AI citation visibility.

This process adds rigor, but it can slow down publishing. To keep up momentum, we use platforms like DeepSmith to automatically generate distribution assets with the Agent Library and tee up the next piece with Autowrite. This lets the team focus on getting the claims right without stalling the entire content pipeline.

Internal alignment: what to ask before you publish

Before you roll this out, you need to get your stakeholders aligned. Here's a checklist of questions to ask:

- For Legal/Compliance: "What's our risk tolerance for citing competitor data? What specific disclosure language do you need for our performance and competitive claims?"

- For Product/Engineering: "Who is the single source of truth for our performance metrics? How will we know when that data gets updated?"

- For SEO/Marketing Ops: "What's our process for tracking and fixing broken links? How are we going to monitor our AI citation rate?"

Getting these answers upfront turns what could be a contentious project into a defined, cross-functional process.

Build a repeatable citation system (not a one-time cleanup)

The brands that are consistently earning AI citations on their high-intent commercial pages aren't doing anything magical. They're just applying basic information discipline to content that, historically, got a pass on rigor.

The playbook is concrete. Build a claim taxonomy so your team knows what needs a citation. Design your page templates with citation placement baked right into the structure. Write at the claim level. Keep your evidence next to your claim. Use a reference block. Be honest about what's opinion. And date-stamp anything that's likely to change.

Then you just audit, prioritize by impact, and maintain it like you would any other technical SEO asset.

Here's your immediate next step. Take your highest-traffic comparison page and run it through the citation-friendliness checklist. Identify the two or three structural changes that would make every claim verifiable. Then do the same thing for your top product page. Just like that, you'll have two pages that have a real shot at being cited by the AI answers your buyers are reading, instead of sending that traffic to your competitor.