A business case for an AI content platform rests on three measurable outcomes: reducing the fully loaded cost per article (typically $400–1,200 when director-level time is included), increasing publishing cadence without adding headcount, and earning brand visibility in AI search results—where half of all consumers now look for answers. What follows is a fill-in-the-blank ROI model you can take to your leadership team tomorrow without doing a week of research first.

If you’re a content leader, I bet this sounds familiar. You have an SEO strategy. You have the tools. You have the writers. And yet, you’re still the one writing briefs at 9 pm, manually adding internal links on a Friday afternoon, and watching your content backlog grow faster than you can possibly publish.

The problem isn’t that you or your team aren’t working hard enough. The problem is you’re running a modern factory with hand-cranked tools and handoffs designed for chaos.

Let’s be real. The business case for an AI content platform isn’t about “writing faster.” That’s a side effect. The real win is getting rid of the soul-crushing operational cost buried in every single article: the briefing, the SEO review, the internal linking, the image sourcing, the formatting, the publishing, the distribution... all of it. It’s about building a system, an engine, that produces measurable outcomes in both Google rankings and the new world of AI citations.

What problem are you actually solving with an AI content platform (speed, cost, or visibility)?

Everyone says they want more speed. But speed is just a symptom. The real disease is that your content operation is a painfully manual assembly line pretending to be a strategy. I’ve seen it a dozen times. Every article ends up taking 8 to 12 hours of total work, spread across multiple people and tools, and most of that work has nothing to do with creative or editorial judgment.

The tell: if you're using 6 tools and still the bottleneck, you don't have an AI strategy—you have tool sprawl

Ahrefs for keywords. Surfer for on-page suggestions. Google Docs for briefs and drafts. ChatGPT for a little help here and there. Canva for images. Your CMS for publishing. And a Notion board pretending to be a content calendar.

Sound familiar? I thought so.

Each tool solves one tiny piece of the puzzle and then hands the problem right back to you. You are the human integration layer. That’s not a strategy, my friend. It's tool sprawl. The bottleneck isn't your team's output; it’s the miserable stitching work you personally have to do between every single tool in your stack.

The new requirement: SEO performance plus AI search visibility (mentions and citations)

Here’s the shift that completely changes the investment math: organic search is no longer the only game in town. AI search visibility is now a real thing we have to track. Are you the brand that gets mentioned when a buyer asks ChatGPT, Perplexity, or Google’s AI a question you should own?

If you’ve ever searched for your core use case in one of those tools and seen a competitor’s name pop up, you know the feeling I’m talking about. It’s a punch in the gut. Any business case you build today must account for winning in both channels.

Fill in the blank: what does one "publishable" article really cost you today?

This is the part most teams skip, and it’s the exact reason their pitch fails. Before you can talk about ROI, you need a baseline cost that you can defend. And I mean really defend.

Cost categories to include (that most teams ignore)

Most people just calculate the writer’s fee and call it a day. That’s a rookie mistake that misses more than half the real cost. Let's get honest about what actually goes into publishing one article.

- Brief writing: Who writes it, how long it takes, and what’s their hourly rate? (Hint: it’s often a very expensive person.)

- Keyword research and SERP review: Usually the SEO lead or you. More expensive time.

- Draft production: The writer's time, including all the back-and-forth for revisions.

- SEO review and optimization: Fiddling with header structures, keyword density, and chasing a score in a tool.

- Internal linking: Manually finding and adding links across your site. This alone is a 30- to 60-minute tax on every article.

- Image sourcing or creation: A designer's time, your time in Canva, or both.

- Formatting for CMS: The joy of copy-pasting, setting heading tags, updating the slug, and writing a meta description.

- Review and approval cycle: Getting feedback from stakeholders (and the time it takes to manage them).

- Publishing: The final work in the CMS and scheduling the post.

- Distribution: Writing the LinkedIn post, the newsletter blurb, the social thread... if it even happens.

And don’t forget the opportunity cost of all the distribution that you meant to do but never got around to. That one hurts.

Fill-in table: time, owner, fully loaded cost, and frequency

Do this. Seriously. Copy this into a spreadsheet and fill it in with your real numbers.

| Step | Owner | Avg. Time (hrs) | Fully Loaded Rate ($/hr) | Cost Per Article |
|---|---|---|---|---|
| Brief writing | [You / SEO lead] | ___ | $___ | $___ |
| Keyword research | [You / SEO lead] | ___ | $___ | $___ |
| Draft (writer) | [Writer] | ___ | $___ | $___ |
| Revision round(s) | [Writer + you] | ___ | $___ | $___ |
| SEO review | [You] | ___ | $___ | $___ |
| Internal linking | [You] | ___ | $___ | $___ |
| Image | [Designer / you] | ___ | $___ | $___ |
| CMS formatting + publishing | [You / writer] | ___ | $___ | $___ |
| Distribution assets | [You] | ___ | $___ | $___ |
| Total | | ___ hrs | | $___/article |

Every team I’ve walked through this exercise with lands somewhere between $400 and $1,200 per article once director-level time is properly accounted for. At four articles a month, that’s $1,600 to $4,800 a month right there.
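If a spreadsheet isn't handy, the same fill-in table can be sketched in a few lines of Python. This is a minimal sketch, not a standard costing method: the `Step` class, the hours, and the hourly rates are all placeholder assumptions, so swap in your own time logs and fully loaded rates.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    hours: float        # average time spent on this step per article
    hourly_rate: float  # fully loaded rate of whoever owns the step

    @property
    def cost(self) -> float:
        return self.hours * self.hourly_rate


def cost_per_article(steps: list[Step]) -> float:
    """Sum hours x rate across every production step."""
    return sum(step.cost for step in steps)


# Placeholder numbers only (assumptions, not benchmarks).
steps = [
    Step("Brief writing", 1.0, 120.0),
    Step("Keyword research", 1.0, 120.0),
    Step("Draft (writer)", 4.0, 60.0),
    Step("Revision round(s)", 1.0, 60.0),
    Step("SEO review", 0.5, 120.0),
    Step("Internal linking", 0.5, 120.0),
    Step("Image", 0.5, 80.0),
    Step("CMS formatting + publishing", 0.5, 60.0),
    Step("Distribution assets", 1.0, 120.0),
]

total_hours = sum(step.hours for step in steps)
per_article = cost_per_article(steps)
monthly = per_article * 4  # four articles a month, as in the example above

print(f"{total_hours:.1f} hrs, ${per_article:,.0f}/article, ${monthly:,.0f}/month")
```

With these placeholder inputs the total comes to 10 hours and $850 per article, or $3,400 a month at four articles. Notice how much of that sits on the most expensive hourly rate rather than on the writer.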

Baseline outputs: cadence, backlog gap, and opportunity cost of "topics you'll never get to"

Now for the gut-punch calculation.

- Current monthly cadence: ___ articles/month

- Identified topic backlog: ___ topics

- Months to clear backlog at current cadence: ___ months

- Topics you'll actually never get to: ___

That last number is your real opportunity cost. Those are ranking positions you’ll cede to competitors. Those are AI citation opportunities you’ll miss. Those are content coverage gaps that your competition will happily fill. That’s the real cost of staying put, and it absolutely belongs in your business case.
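The four blanks above reduce to simple arithmetic once you pick a planning horizon. Here is a rough sketch; `horizon_months` is a hypothetical parameter (the text does not define one), and the model assumes the backlog stops growing, which in practice makes the real gap worse than this estimate.

```python
import math


def backlog_gap(cadence_per_month: int, backlog_topics: int, horizon_months: int) -> dict:
    """Months needed to clear the backlog, and topics still unpublished at the horizon."""
    if cadence_per_month <= 0:
        raise ValueError("cadence_per_month must be positive")
    months_to_clear = math.ceil(backlog_topics / cadence_per_month)
    published_in_horizon = min(backlog_topics, cadence_per_month * horizon_months)
    return {
        "months_to_clear": months_to_clear,
        "never_reached": backlog_topics - published_in_horizon,
    }


# Placeholder inputs: 4 articles/month, 80 backlog topics, 12-month planning window.
result = backlog_gap(cadence_per_month=4, backlog_topics=80, horizon_months=12)
print(result)  # {'months_to_clear': 20, 'never_reached': 32}
```

In this made-up example, 32 of the 80 topics never ship inside the planning window. That is the number to put in front of leadership.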

What should be automated vs. kept human (so quality doesn't collapse)?

This is where most AI rollouts go off the rails. I’ve seen it happen. A team automates everything, the content becomes bland and generic, and six weeks later everyone’s arguing to go back to the old, slow process. The failure isn’t the AI. It's the lack of a clear plan for the division of labor.

Automate the assembly line (repeatable steps)

Anything that is process-heavy, repeatable, and doesn’t require your unique strategic brain is a candidate for automation. This includes things like:

- Research scaffolding and brief structure: Getting the basic topic context, SERP summary, and keyword targets organized.

- First draft generation: A draft with SEO elements and brand context baked in from the start.

- Internal link insertion: Automatically scanning and adding relevant links from your site during the draft stage.

- Metadata generation: Title tag, meta description, and slug.

- Image generation: Creating a cover image based on your visual guidelines.

- CMS publishing: Pushing directly to WordPress or Webflow without the copy-paste dance.

- Distribution asset generation: Creating a LinkedIn post, a newsletter section, and an X thread.

- Local SEO data: For businesses with physical locations, this means automating location-specific details and Google Business Profile (formerly Google My Business) signals.

This is the assembly line work. It should not require you. A true end-to-end platform connects these steps into a pipeline, which is what separates it from a simple writing assistant.

For example, a platform like DeepSmith Content Studio uses a multi-agent pipeline to do this. Research, briefing, drafting, editorial QA, voice humanization, internal linking, image generation, metadata, and CMS publishing all run in a sequence. Your team’s job is to review and approve, not to manually assemble all the pieces.

Keep humans on strategy, judgment, and claims

Automation handles the steps where there’s a knowable, correct answer. Humans must own the decisions that require judgment.

- Editorial stance and POV: What’s our unique take on this topic? What makes this article different?

- Audience-specific examples: Real customer scenarios, product nuances, and patterns you’ve observed.

- Differentiation from competitors: What do you know that they don’t? This is your secret sauce.

- Final factual review: Any claim that could damage your credibility if it's wrong.

- Strategic prioritization: Deciding which topics to tackle next and why.

These things are not automatable. If your platform is making these decisions for you, you don't have a speed gain, you have a looming quality problem.

Quality control checklist template (originality, voice, accuracy, ethics)

Run this checklist before you hit publish on anything. It takes 10 minutes and can save you from a world of hurt.

- Originality: Does this article have a distinct point of view, or is it just a summary of other summaries?

- Voice: Does this sound like us? Read the first three paragraphs out loud. You'll know.

- Accuracy: Are all product claims, data points, and named examples 100% verified?

- Claim boundaries: Does anything in here promise an outcome we can't reliably deliver?

- Competitive differentiation: Is there at least one part of this article a competitor couldn't have written?

- AEO structure: Is the article structured with clear, citable claims and scannable sections for AI engines?

This little checklist is the firewall between AI-assisted quality and AI-produced mediocrity.

How do you measure ROI for an AI content platform (without hand-wavy "time saved")?

"We'll save time" is not a business case. It’s a hope. Here's how to build a model your CFO will actually take seriously.

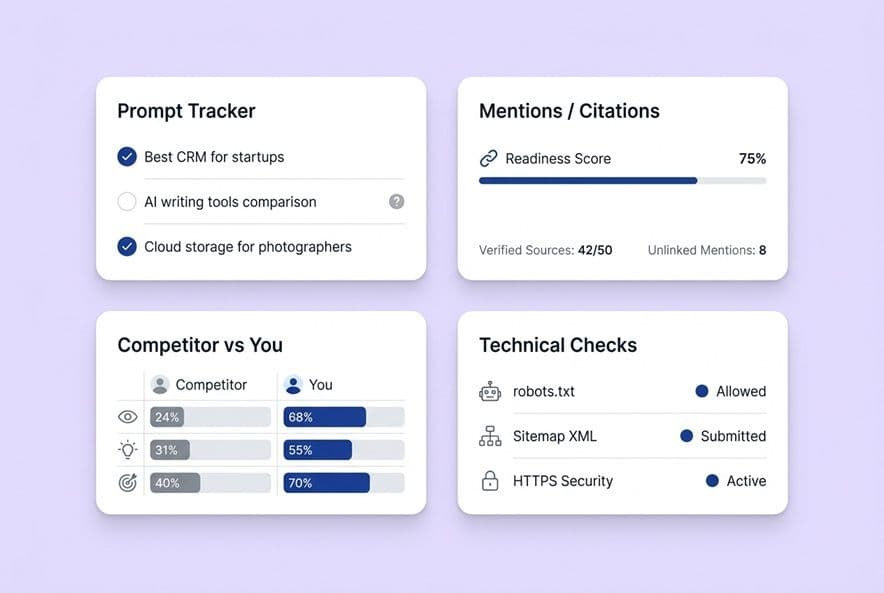

The three ROI buckets: production efficiency, SEO outcomes, and AI visibility

Don't just measure one thing. If you only talk about production speed, it sounds too internal. If you only talk about rankings, it sounds too slow. You need all three to tell the whole story.

- Production efficiency: Your cost per article, cycle time, content cadence, and backlog clearance rate.

- SEO outcomes: Organic traffic growth, keyword coverage expansion, and (most importantly) pipeline influenced by your content.

- AI visibility: Your brand mention and citation rate across AI platforms, plus your competitive citation share.

Standardized metrics to track (inputs → outputs → outcomes)

| Category | Metric | Frequency | Baseline → Target |
|---|---|---|---|
| Production | Articles published/month | Weekly | ___ → ___ |
| Production | Avg. cycle time (brief to publish) | Weekly | ___ days → ___ days |
| Production | Cost per article (fully loaded) | Monthly | $___ → $___ |
| SEO | Organic sessions from content | Monthly | ___ → ___ |
| SEO | New keywords entering top 20 | Monthly | ___ → ___ |
| SEO | Pipeline-influenced deals from content | Quarterly | ___ → ___ |
| AI Visibility | Brand mention rate (tracked prompts) | Monthly | ___% → ___% |
| AI Visibility | Citation rate (tracked prompts) | Monthly | ___% → ___% |
| AI Visibility | Competitive citation share | Monthly | ___% → ___% |

AI visibility measurement: prompts → mentions/citations → page attribution → competitive delta

Checking ChatGPT once a week and calling it "tracking" isn't going to cut it. A real measurement system looks like this:

- Define buyer prompts: The specific questions your ideal customers are asking AI tools.

- Track mention rate: Does your brand even appear in the response?

- Track citation rate: Is your content linked as a source?

- Attribute to pages: Which specific pages on your site are getting cited?

- Benchmark against competitors: Who else is getting cited for the same prompts? (And why aren't you?)

This gives you actionable data. You're not just watching a number go up or down; you can connect a citation gap to a specific content gap on your site.

Platforms like DeepSmith AI Visibility can do this automatically. It tracks your brand mention and citation rates across ChatGPT, Gemini, Perplexity, Claude, and Google AI Mode at the prompt level. The Pages module shows you which of your pages are earning citations, and the Competitors module shows you who’s winning the ones you should be getting.
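If you want to prototype the measurement loop by hand first, the steps above (prompts, mentions, citations, page attribution, competitor benchmark) map onto a small data model. The sketch below is illustrative only: `PromptResult`, the brand names, and the `acme.example` and `rival.example` URLs are hypothetical, it assumes you have already collected each AI answer yourself, and "citation share" here is just one possible definition (your citations divided by all citations recorded).

```python
from collections import Counter
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class PromptResult:
    """One AI answer to one tracked buyer prompt (hypothetical record shape)."""
    prompt: str
    platform: str
    brands_mentioned: set[str] = field(default_factory=set)
    cited_urls: list[str] = field(default_factory=list)


def on_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlparse(url).netloc.lower()
    return host == domain or host.endswith("." + domain)


def visibility_report(results: list[PromptResult], brand: str, domain: str) -> dict:
    total = len(results)
    if total == 0:
        raise ValueError("no tracked results")
    mentions = Counter(b for r in results for b in r.brands_mentioned)
    our_citations = [u for r in results for u in r.cited_urls if on_domain(u, domain)]
    all_citations = sum(len(r.cited_urls) for r in results)
    cited_responses = sum(
        1 for r in results if any(on_domain(u, domain) for u in r.cited_urls)
    )
    return {
        "mention_rate": mentions[brand] / total,        # brand appears in the answer
        "citation_rate": cited_responses / total,       # answer links to our domain
        "cited_pages": Counter(our_citations),          # page-level attribution
        "citation_share": len(our_citations) / all_citations if all_citations else 0.0,
        "competitor_mention_rates": {
            b: n / total for b, n in mentions.items() if b != brand
        },
    }


# Made-up sample data for illustration.
results = [
    PromptResult("best ai content platform", "ChatGPT", {"Acme", "Rival"},
                 ["https://acme.example/blog/roi", "https://rival.example/guide"]),
    PromptResult("best ai content platform", "Perplexity", {"Rival"},
                 ["https://rival.example/guide"]),
    PromptResult("how to measure ai visibility", "ChatGPT", {"Acme"}, []),
    PromptResult("how to measure ai visibility", "Gemini"),
    PromptResult("ai seo tools pricing", "Claude", {"Rival"},
                 ["https://rival.example/pricing"]),
]

report = visibility_report(results, brand="Acme", domain="acme.example")
print(report)
```

In the sample data, Acme is mentioned in 40% of responses but cited in only 20%, while Rival is mentioned in 60%. That gap between mention and citation is the kind of signal you can trace back to a specific missing or weak page.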

Simple ROI formula you can defend (fill-in-the-blank)

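Annual ROI = (Value created + Cost eliminated) ÷ Platform cost

Strictly speaking, this ratio is a gross return multiple: a result of 3.0 means $3 back for every $1 spent. If your CFO expects net ROI as a percentage, subtract the platform cost from the numerator and multiply by 100.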

Fill this out for a conservative, base, and aggressive scenario. Always show the conservative case first to build trust.

| Variable | Conservative | Base | Aggressive |
|---|---|---|---|
| Articles/month increase | +2 | +4 | +6 |
| Cost reduction/article | 20% | 35% | 50% |
| Current cost/article | $___ | $___ | $___ |
| Monthly cost savings | $___ | $___ | $___ |
| New organic sessions/month | ___ | ___ | ___ |
| Pipeline value per 1,000 sessions | $___ | $___ | $___ |
| Monthly pipeline contribution | $___ | $___ | $___ |
| Annual value | $___ | $___ | $___ |
| Platform cost (annual) | $___ | $___ | $___ |
| ROI | ___% | ___% | ___% |
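To sanity-check the table before it goes into a deck, here is a minimal sketch of the same model in Python. Everything numeric is a placeholder assumption (4 baseline articles, $850 per article, $2,000 of pipeline per 1,000 sessions, a $12,000 annual platform cost, and the session counts per scenario). It also assumes savings apply to your baseline volume only and does not net out the production cost of the extra articles. It reports both the value-over-cost ratio and the net ROI percentage (value minus cost, divided by cost), since finance teams often expect the latter.

```python
def roi_model(
    baseline_articles: int,
    cost_per_article: float,
    cost_reduction: float,             # fraction, e.g. 0.20 for 20%
    new_sessions_per_month: float,
    pipeline_per_1000_sessions: float,
    platform_cost_annual: float,
) -> dict:
    """Fill in one column of the ROI table from its input rows."""
    monthly_savings = baseline_articles * cost_per_article * cost_reduction
    monthly_pipeline = new_sessions_per_month / 1000 * pipeline_per_1000_sessions
    annual_value = 12 * (monthly_savings + monthly_pipeline)
    return {
        "monthly_savings": monthly_savings,
        "monthly_pipeline": monthly_pipeline,
        "annual_value": annual_value,
        "return_multiple": annual_value / platform_cost_annual,
        "net_roi_pct": (annual_value - platform_cost_annual) / platform_cost_annual * 100,
    }


# Scenario inputs are placeholders; the cost reductions mirror the table above.
scenarios = {
    "conservative": {"cost_reduction": 0.20, "new_sessions_per_month": 500},
    "base": {"cost_reduction": 0.35, "new_sessions_per_month": 1000},
    "aggressive": {"cost_reduction": 0.50, "new_sessions_per_month": 2000},
}

results = {
    name: roi_model(
        baseline_articles=4,
        cost_per_article=850.0,
        pipeline_per_1000_sessions=2000.0,
        platform_cost_annual=12000.0,
        **inputs,
    )
    for name, inputs in scenarios.items()
}

for name, r in results.items():
    print(
        f"{name:>12}: ${r['annual_value']:,.0f}/yr, "
        f"{r['return_multiple']:.2f}x, net ROI {r['net_roi_pct']:.0f}%"
    )
```

Keeping the inputs in one place makes it easy to show leadership exactly which assumption moves the result, which is usually the first question a CFO asks.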

What does "AEO and zero-click search" change about your content strategy—and your business case?

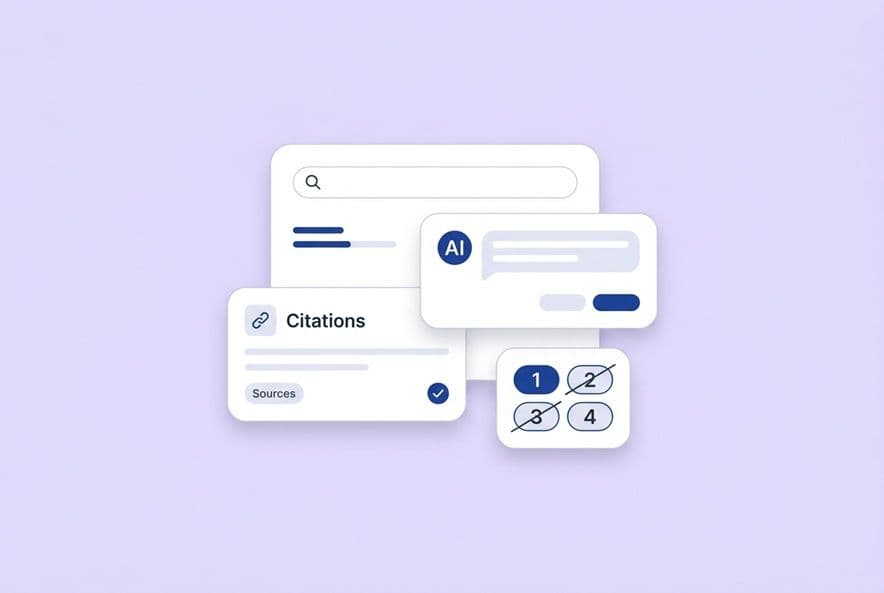

Answer engine optimization (AEO) isn't some future thing we need to worry about later. It’s here. If your buyers are already asking AI tools for recommendations, the race for citations has already started. Structured, answer-first content is what earns those spots.

Write for citation: structure, discrete claims, and scannable sections

AI engines are lazy; they excerpt content they can easily trust and attribute. That means your content needs:

- Clear H2/H3 structure that maps to how people ask questions.

- Discrete, attributable claims, not vague, fluffy assertions.

- Definition-first formatting, where you answer the question in the very first sentence of a section.

- Lists and tables, because structured data is much easier for an AI to parse than dense paragraphs.

These are no longer nice-to-haves. They are concrete writing requirements for getting cited. Research on generative engine optimization (GEO) led by Princeton University researchers found that adding statistics, quotations, and cited sources lifted a page's visibility in AI-generated answers by roughly 30–40% compared with unoptimized content.

Update strategy: why refresh velocity becomes a competitive advantage

AI answers are not static. They update as new content is indexed. If a competitor publishes a fresh, authoritative article on a topic you covered two years ago, they will likely steal your citation. This means refresh velocity, or how quickly you can update and republish content, is a new competitive advantage. This is a powerful argument for a platform that can handle the mechanical work of refreshing articles without a full, painful editorial cycle.

What not to expect: when citations won't translate into immediate traffic

Let's be honest with ourselves and with leadership. AI citations don't always create a click. In many zero-click scenarios, the value is brand exposure, authority, and influence, not a session in your analytics. You need to frame AI citation wins as a brand visibility play, not a direct traffic play.

Vendor scorecard: how to evaluate AI SEO platforms beyond feature lists

Every platform's website claims it is "end-to-end." Here’s how to cut through the marketing fluff and find out what’s real.

Workflow fit: does it replace steps or add another tab?

The first question you should ask is not "What does it do?" but "What does it replace?" A tool that helps you write faster but still leaves you to do the internal linking, image sourcing, and CMS formatting is just a writing assistant, not a platform. Map out your current, painful workflow and ask vendors to point to the specific steps their tool makes disappear.

Governance: brand voice, product accuracy, and claim boundaries

This is the one most people get wrong. They don't think about it until they're cleaning up a mess of bad AI output. Ask every vendor: where does our company context live in your system? If the answer is "you just add it to the prompt," run. For any team with multiple products or specific personas, a structured governance layer is non-negotiable.

For instance, DeepSmith Deep IQ is a structured context layer. It stores your company positioning, product details, buyer personas, and brand voice as data that shapes every output. This is how you reduce drift and enforce guardrails automatically.

AI visibility: what "tracking" must include to be real

Minimum viable AI visibility tracking must include prompt-level tracking, coverage for all major platforms (ChatGPT, Gemini, Perplexity, Claude, Google AI Mode), page-level attribution, and competitive benchmarking. If a vendor's "AI tracking" is just a manual spot-check tool or only covers one platform, it's a feature, not a measurement system.

Pricing models and value: what's normal, what's risky

You'll see a few common models: per-seat SaaS, usage-based (per article or credit), or a hybrid. If you're a growth-stage company, watch out for pricing that punishes you for publishing more. The whole point is to increase volume while lowering the per-unit cost. A plan that caps you at 10 articles a month defeats the purpose. Push for plans that align with your cadence or offer a flat rate.

Proof questions to ask every vendor (implementation + outcomes)

- "Walk me through the last time a customer's first article failed their quality bar. What happened, and how did you fix it?"

- "What does your onboarding process actually replace versus what you require us to configure manually?"

- "Show me what your 'AI visibility tracking' looks like for a real brand across three different platforms."

- "How do you handle product-specific accuracy when our offerings change?"

- "What does your internal linking automation actually do? Does it just suggest links, or does it insert them?"

- "How does your platform support multilingual content creation and global SEO workflows?"

The answers will separate the real platforms from the polished demos.

Implementation plan: a 30-day rollout that won't die after week two

Most tool rollouts fail from a lack of focus. Here’s a tight 30-day plan to get it right.

Week 1: pick one content type + define your "done" standard

Don't try to migrate your entire workflow at once. That's a recipe for disaster. Pick one specific content type, like a "how-to guide" or a "use-case page," and commit to running one article through the platform. Before you start, write down exactly what "publishable" means: QC checklist passed, all SEO elements present, internal links inserted, image ready, and metadata complete. This is your quality standard.

Week 2: run the pipeline and measure cycle time + rework

Now produce 2-3 more articles of that same type. For each one, log the data: How long did the platform take? How much time did the human reviewer spend? What needed rework and why? This gives you real cycle time data and shows you where the platform might need some configuration tuning.

This is where a feature like DeepSmith Autowrite comes in. Once the pipeline is configured, you can schedule articles to be generated automatically. Your production cadence becomes driven by the calendar, not by your team's bandwidth.
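The Week 2 log doesn't need special tooling. As a rough sketch, assuming you record platform time, reviewer time, and rework reasons per article, a few lines of Python will summarize it. The `PilotArticle` record, the article titles, and the sample values are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class PilotArticle:
    title: str
    platform_minutes: float  # how long the platform took to produce the draft
    review_minutes: float    # how long the human reviewer spent
    rework_reasons: list[str] = field(default_factory=list)


def summarize(articles: list[PilotArticle]) -> dict:
    """Average cycle-time inputs, share of articles needing rework, and top rework reason."""
    reasons = Counter(reason for a in articles for reason in a.rework_reasons)
    return {
        "avg_platform_minutes": mean(a.platform_minutes for a in articles),
        "avg_review_minutes": mean(a.review_minutes for a in articles),
        "rework_rate": sum(1 for a in articles if a.rework_reasons) / len(articles),
        "top_rework_reason": reasons.most_common(1)[0][0] if reasons else None,
    }


# Made-up pilot log for three articles of the same content type.
log = [
    PilotArticle("How-to guide A", 20.0, 45.0, ["voice drift", "wrong product claim"]),
    PilotArticle("How-to guide B", 18.0, 30.0, ["voice drift"]),
    PilotArticle("How-to guide C", 22.0, 15.0),
]

summary = summarize(log)
print(summary)
```

A recurring rework reason (here, the invented "voice drift") usually points to a configuration fix rather than a reason to abandon the pilot, and a falling review time across articles is the early signal that the configuration is settling.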

Week 3: add AI visibility tracking and competitor benchmarking

Time to set your baseline. Define 10–15 of your most important buyer prompts, run them through your tracking tool, and record where your brand appears versus your top competitors. This is your week-zero benchmark for proving ROI down the line.

Week 4: lock the cadence (calendar + automation) and distribution assets

By the end of the first month, you should have a repeatable system. Articles are being generated on schedule, your QC process is solid, and distribution assets (like a LinkedIn post or newsletter blurb) are created as a standard part of the workflow. If you aren't generating those assets as part of the main run, trust me, you will never get around to producing them consistently.

Fill-in-the-blank business case template (copy/paste)

Use this structure for your actual document. Just fill in the brackets.

Executive summary

- Current State: We currently produce [X] articles/month at a fully loaded cost of [$X] each. We are bottlenecked by [primary bottleneck], which has created a [X] topic backlog. Meanwhile, competitors like [competitor name] are winning AI citations while we have no systematic presence.

- Proposal & ROI: We propose an investment of [$X/year] in an AI content platform. This will increase our cadence to [X] articles/month, reduce our cost/article by [X%], and help us achieve a [X%] citation rate in AI search. This yields a conservative ROI of [X%] from production savings alone, with significant upside from traffic and pipeline growth.

Current state and bottlenecks

- Cadence: [___] articles/month

- Cost: [$___]/article (fully loaded)

- Bottleneck: [Your role / specific step] is the primary bottleneck.

- Backlog: [___] identified and approved topics.

- AI Visibility: Our brand appears in [%] of tracked buyer prompts, while Competitor X appears in [%].

- Toolstack: [List tools and manual steps connecting them].

Proposed solution requirements

The platform must:

- Cover the entire workflow from research to publishing in a connected pipeline.

- Store and automatically apply our brand, product, and persona context.

- Track AI citation rates across key platforms at the prompt level.

- Generate distribution assets as a standard part of the publishing workflow.

- Support scheduled and automated production to build a predictable cadence.

ROI model (assumptions + scenarios)

| Scenario | Articles/mo (new) | Cost reduction/article | Monthly savings | Platform cost | Net annual value |
|---|---|---|---|---|---|
| Conservative | +2 | 20% | $___ | $___ | $___ |
| Base | +4 | 35% | $___ | $___ | $___ |
| Aggressive | +6 | 50% | $___ | $___ | $___ |

Risks and mitigations

| Risk | Mitigation |
|---|---|
| Generic AI output | Use a platform with a structured voice/product context layer; enforce human QC checklist on every article. |
| Low adoption by the team | Run a structured 30-day rollout focused on one content type first to build a quick win. |
| AI citations don't drive traffic | Set clear expectations that AI visibility is a brand and influence metric, measured separately from direct traffic. |
| Privacy/data concerns | Review vendor data handling policies carefully. Consolidating on a single platform reduces risk vectors compared to tool sprawl. |
| Overpromised on technical SEO | This isn't a magic wand for your whole site. A content platform handles on-page SEO (headings, meta). It can't fix site speed or architecture issues. |

Decision and next steps

- Complete the cost model with our actual data.

- Run vendor evaluations using the proof questions from the scorecard.

- Request pilot access to test one content type.

- Establish our AI visibility baseline before launch.

- Define pilot success metrics: [cycle time target], [articles published], [QC pass rate].

Fully filled business case for DeepSmith

Why DeepSmith fits the "platform" definition (not a point tool)

To make this concrete, here's how a platform like DeepSmith fits this model. It covers the complete production pipeline from research and briefing through drafting, QA, internal linking, image generation, publishing, and distribution. It replaces the manual stitching we do between our 6+ fragmented tools, which is the core bottleneck we identified.

Where DeepSmith reduces operational cost in the workflow

| Cost line-item | Current state | With DeepSmith |
|---|---|---|
| Brief writing | Director writes manually (45–90 min) | Multi-agent pipeline generates research scaffold; director reviews & approves. |
| Draft + SEO optimization | Writer drafts; director reviews for SEO. | Content Studio produces an SEO-optimized draft with keywords and structure built in. |
| Internal linking | Director manually cross-references site (30–60 min). | Automated internal link insertion during the generation process. |
| Cover image | Designer helps or we spend 20 min in Canva. | Automatic image generation guided by our stored visual rules. |
| CMS formatting + publishing | Manual copy-paste and meta entry. | Direct CMS push to our WordPress/Webflow instance. |
| Distribution | Usually skipped due to time constraints. | Agent Library generates channel-specific posts as a standard workflow step. |
| AI visibility tracking | Manual spot-checks, if we remember. | Prompt-level citation tracking across 5 AI platforms with competitive benchmarking. |

How DeepSmith supports AI visibility measurement and strategy

DeepSmith's AI Visibility module is designed for this. We define the buyer prompts our customers are asking, and the system tracks how often we get cited, which of our pages are driving those citations, and how we stack up against competitors. The Competitors module is key: it shows us which competitor pages are winning citations we want, which we can then use to generate new ideas for our own pipeline.

What we would measure in the first 30–60 days

Production efficiency (days 1–30):

- Articles published vs. our current baseline

- Average cycle time from brief to publish

- Director-level hours spent on production tasks (the most expensive time)

SEO and content coverage (days 30–60):

- New keyword clusters we've covered

- Early organic sessions from the new articles

- Our topic backlog clearance rate

AI visibility (days 30–60):

- Our baseline citation rate across tracked prompts

- Documented competitor benchmarks

- The first pages to start earning citations

The 30-day pilot proves the operational gains. The 60-day window gives us the first real signal on visibility. Together, they provide credible, data-backed inputs for a full budget decision.