You've been there, right? Your page is sitting pretty at position 7. You've refreshed it twice. The keyword targeting is solid. And yet, your competitor's thinner article keeps getting referenced in AI answers, linked from industry blogs, and cited in roundup posts. Yours just collects impressions and a bit of dust.

This isn't a keyword problem. You already proved you can rank. What you're looking at is an authority and packaging problem.

The fix isn't to burn the page down and rewrite it from scratch. It's to engineer the page for extractability, add the right citation signals, and run a focused promotion campaign for a URL that already has search validation.

I'm going to give you the repeatable system we use to do exactly that. You'll learn how to diagnose why a ranking page isn't getting cited, make targeted upgrades that attract links and AI mentions, and measure whether it's actually working. Let's get into it.

What does "not getting cited" mean when your page already ranks?

Before you start tweaking things, you need to know which type of citation you're actually missing. The fixes are different for each.

Backlinks vs AI citations vs featured snippets: how they overlap (and don't)

Backlinks are the classic one. This is when another site links to your URL (think editorial mentions, resource pages, or expert roundups). They signal authority to search engines and bring you referral traffic. To get them, your content has to be genuinely reference-worthy to a human writer or editor.

AI citations are the new game in town. This happens when platforms like ChatGPT, Perplexity, or Google's AI Overviews pull from your page to answer a user's question in AI search. These don't always follow Google's rankings. AI engines love pages with clean, extractable structures, like definitions, frameworks, and comparison tables. A page that ranks #6 can get cited heavily while a page at #2 gets ignored if it's harder for the AI to parse.

Featured snippets (or position zero) are Google's own version of this. They pull a definition, list, or table directly into the search results. If you're not winning snippets for queries where you rank in the top 10, it's a huge sign your formatting isn't ready for prime time.

These three overlap, but they respond to different signals. Backlinks are a people problem (are you worth referencing?). AI citations are a packaging problem (can an AI lift your claim?). Featured snippets are a formatting problem (do you have the right structure?). Most advice lumps these together. We're going to treat them separately.

The core idea: you're solving an authority + extractability gap, not a keyword gap

Here's my whole philosophy on this: if your page is already ranking, you don't need more keyword coverage. You need a different kind of upgrade. Specifically, we're focusing on three levers:

- Authority signals: referring domains, link quality, and internal link equity flowing to the page.

- Extractability: a claim-first structure, defined blocks, tables, and quotable sections.

- Packaging: schema, metadata, and headings that match how AIs and SERP features interpret intent.

Everything in this playbook connects back to one of those three things.

Why pages rank but don't get cited: the 7 root causes (and how to diagnose yours)

This is the step everyone wants to skip. Don't. You have to know what's broken before you can fix it. Take five minutes to identify the real root cause.

Root cause #1 — You match intent, but you're not the "source" (no quotable claims)

I see this all the time. The content answers the question well enough, but it doesn't make any claims worth repeating. If your page is just a well-structured summary of common knowledge, without any original frameworks or defined terms, there's nothing for a writer or an AI to quote. You're background reading, not a source. Building AI brand authority requires giving people something specific and original to reference.

How to spot it: Read your page and ask, "What sentence would someone put in quotation marks and link back to?" If nothing comes to mind, this is your main problem.

The fix: Add "source blocks" like defined terms, named frameworks, or decision criteria. We'll cover exactly how in the next section.

Root cause #2 — The page has weak authority signals (few relevant referring domains)

A page can absolutely rank from technical SEO and good topical relevance while having almost no backlinks. It's a classic chicken-and-egg problem. Both human writers and AI engines tend to cite pages that already have some citation momentum. It's a trust proxy. If you have zero or just a few low-quality backlinks, you're starting without that social proof.

How to spot it: Plug the URL into Ahrefs or Semrush. How many referring domains does it have? Are they any good? Now compare that to the pages ranking in the top three for your keyword.

The fix: This is an off-page problem. The outreach section later in this post tackles this head-on.

Root cause #3 — The page is hard to extract (structure blocks AI/snippets)

We've all been guilty of this. You bury the key answer in the fourth paragraph after some throat-clearing and context-setting. AI engines and snippet algorithms hate this. They need a clear signal that says, here is the direct answer to the question, right at the top of the section.

How to spot it: Try to manually pull a definition or a list of steps from your page. Was it a pain? If you had to read three paragraphs to piece it together, so will an AI.

The fix: Rewrite your key sections with a "claim-first" structure. More on that soon.

Root cause #4 — SERP feature mismatch (you're competing with a different format)

If the main search result for your query is a definition snippet, but your page offers up a bulleted list, you're bringing the wrong format to the fight. Same for AI Overviews. If the query pulls comparison tables from your competitors but all you have is prose, you're going to lose, even if you rank higher in the classic blue links.

How to spot it: Google your query. What does the featured snippet look like? What format does Perplexity or ChatGPT use to answer the prompt? Compare that to your page.

The fix: Match the dominant format in the relevant section of your page. You don't have to change your whole article.

Root cause #5 — Content decay or staleness eroded trust

A page that was a star in 2022 can quietly lose its shine. Writers stop linking to stale sources. AI engines learn to ignore content with outdated stats or references. A targeted content refresh can recover lost lead generation value without starting from scratch.

How to spot it: When was the page last meaningfully updated? Are you citing stats from two or more years ago? Are the tools or processes you mention still relevant?

The fix: Do a proper content refresh. Update your data and claims, add new examples, fix broken links, and update the publish/modified date. This is also the perfect time to add a new "source block" to signal a major upgrade.

Root cause #6 — Internal discoverability is weak (site hierarchy doesn't support it)

If your own site doesn't treat this page like it's important, why would anyone else? Internal links distribute authority and help crawlers understand what a page is about. Pages with few or no internal links are basically being ignored by their own website.

How to spot it: Use a tool like Screaming Frog to check how many internal links point to your URL. Is the page an orphan, or close to it?

The fix: Add internal links from 3–5 related, high-authority pages on your site using relevant anchor text. Manually cross-referencing your whole sitemap is a pain, which is why this gets skipped. Honestly, we built a tool inside DeepSmith's Content Studio to automate this because we knew we'd never keep up otherwise.
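If you want to see the logic behind the orphan check, here's a minimal sketch in Python. It assumes you've exported your crawl as simple (source, target) link pairs. That format, the function names, and the example URLs are all made up for illustration and aren't tied to any particular crawler's export. The threshold of 3 borrows the low end of the 3–5 range above.

```python
from collections import defaultdict


def inlink_counts(edges, all_urls):
    """Count how many distinct internal pages link to each URL."""
    sources = defaultdict(set)
    for source, target in edges:
        if source != target:  # a page linking to itself doesn't count
            sources[target].add(source)
    return {url: len(sources[url]) for url in all_urls}


def weakly_linked(edges, all_urls, minimum=3):
    """Return URLs with fewer than `minimum` distinct internal inlinks."""
    counts = inlink_counts(edges, all_urls)
    return sorted(url for url, n in counts.items() if n < minimum)


# Hypothetical crawl data: (page containing the link, page being linked to)
edges = [
    ("/blog/a", "/guides/target"),
    ("/blog/b", "/guides/target"),
    ("/blog/a", "/guides/target"),  # duplicate link, counted once
    ("/guides/target", "/blog/a"),
]
all_urls = ["/blog/a", "/blog/b", "/guides/target", "/guides/orphan"]

print(inlink_counts(edges, all_urls))
print(weakly_linked(edges, all_urls))
```

Counting distinct source pages rather than raw links keeps a single page with five footer links from making a URL look well supported.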

Root cause #7 — You're targeting the wrong buyer stage for "citation gravity"

Informational, top-of-funnel content tends to attract links and citations. Bottom-of-funnel pages (like comparisons, pricing, or trial pages) are built to convert, not to be cited. No one is referencing your pricing page in a roundup post.

How to spot it: What's the real intent of this page? If it's bottom-of-funnel, it's not going to be a citation magnet.

The fix: For these pages, measure conversions, not citations. Focus your citation-building efforts on your informational content that has genuine reference value.

Which already-ranking pages should you optimize first (so effort turns into citations)?

You probably have dozens of ranking pages and a small team. I get it. You can't boil the ocean. You need a hit list, not a massive audit.

The "Rank-to-Cited" scorecard (quick evaluation criteria)

Score each candidate page on these five factors from 1 (weak) to 3 (strong), for a maximum of 15:

- Position band: Is it in positions 5–15? This is the sweet spot: close enough to have credibility, but still in need of a push.

- Informational or comparative intent: Is it research or comparison content that naturally earns citations? Running a content gap analysis can help you identify which pages have the strongest citation potential.

- Linkability: Does the page have (or could it easily have) a named framework, unique data point, or decision tool?

- SERP feature present: Is there a featured snippet, AI overview, or "People Also Ask" box you could win?

- Conversion proximity: Does this topic connect to buyer pain that could turn into pipeline if it got more visibility?

Pages scoring 12–15 are your top priority. Start there.
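If you keep your candidates in a spreadsheet, the scorecard is easy to automate. Here's a rough sketch in Python. The class name, field names, and example scores are hypothetical; the only things taken from the scorecard itself are the five factors, the 1–3 scale, and the 12-point priority cutoff.

```python
from dataclasses import dataclass

FACTORS = (
    "position_band",
    "intent",
    "linkability",
    "serp_feature",
    "conversion_proximity",
)


@dataclass
class PageScore:
    url: str
    position_band: int
    intent: int
    linkability: int
    serp_feature: int
    conversion_proximity: int

    def total(self) -> int:
        """Sum the five factor scores, rejecting anything outside 1-3."""
        for name in FACTORS:
            value = getattr(self, name)
            if value not in (1, 2, 3):
                raise ValueError(f"{name} must be 1, 2, or 3, got {value}")
        return sum(getattr(self, name) for name in FACTORS)

    def is_top_priority(self) -> bool:
        """Pages scoring 12-15 go to the front of the queue."""
        return self.total() >= 12


def rank_candidates(pages):
    """Highest-scoring pages first."""
    return sorted(pages, key=lambda page: page.total(), reverse=True)


# Hypothetical pages and scores, for illustration only
pages = [
    PageScore("/pricing", 2, 1, 1, 1, 3),
    PageScore("/blog/content-decay", 3, 3, 2, 3, 2),
]

for page in rank_candidates(pages):
    label = "top priority" if page.is_top_priority() else "park for now"
    print(f"{page.url}: {page.total()} ({label})")
```

The scoring itself is still a judgment call. The code just keeps the math and the cutoff consistent from one audit to the next.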

Pages to skip (or park) even if they rank

Some pages just aren't worth the effort:

- Purely transactional or product pages.

- Pages on commoditized topics where you're up against Wikipedia.

- Pages you've already tried to fix multiple times with no results.

- Pages ranking for your own brand name.

What to do when multiple pages compete (cannibalization and consolidation)

If you have two or three pages ranking for similar queries, you're splitting your authority. Pick the strongest one, consolidate the content from the weaker ones onto it, and redirect the old URLs. Focusing your efforts on one canonical URL is how you get a page to climb out of the 8–15 range.

How to make an already-ranking page "citation-ready" without rewriting it from scratch

Before you change a word, run a quick citation gap audit. Look at the top-ranking pages for your query. Which formats are winning: tables or lists? What kinds of "source blocks" (like named frameworks or data) are competitors using? This gives you a concrete checklist instead of guesswork.

Add "source blocks": definitions, criteria, frameworks, and decision rules

This is the single most impactful change you can make. A source block is just a clean, clearly marked section that states a definition, framework, or rule in plain language. It's something another writer or an AI can easily lift and attribute to you.

For example:

- A named definition: "We define content decay as a measurable decline in organic impressions, clicks, or referring domains over a 90-day period."

- A named framework: "The Rank-to-Cited diagnostic has three components: packaging, authority, and extractability."

- A decision rule: "If a page ranks between 5 and 15 and has fewer than 10 referring domains, prioritize outreach before touching the on-page content."

These give writers something to quote and AIs a confident answer. Aim for two or three per page.

Rewrite for extractability: claim-first paragraphs + scannable proof

Most of us were taught to write like this: context → explanation → claim. For citable content, you have to flip it: claim → brief proof → context.

Instead of: "When we examine how AI engines process content, it becomes clear that..."

Write: "AI engines prioritize pages with a claim-first structure. A page that buries its answer in the third paragraph is harder for a model to cite confidently, and it shows in the results."

Every H3 on your page should open with a direct answer to the question in the heading.

Use comparison tables and structured lists where citations happen

Comparison tables are citation magnets. Writers love them for quick reference, and AIs love them because structured data is easy to parse. If your page compares tools, approaches, or features, a table will dramatically increase its citation potential.

A good table includes:

- Clear criteria in the columns.

- Specific, accurate data (not vague words like "good").

- A "best for" row that makes a recommendation.

The same goes for lists. For processes, use a numbered list. For options, use a clean bulleted list.

Metadata + headings that match how buyers ask AI (without keyword stuffing)

The question someone types into ChatGPT is usually more conversational than a Google search. Your H2 and H3 headings should lean into that question format ("How do I...," "What's the difference between...") without giving up your keywords.

Your title tag and meta description should answer the question right away. If someone asks Perplexity "how to get backlinks to a page that already ranks," and your title is just "Page Optimization Guide," you're invisible.

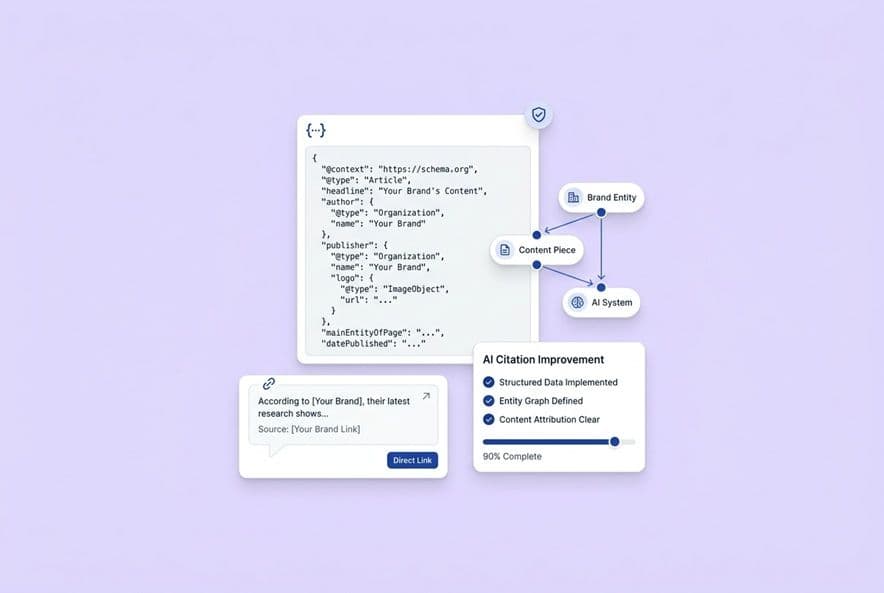

Schema markup and technical hygiene that support trust and clarity

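FAQ, HowTo, and Article schema give search engines explicit clues about your content. For pages that already rank, adding schema is a low-risk move. Keep in mind that Google has scaled back how often it shows FAQ and HowTo rich results, so check which types it currently displays before you count on a visual payoff in the SERP. Google's structured data documentation covers the supported types and implementation details.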
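To make the schema step concrete, here's a small Python sketch that builds Article and FAQPage markup as JSON-LD. The property names come from the schema.org vocabulary. The function names and every example value are placeholders I made up, and which properties Google requires for a given rich result is something to confirm in its documentation.

```python
import json


def article_jsonld(headline, url, date_published, date_modified, author_name):
    """Build a basic schema.org Article object."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,
        "dateModified": date_modified,
        "author": {"@type": "Person", "name": author_name},
    }


def faq_jsonld(pairs):
    """Build a schema.org FAQPage from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Wrap the object in the script tag that goes in the page's HTML."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values, for illustration only
article = article_jsonld(
    headline="How to Get an Already-Ranking Page Cited",
    url="https://example.com/blog/rank-to-cited",
    date_published="2024-01-10",
    date_modified="2025-02-01",
    author_name="Jane Doe",
)
print(to_script_tag(article))
```

Generating the markup from the same data that drives the page means the dateModified value moves whenever you refresh the content, instead of drifting out of sync.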

Also, run through this quick technical checklist:

- Is the URL descriptive (not /p-1234)?

- Does it have a self-referencing canonical tag?

- Is the page crawlable in robots.txt?

- Is the mobile page speed decent (over 75 on PageSpeed Insights)?

- Is the "last modified" date current?

None of this is rocket science, but it all adds up to a page that crawlers and AIs trust more.
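A few of these checks can be scripted with nothing but Python's standard library. The sketch below works on HTML and robots.txt text you've already fetched, so it makes no network calls. The function names and the slug heuristic are my own assumptions, and page speed and the modified date are left out because they need external data.

```python
import re
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.canonical is None:
            self.canonical = attr.get("href")


def has_self_canonical(html, page_url):
    """True if the canonical tag points at the page itself."""
    finder = CanonicalFinder()
    finder.feed(html)
    if finder.canonical is None:
        return False
    return finder.canonical.rstrip("/") == page_url.rstrip("/")


def is_crawlable(robots_txt, page_url, user_agent="*"):
    """True if the given robots.txt rules allow fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, page_url)


def has_descriptive_slug(path):
    """Heuristic (an assumption): flag slugs that are just an ID like p-1234."""
    slug = path.rstrip("/").rsplit("/", 1)[-1]
    return re.fullmatch(r"[a-z]{0,2}-?\d+", slug) is None


# Made-up page and rules, for illustration only
url = "https://example.com/blog/content-decay"
html = '<html><head><link rel="canonical" href="' + url + '/"></head></html>'
robots = "User-agent: *\nDisallow: /private/\n"

print(has_self_canonical(html, url))
print(is_crawlable(robots, url))
print(has_descriptive_slug("/blog/content-decay"))
```

The canonical comparison only ignores a trailing slash. If your site mixes http and https or www and non-www, you'd want to normalize those too.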

How to win featured snippets and rich results with pages that already rank

Identify snippet opportunities: which queries and which snippet types you can realistically win

First, check if a snippet even exists for your target query. If one does, you can take it. If not, look at the "People Also Ask" box. Those questions are your opportunities.

Focus on queries where you already rank in the top 10. Trying to capture a snippet from page two is a long shot, but taking it from position 5 is very doable with the right formatting.

Snippet patterns that work: definitions, steps, lists, tables, and "best for" blocks

Every snippet type has a preferred format:

- Definition snippets: A single paragraph of 40–60 words, right after an H2 or H3 asking "What is X?"

- Step-based snippets: A numbered list (<ol>) where each step is short and action-oriented.

- List snippets: A clean bulleted or numbered list of 5–8 items.

- Table snippets: Simple two or three-column tables are best.

- "Best for" blocks: Short sections that match a solution to a use case. AI loves these.
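The length targets in that list are easy to check before you publish. Here's a quick Python sketch. The function names are invented, the default ranges are the 40–60 words and 5–8 items from the list above, and a passing check doesn't guarantee you'll win the snippet.

```python
def word_count(text):
    """Count whitespace-separated words."""
    return len(text.split())


def fits_definition_snippet(paragraph, low=40, high=60):
    """True if the paragraph lands in the 40-60 word definition range."""
    return low <= word_count(paragraph) <= high


def fits_list_snippet(items, low=5, high=8):
    """True if the list has 5-8 items."""
    return low <= len(items) <= high


# Example input, for illustration only
definition = (
    "We define content decay as a measurable decline in organic "
    "impressions, clicks, or referring domains over a 90-day period."
)
steps = ["Audit", "Score", "Upgrade", "Pitch", "Measure"]

print(word_count(definition), fits_definition_snippet(definition))
print(len(steps), fits_list_snippet(steps))
```

Run against the content decay definition used as an example earlier in this post, the check fails because the sentence is well under 40 words. That's a useful prompt to add a sentence of proof or context before placing it under a "What is X?" heading.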

What to change without breaking rankings

This is the key. When you edit for snippets, you must preserve:

- The core topic of the page.

- The heading structure.

- The body copy that got you ranking in the first place.

You're only changing the format and placement of your claims, not the claims themselves. You're adding definition blocks or turning prose into tables, not performing a total rewrite.

How to increase backlinks/citations to an already-ranking page (proven plays + outreach scripts)

This is the part everyone hates. Outreach can feel like a totally different job, and it can feel spammy if you do it wrong. But it's just content distribution with a specific goal: getting people with relevant audiences to link to you.

The "why would they link?" map: match the page upgrade to the linker's incentive

Before you write a single email, you have to answer this question. I call it the "why would they link?" map. It's simple: nobody links to you as a favor. They link because it makes their content better.

- You added an original framework? → Pitch journalists and roundup writers who need a concept to reference.

- You built a comparison table? → Pitch practitioners who are writing evaluation guides.

- You updated your statistics? → Pitch anyone who is currently citing old data on the topic.

5 plays that work especially well for existing URLs

1. The refresh pitch: Email sites that linked to your old version (or a competitor's old version) and tell them what changed. "Hey, we just updated our comparison table in section 3 to include X and Y. Thought it might be a useful update for your post."

2. The missing-resource pitch: Find articles on adjacent topics that have a gap your page fills. "Your guide on X is great, but you might want to add a link to our breakdown of [specific subtopic] for readers who want to go deeper."

3. The evidence swap: Find pages citing an outdated stat or study. Offer yours as a more current replacement. Be specific about why the current reference is stale.

4. The expert contribution loop: Add quotes from experts to your page, then let them know. People who are featured in content love to share it.

5. The partner/customer ecosystem play: Ask your integration partners or happy customers if they'll add your page to their resource library.

Outreach targeting: who to contact and how to build a small, high-fit list

Forget spray-and-pray. Build a tight list of 20–30 sites that:

- Publish on your topic regularly.

- Already link out to similar resources.

- Have a real audience and a Domain Rating (DR) over 30.
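If your prospect list lives in a spreadsheet export, those three filters translate into a few lines of Python. This is a sketch under assumptions: the field names are invented, "publishes regularly" is approximated by a count of recent on-topic posts, and "real audience" still needs a human eye, so it isn't modeled here.

```python
from dataclasses import dataclass


@dataclass
class Prospect:
    domain: str
    domain_rating: int
    recent_posts_on_topic: int  # hypothetical stand-in for "publishes regularly"
    links_to_similar_resources: bool


def build_outreach_list(prospects, min_dr=30, min_recent_posts=1, max_size=30):
    """Keep prospects that pass all three filters, capped at max_size."""
    fits = [
        p
        for p in prospects
        if p.domain_rating > min_dr
        and p.recent_posts_on_topic >= min_recent_posts
        and p.links_to_similar_resources
    ]
    # Most active publishers on the topic go first
    fits.sort(key=lambda p: p.recent_posts_on_topic, reverse=True)
    return fits[:max_size]


# Made-up prospects, for illustration only
prospects = [
    Prospect("industry-blog.example", 55, 6, True),
    Prospect("tiny-site.example", 12, 9, True),
    Prospect("big-but-closed.example", 80, 4, False),
    Prospect("niche-newsletter.example", 41, 2, True),
]

for prospect in build_outreach_list(prospects):
    print(prospect.domain, prospect.domain_rating)
```

The cap of 30 keeps the list at the size recommended above, which forces you to pick on fit rather than volume.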

Keeping track of which competitors are winning citations is a nightmare to do by hand. This is another area where we rely on our own tools. DeepSmith's AI Visibility features show us which competitor URLs are getting cited, so our outreach is targeted at displacing the current winner, not just shouting into the void.

What to avoid: spam patterns that burn your domain and your reputation

Don't send lazy emails. Don't buy links. Don't use templates that are obviously templates. Don't pitch your page unless it's genuinely better than what they're already linking to. Quality of fit is everything. According to Google's link spam policies, manipulative link-building practices can result in manual actions against your site.

How to measure whether "getting cited" is working (and when to iterate)

So you did the work. How do you know if it's actually paying off?

KPI stack: rankings, referring domains, link quality signals, and SERP features

Track these metrics at the page level every month:

- Referring domains: How many new domains did you acquire?

- Domain quality: What's the average DR/DA of those new links?

- Featured snippet capture: Did you win the snippet?

- SERP position trend: Did your rank improve?

- Organic CTR & user engagement: The on-page changes in this playbook (cleaner formatting, tables, lists) should also improve user engagement. Better engagement signals to search engines that your page is a high-quality answer.
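For the backlink and ranking metrics, a month-over-month comparison is just a diff between two snapshots. Here's a minimal Python sketch. It assumes each snapshot is a dictionary of referring domain to DR that you've exported from your backlink tool; the function names, data shape, and numbers are hypothetical.

```python
from statistics import mean


def new_referring_domains(previous, current):
    """Domains present this month that weren't there last month."""
    return {domain: dr for domain, dr in current.items() if domain not in previous}


def monthly_summary(previous, current, previous_position, current_position):
    """Summarize link gains and rank movement for one page."""
    gained = new_referring_domains(previous, current)
    return {
        "new_referring_domains": len(gained),
        "avg_dr_of_new_links": round(mean(gained.values()), 1) if gained else None,
        # Positive means the page moved up the results
        "position_change": previous_position - current_position,
    }


# Made-up snapshots for a single URL, for illustration only
last_month = {"a.example": 40}
this_month = {"a.example": 40, "b.example": 50, "c.example": 35}

print(monthly_summary(last_month, this_month, previous_position=7, current_position=5))
```

Snippet capture and CTR aren't in this sketch because they come from different sources, but they fit the same pattern: store a dated snapshot, then compare.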

AI visibility checks: prompts, citation attribution, and competitor benchmarks

Manually checking ChatGPT once a week is a waste of time. You need systematic tracking. We use the AI Visibility modules in DeepSmith for this. We plug in the prompts our buyers use, and it monitors our citation rate across all the major AI platforms. It turns a guessing game into a real metric.

If you want a deeper walkthrough of metrics and setup, see how to Measure AI Search Citations across platforms.

A 30/60/90-day iteration loop for optimization + promotion

- Day 1–30: Make your on-page upgrades (source blocks, rewrites, schema). Start building your outreach list.

- Day 31–60: Actively send outreach emails. Track new referring domains.

- Day 61–90: Assess your KPIs. If they're moving up, keep going. If not, go back to the root cause diagnosis. You might have fixed the wrong problem.

Don't give up after three weeks. Backlinks and AI citation shifts can take a month or two to show up.

How to operationalize this as a repeatable monthly workflow (small team friendly)

Minimal process (2–4 hours/week) vs full process (a quarterly sprint)

Minimal (crawl-walk): Pick one page per month. Do the on-page upgrades in one session. Send 10–15 outreach emails. This is a sustainable pace for a small team.

Full process (run): Audit 10–15 pages at once. Spend a couple of weeks upgrading the top 5, then a couple of weeks on focused outreach. This builds momentum faster but requires dedicated time.

My advice? Build the minimal process first. Make it a habit. Then add the quarterly sprint when you want to accelerate.

Running this workflow at scale is where a connected content pipeline pays off. This is why we built DeepSmith's Content Studio in the first place. It handles the assembly-line steps (research, structure, internal links, publishing) so your team's time goes toward the strategic decisions, not the production mechanics.

If you're trying to make this repeatable across a blog, a rules-based approach like AI Automated Internal Linking can help build topical authority without manual busywork.

Where AI can help without making content generic

AI tools can be great assistants for specific tasks:

- Gap detection: Ask an AI to find questions your competitors answer that you don't.

- Source block drafting: Use AI to draft a definition, then edit it for your voice and accuracy.

- Outreach email variants: Draft a few versions of an outreach email to see which angle feels best.

- Comparison table formatting: AI is great at turning paragraphs of prose into a structured table draft.

What AI shouldn't do is rewrite the whole page. That's how you erase your voice and the unique claims that made the page rank in the first place.

Build your "rank-to-cited" workflow (and make it repeatable)

This isn't a one-time project. It's a system. The pages that consistently earn citations are the ones that are consistently maintained.

Here's your homework. Pick three to five pages from your site using the Rank-to-Cited scorecard. Diagnose the root cause for each one. Apply the right on-page upgrades. Then run a focused outreach campaign.

Before you start, set up your measurement layer so you have a baseline for referring domains, SERP features, and AI citations. Then check your progress at 30, 60, and 90 days.

If you want that system to run without your team burning out, that's what DeepSmith is for. We connect the content production, internal linking, and AI citation tracking into one workflow, so you can focus on building authority, not managing the assembly line.