I bet you know exactly how many internal links you manually added last year. You probably even have a spreadsheet to prove it. And I bet when leadership asked for your "AI search strategy" last quarter, that spreadsheet wasn't what flashed through your mind. It was the dead silence after the question.

If that sounds familiar, you're not alone. It's the double bind most content directors are living in right now: you're still buried in the day-to-day labor of getting content out the door, but you're also suddenly accountable for a visibility channel you haven't had a spare second to build a strategy for.

Buying an AI SEO platform feels like the obvious next step, but I've seen too many teams evaluate these tools completely backwards. They book a bunch of demos, watch slick, polished workflows, and get lost comparing feature lists. The result? They pick a tool, run with it for six weeks, and then wonder why they're still the bottleneck.

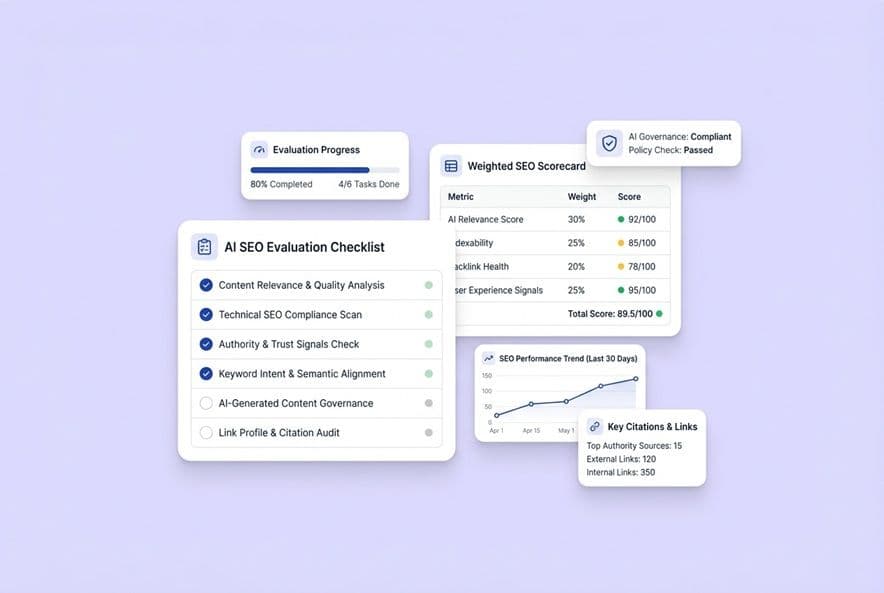

I built this checklist on a different premise, one we learned the hard way: enterprise AI SEO software is only worth it when it measurably removes your operational bottlenecks and improves your visibility in AI-driven search, without creating a new governance headache. The right way to evaluate these platforms isn't by counting features. It's about workflow fit, credible measurement, and your ability to control your brand's voice, accuracy, and compliance at scale. A platform that can't connect its outputs to real business outcomes is just an expensive toy for drafting.

Here's how to tell the difference.

What should enterprise AI SEO software actually replace in your workflow (vs. just "help with writing")?

Before you open a single demo tab, I want you to ask yourself one question: what is actually eating up all the time in your current content pipeline?

The 8 workstreams most content teams are secretly doing by hand

Most of our content pipelines are really just a series of manual workstreams we've stitched together. There's keyword research, brief writing, drafting, SEO scoring, internal linking, finding images, publishing, and then, if we're lucky, distribution.

The problem is, most AI tools only touch one or two of these pieces and call it "automation." You get a briefing assistant that helps with briefs but not linking, or an AI drafter that spits out text but ignores metadata. You end up with a Frankenstein's monster of a workflow, adding more tools without ever actually removing the labor. You've just shuffled it around.

A simple rule: if it doesn't reduce cycle time and rework, it's not enterprise-grade

Here's a little heuristic I use that cuts right through vendor hype. A platform only earns a spot in your tech stack if it reduces the time from an idea to a published article and reduces the editing burden on that draft. If it helps you create faster but you spend just as much time fixing the output, you've gained nothing.

Before you take a single demo, do a quick time-audit on your last five articles. Log every single step. Then, when a vendor shows you their shiny workflow, map it against yours and ask, "Which of my steps disappears?" If their only answer is "drafting goes faster," keep pushing. Drafting was never your biggest time suck; everything that happens around the draft was.

What are the non-negotiables in an enterprise AI SEO platform? (A pass/fail checklist)

These aren't just preferences. In my book, if a platform is missing any of these, it's a non-starter, no matter how good the demo looks.

Content quality controls (E-E-A-T reality, sourcing discipline, editability)

You can't just bolt on E-E-A-T after a draft is written. It has to be baked into the system's DNA from the start. For me, the non-negotiables are:

- Fact-claim constraints: Can you tell the system what it is and isn't allowed to say? No stats you can't source, no superlatives you can't defend.

- Source discipline: Does the tool cite real sources or pull from structured data your team provides, or does it just hallucinate facts with confidence?

- Full editability: Every single word the AI generates must be completely editable before you publish. No locked sections, no "AI recommended" fields that you can't override.

- Human review checkpoints: The workflow must make human review mandatory, not a skippable suggestion. If a platform makes it easy to bypass human eyes, it's a massive red flag for brand risk.

Generic output is the most common failure I see. If a tool writes a draft about your product that could just as easily have been written for your biggest competitor, either the tool hasn't been given enough context or it isn't capable of using the context it has. Test this early.

SEO + on-page optimization that's built-in (not a post-draft scavenger hunt)

Let's be honest, post-draft SEO review is where our time goes to die. A platform that's genuinely helpful handles keyword coverage, heading structure, internal linking, and metadata during the creation process, not as a separate cleanup step.

Your pass criteria should be simple. Keyword targets are woven in during the writing process, not just flagged afterward. Internal links are suggested or inserted automatically based on your sitemap. Metadata like titles and descriptions are part of the core workflow. If the platform generates a draft and then tells you to go run it through your separate SEO tool, that's not integration. That's just two tools with a frustrating gap between them.

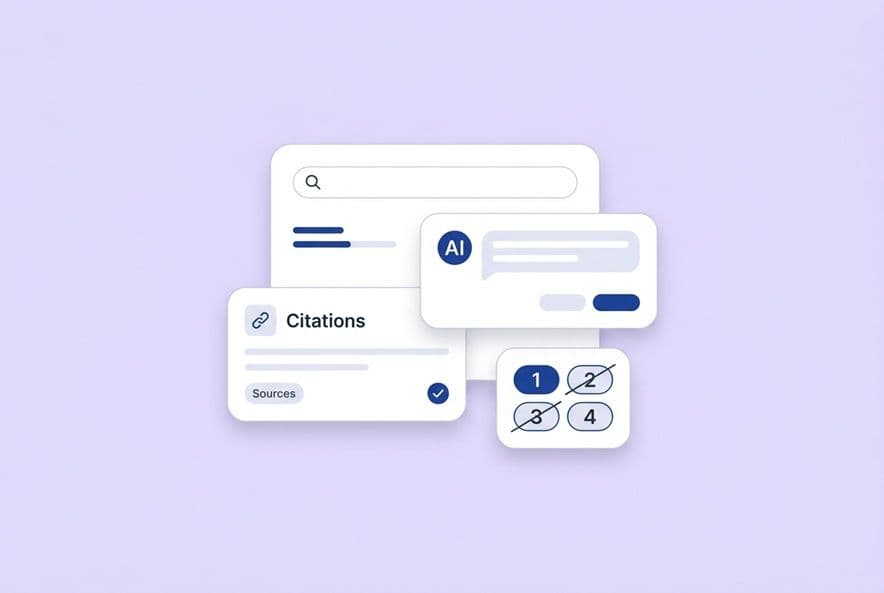

AEO/GEO readiness (structured answers, citation-ready formatting, prompt coverage)

Answer Engine Optimization (AEO), along with its close cousin Generative Engine Optimization (GEO), isn't just a buzzword anymore. It's the thing your CMO is about to start asking you about. Your AI SEO platform has to actively help you create content that earns citations in AI-generated answers, not just rankings on Google. The vendor also needs a clear plan for how the platform will evolve as AI search engines keep changing their models.

What you need to require is support for structured formatting (like direct answers, clear definitions, and scannable blocks of context) that AI engines can grab cleanly. You also need prompt-level tracking, which is the ability to define the questions your buyers are asking ChatGPT or Perplexity and see if your brand is the one being cited in the answer. If a platform's AEO story is just "we optimize for featured snippets," they're giving you a 2021 answer to a 2025 problem.

Governance: voice consistency, claim boundaries, auditability, permissions

This is the stuff that nobody finds exciting, but it's where enterprise deployments either succeed or silently implode. When you scale up content production, voice drift is inevitable without a strong governance layer. Someone publishes a claim you can't back up. A freelancer's draft sounds like it was written for a competitor. A product feature gets described inaccurately.

Your non-negotiables here should include a structured context layer (think company positioning, product claims, brand voice, and persona details) that shapes every single output. This shouldn't be a style guide someone has to remember to read. You also need role-based permissions so not everyone can publish directly to production, and a clear audit trail of what was generated, who reviewed it, and when. This is precisely the problem platforms like DeepSmith's Deep IQ were built to solve, by storing all that brand context as structured data that gets used automatically, so you're not re-briefing the AI for every article.

Collaboration + approvals that match how your team actually ships content

The most beautiful workflow in a demo will absolutely collapse the second it meets your real team structure. You have to ask how the platform handles review cycles. Can you comment inline? Can you route things to specific people for approval? Can you track revision history? Can you manage access for external contributors?

If your team currently lives in Google Docs because "it's just easier for everyone to comment," you need a platform that either integrates with that flow or gives you something demonstrably better. Exporting a Word doc and calling it a day is not a solution.

How do you evaluate AI search visibility tracking without getting sold vanity metrics?

Right now, every AI SEO platform is going to claim they have AI visibility features. Here's how you can tell if they're real.

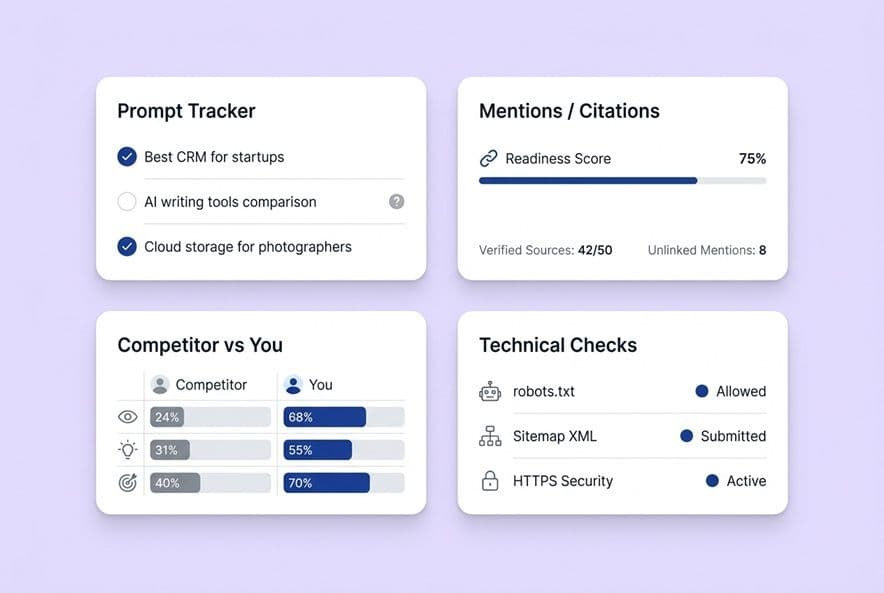

What to track: prompt-level mentions vs citation rate vs page-level attribution

These are three totally different measurement layers. Confusing them will lead you to make bad decisions.

- Prompt-level mentions: Is your brand name just appearing in an AI answer when someone asks a question? That shows presence, but not necessarily credibility.

- Citation rate: Is your brand actually being cited with a source link? This is the metric that drives traffic and builds authority. A mention is not the same as a citation.

- Page-level attribution: Which specific page on your site earned that citation, and is that page's attribution trending up or down over time?

A platform that just reports a single "AI visibility" score without breaking it down is giving you noise. You can't do anything with "your AI visibility went up 12%" if you have no idea what content caused it.
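To make the three layers concrete, here's a minimal sketch in Python. It assumes you can export one record per AI answer you've checked. The `AnswerRecord` fields, the function name, and the sample data are all hypothetical, not any vendor's actual export format.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class AnswerRecord:
    """One AI answer observed for one tracked prompt (hypothetical export shape)."""
    prompt: str
    engine: str
    brand_mentioned: bool      # brand name appears somewhere in the answer text
    cited_url: Optional[str]   # link to your site, if the answer cited one


def visibility_layers(records):
    """Return mention rate, citation rate, and citation counts per page."""
    total = len(records)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "citations_by_page": {}}
    mentions = sum(1 for r in records if r.brand_mentioned)
    cited = [r for r in records if r.cited_url]
    return {
        "mention_rate": mentions / total,
        "citation_rate": len(cited) / total,
        "citations_by_page": dict(Counter(r.cited_url for r in cited)),
    }


# Illustrative sample data only.
sample = [
    AnswerRecord("best crm for startups", "chatgpt", True, "https://example.com/crm-guide"),
    AnswerRecord("best crm for startups", "perplexity", True, None),
    AnswerRecord("how to choose a crm", "gemini", False, None),
    AnswerRecord("how to choose a crm", "chatgpt", True, "https://example.com/crm-guide"),
]
report = visibility_layers(sample)
print(report)
```

In this made-up sample the brand is mentioned in three of four answers but cited in only two, and both citations point at the same page. That gap between mentions and citations is exactly what a single blended score hides.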

Platform coverage: which AI engines matter and how to avoid "single-engine" blind spots

Your buyers aren't using just one AI tool. Depending on your market, they're bouncing between ChatGPT, Perplexity, Google's AI Overviews, Gemini, and Claude. A visibility tracker that only covers one or two of these is giving you a tiny, skewed picture of where citations are actually happening.

You should require coverage across, at a minimum, ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews. And you need to ask vendors to show you their methodology for each. Are they actually querying these engines systematically, or are they just extrapolating from one? The answer really matters.

The credibility test: can the tool show which pages earned which citations and trends over time?

During every demo, run this simple test. Ask the vendor to show you a specific page, the AI citation rate for that page, and a trend line showing whether it's improving. Then, ask them to show you which specific prompts drove those citations.

If they can show you that cleanly, the measurement is real. If they pivot back to a high-level dashboard summary, ask them again. Platforms that have truly operationalized this, like DeepSmith's AI Visibility suite, give you something you can actually manage because they track mentions and citations across AI platforms at the prompt level and tie them back to specific pages. A number without a page is a vanity metric. A page with a trend line and a prompt is an action item.

Competitive benchmarking: how to turn competitor citations into an action plan

AI visibility data is most valuable when it shows you where you're losing and what you need to do about it. A good competitor benchmarking view should show you which of your competitors' pages are earning citations in your category, which prompts they are winning, and ideally, flag when they publish new content that starts showing up.

The point isn't to get obsessed with what your competitors are doing. It's to turn their wins into your content roadmap. If a competitor keeps getting cited for "how to choose [your category] software" and you don't have a great page on that topic, you've just found your next content priority.
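If your tracker lets you export which domains get cited for each prompt, turning that into a roadmap can be a few lines of code. This is a rough sketch under that assumption. The data shape, the `content_gaps` name, and the domains are all hypothetical.

```python
def content_gaps(citations_by_prompt, our_domain):
    """List prompts where competitors are cited and we are not.

    citations_by_prompt maps each tracked prompt to the list of domains
    cited in AI answers for it (an assumed shape for exported data).
    """
    gaps = []
    for prompt, domains in citations_by_prompt.items():
        competitors = sorted(set(domains) - {our_domain})
        if competitors and our_domain not in domains:
            gaps.append((prompt, competitors))
    # Prompts where the most competing domains are cited come first.
    return sorted(gaps, key=lambda gap: len(gap[1]), reverse=True)


# Illustrative data only.
tracked = {
    "how to choose crm software": ["rival-a.com", "rival-b.com"],
    "crm pricing comparison": ["example.com", "rival-a.com"],
    "crm onboarding checklist": ["rival-b.com"],
}
gaps = content_gaps(tracked, "example.com")
for prompt, competitors in gaps:
    print(f"{prompt}: cited instead of us -> {', '.join(competitors)}")
```

Sorting by the number of competing domains is just one way to prioritize. You might prefer to weight by how close each prompt sits to a buying decision.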

How do you measure ROI from AI-assisted SEO (and AI visibility) in a way your CMO will accept?

"We published more content" is not a business case. If you want to get and keep budget for these tools, here's how to frame ROI in a way that leadership will understand and support.

Separate three ROI types: production efficiency, organic performance, and AI-assisted discovery

I've found that mixing these three things up leads to messy, confusing conversations. Keep them separate.

- Production efficiency: This is about time per article (from brief to published), the number of articles your team can produce per month, and the reduction in editorial revision rounds.

- Organic performance: These are your classic SEO metrics: keyword rankings, organic traffic, and on-page engagement for AI-assisted content compared to your manually created content.

- AI-assisted discovery: This is your brand mention rate and citation rate in AI answers, how that trends over time, and your prompt coverage (how many of your target buyer questions trigger a brand citation).

Each of these has a different time horizon. You'll see efficiency gains in weeks. Organic performance starts to show up in months. AI discovery trends really emerge over a quarter. Set those expectations with your team and your boss from day one.

What to instrument in the first 30 days (without perfect attribution)

You don't need a perfect attribution model to get started. In the first 30 days, just instrument these things:

- Baseline time-per-article. Seriously, just log it manually for 10 articles before the tool, and 10 articles with it.

- Organic sessions to content published with the tool versus content published without it.

- AI citation tracking. Get a baseline of your current mention rate across the major platforms before you change a single thing.

This gives you a simple before-and-after story that's defensible, even if you don't have perfect, closed-loop attribution yet. Directional data now is better than waiting for perfect data later.

A scorecard that includes "hidden labor" (editing, linking, revisions, approvals)

Most ROI calculations for AI tools only count the obvious time savings, and they completely miss the labor that just shifts around. Don't just track how long drafting takes; track how long editing takes. Track how long internal linking takes. Count how many revision rounds a piece needs before it's ready to publish.

If a platform cuts your drafting time in half but doubles your editing time, you can easily end up with a net gain of zero, or a net loss if editing was already the bigger job. This is why some platforms like DeepSmith's Content Studio are built as a connected pipeline, designed to handle research, briefs, SEO, internal linking, and metadata in sequence, so you're not stuck doing all the optimization manually after the draft is done. Whether you use that platform or another, this "hidden labor" is the real thing you need to be measuring.
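Here's what that scorecard math looks like as a small Python sketch. The step names and minute counts are invented for illustration. Swap in whatever your own time audit shows.

```python
def net_minutes_saved(before, after):
    """Compare minutes per article across every step, not just drafting.

    Returns the total minutes saved plus a per-step breakdown, where a
    negative number means that step got slower.
    """
    steps = set(before) | set(after)
    by_step = {step: before.get(step, 0) - after.get(step, 0) for step in steps}
    return sum(by_step.values()), by_step


# Hypothetical minutes per article, before and after adopting a tool.
before = {"drafting": 120, "editing": 60, "internal_linking": 30, "approvals": 30}
after = {"drafting": 60, "editing": 120, "internal_linking": 30, "approvals": 30}

saved, by_step = net_minutes_saved(before, after)
print(f"Net minutes saved per article: {saved}")
for step, minutes in sorted(by_step.items()):
    print(f"  {step}: {minutes:+d}")
```

In this example drafting drops by an hour and editing grows by an hour, so the honest answer to "how much time did we save?" is none.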

Downstream metrics to watch (conversion rate, assisted pipeline, sales enablement usage)

Once your content is out there, the ROI story keeps going. Are readers converting? Is your content showing up in sales conversations? If your CRM has source attribution, track the assisted conversions from your organic content. If your sales team sends content to prospects, track which articles they're actually using.

These metrics take longer to show up, but they're the ones that get your budget renewed year after year. Traffic is the story you tell in month one. Pipeline contribution is the story you tell at your annual review.

What should you demand for compliance, privacy, and "don't embarrass the brand" safeguards?

A tool that clears your SEO bar but gets blocked by the legal team two weeks before you're supposed to roll it out isn't a tool; it's a sunk cost. Do this due diligence early in the process.

Data privacy and model usage: what content is sent where, and what's retained

You have to ask vendors directly: What data is transmitted to the AI model providers? Is our content used to train their models? What's your data retention policy? Who has access to our inputs and outputs?

Your content contains your product strategy, your positioning, and your unique understanding of your customer's pain. That's sensitive stuff. "We use OpenAI's API" is not a complete answer. You need written confirmation of their data usage agreements and opt-out options before you sign anything.

Claim boundaries and factuality controls: how to prevent confident overreach

AI systems are incredibly fluent, which means they sound confident even when they are completely wrong. Without guardrails, an AI platform can publish product claims you've never made, stats you can't source, and competitive comparisons that create real legal exposure.

You must require a mechanism for defining what the system can and cannot claim, with mandatory review checkpoints before anything with a factual claim goes to your CMS. This isn't paranoia. It's the difference between running a controlled publishing operation and creating a liability.
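To show what a claim boundary can look like in practice, here's a deliberately simple pre-publish check in Python. It's a sketch of the idea, not how any particular platform does it. The banned-word list, the approved-stat set, and the `flag_claims` function are all assumptions, and a check like this supports human review without replacing it.

```python
import re

# Illustrative lists only; your legal and brand teams would own the real ones.
BANNED_SUPERLATIVES = {"best", "fastest", "leading", "#1"}
STAT_PATTERN = re.compile(r"\b\d+(?:\.\d+)?%")


def flag_claims(draft, approved_stats):
    """Flag percentages that aren't pre-approved and any banned superlatives."""
    flags = []
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        for stat in STAT_PATTERN.findall(sentence):
            if stat not in approved_stats:
                flags.append(("unsourced_stat", stat, sentence))
        words = set(re.findall(r"[#\w]+", sentence.lower()))
        for word in sorted(words & BANNED_SUPERLATIVES):
            flags.append(("superlative", word, sentence))
    return flags


draft = (
    "Our platform is the fastest on the market. "
    "Teams cut review time by 40%. "
    "Setup takes one day."
)
flags = flag_claims(draft, approved_stats={"30%"})
for kind, value, sentence in flags:
    print(f"{kind}: {value!r} in: {sentence}")
```

Anything this flags should route to a reviewer before the draft can reach your CMS.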

AI detection and penalty-risk reality check: what a vendor can and cannot promise

Any vendor who promises you their content "won't be flagged as AI" or is "immune to Google penalties" is selling you something they can't possibly control. Be skeptical. What they can control is providing features that reduce generic patterns, building editorial workflows that ensure a human is always in the loop, and using structured context layers to make outputs less generic in the first place.

The question to ask is: "Walk me through exactly what happens between generation and publishing that protects our content quality." The answer should be a real workflow, not a marketing promise.

How do you evaluate multilingual and non-English SEO capabilities before you scale globally?

If your company has any plans to expand into non-English markets, you cannot skip this section. Most AI SEO tools are English-first systems with a cheap translation layer bolted on top. That approach breaks down fast.

Non-English keyword research and clustering: what "good" looks like

Real multilingual SEO requires native-language keyword data, including volumes, difficulty, and intent. It's not enough to just translate your English keywords. Ask vendors if they pull data from local search engines or if they just translate queries. The difference is critical.

Quality assurance for multilingual content (tone, terminology, localization vs translation)

Translation and localization are not the same thing. A translated article reads like a translation. A localized article reads like it was written for that market from the start. For any global market you care about, you should require native-language QA capability (either in the tool or as a defined human step), terminology management for your product names, and a way to ensure brand voice consistency across languages.

Governance for regional teams (templates, approvals, terminology locklists)

When you have multiple regional teams publishing at the same time, governance can get messy. You need the ability to create region-specific templates and approval chains, terminology locklists to prevent product names from being translated incorrectly, and a way to see what each regional team is publishing without having to call another meeting.
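A terminology locklist is easy to picture as code. The sketch below, in Python, checks that every locked term in the source article survives untranslated in the localized version. The product name and sentences are made up, and a real check would also need to handle inflected forms and casing.

```python
def locklist_violations(source, localized, locked_terms):
    """Return locked terms that appear less often in the localized text
    than in the source, which suggests they were translated or dropped."""
    return [
        term
        for term in locked_terms
        if source.count(term) > localized.count(term)
    ]


# Hypothetical product name and copy, for illustration only.
locked = ["Acme Insights"]
source = "Acme Insights connects to your CMS."
bad_es = "Acme Perspectivas se conecta a su CMS."
good_es = "Acme Insights se conecta a su CMS."

print(locklist_violations(source, bad_es, locked))
print(locklist_violations(source, good_es, locked))
```

Run as a gate in each regional approval chain, a check like this catches a mistranslated product name before it ships.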

What implementation plan makes adoption stick (and what team structure you need)?

When I see a team abandon a new tool after 30 days, it's almost never about the tool itself. It's about the lack of a system around the tool.

The 2-week pilot: tasks, sample content set, and success criteria

Structure your pilot around your real work, not some generic demo content. During week one, your goal should be to produce three articles in your actual niche, with your actual internal linking needs, targeting keywords you're actually going after. Measure the time it takes from brief to a draft that's ready for review, and count how many revisions it needed. During week two, focus on the AI visibility tracking setup. Define five prompts your buyers actually use and pull a baseline citation report.

Define your success criteria before the pilot even starts: a minimum acceptable draft quality (you can grade it 1-5), a target for time-per-article, and whether you could successfully set up citation tracking. Score every vendor against the exact same criteria.

Training and operating cadence: who owns prompts, briefs, QA, and publishing

Adoption fails when there's ambiguity. You need to explicitly assign ownership. Who maintains the context layer of voice and claims? Who reviews drafts for editorial quality? Who handles SEO and AEO checks? Who hits the publish button? On a small team, one person might wear multiple hats, and that's fine. What's not fine is confusion. Document this operating model before you roll the tool out, not after people start complaining that they don't know what they're supposed to do.

Integration checklist: CMS, analytics, GSC, existing SEO tooling, automation

Before you sign on the dotted line, you have to verify this stuff. Does it have a native integration with your CMS (WordPress, Webflow, etc.)? Does it connect to Google Search Console for keyword data? Is it compatible with Ahrefs or Semrush? Is there an API for custom work? A great platform that forces you to copy-paste into your CMS twenty times a month has just created a new bottleneck to replace the one you thought you were solving. Some platforms, for example, have features like DeepSmith's Topic Explorer, which connects keyword gap discovery directly to the content production workflow, eliminating that "research lives in a separate tab" problem that makes planning feel like you're doing everything twice.

The vendor question list and demo gauntlet (use this to make the final decision)

You've got the framework. Now here's how to run the final bake-off.

Vendor questions by category (workflow, AI visibility, governance, integrations, support)

Workflow:

- Walk me through the complete process from topic selection to a published article. Where do humans touch it, and where does the system run on its own?

- What does a "good" draft look like right after generation, before a human touches it? Can I see a real example from another B2B SaaS customer?

AI visibility:

- Which AI platforms do you track, and how often are the queries run?

- Can you show me page-level citation attribution with a trend line for one of my pages?

Governance:

- How does the platform enforce brand voice and claim boundaries when we have multiple people creating content?

- What does the audit trail look like? I want to see who approved what, and when.

Integrations:

- Does this publish directly to [your CMS], or is there a manual export step involved?

- Do you have an API? What does the documentation look like?

Support:

- What does onboarding look like in the first 30 days? Who is my point of contact?

- What's the escalation path if we feel the outputs are consistently off-brand or low quality?

The demo gauntlet: 6 scenarios the vendor must run live with your inputs

Don't let vendors use their own canned content for the demo. Before the call, send them this list:

- Your brand voice guidelines and one article you've published that you feel is a good example of your style.

- Three real keyword targets you are actively trying to rank for right now.

- The name of your current CMS (and ask if they can push directly to a staging environment).

- Five real buyer prompts you want to be able to track in AI engines.

- A URL from your site that you know needs three internal links added logically.

- A competitor's domain you want to monitor for AI citations.

Then, on the call, you just watch what happens. A platform that can perform well using your inputs is a platform that will probably work for you in production. A platform that says "oh, we'll get that set up for you during onboarding" for half the list is one that's going to disappoint you in week five.

Comparison table template: how to score finalists and document the decision

Build a simple scoring sheet with five categories, each weighted by what's most important to your team.

| Category | Weight | Vendor A | Vendor B | Vendor C |
|---|---|---|---|---|
| Content quality + E-E-A-T controls | 25% | | | |
| AI visibility tracking depth | 25% | | | |
| Workflow fit + integration | 20% | | | |
| Governance + compliance | 20% | | | |
| Implementation + support | 10% | | | |

Score each vendor 1–5 during the pilot. Multiply the score by the weight, and then total up the columns. This gives you a defensible, documented decision that you can take to your CMO or CFO without having to try and remember why you liked one tool more than another three weeks after the demos.
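If you'd rather not do the math by hand, here's a minimal Python sketch of the weighted total. The weights come from the table above. The vendor scores are invented purely to show the calculation, and `weighted_total` is a name I made up.

```python
# Category weights in whole percentages, matching the scoring table.
WEIGHTS = {
    "Content quality + E-E-A-T controls": 25,
    "AI visibility tracking depth": 25,
    "Workflow fit + integration": 20,
    "Governance + compliance": 20,
    "Implementation + support": 10,
}


def weighted_total(scores, weights=WEIGHTS):
    """Multiply each 1-5 score by its category weight and sum the results."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must add up to 100%")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for category in weights:
        if not 1 <= scores[category] <= 5:
            raise ValueError(f"score for {category!r} must be between 1 and 5")
    return sum(scores[c] * w for c, w in weights.items()) / 100


# Hypothetical pilot scores, in the same order as the weights.
vendor_a = dict(zip(WEIGHTS, [4, 3, 5, 4, 3]))
vendor_b = dict(zip(WEIGHTS, [3, 5, 4, 3, 4]))

total_a = weighted_total(vendor_a)
total_b = weighted_total(vendor_b)
print(f"Vendor A: {total_a:.2f}  Vendor B: {total_b:.2f}")
```

With these made-up scores, Vendor A edges out Vendor B at 3.85 to 3.80 even though Vendor B scored higher on AI visibility. That's the kind of trade-off the weights force you to decide on before the demos start.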

Build your enterprise AI SEO evaluation scorecard

You have the criteria, the red flags, the questions, and the pilot structure. Now, you just have to turn this into a scorecard you can use to make a decision and present it to leadership. Before you take a single demo, define your pass/fail requirements and decide how you'll weight each of the evaluation categories based on your team's biggest pain points.

Run your two-week pilot with your real inputs, and score what you actually see, not what you were promised. The leaders who get this right don't just evaluate the most platforms; they define what "worth it" means to them upfront and hold every vendor accountable to that same standard.