The ChatGPT + Google Docs + Surfer stack is a drafting toolkit: it speeds up the 20% of content production that was already manageable, while leaving the other 80% (briefing, SEO review, internal linking, images, CMS formatting, distribution) squarely on you. An integrated AI content platform collapses those steps into a pipeline where you review and approve instead of manually assembling every piece. The stack works fine at four articles a month. It starts breaking around six to eight, when the content lead's orchestration time, not the writing, becomes the bottleneck.

You have a nagging feeling your content workflow isn't working. You just haven't had a spare second to actually do the math on why. I know because I've lived it.

Every article still needs you to write the brief, review the draft, fix the SEO gaps, add the internal links, find an image, format it in the CMS, and then chase down the LinkedIn post that never seems to get written. ChatGPT sped up the first draft, sure. But it didn't touch the other six hours of work that land on your plate.

That's the real question we're tackling here. It's not "which tool writes better?" It's "which approach actually removes work from your plate when you're trying to scale?" The dirty secret of the popular ChatGPT + Google Docs + Surfer stack is that it optimized the 20% of your process that was already pretty easy, while leaving the other 80% on you. The orchestration, the QA, the publishing, and now the new panic over AI search visibility are all still your problem.

My take is this: the stack is fine when you're just starting out. But I've seen the same scaling cliff time and again. Most teams I know hit it around month three, when they try to jump from four posts a month to eight. An integrated platform wins when you need a repeatable, trackable, and governed way to produce content. This is especially true now that "are we getting cited by AI?" is a real question from leadership.

The right choice isn't about the quality of the first draft. It's about whether you're buying a set of drafting tools or a true production system.

Should you use a "ChatGPT + GDocs + Surfer" stack or an integrated AI content platform?

The honest answer? It depends on where your business is right now, not where you want it to be in two years. The stack is cheap and easy to get started with, especially if you're publishing four pieces a month with one solid writer. A platform feels expensive until you start counting your own hours.

The real decision: drafting tools vs. a production system

I see people confuse this all the time. Drafting tools help you write faster. Production systems help you publish consistently. They sound similar, but they solve completely different problems.

A drafting tool gives you a better first draft. That's it. A production system, on the other hand, is built to own the entire lifecycle. It handles the research, the brief, the draft, the QA, the optimization, the internal links, the metadata, the CMS publish, and even the social posts. It shifts your job from assembly-line worker to editorial director. You get to make the final calls instead of just clicking buttons in ten different tabs.

If your goal is to double or triple your content output with the same team, you don't need faster drafts. You need fewer handoffs. That's the lens we need to look at this through.

When the stack is rational (and when it's self-sabotage)

I'm not here to tell you the stack is always wrong. It makes perfect sense if you're publishing fewer than six articles a month. Maybe your team is small, senior, and SEO just kind of happens naturally as you edit. In that world, the coordination isn't a huge headache, and the tool costs are lower than a platform license. It works.

It becomes self-sabotage when you're trying to scale. The minute you bring freelancers into the loop or leadership starts asking about AI search citations, the cracks appear. If you're spending more than three hours per article on everything after the draft is done, the stack isn't saving you money. It's just hiding the cost in your calendar and your own burnout.

What does your workflow look like end-to-end? And where does each approach break?

This is where the rubber meets the road. Forget the feature matrix. Let's look at the actual lifecycle of an article.

The stack workflow map (research → brief → draft → score → edit → links → image → CMS → distribute)

Let's walk through the old way, step by painful step. This probably looks familiar.

Research: You're in Ahrefs or Semrush pulling keywords, checking out competitors, and opening a dozen tabs for background reading. (Estimated time: 30–60 minutes)

Brief: You switch to a Google Doc to write the brief. You're outlining the target keyword, the angle, the H2s, sources, internal link ideas, word count, and persona. If a freelancer is writing it, you have to add a ton more context, which takes even longer. (Estimated time: 45–90 minutes)

Draft: You or your writer fires up ChatGPT or Claude. You fuss with the prompt, review the output, and probably re-run a few sections. You get a draft that's maybe 70% usable and 30% fluff. (Estimated time: 60–90 minutes of active work)

Score: Now you copy that draft into Surfer or Clearscope. The tool tells you all the keywords you missed and headings you need. You or your writer go back and revise. (Estimated time: 30–45 minutes)

Edit: This is where the real time suck is. You aren't just fixing grammar. You're injecting your actual point of view, cutting the AI filler, and desperately trying to fact-check things that sound a little too good to be true. This step also includes a mess of Google Doc comments, tracking approvals in Asana or Monday, and dealing with version control. It gets so much worse with more people involved. (Estimated time: 60–120 minutes)

Internal links: You have to stop and search your own site for relevant pages, figure out where to put them, and then go back into the doc to add them. It's so tedious, but you know skipping it will hurt your rankings. (Estimated time: 30–60 minutes)

Image: You hop over to Canva or a stock photo site. Or you wait for a designer. (Estimated time: 20–30 minutes if you do it yourself)

CMS publishing: The glorious copy-paste from Google Docs into your CMS. You fix the broken formatting, add the metadata, set the slug, pick the categories, and add the alt text. (Estimated time: 30–45 minutes)

Distribution: The LinkedIn post. The newsletter blurb. The social thread. Let's be honest, this often gets skipped because you're already behind on the next article.

Total active hours per article, if we're being real with ourselves: roughly 5–9 hours once you add up the estimates above, and that's before distribution. A huge chunk of that is you, the content lead, not your writer.
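If you want to sanity-check that total, it only takes a few lines. This is a minimal sketch that sums the per-step estimates from the walkthrough; the step names are just labels I picked, and distribution is left out because it has no estimate above.

```python
# Per-step time estimates from the walkthrough, in minutes (low, high).
# Distribution is excluded because no estimate is given for it.
STEP_MINUTES = {
    "research": (30, 60),
    "brief": (45, 90),
    "draft": (60, 90),
    "score": (30, 45),
    "edit": (60, 120),
    "internal_links": (30, 60),
    "image": (20, 30),
    "cms_publishing": (30, 45),
}


def total_hours(steps):
    """Return (low, high) total hours across all steps."""
    low = sum(lo for lo, _ in steps.values())
    high = sum(hi for _, hi in steps.values())
    return low / 60, high / 60


low_h, high_h = total_hours(STEP_MINUTES)
print(f"Active time per article: {low_h:.1f} to {high_h:.1f} hours")
```

Swap in your own numbers per step. The point is less the exact total and more seeing which steps dominate it.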

The integrated platform workflow map (pipeline execution + review gates)

A good integrated platform collapses most of those steps. It creates a connected pipeline where humans show up at review gates instead of being the ones turning all the cranks.

You pick a topic (or the platform suggests one). The system then does the research, generates a brief, and drafts the article with keyword coverage and headings already built in. It runs an editorial check, humanizes the voice based on your stored brand guidelines, finds and inserts internal links, generates an image, writes the metadata, and pushes it all to your CMS.

Your job becomes this: review the draft for strategic alignment and editorial judgment, approve it, and publish it.

DeepSmith's Content Studio was built exactly for this. It's a multi-agent pipeline where each step informs the next. You end up reviewing a near-final draft instead of managing a nine-step production nightmare. The human review is still critical (you still need to check claims and add your unique spin), but you're reviewing, not assembling.

The workflow map becomes: trigger → pipeline executes → you review → publish. Four steps. Not nine.

Bottleneck audit: the "last 40%" that stacks don't remove

Here's the pattern I see over and over with teams using the stack. ChatGPT genuinely helps with the first draft, and for a month, everyone feels like a hero. Then they try to double their output.

Suddenly, the bottleneck isn't the draft anymore. It's everything else. The briefs still take an hour. The SEO review still takes 45 minutes. Internal linking is still a 45-minute chore. Editing still takes 90 minutes.

The stack just moved the bottleneck. It didn't solve it. It left the orchestration bottleneck, all the managing and reviewing and chasing across a half-dozen tools, completely untouched. And when you double your volume, that orchestration work doubles right along with it. That's the scaling cliff I was talking about. It's the point where adding more work actually makes the content lead's job grow faster than the team's capacity.

How do you compare total cost of ownership (TCO) without fooling yourself?

The monthly subscription fees are a decoy. The real cost is in your team's hours, especially yours.

Cost categories to include (subscriptions, people hours, management overhead, churn risk)

When I work with teams on this, we build a TCO model that includes these things:

- Tool subscriptions: Ahrefs/Semrush + Surfer/Clearscope + ChatGPT + design tools. For a lot of teams, this is easily $400–$700 a month before you even think about a platform.

- Content lead time: Your hours. The time you spend on briefs, SEO reviews, internal linking, formatting, and just managing the whole pipeline. This is the one everyone forgets to count.

- Writer cost: Your freelancer or in-house writer salary, allocated per article.

- Management overhead: Time spent briefing freelancers, reviewing their work twice (once for quality, again for SEO), and chasing down assets. It adds up.

- Rework: We've all been there. The article that comes back from a freelancer so bad it needs a total rewrite. This happens more than anyone wants to admit.

- Opportunity cost: What about all those great topics sitting on your backlog because you just don't have the bandwidth? Those have real business value.

- Churn risk: If your go-to freelancer quits mid-sprint, what does that cost you in delays and having to re-brief a new person?

A simple worksheet-style TCO calculation you can run in 15 minutes

Do this right now. Take your last five articles. For each one, write down:

- Hours you (the content lead) personally spent on it. Be honest. Include the brief, review, SEO, linking, publishing, and distribution.

- Hours the writer spent on it, including all their revisions.

- Any other time spent by designers or ops people.

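Now, multiply each person's hours by their hourly rate. For your own time, a rough way is your salary ÷ 2,080 working hours a year, plus a margin for benefits if you want a fully loaded figure. For the freelancer, use their actual rate. Then add your monthly tool costs divided by the number of articles you publish each month.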
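Here's the worksheet as a minimal Python sketch, in case a script is easier than a spreadsheet. Every number in the example is a made-up placeholder (the $100,000 salary, the $60 and $50 hourly rates, the $500 tool bill), and the function name is my own, so plug in your real figures.

```python
def cost_per_article(lead_hours, writer_hours, other_hours,
                     lead_rate, writer_rate, other_rate,
                     monthly_tool_cost, articles_per_month):
    """Labor for one article plus that article's share of monthly tool costs."""
    labor = (lead_hours * lead_rate
             + writer_hours * writer_rate
             + other_hours * other_rate)
    tools = monthly_tool_cost / articles_per_month
    return labor + tools


# Hypothetical inputs: replace with averages from your last five articles.
lead_rate = 100_000 / 2080  # salary divided by 2,080 working hours a year

example = cost_per_article(
    lead_hours=4, writer_hours=5, other_hours=0.5,
    lead_rate=lead_rate, writer_rate=60, other_rate=50,
    monthly_tool_cost=500, articles_per_month=4,
)
print(f"Cost per article: ${example:,.2f}")
```

Run it once with your current volume and once with the volume you want to hit. The gap between those two numbers is the conversation to have with leadership.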

Most teams who do this exercise are shocked. They find their true cost per article is often in the $400–$900 range. At four articles a month, maybe that feels okay. But when you want to do twelve a month, you're looking at roughly $4,800–$10,800 a month, most of it labor. And you, the content lead, are the breaking point.

Now, compare the platform license against that number. If the platform cuts your personal time from four hours an article to just one, the savings are very real.

The scaling cliff: why cost per article rises with a stack at higher volume

At low volume, you can spread your fixed costs (tools, your own management time) across a few articles. But when you try to scale up with a stack, the variable costs, like your hours per article and rework, scale right along with it. Sometimes they scale even faster. You don't get more efficient at high volume. You just get more of the same manual work.

Integrated platforms flip this script. The cost per article actually drops as you do more, because the pipeline automation doesn't change whether you publish eight articles or fifteen. Your oversight time per article stays about the same. That's the real economic argument for a platform. It's not that it's cheaper per seat, but that it's cheaper per article at the volume you actually want to hit.
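To make the cliff concrete, here's a toy model of the content lead's cost per article under each approach. Treat it as an illustration under stated assumptions, not a benchmark: the $500 tool bill, the $1,500 license, the $50 hourly rate, and especially the 5% coordination drag per extra article are all hypothetical numbers I chose to show the shape of the curve. Writer cost is left out of both sides.

```python
def stack_cost(n, tool_cost=500.0, lead_hours=4.0, lead_rate=50.0, drag=0.05):
    """Content-lead cost per article with a stack at n articles a month.

    Assumption: lead hours per article grow by `drag` for every article
    beyond four, to model coordination overhead at higher volume.
    """
    hours = lead_hours * (1 + drag * max(0, n - 4))
    return tool_cost / n + hours * lead_rate


def platform_cost(n, license_cost=1500.0, review_hours=1.0, lead_rate=50.0):
    """Content-lead cost per article with a flat monthly license (assumed)."""
    return license_cost / n + review_hours * lead_rate


for n in (4, 8, 12, 15):
    print(f"{n:>2} articles: stack ${stack_cost(n):7.2f}   "
          f"platform ${platform_cost(n):7.2f}")
```

With these placeholder inputs the stack is cheaper at four articles and the platform is cheaper from eight onward. Your crossover point will depend entirely on your own hours and pricing, which is why the worksheet matters more than the model.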

Which option produces better SEO outcomes in practice (not in theory)?

Both approaches can lead to well-optimized content. The real question is, how consistently can you do it, and how much pain does it cause to get there?

Keyword coverage and heading structure: who enforces it, when, and how reliably?

With the stack, optimization is a chore you do after the draft is written. You run it through Surfer and then fix what it tells you to fix. This can mean multiple back-and-forths, especially with freelancers. You're always reacting.

Integrated platforms bake optimization in from the start. The keyword targets, related terms, and heading structure are all part of the initial brief that feeds the pipeline. The draft shows up already optimized because the system was built to score well from the beginning. You still need to review it, of course, but you're validating the optimization, not adding it in after the fact.

Internal linking and sitemap awareness: manual chore vs. automated step

Internal linking is one of those high-impact SEO tasks that I see get skipped all the time because everyone is in a rush. It's a perfect example of a predictable bottleneck a system should solve.

DeepSmith handles this automatically. During article generation, the pipeline scans your sitemap and inserts contextually relevant internal links. Is it perfect every time? No. You should still review the placements. But you're editing a list of suggestions instead of building that list from scratch. For teams doing ten or more articles a month, that's hours back every single week.
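To show what "scan the sitemap and suggest links" can mean at its simplest, here's a rough sketch. To be clear, this is not DeepSmith's implementation, which I haven't seen; it's a naive keyword-overlap heuristic, and the function names, stopword list, and example.com URLs are all invented for illustration. It reads a standard sitemaps.org XML file and ranks pages whose URL slug shares words with the draft.

```python
import re
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
# Small, hypothetical stopword list; extend it for your own site.
STOPWORDS = {"the", "a", "an", "and", "or", "to", "of", "for", "in", "on", "with"}


def sitemap_urls(sitemap_xml):
    """Extract every <loc> URL from a sitemaps.org-style XML string."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip()
            for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def slug_keywords(url):
    """Split the last path segment of a URL into lowercase keywords."""
    slug = url.rstrip("/").rsplit("/", 1)[-1]
    words = re.split(r"[^a-z0-9]+", slug.lower())
    return {w for w in words if w and w not in STOPWORDS}


def suggest_links(draft, sitemap_xml, min_overlap=2):
    """Return URLs whose slug shares at least min_overlap words with the draft."""
    draft_words = set(re.findall(r"[a-z0-9]+", draft.lower()))
    scored = []
    for url in sitemap_urls(sitemap_xml):
        overlap = slug_keywords(url) & draft_words
        if len(overlap) >= min_overlap:
            scored.append((len(overlap), url))
    scored.sort(key=lambda item: (-item[0], item[1]))  # best match first
    return [url for _, url in scored]


SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/blog/content-brief-template</loc></url>
  <url><loc>https://example.com/blog/internal-linking-guide</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
</urlset>"""

DRAFT = ("A standard content brief template keeps freelancers aligned, "
         "and an internal linking pass should follow every draft.")

for url in suggest_links(DRAFT, SAMPLE_SITEMAP):
    print(url)
```

Even a crude script like this turns "search the site from memory" into "review a shortlist." A real system would need to understand context and pick anchor text, which is where the human review still earns its keep.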

Metadata, formatting, and CMS publishing: where errors and delays creep in

I can't tell you how many times I've seen a simple copy-paste from Google Docs into a CMS create a huge mess. You get broken formatting, skipped metadata, missing alt text. These little mistakes add up and create a massive cleanup project down the road.

A native CMS integration, where the platform pushes directly to WordPress or Webflow, gets rid of this entire step. It includes all the metadata as part of the package. It's not glamorous, but it's a genuine time-saver.

How do you manage AI content risk at scale (quality, ethics, plagiarism, and 'AI detection')?

Doing this at scale multiplies your risk. Publishing one AI-assisted article with a questionable fact is a small problem. Publishing twelve of them a month with no real QA process means that risk is live twelve times over.

The 4 risks to plan for (generic sameness, factual drift, plagiarism, brand/claim violations)

Generic sameness is the most common issue I see. AI drafts are trained on the internet, so they tend to write the most average, boring version of any topic. If you don't intervene, your articles will sound exactly like everyone else's. The fix isn't a better prompt. It's an editorial process that forces you to add your unique point of view before you hit publish.

Factual drift is scarier. This is when the model just makes things up, like statistics, product features, or process steps, because they sound plausible. At low volume, a human expert can catch this. But at scale, you need a systematic way to review claims.

Plagiarism risk is lower than people think with the new models, but it's not zero. Running your final draft through a plagiarism checker isn't paranoia. It's just good hygiene.

Brand and product-claim violations can be incredibly costly, especially for SaaS companies. It's so easy for a writer (human or AI) to describe a feature you don't have, or make a comparison that opens you up to legal trouble. This risk gets much bigger when you're working with freelancers and trying to move fast.

A practical QA workflow: what humans must verify before publish

Before any article goes live, someone needs to run this simple checklist:

- Sources and factual claims: Does every statistic or definitive statement have a real source? If you can't find one, you have to remove it or soften the language.

- Product claims: Does every mention of your product (or your competitor's) match your official documentation?

- Differentiation: Does this article have a real point of view? Or is it just a summary of the top search results? If it's the latter, you need to add one.

- Voice: Read the first three paragraphs out loud. Does it sound like you, or does it sound like a robot?

This checklist should only take 15–20 minutes if the draft is solid. It can take 90 minutes if the draft is a mess.

Platforms that store your brand context, like DeepSmith's Deep IQ, can help a lot here. It holds your positioning, personas, and product claims as structured data, so the drafts it produces are less likely to have these violations in the first place. You're still the final check, but you're not starting from scratch every time.

"AI detection penalties" vs "helpful content": how to think about the real failure mode

Can we all agree to stop worrying about "AI detection" tools? Google's guidance is clear, even if their enforcement is messy. Content that is helpful, demonstrates expertise, and provides real value will perform well, regardless of how it was made. Content that is generic, thin, and just stuffed with keywords won't.

The real failure isn't "Google detected AI." The real failure is "this content is completely indistinguishable from fifty other articles on the same topic." That's an editorial problem, not a tool problem. It's solved by having a strong QA process, not by avoiding AI.

If leadership cares about AI search visibility, which approach helps you win citations, and prove it?

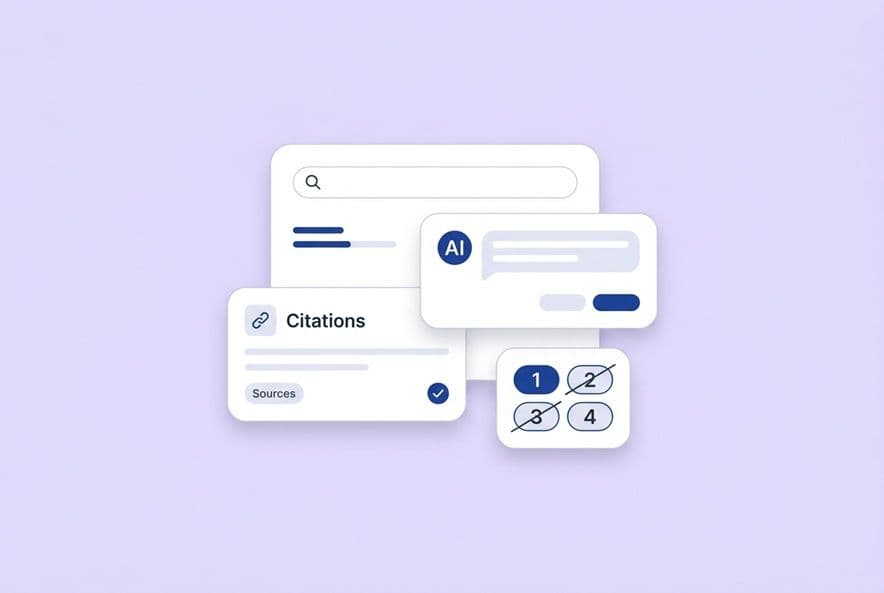

This is where the stack hits a brick wall. There is no ChatGPT plugin or Surfer module that can track whether your content is getting cited in AI answers. It's a totally different class of technology.

What AEO requires that SEO tools don't cover well

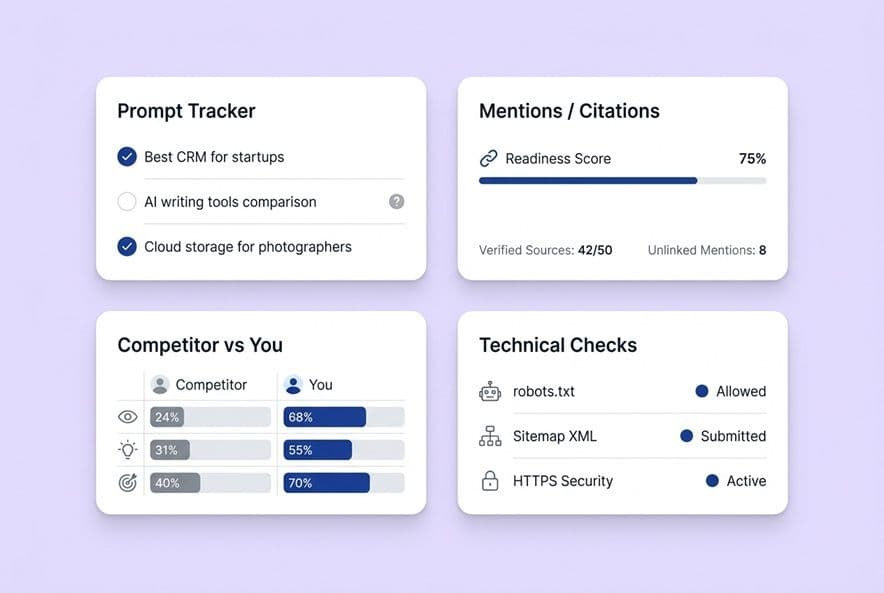

Answer Engine Optimization (AEO) is about getting your brand cited when your buyers put questions to AI platforms, not just ranking in a list of blue links. To do this, you need to track very specific things: which prompts your brand shows up for, how often you're mentioned versus actually cited (a huge difference), which of your pages are driving those citations, and how you stack up against your competitors.

None of this data lives in Ahrefs, Semrush, or Surfer. Those tools are built for the old world of SERP tracking. Measuring AI citations is a completely new layer of data. Without it, you're flying blind on the one channel your leadership is probably asking about every week.

What to track weekly: prompts, citation rate, page winners, competitor movement

A real AEO measurement process looks like this. You define the 10-20 prompts your buyers are actually typing into ChatGPT, Perplexity, Gemini, and Google's AI results. Then, every week, you track your brand's mention rate and citation rate for each prompt on each platform. You identify which of your pages are winning, and you watch whether that number is going up or down. You also monitor which competitors are winning citations on prompts you haven't covered yet.
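If you're logging these checks by hand for now, the weekly math is simple. Here's a minimal sketch, assuming you record one observation every time you run a prompt on a platform. The `Observation` fields and the definitions baked into them (mentioned means your brand is named in the answer, cited means the answer links to or attributes one of your pages) are my own working assumptions, and the sample prompt is invented.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    prompt: str
    platform: str
    mentioned: bool  # assumption: brand is named in the answer
    cited: bool      # assumption: answer links to or attributes one of your pages


def weekly_rates(observations):
    """Return {(prompt, platform): (mention_rate, citation_rate)}."""
    counts = defaultdict(lambda: [0, 0, 0])  # runs, mentions, citations
    for obs in observations:
        bucket = counts[(obs.prompt, obs.platform)]
        bucket[0] += 1
        bucket[1] += obs.mentioned
        bucket[2] += obs.cited
    return {key: (mentions / runs, cites / runs)
            for key, (runs, mentions, cites) in counts.items()}


# Hypothetical week of checks for one prompt on one platform.
week = [
    Observation("best ai content platform", "ChatGPT", True, True),
    Observation("best ai content platform", "ChatGPT", True, False),
    Observation("best ai content platform", "ChatGPT", False, False),
    Observation("best ai content platform", "ChatGPT", True, False),
]

for (prompt, platform), (mention, cite) in weekly_rates(week).items():
    print(f"{platform} | {prompt}: mentioned {mention:.0%}, cited {cite:.0%}")
```

Keeping mention rate and citation rate as separate columns is the important design choice here. A brand that's mentioned often but rarely cited has a different problem than one that doesn't show up at all.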

This is exactly what DeepSmith's AI Visibility suite is for. It tracks your mentions and citations across five AI platforms, shows you which pages are earning them, and lets you monitor competitors. For teams that were just manually typing prompts into ChatGPT once a quarter, this is a massive leap in capability.

How content needs to change to earn AI citations (structure, claim clarity, comparison tables)

AI engines tend to cite content that is very clearly structured, fact-based, and directly answers a question. A Princeton-led study on generative engine optimization reported that adding statistics, quotations, and cited sources improved a page's visibility in AI-generated answers by roughly 30–40% in the researchers' tests. In practice, this means using explicit headers that match the questions your buyers are asking. It means including specific claims with sourced evidence, using comparison tables to show trade-offs, and writing conclusions that give a clear recommendation instead of hedging.

This isn't that different from good SEO writing, but the emphasis on clean formatting is higher, and the discipline of answering the question in the first paragraph is non-negotiable. Every article you produce should be checked against the prompts you're tracking. If a piece covers a topic that matches one of those prompts, you need to make sure it's optimized to be cited.

How should you evaluate an integrated platform (or harden your stack) before committing?

Vendor questions that reveal the truth (automation depth, governance, integrations, collaboration)

When you're watching a platform demo, don't just sit there and nod. You need to ask some hard questions:

- "Show me every single step the system takes, from topic to publish. Where exactly does the human need to step in?" You want specifics, not vague promises of "end-to-end automation."

- "How does the system maintain our brand voice? Where does our brand context actually live?" If the answer is just a PDF style guide you upload, run. Look for structured data.

- "Show me the internal linking it generates on a real site with over 200 pages." Don't let them demo on a small, clean site.

- "What does your AEO tracking cover? Which platforms, what metrics, and how often is the data refreshed?"

- "How does your CMS integration handle our specific custom fields, categories, and metadata?" Their demo environment is clean. Yours isn't.

Vague answers on automation, or a "brand voice" that's just a simple tone setting, are huge red flags.

Adoption plan: how to switch without pausing publishing

My advice is not to try to migrate everything at once. Start by piloting the new platform on 2–3 lower-stakes topics. Run it in parallel with your current stack. Compare the time you invest and the quality of the output. This will help you figure out your new review process before you move your whole calendar over.

If you pilot DeepSmith, do the Deep IQ setup first. Load your positioning, product details, and brand voice into the system before you start generating content. I've seen teams skip this step, get a generic first draft, and lose faith. The problem wasn't the platform; it was that they didn't give it enough context.

After a few weeks, once you've validated your process and confirmed the integrations work, you can expand to your full production cadence.

If you stay with the stack: the minimum viable process to make it scalable

If a platform isn't in the cards for you right now, you can still make your current stack more durable. Here's the bare minimum:

- Standardize your brief template. Create one master Google Doc template with every field you need. Make it non-negotiable. The quality of the brief is the single biggest lever you have on the quality of the output.

- Build a QA checklist. Use the four-point check from earlier in this article and make it the final gate before anything gets published.

- Assign internal linking ownership. Someone has to own it. Put it on a checklist. It's not something that happens "when there's time."

- Create a distribution trigger. The same day an article is published, a task should be automatically created for whoever owns social. It happens every single time, without fail.
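The distribution trigger is the easiest of these to automate. Here's a minimal sketch of the logic, assuming your CMS or project tool can call a script when an article goes live. The `Task` shape, the function name, and the owner value are hypothetical, and this doesn't call any real project-management API; it only builds the task list you'd hand to one.

```python
from dataclasses import dataclass
from datetime import date

# Channels taken from the distribution step earlier in this article.
CHANNELS = ("LinkedIn post", "Newsletter blurb", "Social thread")


@dataclass
class Task:
    title: str
    owner: str
    due: date


def distribution_tasks(article_title, published_on, owner):
    """Create one same-day task per channel for a newly published article."""
    return [Task(f"{channel}: {article_title}", owner, published_on)
            for channel in CHANNELS]


# Hypothetical example: article published on 6 January 2025, owned by "sam".
tasks = distribution_tasks("Stack vs. platform", date(2025, 1, 6), "sam")
for task in tasks:
    print(f"{task.due.isoformat()} | {task.owner} | {task.title}")
```

Whatever tool creates the tasks, the rule to keep is that the due date equals the publish date. That's what stops distribution from sliding behind the next article.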

This process won't let you scale to 12+ articles a month forever. But it will make six articles a month reliable and defensible. And it will buy you the time to evaluate a platform with real data instead of just vendor promises.

Build a workflow you can scale (without becoming the bottleneck)

If you're still the one writing briefs, doing manual internal linking, and watching social distribution slip through the cracks, you have a production system problem, not a content strategy problem. The stack helped you draft faster. An integrated platform is what helps you scale without your own workload scaling right along with it.

Go run the TCO calculation from this article. If you're spending more than three of your own hours on every piece after the draft is done, you're the bottleneck. And the stack you're using can't solve that.

To see what replacing that manual orchestration feels like, pilot DeepSmith's Content Studio on a couple of your backlog topics. Compare the actual workflow and your time investment against your current process. Don't compare features, compare the workflow. That's the only test that matters.