You know exactly where the time goes. The brief took an hour. The draft came back half-baked. You then spent another 45 minutes fixing headers, cramming in keywords, doing internal linking by hand, finding a cover image, and wrestling with the CMS just to get it published. By then, you were already behind on the next one. Distribution? Never happened.

That's not a writing problem. That's an assembly line problem. And just plopping ChatGPT in the middle of that mess doesn't fix the assembly line. It just gives you a faster, blander first draft that still needs the same multi-hour cleanup on the other side.

This article is about something different. It's about what it looks like to redesign the production system itself, not just swap one tool for another. My take is simple: true consolidation means you collapse research, briefing, drafting, optimization, governance, and publishing into one connected workflow. You don't just add an "AI writer" to your existing chaos. The platforms that win aren't the ones with the slickest demo draft. They're the ones that kill the handoffs.

Here's how I think about evaluating that, proving it to your team, and rolling it out without it becoming another tool nobody uses after month two.

What does a "consolidated content stack" actually replace? (And what doesn't it replace?)

The baseline workflow you're replacing: Docs (drafting) + Ahrefs (research) + Surfer (optimization) + "everything else"

Let's be honest about what the work actually looks like. If you're running a typical content operation at a Series A or B company, your day probably feels a lot like this:

Research: You open Ahrefs, pull a mountain of keyword data, and start sorting. You copy it all into a spreadsheet, spending a good 30 to 45 minutes trying to find a decent keyword cluster. Maybe it turns into a brief. More likely, it dies in a forgotten tab.

Briefing: You write the brief yourself in a Google Doc. You list the target keyword, secondary keywords, suggested H2s, competitors to look at, and notes on tone. That's an hour of your life, gone. Minimum.

Drafting: The writer opens your brief in a separate tab and gets to work. You get a draft back in a few days. Sometimes it's great. Often, it misses the mark.

SEO review: Now you open Surfer or Clearscope, paste in the draft, and check the score. You leave comments about missing keywords or entire sections that need to be added. Then it's either back to the writer for another round, or you just sigh and fix it yourself.

Internal linking: Time to manually search your own site for 3 to 5 relevant pages and find a natural place to stick a link. This is another 30 to 60 minutes per article if you're actually trying to do it well.

The rest: You still have to find or make a cover image. Format the whole thing for WordPress. Write the meta title and description. Push it to the CMS and manually schedule it.

Distribution: You had big plans to write a LinkedIn post, a newsletter blurb, and a social media thread. Realistically, the article goes live and you're already drowning in the next brief.

That's your assembly line. Count the open tabs. The handoffs. The manual copy-pasting. Count the important things that always fall off the end.

Consolidation levels (pick your scope): research-only vs production pipeline vs publish-and-distribute

Not everyone is ready to consolidate the whole stack on day one. In my experience, trying to force that is the fastest way to fail. It's better to think about this in levels.

Level 1 — Research and planning only. You use a platform for keyword clustering, analyzing your coverage gaps, and adding topics to a backlog. Briefing and drafting still happen in Docs. This is a low-risk way to start, especially if your team is skeptical.

Level 2 — Research through draft, with SEO built in. Here, the platform handles topic discovery, creates the brief, and generates a draft that already has keywords, structure, and internal links embedded. A human reviews it before publishing. This is where most teams I talk to see the biggest time savings.

Level 3 — Full production pipeline including publishing and distribution. The platform generates the article, cover image, metadata, and assets for social channels, then publishes everything directly to your CMS. Your job becomes a final sanity check, not a series of manual assembly steps.

You're not really consolidating until you hit at least Level 2. Level 3 is where the math for a small, lean team really starts to click. For instance, some platforms (like our own DeepSmith Content Studio) run the whole pipeline: research, briefing, drafting, QA, voice humanization, internal linking, metadata, and publishing. Your team reviews the output instead of building it from scratch across a dozen tabs. That's the kind of architecture you should be looking for.

What consolidation won't fix by itself (strategy, positioning, subject-matter expertise, approvals)

Let's be real. A consolidated platform makes you faster at executing a strategy you already have. It doesn't create the strategy for you.

If you don't know which topics matter to your buyers, no platform will magically figure that out. If your product positioning is a mess, the AI drafts will be a mess. If your subject matter experts never have time to add their insights, the content will stay shallow and generic. And if your approval process is a three-week game of email tag, a new tool won't fix that. It will just expose the bottleneck more quickly.

Consolidation is for the manual labor that happens between "we know what to write" and "it's published." You still own the strategy, the point of view, the depth, and the final human sign-off.

It's also not for everyone. Consolidation can be a bad fit if your content requires deep investigative work for every article, or if you operate under intense legal scrutiny where every word has to be custom-written. It's also tough if your own processes are too chaotic to define the inputs a platform needs. In those cases, you might be better off using AI as a targeted assistant for outlines or first drafts, but keeping the rest of your high-touch workflow.

Where the Google Docs + Ahrefs + Surfer workflow actually breaks (and why it hurts)

The hidden time sinks: briefs, rewrites, internal linking, formatting, images, CMS publishing

The writing itself is rarely the biggest bottleneck. What really breaks your process is everything around the writing. I see it all the time. Teams find that the "last 40%" of the work, everything after a first draft exists, takes just as long as writing the damn thing.

Internal linking alone is 30 to 60 minutes of tedious work per article if you're doing it right. Meta descriptions, cover images, CMS formatting, and that final QA pass each tack on another 15 to 30 minutes. A good brief that a writer can actually use? That's an hour of your time, easily.

Add that up across 8 to 12 articles a month, and you've just found the reason you never get to distribution. It's why your content calendar is always behind schedule, and why you're personally stuck doing tasks that shouldn't require a senior leader's attention.
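
If you want to see that math for your own team, here's a minimal sketch using the per-article estimates above. The task names are my own labels, and I've treated the brief as a flat 60 minutes, which is an assumption on the low side.

```python
# Per-article overhead in minutes as (low, high), taken from the estimates above.
# The brief is assumed to be a flat hour; adjust every number to your own team.
OVERHEAD_MINUTES = {
    "brief": (60, 60),
    "internal_linking": (30, 60),
    "meta_description": (15, 30),
    "cover_image": (15, 30),
    "cms_formatting": (15, 30),
    "final_qa": (15, 30),
}


def monthly_overhead_hours(articles_low: int, articles_high: int) -> tuple[float, float]:
    """Return (low, high) hours per month spent on work around the writing."""
    per_article_low = sum(low for low, _ in OVERHEAD_MINUTES.values())
    per_article_high = sum(high for _, high in OVERHEAD_MINUTES.values())
    return (per_article_low * articles_low / 60, per_article_high * articles_high / 60)


low, high = monthly_overhead_hours(8, 12)
print(f"{low:.0f} to {high:.0f} hours per month")  # prints "20 to 48 hours per month"
```

Swap in your own timings before you show this to anyone. The point is to make the overhead visible as a number, not to defend these specific ranges.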

Why "AI draft in ChatGPT" doesn't solve the workflow (and can make it worse)

The impulse to just use ChatGPT makes sense, but it solves one tiny step while leaving the rest of your broken workflow intact. The draft it spits out ignores your keyword brief. It has no idea what you've already published, so it can't suggest internal links. It just makes up product features, statistics, and quotes with the unearned confidence of a college freshman.

So now you have a faster draft that needs even more cleanup. The SEO review, the internal linking, the formatting, the image sourcing, the publishing, all those steps are still there. But now you also have to spend extra time fact-checking hallucinations and stripping out the robotic phrasing before you can even think about putting your brand's name on it. A truly connected workflow solves this problem at an architectural level, not with a faster prompt.

The quality failure modes: generic structure, keyword misuse, claim drift, inconsistent voice

When you're looking at any AI-assisted workflow, don't just look at the best-case demo. Look for what breaks when you try to do it at scale.

Generic structure: Every article has the same boring intro formula, the same H2 rhythm, and the same "in conclusion" sign-off. Your readers feel it even if they can't name it.

Keyword misuse: The target keyword is in there, but it's forced into sentences where it makes no sense. The article might rank for the keyword, but it doesn't actually satisfy the searcher's intent.

Claim drift: The AI confidently states an incorrect product feature, cites a statistic from a non-existent source, or makes a competitive claim that would give your legal team a heart attack. You'll catch this when you're doing one article. You won't catch it when you're trying to publish ten.

Inconsistent voice: The first article sounds like your brand. By the eighth, it sounds like every other generic SaaS blog on the planet. The voice was defined in a style guide PDF that the system, of course, never read.

These aren't just edge cases. They are the predictable, frustrating outputs of a disconnected workflow trying to run at scale.

How platforms consolidate "Ahrefs → brief → Surfer" into one system

Topic discovery and clustering: replacing "keyword lists" with coverage-driven planning

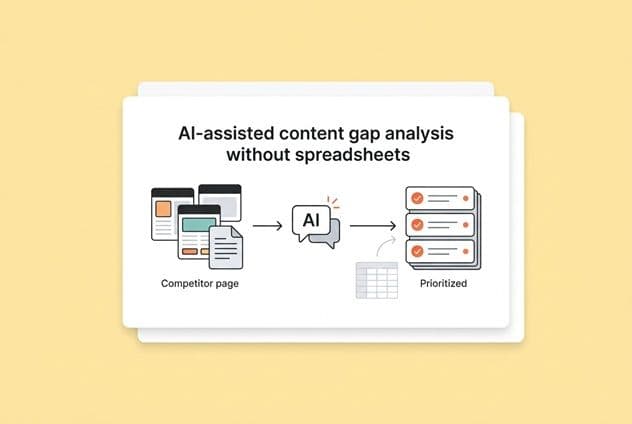

The problem with traditional keyword research isn't the data. Ahrefs and Semrush are fantastic tools. The problem is that the research lives in one place and the work happens somewhere else. A spreadsheet with 200 keywords isn't a content plan. It's a to-do list that's going to die in a Google Drive folder.

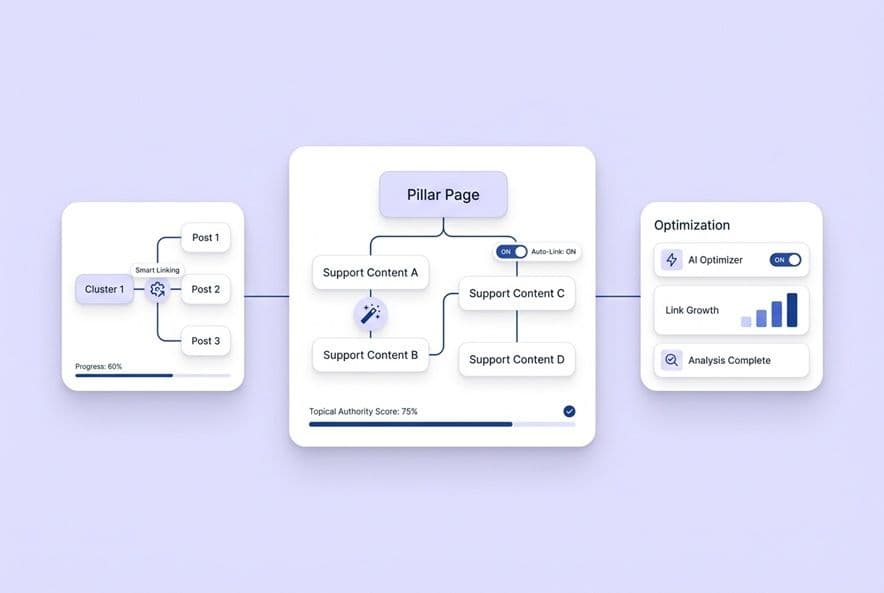

A consolidated platform changes this model. Instead of exporting a list of keywords and then manually trying to decide what to write, you work with a topic clustering engine. It groups keywords by intent, shows you where you have coverage gaps, and lets you push a topic directly into production, all from the same screen.

What you want is topic clustering that feeds a production queue, not just another research tool that hands off to a separate workflow. DeepSmith Topics, for example, does this by clustering keywords, surfacing your gaps, and letting you move a topic right into content production. Your research shouldn't die in a spreadsheet.
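
To make "coverage gaps" concrete, here's a rough sketch of just the gap-finding step. It assumes each keyword already has a topic label (from your research tool or assigned by hand), and the sample keywords and topics are made up for illustration. It isn't how any particular platform does its clustering.

```python
from collections import defaultdict


def find_coverage_gaps(keywords, published_topics):
    """Group (keyword, topic) pairs by topic and return topics with no published page."""
    clusters = defaultdict(list)
    for keyword, topic in keywords:
        clusters[topic].append(keyword)
    return {topic: kws for topic, kws in clusters.items() if topic not in published_topics}


# Hypothetical sample data: (keyword, topic label) pairs.
sample_keywords = [
    ("content brief template", "content briefs"),
    ("how to write a content brief", "content briefs"),
    ("internal linking strategy", "internal linking"),
    ("internal links seo", "internal linking"),
]
already_published = {"content briefs"}

print(find_coverage_gaps(sample_keywords, already_published))
```

Whatever comes out of a step like this is your production queue: topics with search demand and no page yet.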

Brief generation: turning keyword intent + SERP patterns into a structured plan

A good AI-generated brief does more than just list keywords and a word count. It should analyze the search results, suggest an angle based on what's already ranking, propose an H2 structure that covers the topic properly, and even flag related topics and internal linking opportunities.

The practical question you should ask during any demo is this: "What does your brief actually contain, and does it reduce the amount of guesswork my writers have to do?" If the brief is just a keyword and a prompt, it's not really a brief. If it's a structured editorial plan with intent, angle, and competitive context, then it's actually replacing the hour you currently spend writing briefs by hand.

In-draft optimization: structure, semantic coverage, and section-by-section answerability

This is where most "AI writing tools" show their true colors. They produce a block of text and then run a scoring pass afterward. That's just Surfer-in-a-box, not real consolidation.

Real in-draft optimization means the system is building semantic keyword coverage into the article's structure as it writes. Section by section, it should be asking itself: does this heading address the user's question? Does this paragraph include the right supporting terms? Is the content actually answering the query, or is it just stuffing in keywords?

The goal should be a draft where your SEO review is just a quick confirmation, not a major revision cycle. If your editor is still heavily rewriting the structure for keyword coverage after the AI draft is done, the optimization isn't truly embedded. It's just a fresh coat of paint.

AEO considerations: writing for extraction, citations, and AI answers

Your leadership is probably starting to ask about AI search visibility. The question is no longer just "does this rank on Google?" It's now "does this get cited when someone asks ChatGPT or Perplexity a question about our industry?"

Content that gets cited by AI tends to have a few things in common. It gives clear, direct answers to specific questions, and it uses structured data and well-organized headers to signal what each section is about. These aren't afterthoughts you can sprinkle on later. They need to be built into the drafting process from the start. When you're evaluating platforms, ask if this kind of AEO-friendly structure is native to their writing pipeline or if it's just another thing you have to fix manually.

How consolidated platforms replace Google Docs: collaboration, QA, and governance

Multi-role workflows: writer, editor, SEO reviewer, SME, approver—who does what where?

Let's be honest: the reason Google Docs is so sticky isn't its features. It's that everyone on your team already knows how to use it. Any platform that wants to replace it has to be just as simple for collaboration. If it's not, your team will just keep doing their final edits in Docs, turning your shiny new platform into just another input.

Before you choose a platform, map out how each person on your team will interact with it:

- Writers need to be able to edit, respond to comments, and see why the system made certain choices.

- Editors need to be able to review and approve work without having to rebuild the draft in another tool.

- SEO reviewers need to see the keyword coverage decisions, not just the final text.

- SMEs need a dead-simple way to jump in and add their expert comments without a learning curve.

- Approvers need a clean, final version to review, not a mess of seven different draft versions.

A platform that truly consolidates will have a clear, easy path for every one of these roles inside a single interface. If any step requires someone to export the work back to Docs, you haven't replaced Docs. You've just added a step before it.

Governance that actually prevents drift: voice, templates, claim boundaries, and review checklists

A style guide PDF doesn't enforce anything. It's a document that everyone forgets about after their first week. What actually prevents your brand voice from drifting and stops claim errors at scale is structured context that the system reads every single time it writes.

Look for a context layer that stores your brand voice, product details, and claim boundaries as structured data that actively shapes every output. This is what stops article 47 from sounding like it was written by a completely different company than article 3. It's also what keeps the AI from inventing product features that don't exist or citing stats that aren't real. A system with this kind of layer gives you drafts that are already inside your guardrails, not drafts you have to painstakingly audit for basic accuracy.

This is the governance piece most platforms skip. They give you a little "style settings" box and call it a day. Real governance means the system can't go off the rails, not that you've just written the rails down somewhere.
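
Here's roughly what "structured context" can look like next to a style guide PDF. This is a sketch under my own assumptions: the field names and the example phrases are hypothetical, and a real context layer shapes the draft while it's being written, where this only shows the data shape plus a simple after-the-fact check.

```python
from dataclasses import dataclass, field


@dataclass
class BrandContext:
    """Hypothetical structured context a system could read on every run."""
    voice_rules: list[str] = field(default_factory=list)
    approved_features: set[str] = field(default_factory=set)
    banned_phrases: list[str] = field(default_factory=list)


def find_banned_phrases(draft: str, context: BrandContext) -> list[str]:
    """Return every banned phrase that appears in the draft (case-insensitive)."""
    lowered = draft.lower()
    return [phrase for phrase in context.banned_phrases if phrase.lower() in lowered]


context = BrandContext(
    voice_rules=["Write in second person", "No jargon without a definition"],
    approved_features={"topic clustering", "native CMS publishing"},
    banned_phrases=["industry-leading", "guaranteed rankings"],
)
print(find_banned_phrases("Our Industry-Leading platform does it all.", context))
```

The difference from a PDF is that this is data a system can check on every single draft, not a document someone has to remember to open.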

Editorial QA realities: hallucinations, stale info, and "sounds right" errors

No platform is going to eliminate the need for human review. So let's be precise about what that review should focus on. AI systems make things up. They cite sources that don't exist, state statistics that aren't real, and describe product features with a confident-but-wrong flair.

Your editorial QA should be laser-focused on these high-risk zones: the factual accuracy of any data point, the accuracy of any product feature description, any claims you make about competitors, and any statement that requires a source.

What you should not be spending your QA time on in a good workflow is keyword placement, internal link selection, header structure, or meta description formatting. If the platform is doing its job, those things should be handled long before the draft ever gets to you.

The forgotten pieces of consolidation: linking, metadata, formatting, images, and publishing

Internal linking at scale: how it should work, and what to validate

Automated internal linking sounds amazing, right up until you see a platform insert links to totally irrelevant pages or use anchor text that makes no sense. Good internal linking means the system scans your sitemap, identifies topically relevant pages, finds a natural place to put the link, and uses varied anchor text.

Here's what to check: Are the linked pages actually relevant? Does the anchor text make sense in the sentence? Is the system avoiding linking to pages that compete for the same keyword (which causes cannibalization)? Is it inserting 3 to 5 high-quality links, or just spamming the page with 15 links that scream "bad SEO"?
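
Some of those checks can be automated when you're reviewing a sample output. The sketch below covers link count, repeated anchor text, and a crude cannibalization test (the linked page targets the same keyword as the article). The `InternalLink` structure and the sample URLs are hypothetical. Whether a link is actually relevant, and whether the anchor reads naturally, still needs a human eye.

```python
from dataclasses import dataclass


@dataclass
class InternalLink:
    anchor_text: str
    target_url: str
    target_keyword: str  # primary keyword the linked page is trying to rank for


def audit_links(links, article_keyword, min_links=3, max_links=5):
    """Return a list of human-readable issues; an empty list means no flags."""
    issues = []
    if not min_links <= len(links) <= max_links:
        issues.append(f"expected {min_links}-{max_links} links, found {len(links)}")
    anchors = [link.anchor_text.strip().lower() for link in links]
    if len(set(anchors)) < len(anchors):
        issues.append("repeated anchor text")
    for link in links:
        if link.target_keyword.lower() == article_keyword.lower():
            issues.append(f"possible cannibalization: {link.target_url}")
    return issues


sample_links = [
    InternalLink("content brief template", "/blog/brief-template", "content brief template"),
    InternalLink("keyword clustering guide", "/blog/keyword-clustering", "keyword clustering"),
    InternalLink("content brief template", "/blog/ai-content-workflow", "ai content workflow"),
]
for issue in audit_links(sample_links, article_keyword="ai content workflow"):
    print(issue)
```

Run something like this against a handful of pilot articles. If the list keeps coming back non-empty, the linking isn't ready to run unattended.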

Metadata + structured formatting: making posts publish-ready, not "draft-ready"

There's a huge difference between a draft that reads well and a draft that's ready to go live. CMS-ready means the meta title is written and within the character count, the meta description is there, the H1 is set, alt text is drafted, and the formatting is clean enough that it won't break when you paste it into your blog.

Most platforms give you a "draft-ready" document and call it "publish-ready." When you're evaluating a tool, take a sample output and paste it directly into your CMS. How much cleanup does it need? The answer should be "almost none," other than your final editorial review. It shouldn't be a reformatting project.
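
You can turn that paste test into a quick pre-publish check. A minimal sketch follows, with two loud assumptions: the post is a plain dictionary with field names I made up, and the 60 and 160 character limits are commonly cited rules of thumb that I've set as defaults, not numbers from any CMS or search engine spec.

```python
def publish_readiness_issues(post, title_max=60, description_max=160):
    """Return a list of problems that would block a clean publish."""
    issues = []
    for field_name, limit in (("meta_title", title_max), ("meta_description", description_max)):
        value = post.get(field_name, "").strip()
        if not value:
            issues.append(f"{field_name} is missing")
        elif len(value) > limit:
            issues.append(f"{field_name} is {len(value)} chars (limit {limit})")
    if not post.get("h1", "").strip():
        issues.append("h1 is missing")
    for image in post.get("images", []):
        if not image.get("alt", "").strip():
            issues.append(f"alt text missing for {image.get('src', 'unknown image')}")
    return issues


# Hypothetical draft: the title is too long, the description is empty, one image lacks alt text.
draft_post = {
    "meta_title": "A" * 61,
    "meta_description": "",
    "h1": "How to consolidate your content stack",
    "images": [{"src": "cover.png", "alt": ""}],
}
for issue in publish_readiness_issues(draft_post):
    print(issue)
```

An empty list is what "publish-ready" should mean in practice. Anything else is still a draft.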

Native publishing vs export: what "no copy-paste" actually means

"Exports to CMS" and "publishes to CMS" are not the same thing. Exporting means you get a file that you then have to paste somewhere else. Publishing means the platform pushes the content directly to WordPress, Webflow, or whatever you use, with all the formatting intact.

The copy-paste step sounds trivial, but in practice, it's where formatting breaks, alt text gets lost, and a 5-minute task mysteriously turns into 20 minutes of cleanup. Look for a native publishing integration, not just an export button.

How to measure ROI and prove it works in 30–90 days

ROI buckets: tool costs, labor hours, throughput, revision cycles, time-to-publish

The ROI conversation with your finance team will need numbers. I usually break it down into four buckets.

Tool cost displacement: Add up what you're paying for Ahrefs, Surfer, Canva, and any other tools you're replacing. A platform that consolidates these gives you a clear cost offset before you even count time savings.

Labor hours recovered: Calculate how much time you personally spend on each article today. Think brief writing, SEO review, internal linking, image sourcing, formatting, and publishing. Most teams I talk to are spending 4 to 6 hours of the lead's time per article. At 10 articles a month, that's 40 to 60 hours, a full work week or more of your time. What does that number drop to with a new system?

Throughput increase: How many more articles can your team realistically publish per month if you remove all that manual labor? If you go from 8 to 16 articles with the same team, you've doubled output without adding headcount, which cuts your labor cost per article in half.

Revision cycle reduction: Count how many back-and-forth rounds a typical article goes through today. If a platform delivers a near-final draft with SEO and links already built in, revision cycles shrink dramatically.
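
Here's a back-of-the-envelope version of the first two buckets, the kind of thing you can hand to finance. It's a sketch, not a model: the hours-after figure, the hourly cost, and both tool costs are placeholders I invented, so replace every one of them with your own numbers.

```python
def monthly_hours_recovered(articles_per_month, hours_before, hours_after):
    """Hours of manual production work saved per month."""
    return articles_per_month * (hours_before - hours_after)


def monthly_benefit_ratio(hours_recovered, hourly_cost, tool_costs_displaced, platform_cost):
    """Monthly benefit (labor plus displaced tools) divided by monthly platform cost."""
    if platform_cost <= 0:
        raise ValueError("platform_cost must be positive")
    return (hours_recovered * hourly_cost + tool_costs_displaced) / platform_cost


# 10 articles a month at 5 hours each (the midpoint of the 4 to 6 hour range above).
# hours_after, hourly_cost, and both cost figures are placeholders, not benchmarks.
hours = monthly_hours_recovered(articles_per_month=10, hours_before=5, hours_after=1.5)
ratio = monthly_benefit_ratio(hours, hourly_cost=80, tool_costs_displaced=400, platform_cost=1000)
print(f"{hours:.0f} hours recovered, benefit is {ratio:.1f}x the platform cost")
```

Throughput and revision cycles are harder to put a price on, so I'd track those as counts during the pilot and keep them out of this calculation.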

Quality and performance metrics: what to track so speed doesn't kill your results

Going faster is useless if the quality drops and your brand is damaged. You need to track both leading and lagging indicators.

Leading indicators (check at 30 days): How long does it take to get an article from brief to publish? How many revision cycles does each draft need? Are internal links and meta descriptions consistently being included?

Lagging indicators (check at 60–90 days): What's the organic traffic trajectory for the new articles versus the old ones? Are keyword rankings improving? Are these articles being cited by AI search engines like ChatGPT, Perplexity, or Google's AI Overviews?

On that last point, you need a way to measure AI visibility. Some systems (like our own DeepSmith AI Visibility) can track mentions and citations across major AI platforms and attribute them back to specific pages. This helps you prove that your content changes are actually making you more discoverable in this new world, not just improving your old-school search position.

A practical 30/60/90-day pilot plan

Days 1–30: Pick one simple content type (like bottom-of-funnel comparison posts) and one workflow. Run 4 to 6 articles through the system end-to-end. Measure the time-to-publish per article against your old baseline, count the revision cycles, and get your team's gut-check on the quality.

Days 31–60: Expand to a second content type. Look at the performance of the articles from your first month. Are they getting traffic? Are they ranking? Fix any friction points you found in your workflow.

Days 61–90: Onboard the full team. Measure your total throughput and cost savings. Are you spending more time on strategy and less on tedious production work?

Before you even start the pilot, set a clear goal. For example: "If we can publish 15 articles in 90 days, with fewer than 2 revision rounds each, and no brand voice failures, we'll move forward."
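
A goal like that is easy to write down as a pass/fail check, so nobody argues about it on day 91. A minimal sketch, assuming you log each pilot article with a revision count and a yes/no voice flag (the record structure is my own invention):

```python
from dataclasses import dataclass


@dataclass
class PilotArticle:
    title: str
    revision_rounds: int
    voice_failure: bool  # True if a reviewer flagged the piece as off-brand


def pilot_passed(articles, min_articles=15, max_revision_rounds=2):
    """True if enough articles shipped, each under the revision cap, with no voice failures."""
    if len(articles) < min_articles:
        return False
    return all(
        article.revision_rounds < max_revision_rounds and not article.voice_failure
        for article in articles
    )


# Hypothetical pilot log: 15 articles, one revision round each, no voice flags.
pilot_log = [PilotArticle(f"Article {n}", revision_rounds=1, voice_failure=False) for n in range(1, 16)]
print(pilot_passed(pilot_log))
```

Set the thresholds before the pilot starts and don't touch them afterward. Moving the goalposts mid-pilot is how tools nobody uses get bought.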

Implementation: How to consolidate without buying a tool nobody uses

Migration approach: start with one content type and one workflow lane

The biggest mistake I see is trying to boil the ocean. Don't move every content type at once. Pick one that's high-volume and formulaic, like blog posts for informational keywords. And pick one simple workflow (like research → draft → publish). Run that for 30 days. Prove the value with a clean before-and-after comparison before you add anything else.

Process redesign: approvals, versioning, and where final accountability lives

When you consolidate tools, you have to be explicit about process. Who has the final say before publishing? Where does the "source of truth" version of an article live? Define this stuff before you start. A simple ruleset can save you a lot of pain: the platform is the working document, editors make changes inside it, no exported copies are the source of truth, and the content lead has final publish authority. It seems obvious, but it's a nightmare to sort out after the fact.

Vendor evaluation questions to ask

Use these questions in your demos.

- "Show me the entire research-to-publish workflow in your platform. No hypotheticals, the actual steps."

- "How does your system enforce our brand voice and claim boundaries? How do you stop it from making things up?"

- "Show me how internal linking actually works. How does it choose links and anchor text?"

- "Does your CMS integration push natively, or does it just export a file?"

- "How do you handle approvals with multiple reviewers?"

- "If I want to customize a workflow for a different use case, what does that take?"

- "What metrics do you show for AI visibility, not just traditional SEO?"

Any platform worth your time should be able to answer these clearly. If the demo just keeps showing you a "perfect draft" but gets fuzzy on governance, linking, and publishing, you're likely looking at a point solution with a platform price tag.

During a pilot, look for systems that can maintain a publishing cadence without you having to be hands-on all the time (for example, scheduled generation via DeepSmith Autowrite) and that reduce drift with a structured context layer (like DeepSmith Deep IQ). You want drafts that stay on-brand even when you're not actively in the tool.

Build your consolidated content stack evaluation checklist

The ideas in this article are only useful if you actually use them in your next vendor call. So take the evaluation questions from the implementation section, the ROI buckets from the measurement section, and the consolidation levels from the beginning, and turn them into a simple checklist.

For each platform you look at, score it on how well it unifies the workflow, the depth of its governance, whether its SEO is truly in-draft, if it has native CMS publishing, how it measures AI visibility, and what kind of change management support it offers. The platform that scores highest on unifying your workflow and providing real governance is the one that will actually make your life easier in the long run. It's not about finding the "best draft," it's about finding the best system.
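
If a spreadsheet feels like overkill, the checklist can be sketched as a small weighted scorecard. The six criteria come from the paragraph above. The weights are my assumption (workflow unification and governance count most, per the argument of this article), and the example scores on a 1 to 5 scale are invented.

```python
# Weights are an assumption: tune them to what matters for your team.
WEIGHTS = {
    "workflow_unification": 3,
    "governance_depth": 3,
    "in_draft_seo": 2,
    "native_cms_publishing": 2,
    "ai_visibility_measurement": 1,
    "change_management_support": 1,
}


def weighted_score(scores):
    """Weighted average of 1-5 scores across all six criteria."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total = sum(WEIGHTS[criterion] * scores[criterion] for criterion in WEIGHTS)
    return total / sum(WEIGHTS.values())


# Hypothetical scores for one vendor after a demo.
platform_a = {
    "workflow_unification": 5,
    "governance_depth": 4,
    "in_draft_seo": 3,
    "native_cms_publishing": 4,
    "ai_visibility_measurement": 2,
    "change_management_support": 3,
}
print(f"Platform A: {weighted_score(platform_a):.2f} out of 5")
```

Score every vendor right after the demo, while the answers to your evaluation questions are still fresh.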