You're doing internal linking by hand, aren't you? Every single time.

You open a new tab, pull up your site, and start the treasure hunt. You scan for relevant pages, copy URLs, try to write some decent anchor text, and drop it all into your new article. Then you do it again for the next one.

It's thirty, maybe sixty minutes per article. And that's on a good day, when you don't just skip it because you're already behind schedule. I've been there, and I know that feeling of choosing between "doing it right" and "getting it done."

Here's the thing: you don't have an internal linking task. You have an internal linking system problem. AI platforms can solve this, but there's a catch. You have to treat automation as a controlled, rules-based process, not just a button that sprays links everywhere. One way builds genuine topical authority; the other creates a massive mess you'll have to clean up later.

Let's build the system.

What does "topical authority" actually mean, and how do internal links create it?

Before we get into the tech, let's get real about the goal. Everyone throws around "topical authority" like it's some kind of magic dust. It's not. When you cut through the jargon, the mechanics are pretty simple.

How internal links shape discovery, hierarchy, and "what you're about"

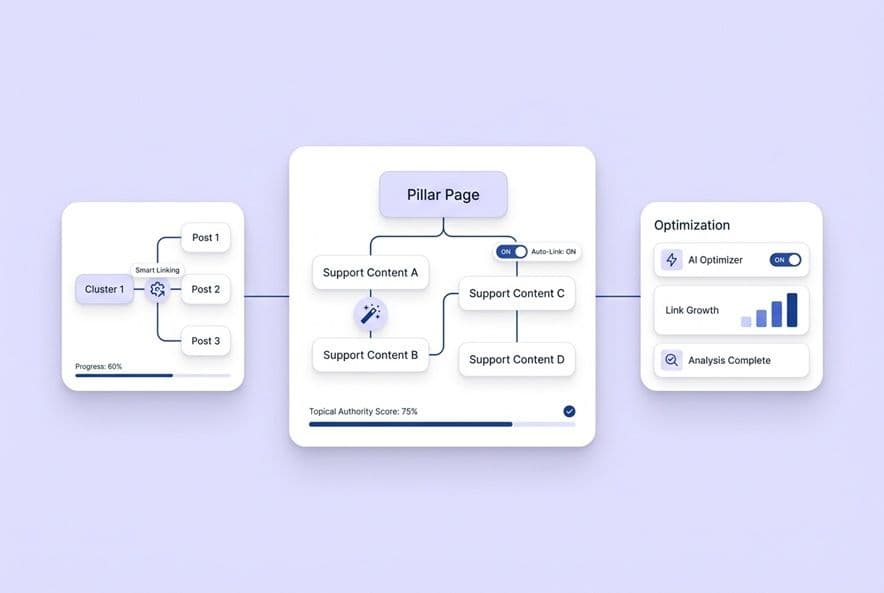

Search engines use your internal links to figure out three things about your site: what pages you have, how they're related, and which ones are most important. When you link from a big pillar page to a smaller, supporting post, you're basically telling Google, "Hey, this little post is part of this bigger topic, and you should check it out."

That web of relationships is what topical authority really is. The pattern of your links shows that you've gone deep on a subject, not just written a one-off article.

The misconception: more links ≠ more authority

I learned this one the hard way. Early on, I thought more was always better. I'd cram 15 internal links into every post, thinking I was crushing it. I wasn't. All I was doing was signaling that I was optimizing for links, not for people.

Google's spam policies target links built to manipulate rankings rather than help readers. A site full of forced, irrelevant links starts to resemble that pattern, whether a person or a robot put them there. Relevance, placement, and whether the link actually helps the reader matter far more than the count. A single, well-placed link to a truly related page does more work than five random links a tool found by matching keywords.

The real goal: make clusters navigable for both bots and humans

The mental model that works for me is this: your internal links should create a clear path. Someone reading your main page on customer onboarding should be able to follow a link to a post about onboarding emails, and then maybe to another about activation metrics.

That path works for a human who is genuinely curious, and it works for a web crawler trying to map out your site. When your links serve that "information scent," pointing people toward the next logical question, you're building authority the right way.

How do AI platforms decide which internal links to add (and what inputs they need)?

Most of the sales pitches stop at "the AI finds related pages." To actually trust automation, you need to know how it thinks, what it needs, and where it's likely to fail.

The minimum viable inputs (sitemap, page inventory, titles/H1s, topical tags)

An AI linking system is only as smart as the information you give it. At a bare minimum, it needs your full list of URLs, your page titles, any tags or categories you use, and a way to know which pages are cornerstones versus regular posts.

Without this, the AI is just guessing. It might link to an old post, a page in a totally different topic cluster, or something that has nothing to do with the reader's next question. That's not automation; it's just generating randomness.
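To make "minimum viable inputs" concrete, here's a rough sketch of what one row of that page inventory could look like, plus a check for records that would leave the AI guessing. The `PageRecord` fields and the `missing_inputs` helper are hypothetical names chosen for illustration, not any platform's actual schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple


@dataclass(frozen=True)
class PageRecord:
    """One row of the page inventory (illustrative field names)."""
    url: str
    title: str
    cluster: str                      # topical cluster the page belongs to
    is_pillar: bool = False           # cornerstone page vs. regular post
    tags: Tuple[str, ...] = ()
    last_updated: Optional[date] = None


def missing_inputs(pages):
    """Return the URLs whose records lack a title or a cluster."""
    return [p.url for p in pages if not p.title.strip() or not p.cluster.strip()]


inventory = [
    PageRecord("/onboarding", "Customer Onboarding Guide", "onboarding", is_pillar=True),
    PageRecord("/onboarding-emails", "Onboarding Emails", "onboarding"),
    PageRecord("/old-post", "", ""),  # no title, no cluster: nothing to match on
]

print(missing_inputs(inventory))  # ['/old-post']
```

Any URL this check returns is one the system can only match by guesswork, so it's worth fixing those records before you generate a single link.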

Common decision signals (semantic similarity, cluster membership, intent alignment, freshness)

Once the AI has a rich inventory of your pages, it can start making smart decisions by weighing several signals. Semantic similarity finds pages about similar concepts. Cluster membership shows which pages live in the same topical neighborhood. Intent alignment checks whether the linked page serves the same kind of reader. And a freshness signal flags whether a page is new or ancient.

No single signal is enough. A page can be semantically similar but for the wrong audience, or in the right cluster but years out of date. Good automation weighs all these signals together and, crucially, lets you override its suggestions.
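Here's one way "weighing the signals together" could look in practice. This is a minimal sketch, not how any particular tool scores links: the weights, the two-year freshness window, and the `Candidate` fields are all assumptions you'd tune for your own site.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    similarity: float    # 0..1, e.g. from an embedding comparison (assumed input)
    same_cluster: bool
    intent_match: bool
    age_days: int        # days since the page was last updated


# Illustrative weights only; they sum to 1.0
WEIGHTS = {"similarity": 0.4, "cluster": 0.25, "intent": 0.2, "freshness": 0.15}


def freshness(age_days, max_age_days=730):
    """Decay linearly from 1.0 (updated today) to 0.0 (max_age_days or older)."""
    return max(0.0, 1.0 - age_days / max_age_days)


def score(c):
    """Combine all four signals into one number between 0 and 1."""
    return (WEIGHTS["similarity"] * c.similarity
            + WEIGHTS["cluster"] * c.same_cluster
            + WEIGHTS["intent"] * c.intent_match
            + WEIGHTS["freshness"] * freshness(c.age_days))


def rank(candidates, overrides=None):
    """Sort candidates best-first; an editor override replaces the computed score."""
    overrides = overrides or {}
    return sorted(candidates,
                  key=lambda c: overrides.get(c.url, score(c)),
                  reverse=True)


candidates = [
    Candidate("/old-onboarding-post", 0.9, True, False, 1095),
    Candidate("/onboarding-pillar", 0.8, True, True, 10),
]

print([c.url for c in rank(candidates)])
# ['/onboarding-pillar', '/old-onboarding-post']
```

The three-year-old post wins on raw similarity but loses once intent and freshness count, and the `overrides` argument is where a human gets the last word.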

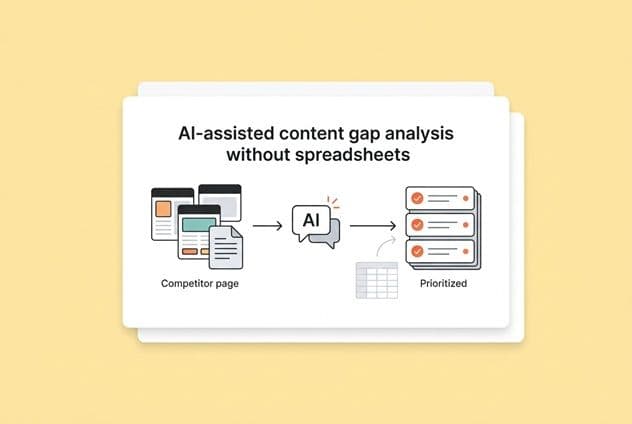

Why "enriched sitemap" beats "best guess from the current draft"

Here's a failure I see all the time. Some tools only look at the one article you're writing and suggest links based on keywords in that draft. I once tried a tool like this and it was a disaster. It would see the keyword "onboarding" and suggest a link to a three-year-old post that was totally out of date, completely ignoring the brand-new pillar page we'd just published.

It had no clue.

An "enriched sitemap," one that includes all your metadata like clusters and content type, gives the AI the context it needs to make good decisions. Some platforms, like DeepSmith Content Studio, build this right into the writing process, scanning and matching against this rich map of your site in real time. The key word is "enriched." The quality of the links depends entirely on the quality of the context you provide. An editor still needs to review the work, but that painful step of manually cross-referencing everything disappears.

Where automation breaks first: outdated pages, overlapping topics, and a messy taxonomy

Before you flip the switch on automation, be ready for these common problems:

Outdated target pages. If your site has a bunch of old, thin content, an automated system will happily send your readers there. You have to do some house cleaning on your content before you start. A content refresh focused on ROI can help you prioritize which pages to fix first.

Overlapping topics. Got five posts that all kinda cover the same thing? Semantic matching will get confused. It will pick a page, but probably not the best one. This is why you need to define your pillar pages first.

Messy taxonomy. If your categories and tags are an inconsistent mess, you're giving the AI a broken map to navigate. The fix isn't a smarter AI; it's a content strategy cleanup sprint.

Automation just amplifies what you already have. A good information architecture gets even better. A messy one gets messier, and a whole lot faster.

Where should automated internal links go in an article to help UX (not annoy readers)?

You can get the link selection perfect and still create a terrible user experience with bad placement. This is the human side of safe automation.

Placement rules that usually work (definitions, next-step guidance, related subtask moments)

There are a few moments in any article where a link feels like a genuine service to the reader:

- Definition moments: You introduce a term someone might not know. Linking to a deeper explanation is just plain helpful.

- Next-step guidance: You tell the reader to "set up your topic cluster strategy." If you have a post on that, link to it. It's just common sense.

- Related subtask callouts: You mention a process you've already covered in detail somewhere else. Perfect place for a link.

These work because the reader knows what they're going to get before they click. The destination delivers on the promise.

Placement patterns to avoid (link density, early-paragraph clutter, unrelated "SEO links")

The patterns that drive me crazy as a reader are easy to spot:

Link density in a single paragraph. Jamming more than two links into one paragraph creates a wall of blue text. It looks robotic and is hard to read. Just cap it.

First-paragraph linking. Don't ask for a click before you've even made your point. Let the reader get invested in the article first.

"Orphan SEO links." These are the worst. They're links that only exist because a tool found a keyword match, not because they help the reader. They're distracting and a dead giveaway that your linking strategy wasn't made with care.

The UX test: would this link help a skimming reader?

Here's a quick gut check I give my editors. Imagine you're just skimming the article, reading only the headers and first sentences. When you see a link, does it make sense? Is the anchor text crystal clear about what you'll get if you click?

If the answer is no, the link either needs better anchor text or it shouldn't be there at all. This catches most of the bad placements before they go live.
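The skim test needs a human, but two of the placement rules above (no links in the first paragraph, no more than two per paragraph) are mechanical enough to pre-check. Here's a small sketch that assumes your draft is Markdown with blank lines between paragraphs; `placement_issues` is a hypothetical helper, and the regex only handles standard inline links.

```python
import re

# Inline Markdown links like [text](/url); the lookbehind skips images (![alt](src))
LINK = re.compile(r"(?<!!)\[[^\]]+\]\([^)\s]+\)")


def placement_issues(markdown, max_per_paragraph=2):
    """Return (paragraph_index, problem) pairs for the two mechanical rules."""
    issues = []
    paragraphs = [p for p in markdown.split("\n\n") if p.strip()]
    for i, para in enumerate(paragraphs):
        count = len(LINK.findall(para))
        if i == 0 and count > 0:
            issues.append((i, "link in first paragraph"))
        if count > max_per_paragraph:
            issues.append((i, f"{count} links in one paragraph"))
    return issues


draft = (
    "Start with our [onboarding guide](/onboarding).\n\n"
    "See [one](/1), [two](/2), and [three](/3) for details.\n\n"
    "A clean paragraph with [one link](/x)."
)

print(placement_issues(draft))
# [(0, 'link in first paragraph'), (1, '3 links in one paragraph')]
```

A check like this only catches clutter. Whether a link actually helps the reader is still the editor's call.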

How do AI tools pick anchor text—and how do you keep it from sounding spammy?

Anchor text is where bad automation becomes most obvious. A link to the wrong page is a mistake. Robotic, keyword-stuffed anchor text is a mistake that actively damages your brand's credibility.

Anchor text types (exact, partial, branded, generic) and when each is appropriate

| Anchor Type | Example | When to Use | When to Avoid |
|---|---|---|---|
| Exact match | "internal linking automation" | Pointing to a key pillar page; use it sparingly. | Repeating it across many posts; it feels over-optimized. |
| Partial match | "automating your internal links" | Most of the time. It sounds natural and works in conversation. | When the phrase feels forced into the sentence. |
| Branded | "DeepSmith's content pipeline" | Referencing a specific product or feature page. | When the brand name just feels like an ad. |
| Generic | "learn more," "read this guide" | In supplementary callouts, like in a footer. | In the main body. It offers no context. |

My rule of thumb: partial match anchors are your workhorse. They sound natural, carry the right signals for SEO, and don't scream "I'm trying to rank for this keyword!" Exact match is fine now and then, but moderation is key.
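If you want to see how your anchors are distributed across those four types, a rough classifier gets you most of the way. This is a heuristic sketch under loud assumptions: the `GENERIC` phrase list, the simple word-overlap test for "partial," and the `classify_anchor` name are all made up for illustration, and a real audit would want stemming and human review.

```python
# Hypothetical list of generic phrases; extend it for your own site
GENERIC = {"learn more", "read this guide", "click here", "read more"}


def classify_anchor(anchor, target_keyword, brand_terms=()):
    """Bucket an anchor as exact, generic, branded, partial, or other."""
    text = anchor.lower().strip()
    keyword = target_keyword.lower().strip()
    if text == keyword:
        return "exact"
    if text in GENERIC:
        return "generic"
    if any(brand.lower() in text for brand in brand_terms):
        return "branded"
    # Any shared word with the target keyword counts as a partial match
    if set(text.split()) & set(keyword.split()):
        return "partial"
    return "other"


keyword = "internal linking automation"
for anchor in ["internal linking automation",
               "automating your internal links",
               "DeepSmith's content pipeline",
               "learn more"]:
    print(anchor, "->", classify_anchor(anchor, keyword, brand_terms=("DeepSmith",)))
```

Run it over the anchors pointing at one pillar page. If "exact" dominates the tally, that's your cue to rewrite a few.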

The "unnatural anchor" problem: over-optimization and readability

The clearest sign of bad anchor automation is a linked phrase that just doesn't fit the sentence. Something like "Learn about our best practices for customer onboarding for SaaS companies" as a link in a normal paragraph doesn't read like human language. It breaks the flow and screams automation.

This is more than just an SEO risk; it's a brand safety issue. A misleading anchor that promises a deep dive but links to a generic sales page is a small betrayal. It erodes reader trust. When your automation makes promises your content doesn't keep, that's an editorial failure. Systems with a structured context layer, like DeepSmith's Deep IQ, can reduce this by ensuring the system understands your voice and offerings before making suggestions.

A practical review checklist for anchor edits (clarity, promise, accuracy, tone)

When you're reviewing AI-generated anchor text, run it through this quick check. I promise it takes less than a minute.

- Clarity: Does the anchor tell the reader what they'll find?

- Promise match: Does the linked page actually deliver what the anchor implies?

- Accuracy: Is the destination page current and correct?

- Tone: Does the anchor fit the sentence, or does it sound like a keyword that was just dropped in?

This simple check catches almost all the anchors that can damage trust.

What's the safest workflow for internal linking automation (so humans keep control)?

This isn't a strategy doc. This is the actual operating procedure my team runs. Feel free to steal it.

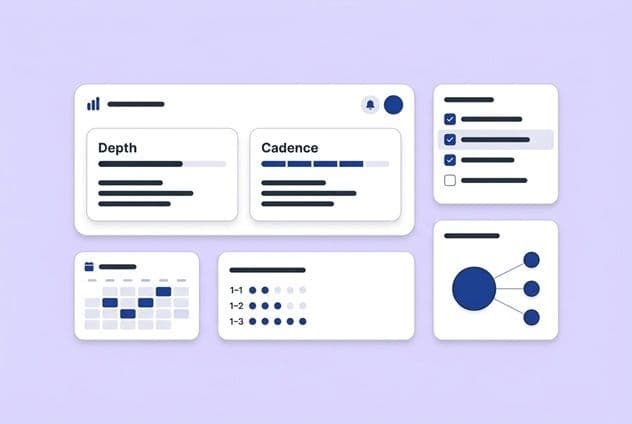

Step-by-step workflow: strategy → rules → generation → review → publish → monitor

Step 1: Map your clusters. Before anything else, define your topics, your pillar pages, and your supporting posts. This is the human strategy that everything else is built on.

Step 2: Set your rules. Write down your link caps (like 3–5 per 1,000 words), pages to exclude (like your privacy policy), and which cluster every URL belongs to.

Step 3: Generate with context. Run your AI tool using your enriched sitemap. The system will suggest or insert links based on the rules you just set.

Step 4: Editor review. Every single article gets a 10-minute link review before publishing. Use the four-point checklist from above. You're just looking for mistakes, not rebuilding from scratch.

Step 5: Publish and log. Keep a simple record of what links were added where, and when. You'll want this later.

Step 6: Monitor and iterate. Once a month, check your crawl data, link distribution, and cluster performance. Adjust your rules based on what's working.

To keep things moving while you're focused on strategy, tools like DeepSmith Autowrite can keep publishing content on schedule, so the pipeline doesn't stop while you build out the governance.

What to automate vs. what must stay manual

Automate: Finding link candidates, generating suggestions, drafting anchor text, and initial placement.

Keep manual: Defining clusters, setting rules and exclusions, making the final UX call on placement, and editing the final anchor text.

The line is pretty clear: let the machines handle discovery and execution. Humans should handle strategy, exceptions, and the final judgment call.

Guardrails that prevent "SEO debt at scale" (caps, exclusions, no-link zones)

Here are the specific guardrails to document before you begin:

- Link cap per article: A hard max on automated links per post.

- Exclusion list: A list of pages that should never be linked to.

- No-link zones: Sections that should always be link-free, like intros and final CTAs.

- Freshness filter: Only link to pages updated or reviewed recently.

- Cluster restriction: Prioritize links within the same topic cluster.
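Those five guardrails can be sketched as a single filter that runs on suggestions before an editor ever sees them. Treat this as an illustration under assumptions: the field names, the default cap of 5, the 365-day freshness window, and the `Suggestion` shape are all invented here, not taken from any platform.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Guardrails:
    max_links: int = 5                                      # link cap per article
    excluded_urls: frozenset = frozenset({"/privacy-policy"})
    no_link_sections: frozenset = frozenset({"intro", "cta"})
    max_age_days: int = 365                                 # freshness filter


@dataclass
class Suggestion:
    target_url: str
    section: str          # where in the draft the link would be placed
    target_updated: date  # when the target page was last updated or reviewed
    same_cluster: bool


def apply_guardrails(suggestions, rules, today):
    """Drop suggestions that break a rule, put same-cluster links first, then cap."""
    allowed = [
        s for s in suggestions
        if s.target_url not in rules.excluded_urls
        and s.section not in rules.no_link_sections
        and (today - s.target_updated).days <= rules.max_age_days
    ]
    # Stable sort: same-cluster suggestions move ahead, original order otherwise kept
    allowed.sort(key=lambda s: not s.same_cluster)
    return allowed[: rules.max_links]


suggestions = [
    Suggestion("/privacy-policy", "body", date(2024, 12, 1), False),    # excluded page
    Suggestion("/onboarding-emails", "intro", date(2024, 12, 1), True), # no-link zone
    Suggestion("/old-post", "body", date(2021, 1, 1), True),            # stale target
    Suggestion("/pricing-faq", "body", date(2024, 11, 1), False),
    Suggestion("/activation-metrics", "body", date(2024, 10, 1), True),
]

kept = apply_guardrails(suggestions, Guardrails(), today=date(2025, 1, 1))
print([s.target_url for s in kept])
# ['/activation-metrics', '/pricing-faq']
```

Writing the rules down as data like this also gives you something to version, which makes the monthly "adjust your rules" step much easier to track.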

How do you measure whether automated internal linking is working?

Just counting the number of links you've added is a vanity metric. Here's what to track to see if your system is actually working.

Baseline first: what to measure before you automate

Before you change a thing, take a snapshot of where you are now. You need to know your total indexed pages, crawl coverage, orphan page count, average links per page, and your current cluster performance in Google Search Console. Without this baseline, you can't prove your changes made a difference.
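Two of those baseline numbers, orphan page count and average links per page, fall straight out of a crawl export. Here's a minimal sketch that assumes you can get your internal links into a dictionary mapping each page to the pages it links to; the `baseline` function and its output keys are hypothetical.

```python
def baseline(link_graph):
    """Summarize a graph that maps each page URL to the internal URLs it links to."""
    pages = set(link_graph)
    inbound = {url: 0 for url in pages}
    for source, targets in link_graph.items():
        for target in targets:
            # Count only links to known pages, and ignore self-links
            if target in inbound and target != source:
                inbound[target] += 1
    total_links = sum(len(targets) for targets in link_graph.values())
    return {
        "pages": len(pages),
        "orphans": sorted(url for url, count in inbound.items() if count == 0),
        "avg_outbound_links": total_links / len(pages) if pages else 0.0,
        "inbound": inbound,
    }


graph = {
    "/": {"/onboarding"},
    "/onboarding": {"/onboarding-emails", "/"},
    "/onboarding-emails": set(),
    "/forgotten-post": {"/onboarding"},  # links out, but nothing links to it
}

snapshot = baseline(graph)
print(snapshot["orphans"])             # ['/forgotten-post']
print(snapshot["avg_outbound_links"])  # 1.0
```

Save the snapshot with a date on it. Rerunning the same function after a month of automation gives you a like-for-like comparison, and the `inbound` counts double as a quick look at link distribution.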

SEO signals to watch (crawl/discovery, internal link distribution, cluster performance)

Crawl and discovery: Are those pages that were once ignored finally getting crawled? Look for newly indexed pages and fewer crawl errors.

Internal link distribution: Is link equity being spread more evenly? Check the inbound internal links for pages in your key clusters.

Cluster-level performance: Track the organic performance for each topic cluster as a whole. As the cluster gets stronger, you should see multiple pages start to rise together.

Be patient with these. You might see crawl stats improve in weeks, but it can take a full quarter or more to see real movement in GSC as Google re-evaluates your site. For those of you tracking AEO (answer engine optimization), a tool like Founders AEO Checklist can also show you when your cluster pages are earning more citations in AI-generated answers.

UX proxies that keep automation honest (engagement patterns, navigation behavior)

Don't let this become a pure SEO game. Track scroll depth and time-on-page. If engagement drops after you add links, they're probably distracting, not helping. Check your internal click-through rates in GA4 and watch bounce rates on your most commonly linked-to pages. This tells you if the pages are delivering on their promise.

What should you look for when evaluating AI platforms for internal linking automation?

Think of this as a buyer's guide from a friend. Here's what to look for and what to ask the sales reps.

Capability checklist (context inputs, edit controls, audit trail, rerun logic)

These are the non-negotiables for any platform you consider:

- Enriched site context as input: The system must see your whole site, not just the draft you're working on.

- Edit controls before publish: You must be able to review and change every single suggestion.

- Rule configuration: You need to be able to set caps, exclusions, and cluster rules.

- Audit trail: You have to be able to see what was added, when, and why.

- Rerun logic: The system needs to be able to update links across your whole site when your strategy changes.

Integration and security questions (CMS permissions, staging, rollback)

You also have to figure out how a tool will fit into your actual workflow. Get clear answers to these questions:

- CMS Integration: Does it push directly to your CMS, or do you have to copy and paste?

- Staging and QA: Does it support a staging environment so you can test changes before they go live?

- Rollback Process: What happens if a batch of links goes wrong? How easy is it to undo?

- Data Security: What data does the tool need access to, and how is it stored?

Comparison table: manual vs. suggestions vs. automated insertion (trade-offs)

| Approach | How It Works | Best For | Watch Out For |
|---|---|---|---|
| Fully manual | Editor finds and adds links one by one. | Tiny sites (< 50 pages) or very early-stage teams. | Doesn't scale, is inconsistent, and burns a ton of time. |
| AI suggestions (human inserts) | AI recommends links; editor reviews and adds them. | Teams just starting with automation; mid-size sites. | Still adds review time; doesn't help with the backlog. |
| Automated insertion (human reviews) | AI inserts links; editor reviews before publishing. | Teams with a defined cluster strategy ready to scale. | Needs strong rules and QA to prevent problems. |

Once your clusters are defined, I'd point most teams toward automated insertion plus a strong review step. Build your rules, run a pilot on one topic cluster, check the results aggressively, and then expand.

Build an internal linking system (not a bigger manual checklist)

You already know the manual approach is broken. Up to an hour per article, skipped half the time, with no consistency. That's not a process; it's a recurring fire drill that burns out good people.

The answer isn't just a new tool. It's building a system first. Map your clusters, set your rules, define your exclusions, and get your baseline metrics. Then, and only then, use automation for what it's good at: discovery and execution at scale, while keeping your team's judgment right where it belongs.

Start with one cluster. Pick your clearest topic, document the rules, and pilot the automation for a month or two. Measure what happens. Then take what you learned and expand.

That's how you turn internal linking from a tax on every article into a real, compounding advantage for your business.