So, you got a first draft in 12 minutes. Fantastic. Now you've got to fact-check it, review it for keywords, find internal links, make a cover image, write the metadata, and upload it to your CMS. Oh, and someone has to make sure it doesn't sound like it was written by a robot having a very confident, very bad day.

That part? That's probably the next two hours of your life.

This is where almost every "AI writing tool" comparison I see gets it completely wrong. They all grade on draft quality, as if the first draft is the hard part. It's not. The draft is maybe 60% of the work. The last 40% is where your team spends its time, where you actually create quality, and where the cost per article either stays manageable or quietly blows up your budget.

I want to argue one thing here: the right AI platform for your team is the one that handles this last 40% best, not the one that demos the prettiest paragraph. We're going to define what that last 40% actually is, I'll give you a scoring rubric you can use on any platform, and we'll walk through the workflows that separate a real publishing system from a fancy autocomplete tool.

What is the "last 40%" of AI content production (and why it's where you win or lose)?

The last-40% checklist (this is about publishability, not "polish")

I have a pet peeve with the word "polish." When someone says a piece "just needs polish," they usually mean the structure is wobbly, the claims are shaky, the keywords are missing, there are no internal links, and the intro sounds like a machine trying to win a high school essay contest.

Here's what that last 40% looks like as actual, observable tasks you can check off a list:

- Factual accuracy review: Has every product claim, statistic, and how-to step been verified against a real source or confirmed by someone who knows what they're talking about?

- Brand voice pass: Does the draft sound like it came from your company? Does it use your positioning and avoid the buzzwords, competitor claims, or tones you've explicitly banned? (We've all got a list.)

- SEO coverage check: Are the target keywords in the H1 and key H2s? Do they show up naturally in the body copy and meta description?

- AEO structure: For answer engine optimization (AEO), is there at least one section with a direct question for a header, answered concisely right below it? Are there tables and lists where they make sense?

- Internal links: Are there 3–5 relevant links to your existing content in there?

- Multimedia assets: Is there a cover image that fits your brand? Is the data in the article backed up by a table?

- Metadata: Are the title tag, meta description, and slug written and inside character limits?

- CMS-ready formatting: Are headers tagged correctly? Are images compressed and alt-tagged? Can you publish this without spending 20 minutes reformatting everything?

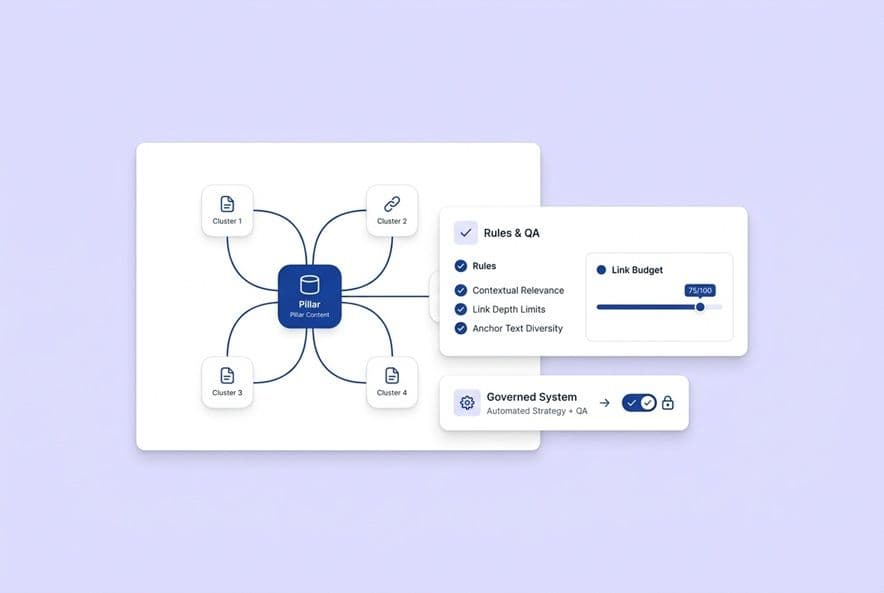

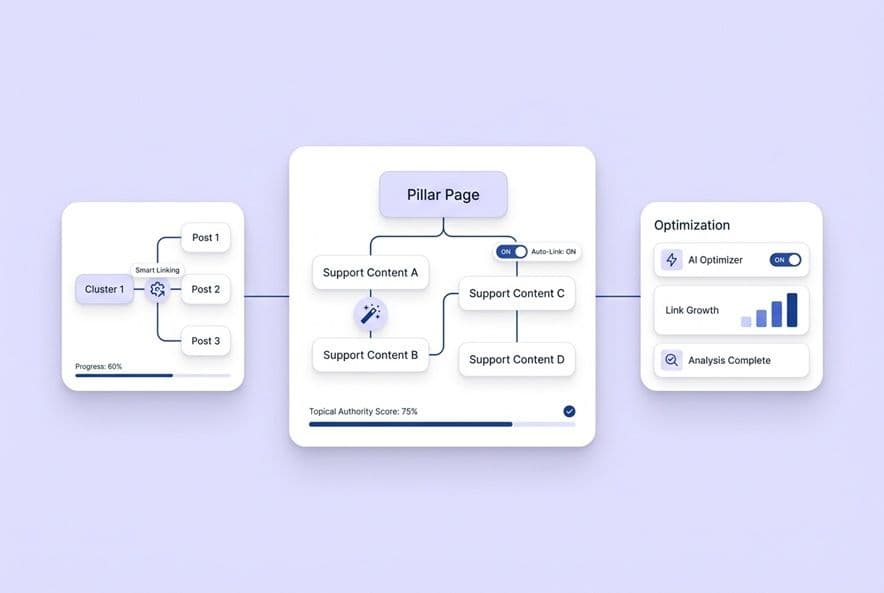
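The metadata item on that checklist is the easiest one to turn into an automatic check. Here's a minimal sketch of what that could look like. The 60- and 160-character limits are assumptions based on commonly cited guidance, not values from any search engine spec, and the `Metadata` class and `metadata_problems` function are hypothetical names I made up for illustration.

```python
from dataclasses import dataclass

# Assumed limits: commonly cited guidance, not official values. Adjust to your own rules.
TITLE_MAX = 60
DESCRIPTION_MAX = 160


@dataclass
class Metadata:
    title_tag: str
    meta_description: str
    slug: str


def metadata_problems(meta: Metadata, keyword: str) -> list[str]:
    """Return human-readable problems; an empty list means the check passes."""
    problems = []
    if not meta.title_tag or len(meta.title_tag) > TITLE_MAX:
        problems.append(f"title tag missing or over {TITLE_MAX} characters")
    if not meta.meta_description or len(meta.meta_description) > DESCRIPTION_MAX:
        problems.append(f"meta description missing or over {DESCRIPTION_MAX} characters")
    if not meta.slug or meta.slug != meta.slug.lower() or " " in meta.slug:
        problems.append("slug missing, not lowercase, or contains spaces")
    if keyword.lower() not in meta.meta_description.lower():
        problems.append("target keyword not in meta description")
    return problems


if __name__ == "__main__":
    draft_meta = Metadata(
        title_tag="AI content platforms: judging the last 40%",
        meta_description="",
        slug="ai-content-platforms-last-40",
    )
    for problem in metadata_problems(draft_meta, "AI content platforms"):
        print("FIX:", problem)
```

An empty list means this one checklist item is done. Everything else on the list still needs its own check or a human.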

If a platform gives you a draft and you still have to do all of that manually, it only automated the part of the job that was already getting easier. The real breakthrough is finding a tool that consolidates these steps. Some platforms, like DeepSmith Content Studio, are built as a multi-step pipeline so your team is just reviewing and approving work, not manually assembling it across five different tabs.

Where your team is actually losing time: the hidden rework loop

You know this loop. I know this loop. A draft comes in. It's… decent. But the keyword coverage is spotty, so you spend 20 minutes massaging the copy. There are no internal links, so you open the sitemap in another tab and start hunting for good matches. The claims seem plausible, but you can't find a source, so you ping the product team and wait. The image is missing. The meta description is blank. And when you finally paste it into your CMS, all the formatting breaks.

You've now spent hours on a draft you were told was "AI-generated in minutes." That's the hidden rework loop. At four articles a month, it's an annoyance. At 12 or 15, it's the reason you feel like a bottleneck. The platforms that get rid of this loop don't just draft faster. They produce finished artifacts: verified outlines, pre-inserted links, structured metadata, and clean export files.

When the last 40% matters most (especially for lean teams)

Not every team feels this pain equally, but if you're on a lean SaaS content team and your boss is asking about AI, it shows up in four places.

- Team size: If it's just you and one other writer, every hour of rework falls on your shoulders. There's no "dedicated QA editor" to absorb the overflow. It's just you.

- Technical complexity: When you're writing about SaaS, you're making specific claims. One wrong feature description or a made-up statistic creates support tickets and burns the trust you've worked so hard to build.

- Cadence: If you're trying to scale from 4 articles a month to 10 or more, that rework loop scales right alongside you unless you systematize this last 40%.

- AI citation competition: Structured, accurate, well-linked content is what AI answer engines are looking for. That generic first draft you got in ten minutes isn't going to cut it.

If your platform doesn't address these issues, you're not buying a content platform. You're just buying a first-draft generator.

How to run a fair head-to-head test of AI platforms (a "last 40% scorecard" you can use this week)

The scoring categories that actually predict publishability

Stop asking about "output quality." It's a vague and misleading metric. Instead, run every platform you're considering against this rubric. It's the one I use.

| Category | What you're testing | Pass criteria |
|---|---|---|
| Factual accuracy | Does the draft make things up? | No fabricated stats, product features cited correctly |
| Brand grounding | Does it sound like you and stay on message? | No banned phrases, no competitor-adjacent claims |
| SEO structure | Are keywords, headers, and readability built in? | Target KW in H1, subheadings, meta |
| AEO structure | Does it use question headers, tables, clear answers? | At least 2 AEO-ready sections per article |
| Internal linking | Are links suggested or inserted automatically? | 3–5 relevant links, contextually placed |
| Asset support | Are tables, images, and alt text handled? | Cover image generated, key data in table |
| CMS readiness | Can you export or publish without reformatting? | One-click or clean export to your target CMS |
| Measurement | Can you track AI visibility after you publish? | Prompt-level and page-level citation tracking |

Score each platform from 0 to 2 in each category, for a maximum of 16 across the eight categories. If a platform scores below 12 out of 16, it's a drafting assistant, not a production platform.
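If you're comparing several platforms, it helps to keep the scoring mechanical so nobody rounds up after a good demo. Here's a small sketch of the rubric as code. The category names and the 12-out-of-16 threshold come from the scorecard above. Reading 0 as "fails," 1 as "partial," and 2 as "meets the pass criteria" is my assumption, and `score_platform` is a hypothetical helper name.

```python
CATEGORIES = [
    "Factual accuracy", "Brand grounding", "SEO structure", "AEO structure",
    "Internal linking", "Asset support", "CMS readiness", "Measurement",
]
PRODUCTION_THRESHOLD = 12  # out of 16, per the scoring rule above


def score_platform(scores: dict[str, int]) -> tuple[int, str]:
    """Sum the 0-2 scores across all eight categories and classify the platform."""
    missing = [c for c in CATEGORIES if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for category in CATEGORIES:
        if scores[category] not in (0, 1, 2):
            raise ValueError(f"{category} must be scored 0, 1, or 2")
    total = sum(scores[c] for c in CATEGORIES)
    label = "production platform" if total >= PRODUCTION_THRESHOLD else "drafting assistant"
    return total, label


if __name__ == "__main__":
    # Hypothetical scores for a made-up platform.
    example = {c: 2 for c in CATEGORIES}
    example["Measurement"] = 0
    example["Asset support"] = 1
    print(score_platform(example))
```

Requiring a score for every category is deliberate. A platform shouldn't pass because you skipped the category it's weakest in.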

The demo script: force the platform through the entire finalization process

Here's a test you can run in under two hours with your own content. Don't let the sales rep drive the demo; you drive.

- Bring a real brief. Pick a topic you're actually planning to publish. Use your real keyword, your real ICP, and your real product positioning.

- Generate the full draft. Pay attention to what the platform produces on its own versus what it makes you provide.

- Stop and take inventory. Before you touch a single word, list every last-40% task that isn't done yet.

- Ask the platform to finish the job. Can it add internal links for you? Is there a dedicated metadata step? Can you export it directly to WordPress?

- Measure your remaining work. Every task you still have to do yourself is the real cost of that platform. This process shines a big, bright light on the hidden cost of hopping between Surfer, Google Docs, Canva, and your CMS just to get one article live.

What to demand as output (so vendors can't hide behind a pretty paragraph)

A vendor who shows you a polished paragraph in a demo is selling you the 60%. Push them. Before you get off any demo call, ask them to show you these artifacts:

- A complete content brief, generated from just a keyword.

- A finished draft that already has internal links inserted.

- A complete metadata block (title tag, meta description, slug).

- A cover image (or at least a detailed image brief).

- A QA summary or a list of editorial flags.

- A direct export or publish push to your CMS.

For every artifact they can't produce, that step stays on your to-do list.

How do platforms handle factual accuracy and "claim boundaries" in the final mile?

What "accuracy" really means in SaaS content

When we talk about inaccuracy in SaaS content, it's not just about a wrong statistic. It's much more painful than that. It means:

- Incorrect product descriptions: An AI writes that your tool "supports X integration," but that feature is still in beta. A customer tries it, fails, and files a support ticket.

- Fabricated comparisons: A comparison article invents a feature for your competitor, making your product look weaker than it is.

- Over-promised outcomes: A case study claims your product can "increase conversion by 3x" when the real number was much more modest.

- Outdated claims: The article describes a pricing model you sunset six months ago.

Each of these creates a real downstream cost, whether it's support tickets, confused sales calls, or AI engines confidently repeating your own mistakes back to potential buyers. I've seen it happen. It's not pretty.

Practical fact-checking workflows (what to automate, what must stay human)

You don't need to hire a full-time fact-checker. Here's a realistic model that works for busy teams.

Automate this:

- Flagging every statistic so you can review its source.

- Comparing product claims against a knowledge base you provide.

- Detecting phrases like "studies show" that don't have an attribution.

Keep this human:

- Any claim about your product roadmap.

- Any comparison to a competitor.

- The actual steps in a technical how-to guide.

- Any big claim you make in a headline or section intro.

The platform's job is to surface things for you to check. Your job is to use your judgment. A good platform makes the haystack smaller so you can find the needle faster; it doesn't pretend the needle isn't there.
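To make the "automate this" list concrete, here's a rough sketch of two of those flags: unattributed phrases and statistics that need a source. It's a plain regex pass, not how any particular platform does it. The phrase list is hypothetical, the sentence splitting is naive, and comparing claims against a knowledge base isn't covered here.

```python
import re

# Hypothetical phrase list; extend it with the vague attributions your editors see most.
VAGUE_ATTRIBUTIONS = re.compile(
    r"\b(studies show|research shows|experts agree|according to research)\b",
    re.IGNORECASE,
)
# Dollar amounts, percentages, and multipliers like "3x".
STATISTIC = re.compile(r"\$\d[\d,.]*|\b\d[\d,.]*\s?(?:%|percent\b|x\b)", re.IGNORECASE)


def flag_sentences(text: str) -> list[tuple[str, str]]:
    """Return (reason, sentence) pairs for a human editor to review."""
    flags = []
    # Naive sentence split: ., !, or ? followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        if VAGUE_ATTRIBUTIONS.search(sentence):
            flags.append(("unattributed claim", sentence))
        if STATISTIC.search(sentence):
            flags.append(("statistic needs a source", sentence))
    return flags


if __name__ == "__main__":
    draft = (
        "Research shows that teams move faster. "
        "Our tool can increase conversion by 3x. "
        "It exports to WordPress."
    )
    for reason, sentence in flag_sentences(draft):
        print(f"[{reason}] {sentence}")
```

Notice that it only flags. It never decides whether the claim is true. That's the split I'm arguing for: the script shrinks the haystack, and you make the call.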

Red flags that a tool will just create more rework for you

Watch out for these during any pilot or demo. They're classic signs of a "60% tool."

- Unsourced confidence: The AI writes "research shows that..." with no source. It sounds authoritative, but it's just making it up.

- Plausible but wrong competitor claims: The tool describes a competitor's feature set in a way that feels about right, but it's six months out of date.

- No QA layer: If a platform doesn't have a specific step for editorial flags or fact-checking, it's telling you that part is your problem.

- First-draft framing: If the vendor keeps talking about "amazing output quality" but never mentions review steps, they are selling you the 60%.

How do platforms keep content brand-aligned over time (not just "set a tone" once)?

The difference between "brand voice" and "brand strategy" (and why tools usually mix them up)

Most AI tools have a box where you can paste a few sentences about your tone, like "We're professional but friendly." That's brand voice.

Brand strategy is different. It's about what problems you solve, which competitors you position against, and which claims are your core differentiators. I see so many teams get this wrong. They get content that sounds like them but says all the wrong things.

What to test: positioning, forbidden claims, competitive language, and persona fit

When you're in a pilot, run these specific tests:

- Forbidden phrases: Tell the platform three phrases your brand would never, ever use. See if they show up in the drafts.

- Competitive comparisons: Generate a "vs." article. Does the framing reflect your actual market positioning, or does it give a generic, neutral overview?

- Persona language: Does the output use the same words and phrases your ideal customers use to describe their problems?

- Positioning under pressure: Generate five articles in the same topic cluster. Does the brand's unique angle hold up across all five, or does it drift into generic advice by the third one?

Systems that let you encode your brand strategy as structured context, not just a fluffy tone description, are the ones that will hold up when you start publishing at scale.
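The forbidden-phrases test is simple enough to script, which matters once you're checking five drafts instead of one. Here's a minimal sketch, assuming you have each draft as plain text. The `find_forbidden` name and the sample phrases are hypothetical.

```python
import re


def find_forbidden(drafts: dict[str, str], forbidden: list[str]) -> dict[str, list[str]]:
    """Map each draft title to the banned phrases it contains (case-insensitive, whole phrase)."""
    hits = {}
    for title, body in drafts.items():
        found = [
            phrase for phrase in forbidden
            # Lookarounds keep "scale" from matching inside "scaled".
            if re.search(r"(?<!\w)" + re.escape(phrase) + r"(?!\w)", body, re.IGNORECASE)
        ]
        if found:
            hits[title] = found
    return hits


if __name__ == "__main__":
    banned = ["game-changing", "best-in-class", "synergy"]
    drafts = {
        "Draft 1": "Our game-changing platform handles the last 40%.",
        "Draft 2": "A plain article with a clear argument.",
    }
    print(find_forbidden(drafts, banned))
```

Run it across all five articles from the "positioning under pressure" test. If a banned phrase shows up in draft four but not draft one, that tells you the brand context is drifting.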

How to avoid the "generic AI intro and bland takeaways" pattern

This is the quiet brand killer. You publish 20 articles that are all technically fine, but none of them are distinctly yours. They all have that same bland, "In today's fast-paced world..." intro and a conclusion that says nothing. Over time, your blog becomes invisible.

The fix is structural. Every single article needs an editorial stance, a specific argument it's trying to make. Platforms that force you to fill out a "point of view" or "core argument" field in the brief are your friend here. They enforce the discipline that prevents the generic mush you're worried about.

How do platforms optimize for SEO and AEO during the last 40% (so you rank and get cited)?

SEO vs. AEO: what has to change in your final review

SEO in the last 40% is mostly about coverage and structure: making sure your keywords are in the right places, your headings are logical, and your meta description matches search intent. You know the drill.

AEO (Answer Engine Optimization) is different. AI answer engines are pattern-matching for trustworthy, structured, self-contained answers. For them, your content needs:

- Question-formatted headers, which are far more citable than generic noun phrases.

- Self-contained sections that answer one question completely.

- Tables and structured lists, which can be parsed and quoted directly.

- Specific, attributable claims, which are stronger than vague statements.

- A "definition-first" structure, where you state the core claim in the first sentence and then support it.

Your last 40% process has to solve for both SEO and AEO now.

AI-friendly structures that are more likely to get you cited

Structures AI engines love:

- Direct question headers with a 2–4 sentence answer right below.

- Numbered lists for any kind of process.

- Comparison tables with clear, simple headers.

- Definitions in a bolded lead sentence.

- Named frameworks that are original and specific (like "the last-40% checklist").

Structures that get ignored:

- Long, narrative paragraphs with no clear sub-points.

- Vague overviews ("AI tools offer many benefits...").

- Section headers that promise more than the text delivers.

- Intros that ramble on before making a point.

AEO formatting needs to be as automatic as checking the meta description. It should be part of the editorial gate, not an afterthought.
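If you want that gate to be checkable, one option is to count the sections that match the "question header plus a 2–4 sentence answer" pattern. Here's a hedged sketch. It assumes you've already split the article into (header, body) pairs, its sentence counting is naive (decimals and abbreviations will throw it off), and the function names are hypothetical.

```python
import re

MIN_AEO_SECTIONS = 2  # pass criterion from the scorecard


def count_sentences(paragraph: str) -> int:
    """Naive count: runs of text that end in ., !, or ?."""
    return len(re.findall(r"[^.!?]+[.!?]+", paragraph))


def aeo_ready_sections(sections: list[tuple[str, str]]) -> list[str]:
    """Return headers phrased as questions whose first paragraph is a 2-4 sentence answer."""
    ready = []
    for header, body in sections:
        first_paragraph = body.strip().split("\n\n", 1)[0]
        if header.strip().endswith("?") and 2 <= count_sentences(first_paragraph) <= 4:
            ready.append(header)
    return ready


if __name__ == "__main__":
    article = [
        ("What is AEO?",
         "AEO is answer engine optimization. It structures content so AI engines can quote it."
         "\n\nMore detail follows here."),
        ("Overview", "AI tools offer many benefits. They are fast."),
    ]
    ready = aeo_ready_sections(article)
    print(ready, "PASS" if len(ready) >= MIN_AEO_SECTIONS else "NEEDS WORK")
```

This only checks structure. Whether the answer is actually good is still an editor's call.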

What "built-in optimization" should actually look like

The old model of tool-hopping was miserable. You'd draft in one tool, check it in a second tool like Surfer, rewrite it, check links in a third, and build metadata by hand. Every hop adds friction and burns time I know you don't have.

"Built-in" means keyword coverage, heading structure, internal links, and metadata are all generated as part of the writing process, not audited after the fact. The draft shouldn't need a scoring tool run on it, because the optimization was baked in from the start. When you're talking to vendors, ask: "Does optimization happen during creation or after?" If it's after, you're just buying a draft generator with a scoring tool duct-taped to it. It's why you also have to check if they offer post-publish visibility. Some platforms, like DeepSmith, build SEO and AEO into the pipeline and pair it with AI Visibility tracking, which finally connects optimization to measurement.

How do platforms handle internal linking, assets, and publishing-ready formatting?

Internal links: what to automate, what to keep human

Internal linking isn't hard; it's just slow and tedious. This is a perfect job for an AI. A good system should scan your content library, find opportunities based on anchor text, and suggest or insert the links right where they make sense. You still need a human eye to confirm the link is truly the best one for the reader. But this workflow takes five minutes instead of 45. It's a huge win.
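Here's roughly what the "scan the library, match on anchor text, suggest a link" step could look like in its simplest form. This is a sketch under assumptions, not any vendor's implementation. The `Page` structure, the phrase lists, and the example.com URLs are all hypothetical, and a real system would likely rank matches by relevance rather than take the first hit.

```python
import re
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    anchor_phrases: list[str]  # phrases that should link to this page


def suggest_links(draft: str, library: list[Page], max_links: int = 5) -> list[tuple[str, str]]:
    """Return up to max_links (anchor text, url) suggestions, at most one per page."""
    suggestions = []
    for page in library:
        for phrase in page.anchor_phrases:
            match = re.search(r"(?<!\w)" + re.escape(phrase) + r"(?!\w)", draft, re.IGNORECASE)
            if match:
                # Keep the anchor text exactly as it appears in the draft.
                suggestions.append((match.group(0), page.url))
                break  # one link per target page is enough
        if len(suggestions) == max_links:
            break
    return suggestions


if __name__ == "__main__":
    library = [
        Page("https://example.com/blog/aeo-guide", ["answer engine optimization", "AEO"]),
        Page("https://example.com/blog/pricing", ["pricing model"]),
    ]
    draft = "Answer engine optimization changes how you structure a post."
    for anchor, url in suggest_links(draft, library):
        print(f"{anchor!r} -> {url}")
```

The output is a list of suggestions, not edits to the draft. That leaves room for the human check on whether each link is really the best one for the reader.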

Multimedia and "content assets" in practice: tables, images, and what to standardize

Missing assets are one of the most common bottlenecks in publishing. You think you're done, but then you spend an hour looking for a decent stock photo. Standardize your workflow here.

- Tables: Any section that compares options or lays out steps should probably be a table.

- Cover images: These should be automatically generated or built from a template, not sourced by hand every time.

- Alt text: This is a must for accessibility and SEO, and it should be automated.

CMS readiness: the final copy-paste step that shouldn't exist

The last soul-crushing, manual step is copy-pasting from a Google Doc into WordPress and then fixing all the broken formatting. A truly "publishing-ready" output means headers are tagged, images are alt-tagged, and metadata is populated. Direct integrations that push the content straight to your CMS eliminate this step entirely. If a platform's workflow ends with a copy-paste, it hasn't solved the whole problem.

What does a winning human-in-the-loop workflow look like (so AI speeds you up, not dumbs you down)?

The minimal workflow that actually works for lean teams

You don't need a six-person editorial department. I've seen this simple workflow work wonders for small, scrappy teams:

- AI generates the brief and draft, including links, metadata, and basic formatting.

- A writer reviews it for strategic angle and brand alignment. Their only job is to ask: does this piece say the right things for the right reasons?

- An editor does a final read for accuracy and voice, double-checking product details, competitive mentions, and any cited data.

- An SME only gets looped in for technically or legally sensitive content, not every single article.

- Publish and distribute.

The key insight here is the division of labor. The AI handles assembly. The humans handle judgment. That is a fundamentally different (and better) approach than "AI writes something, and then a human rewrites most of it."

Approval gates that protect quality (and where most teams go wrong)

Most lean teams I know either have no approval gates or they have so many that a simple blog post takes four rounds of review. You only need a few gates that really matter: a check for claim accuracy, a check for brand positioning, and one final read for anything that reads like generic AI output. That's it for most articles. Get rid of vague, "does this feel right to you?" checks that aren't tied to specific criteria. Vague quality gates just create vague delays.

Evergreen refresh cycles: using AI to keep your winners fresh

Publishing new content is the obvious use case, but refreshing old content is where a lot of teams leave money on the table. An AI-assisted refresh is simple: you identify articles with declining rankings, ask the AI to update the accuracy-sensitive sections (like stats or product details), add new AEO-formatted sections to answer new questions, and re-run the SEO check. This can turn a full rewrite into a two-hour task.

The reality for any team trying to scale is that the content pipeline has to keep moving, even when you're swamped. This means being able to schedule content in advance and building refresh cycles right into your calendar. Platforms like DeepSmith's Content Studio and Autowrite are built for exactly this. Content Studio handles the multi-step pipeline for new stuff, while Autowrite can schedule generation for refreshes on set dates. The Agent Library can then turn those articles into social posts and newsletter snippets, so your distribution doesn't fall behind either.

How to make the final decision: cost, risk, measurement, and the questions that reveal the truth

Total cost per publishable article: the only metric that matters

The monthly subscription price is the smallest part of the equation. The real cost includes your team's time spent on briefs, SEO, linking, fact-checking, and finding assets. Let's be conservative and say your team's blended cost is $75 an hour. A platform that saves you three hours per article is worth $225 per article in time savings (3 × $75). A $500/month subscription that saves 30 hours of team time a month returns $2,250 in time, so it pays for itself in about the first week. You have to run this calculation for every platform you look at. The one with the lowest true cost per publishable article is the winner.
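Here's that calculation as a tiny function you can reuse for each platform. The $75 hourly rate and the $500 subscription come from the example above. The 10 articles a month and the 6-hour baseline are hypothetical numbers I picked so that saving three hours per article adds up to 30 hours. Plug in your own.

```python
def cost_per_article(subscription_per_month: float, articles_per_month: int,
                     hours_per_article: float, hourly_rate: float = 75.0) -> float:
    """True cost of one publishable article: its share of the subscription plus team time."""
    if articles_per_month <= 0:
        raise ValueError("articles_per_month must be positive")
    return subscription_per_month / articles_per_month + hours_per_article * hourly_rate


if __name__ == "__main__":
    # Hypothetical baseline: no platform, 6 hours of team time per article.
    baseline = cost_per_article(0, 10, 6)
    # With a $500/month platform that cuts the work to 3 hours per article.
    with_platform = cost_per_article(500, 10, 3)
    print(f"baseline: ${baseline:.2f}, with platform: ${with_platform:.2f}")
```

With those inputs the baseline works out to $450 per article and the platform to $275. The subscription adds $50 per article and removes $225 of team time.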

The two-week pilot plan: what to test, what to track, and what success looks like

You can figure out everything you need to know about a platform's last-40% capability in two weeks.

Week 1: Production test

- Run 3 real articles through the platform, end to end.

- Track the time you spend on each article, at each stage.

- Score the final output against the publishability scorecard.

- Make a note of every single manual step you still had to complete.

Week 2: Measurement and brand test

- Check its post-publish AI visibility tracking. Define 3–5 buyer prompts and see if it can track your citation rate.

- Run the brand drift tests: use forbidden phrases and test its competitive framing.

- Test an evergreen refresh on one of your existing articles.

Your success criteria: The total time per publishable article is at least 40% lower than your current baseline, you had zero rewrites due to factual errors, and every item on the last-40% checklist was completed within the platform.
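If you track your hours during the pilot, the pass/fail call at the end can be a three-line check. Here's a minimal sketch. It assumes you measured a baseline of hours per article before the pilot, and the `pilot_passed` name and its parameters are hypothetical.

```python
def pilot_passed(baseline_hours: float, pilot_hours: list[float],
                 factual_rewrites: int, manual_steps_left: int) -> bool:
    """Apply the three success criteria: 40% time reduction, no factual rewrites, no manual steps."""
    if baseline_hours <= 0 or not pilot_hours:
        raise ValueError("need a positive baseline and at least one pilot article")
    average = sum(pilot_hours) / len(pilot_hours)
    reduction = (baseline_hours - average) / baseline_hours
    return reduction >= 0.40 and factual_rewrites == 0 and manual_steps_left == 0


if __name__ == "__main__":
    # Hypothetical pilot: 6-hour baseline, three articles tracked in week 1.
    print(pilot_passed(6.0, [3.0, 3.5, 2.5], factual_rewrites=0, manual_steps_left=0))
```

The function is strict on purpose. One factual rewrite or one leftover manual step fails the pilot, because those are exactly the costs that come back at scale.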

Vendor questions that uncover their last-40% capability

Ask these questions in your next demo. The answers will tell you everything you need to know.

- "Walk me through what happens after the draft is generated. What does your platform do to help me get this piece ready to publish?"

- "How does your system handle brand voice and product accuracy? Is it just a style guide I paste in, or is it more structured?"

- "Show me an internal link being inserted during creation, not as a post-draft editing step."

- "Where does AEO optimization happen in your workflow?"

- "What do I get after I publish? Can I track if this article is getting cited in AI answers?"

- "What's the one manual step I can't remove from your workflow?"

That last question is my favorite. It's the most important. A good vendor will have an honest answer for you.

Connecting production to measurement

You can produce the world's best content and still lose in AI search if you have no idea what prompts your buyers are actually asking. Most teams I talk to only find this out reactively. That's a fire alarm, not a measurement system. Platforms like DeepSmith AI Visibility, with its Competitors module and Overview dashboard, can track which of your competitors' pages are earning AI citations and show you the gaps your content can fill. This is how you finally close the loop between your production investment and real AI visibility outcomes.

Build your "last 40%" scorecard and run a 2-week pilot

The platforms that are actually worth your money aren't competing on who can write a first draft the fastest. They're competing on how much of your post-draft workload they can absorb. They're competing on whether they can give you the SEO structure, the AEO formatting, and the AI visibility tracking you need to make this investment actually pay off.

So take the publishability scorecard from this article. Pick two or three platforms that look promising. Run the two-week pilot. And track your time per publishable article, not how pretty the demo looks. The platform that scores highest on the last 40% is your answer.