You're spending $50 a month on AI tools and still staring down 60-hour work weeks. Sound familiar? If you're nodding, you know something doesn't add up.

I've been there. The subscription is cheap, but the workflow is brutally expensive. Every single article runs a gauntlet. You've got the brief, the draft, the SEO review, the internal linking slog, sourcing images, writing metadata, wrestling with the CMS, and then, if you have any energy left, distribution. The AI tool wrote a draft, sure. But you, my friend, are doing everything else.

This isn't just another article about AI. It's an argument that we're all optimizing for the wrong number. The right number is your total cost of ownership (TCO) per publish-ready, distribution-ready asset. This is the fully loaded cost, in both dollars and hours, to get from a topic idea to a piece of content that's live, linked, and actually working for you.

When you run the real numbers, that "free" tool often isn't cheaper. It just looks that way on paper. So let's build a framework to see the real costs and use them to buy smarter.

What's the "true cost" of a free AI content stack (and why is subscription price a trap)?

Most content budget conversations are about subscriptions. It's $20 for ChatGPT, $99 for an SEO tool, maybe $199 for a bigger suite. Leadership sees those numbers, nods, and the budget is approved. Easy.

But that total doesn't include you. It doesn't include your writer's revision time, the manual SEO pass you have to do, or the 45 minutes you lose hunting for internal links. It doesn't include the cover image your designer is three days behind on because their plate is also full. These costs are real, they are massive, and they're invisible because they show up in salaries, not tidy software line items.

The hidden bill: your team's time is the most expensive line item

I learned this the hard way. A senior content marketer at a growing SaaS company can easily cost $80,000–$130,000 a year once you factor in salary, benefits, and overhead. Spread across 2,080 working hours, that's roughly $40–$65 per hour. When you spend three hours wrestling one AI-assisted article into shape, you've just spent $120–$195 of your own time on a single piece of content.

And that's just you. At eight articles a month, your three hours per piece alone come to roughly $960–$1,560 in labor, and that's before a writer at $60–$90K touches anything. It's also before you pay for a single tool. Labor is the budget. The subscriptions are a rounding error.

The "last 40%" problem: where AI drafts create heavy manual work

Generic AI tools are pretty good at generating a C+ first draft. They are not good at producing a finished, strategic asset. That gap, which I call the "last 40%," is where all your time disappears.

This is the stuff that feels like your real job:

- Rewriting the bland, generic intro to add an actual editorial angle.

- Running the full SEO pass, checking keyword coverage, header structure, and search intent.

- Manually inserting internal links, which, for a thorough job, can take an hour. (You know it's true.)

- Sourcing or creating a decent cover image that doesn't look like every other stock photo.

- Writing the title tag, meta description, and social sharing tags.

- Formatting the whole thing for the CMS and finally hitting publish.

- Trying to create distribution assets for social and newsletters, though this is usually the first thing to get dropped when you're overwhelmed.

This "last 40%" isn't a temporary problem. It's a structural part of using a cheap, disconnected stack. It doesn't get cheaper as you publish more; you just get more burned out.

A decision-ready definition: TCO per publish-ready + distribution-ready asset

So let's use a better unit of measurement. What matters is the total dollars spent per article that is live, SEO-optimized, internally linked, and pushed to at least one distribution channel.

Not "cost per draft." Not "cost per tool seat." The cost per finished, distributed asset. If your stack produces drafts that sit in Google Docs for weeks or need multiple rounds of QA, those delays and rework loops are part of your cost model. Let's own it.

What costs should you include in TCO for manual AI content workflows?

To build a real cost model, we have to be honest with ourselves and capture every single category. Here's the full list.

Tooling costs (the obvious ones)

Start here because it's the easiest. List every subscription your team uses for content: AI writers like ChatGPT, SEO tools like Surfer or Ahrefs, image tools like Canva, project management tools like Notion, and social schedulers like Buffer. Don't forget to add per-article credit usage, team seat costs, and any sneaky overage fees.

Labor costs (the ones we all undercount)

This is the big one. For each article, map who touches it and for how long. Be brutally realistic.

- Brief creation: Who is building the brief? How long does that take?

- Research: Is a person pulling sources and data before the AI gets involved?

- Drafting: How much time is spent prompting, re-prompting, and stitching together AI output?

- Editorial QA: Who reviews for accuracy and, more importantly, brand voice?

- SEO review: Who is checking keyword coverage and search intent alignment?

- Internal linking: Who does this chore? How long does it actually take?

- Images & publishing: Who makes the visuals and gets the article formatted in the CMS?

- Distribution: Who writes the social posts and newsletter blurbs?

Document each step, the role, and a realistic time estimate. Then assign a cost using a loaded hourly rate. A good back-of-the-napkin formula is (annual salary ÷ 2,080 hours) × 1.3 to account for benefits and overhead.
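Here's a minimal sketch of that formula in Python. The function names and the $88,000 salary are my own illustrative choices, not numbers from any particular team.

```python
HOURS_PER_YEAR = 2_080      # 40 hours x 52 weeks
OVERHEAD_MULTIPLIER = 1.3   # benefits and overhead, per the napkin formula


def loaded_hourly_rate(annual_salary: float) -> float:
    """Back-of-the-napkin loaded cost of one hour of someone's time."""
    return annual_salary / HOURS_PER_YEAR * OVERHEAD_MULTIPLIER


def stage_cost(annual_salary: float, hours: float) -> float:
    """Labor cost of one workflow stage for one article."""
    return loaded_hourly_rate(annual_salary) * hours


# Hypothetical example: an $88,000 salary and a 1.5-hour brief.
print(f"${loaded_hourly_rate(88_000):.2f} per loaded hour")
print(f"${stage_cost(88_000, 1.5):.2f} for the brief")
```

Run it once per role, then reuse the rates for every stage that role touches.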

Workflow overhead costs (the copy-paste tax)

Every time work moves between people or tools, you pay a little friction tax. For teams running a stack of four to six different tools, this coordination overhead (copy-paste errors, version control confusion, "quick sync" meetings) can eat up 20–30% of the total time spent on an article.

Risk-adjusted costs (brand drift, fake stats, compliance headaches)

"Cheap" tools can create incredibly expensive problems. A generic AI voice requires heavy editing to sound like you. I've seen AI "hallucinate" statistics or product details that have to be caught in QA (which costs time) or, even worse, corrected after publishing (which costs trust). For anyone in a regulated industry, the cost of a compliance review for every single AI draft is very real. One major rewrite can easily cost $400–$800 in labor.

Opportunity costs (the articles you don't ship)

This one hurts. If you have 30 critical topic gaps identified but you can only ship six articles a month because each one is a 12-hour nightmare, you're leaving a ton of compounding organic value on the table. That deferred authority is a very real cost.
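To put a rough number on that backlog, here's a small sketch. The 72-hour monthly budget is simply 6 articles × 12 hours from the example above, and the 4-hour figure is a hypothetical target, not a measured result.

```python
import math


def months_to_clear(backlog: int, hours_per_month: float, hours_per_article: float) -> int:
    """Whole months needed to publish every topic in the backlog."""
    articles_per_month = math.floor(hours_per_month / hours_per_article)
    if articles_per_month < 1:
        raise ValueError("not enough hours to ship even one article a month")
    return math.ceil(backlog / articles_per_month)


HOURS_PER_MONTH = 72  # assumption: 6 articles x 12 hours each

print(months_to_clear(30, HOURS_PER_MONTH, 12))  # today's 12-hour workflow -> 5
print(months_to_clear(30, HOURS_PER_MONTH, 4))   # hypothetical 4-hour workflow -> 2
```

Same team, same hours, and the gap list closes in two months instead of five. That difference is the opportunity cost.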

How do you calculate TCO per article step-by-step (with a model you can reuse)?

Alright, let's get practical. Here's how you build the actual spreadsheet.

Step 1 — Map your workflow stages from "topic" to "distributed"

Write down every single stage, in order: topic selection, brief creation, research, first draft, structural edit, SEO review, internal linking, image creation, metadata, CMS publishing, and distribution. If you often skip a step like internal linking, note that. It's a risk, not a savings.

Step 2 — Assign owners, time ranges, and frequency

For each stage, identify the owner (e.g., Content Lead, Writer) and estimate a realistic time range. This is how you turn your messy workflow into measurable inputs.

Step 3 — Convert time to dollars (loaded cost) and add tool costs

Use a simple formula for the loaded hourly rate: (Annual salary × 1.3) ÷ 2,080. For tool costs, divide the monthly subscription by the number of articles you published that month to get a per-article cost. For instance, a $199/month tool for 10 articles is about $20 per article.
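The tool-cost half of this step can be sketched like so. The first call is the $199 example from above; the second stack is made up, with placeholder tool names and prices.

```python
def tool_cost_per_article(monthly_subscriptions: dict[str, float], articles_published: int) -> float:
    """Spread one month's subscription spend across that month's articles."""
    if articles_published <= 0:
        raise ValueError("need at least one published article to amortize costs")
    return sum(monthly_subscriptions.values()) / articles_published


# The example from the text: one $199/month tool, 10 articles.
print(round(tool_cost_per_article({"seo_suite": 199.0}, 10), 2))  # 19.9

# Hypothetical stack; names and prices are placeholders.
stack = {"ai_writer": 20.0, "seo_tool": 99.0, "design": 15.0}
print(round(tool_cost_per_article(stack, 8), 2))  # 16.75
```

Notice the flip side: in a slow month with fewer articles, the per-article tool cost goes up even though the invoice didn't change.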

Step 4 — Add rework and variance (your "it depends" buffer)

Not every article sails through cleanly. I've found that if about 20% of your articles need a significant revision, it's safest to add a 20% buffer to your average time estimate. If one in ten needs a legal or compliance review, add that cost proportionally.
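One way to model that buffer, as a sketch: apply the percentage to the base cost, then add the occasional review as an expected value (how often it happens times what it costs). The $400 base and $150 review are hypothetical inputs.

```python
def buffered_cost(
    base_cost: float,
    rework_buffer: float = 0.20,
    compliance_rate: float = 0.0,
    compliance_review_cost: float = 0.0,
) -> float:
    """Average per-article cost once rework and occasional reviews are included."""
    expected_compliance = compliance_rate * compliance_review_cost
    return base_cost * (1 + rework_buffer) + expected_compliance


# Hypothetical: $400 base cost, 20% buffer, 1 in 10 articles needs a $150 review.
print(round(buffered_cost(400, 0.20, 0.10, 150), 2))
```

The review only adds $15 to the average article here, but it's $15 you'd otherwise never see in the model.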

Step 5 — Output: your baseline TCO

Summing it all up (labor + tools + overhead + rework) gives you your TCO per article. Multiply that by your target monthly volume, and you have your true monthly content operations budget.

Table: TCO worksheet template (copy this structure)

Fill this in with your own honest numbers. I've seen lean SaaS teams land anywhere between $400 and $900 per article when they actually do the math.

| Workflow Stage | Owner Role | Hours (Low) | Hours (High) | Loaded Rate ($/hr) | Cost (Low) | Cost (High) |
|---|---|---|---|---|---|---|
| Topic selection | Content Lead | 0.25 | 0.5 | $55 | $14 | $28 |
| Brief creation | Content Lead | 1.0 | 2.0 | $55 | $55 | $110 |
| Research | Writer | 0.5 | 1.5 | $35 | $18 | $53 |
| First draft (AI-assisted) | Writer | 1.0 | 2.0 | $35 | $35 | $70 |
| Structural edit | Content Lead | 1.0 | 2.0 | $55 | $55 | $110 |
| SEO review | Content Lead | 0.5 | 1.0 | $55 | $28 | $55 |
| Internal linking | Content Lead | 0.5 | 1.0 | $55 | $28 | $55 |
| Image / cover | Designer | 0.25 | 1.0 | $40 | $10 | $40 |
| Metadata | Writer | 0.25 | 0.5 | $35 | $9 | $18 |
| CMS publish | Writer | 0.25 | 0.5 | $35 | $9 | $18 |
| Distribution assets | Content Lead | 0.5 | 1.0 | $55 | $28 | $55 |
| Tool costs (per article) | — | — | — | — | $25 | $40 |
| Rework buffer (20%) | — | — | — | — | $64 | $130 |
| TOTAL PER ARTICLE | — | — | — | — | $378 | $782 |
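If you'd rather script the worksheet than maintain a spreadsheet, here's a rough sketch using the hours and rates from the table. I'm assuming the 20% buffer applies to labor plus tools, and the eight-article volume is hypothetical. The totals land a few dollars under the table's because the table rounds each row before summing.

```python
# (stage, hours_low, hours_high, loaded_rate) copied from the worksheet
STAGES = [
    ("Topic selection", 0.25, 0.5, 55),
    ("Brief creation", 1.0, 2.0, 55),
    ("Research", 0.5, 1.5, 35),
    ("First draft (AI-assisted)", 1.0, 2.0, 35),
    ("Structural edit", 1.0, 2.0, 55),
    ("SEO review", 0.5, 1.0, 55),
    ("Internal linking", 0.5, 1.0, 55),
    ("Image / cover", 0.25, 1.0, 40),
    ("Metadata", 0.25, 0.5, 35),
    ("CMS publish", 0.25, 0.5, 35),
    ("Distribution assets", 0.5, 1.0, 55),
]
TOOL_COST = (25, 40)    # per article, low and high
REWORK_BUFFER = 0.20    # assumption: applied to labor plus tools


def tco_per_article() -> tuple[float, float]:
    """Return (low, high) TCO per article from the worksheet rows."""
    labor_low = sum(h_low * rate for _, h_low, _, rate in STAGES)
    labor_high = sum(h_high * rate for _, _, h_high, rate in STAGES)
    low = (labor_low + TOOL_COST[0]) * (1 + REWORK_BUFFER)
    high = (labor_high + TOOL_COST[1]) * (1 + REWORK_BUFFER)
    return low, high


low, high = tco_per_article()
articles_per_month = 8  # hypothetical target volume
print(f"Per article: ${low:.2f} to ${high:.2f}")
print(f"Monthly budget: ${low * articles_per_month:,.2f} to ${high * articles_per_month:,.2f}")
```

Swap in your own stages, hours, and rates. The structure matters more than my example numbers.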

How do you compare AI tools by editing time and SEO impact (not just "quality")?

I learned the hard way that "quality" is too vague to be a useful metric. Here's how to measure what actually moves the needle.

Define "publish-ready" for your team (your non-negotiables)

Before you test any tool, write down your acceptance criteria. This is your definition of "done." For example:

- Primary keyword is in the H1, first paragraph, and 2–3 subheadings.

- No generic AI opener. (If I see "In today's fast-paced world..." one more time, I might scream.)

- At least three relevant internal links are placed.

- No unverified statistics or made-up claims.

- The brand voice is specific, direct, and sounds like a peer talking to a peer.

- Metadata is complete and unique.

This becomes your scoring rubric for every tool.
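If you want the checklist to be mechanical rather than a gut call, it can be sketched as a simple function. The `Draft` fields are assumptions I made up for this example, and the judgment-based items (voice, generic opener, unverified claims) are flags a human editor sets.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    """Hypothetical draft record; the fields are assumptions for this sketch."""
    h1: str
    first_paragraph: str
    subheadings: list[str]
    internal_links: int
    unverified_claims: int      # count flagged during a human fact-check
    voice_approved: bool        # editor's judgment call
    has_generic_opener: bool    # editor's judgment call
    metadata_complete: bool


def publish_ready_failures(draft: Draft, keyword: str) -> list[str]:
    """Return the criteria a draft fails; an empty list means publish-ready."""
    kw = keyword.lower()
    failures = []
    if kw not in draft.h1.lower():
        failures.append("keyword missing from H1")
    if kw not in draft.first_paragraph.lower():
        failures.append("keyword missing from first paragraph")
    if sum(kw in h.lower() for h in draft.subheadings) < 2:
        failures.append("keyword in fewer than 2 subheadings")
    if draft.has_generic_opener:
        failures.append("generic AI opener")
    if draft.internal_links < 3:
        failures.append("fewer than 3 internal links")
    if draft.unverified_claims > 0:
        failures.append("unverified statistics or claims")
    if not draft.voice_approved:
        failures.append("brand voice not approved")
    if not draft.metadata_complete:
        failures.append("metadata incomplete")
    return failures


draft = Draft(
    h1="Content TCO: what an article really costs",
    first_paragraph="Content TCO is the fully loaded cost of one published article.",
    subheadings=["What goes into content TCO", "How to calculate content TCO", "Next steps"],
    internal_links=1,
    unverified_claims=0,
    voice_approved=True,
    has_generic_opener=False,
    metadata_complete=True,
)
print(publish_ready_failures(draft, "content TCO"))  # ['fewer than 3 internal links']
```

The list of failures doubles as your edit log for the benchmark.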

Run a controlled benchmark: same brief, same editor, timed edits

This is the only way to get real data. Give each tool the exact same brief and have the same editor review each output. Start a timer and see how long it takes to get from the raw output to your definition of "publish-ready." Log every type of edit you have to make. Do this for three articles per tool and average the editing time for a comparison you can actually defend.
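Averaging the timings is trivial, but writing it down keeps everyone honest. A minimal sketch, with made-up tool names and minutes:

```python
from statistics import mean

# Hypothetical timings: minutes from raw output to publish-ready,
# three articles per tool, same brief and same editor.
edit_minutes = {
    "tool_a": [95, 110, 80],
    "tool_b": [45, 60, 50],
}


def average_edit_minutes(timings: dict[str, list[float]]) -> dict[str, float]:
    """Average editing time per tool."""
    return {tool: mean(minutes) for tool, minutes in timings.items()}


averages = average_edit_minutes(edit_minutes)
# Print fastest tool first.
for tool, minutes in sorted(averages.items(), key=lambda item: item[1]):
    print(f"{tool}: {minutes:.1f} min average")
```

Multiply the difference by your loaded hourly rate and your monthly volume to see what the faster tool is actually worth.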

Score the output on dimensions that drive SEO outcomes

Rate each draft on these points:

- Intent match: Does it actually answer the searcher's question at the right depth?

- Structure quality: Are the H2s logical and helpful?

- Keyword coverage: Does it use primary and related terms naturally?

- Internal link readiness: Does the draft give you obvious, logical places to insert links?

- AEO formatting: Does it use direct answers, definitions, and lists that AI engines can easily cite?

Tools that score high here require less work from you, which directly lowers your TCO.
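A simple way to turn those ratings into one comparable number is sketched below. The 1–5 scale and the equal weighting are my assumptions; weight the dimensions differently if one matters more to you.

```python
DIMENSIONS = (
    "intent_match",
    "structure_quality",
    "keyword_coverage",
    "internal_link_readiness",
    "aeo_formatting",
)


def rubric_score(ratings: dict[str, int]) -> float:
    """Average of 1-5 ratings across the five dimensions."""
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    return sum(ratings[d] for d in DIMENSIONS) / len(DIMENSIONS)


# Hypothetical ratings for one draft.
draft_ratings = {
    "intent_match": 4,
    "structure_quality": 3,
    "keyword_coverage": 4,
    "internal_link_readiness": 2,
    "aeo_formatting": 2,
}
print(rubric_score(draft_ratings))  # 3.0
```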

Watch for failure modes that inflate TCO

The rework that burns the most time is often depressingly predictable:

- Generic intros: A 10-minute rewrite on every single article adds up fast.

- Missing editorial angle: This forces your editors (or you) to do the strategic thinking the tool should have handled.

- Hallucinated claims: This forces you into a line-by-line fact-check, which often takes longer than writing from scratch.

Track these issues in your benchmark. They are predictable and expensive cost drivers.

When should you consolidate into an integrated platform vs keep a multi-tool stack?

The stack makes sense when your bottleneck is strategy, not production

If your team is shipping content on time and distribution is actually happening, your best-of-breed stack is probably working just fine. The friction of integrating different tools is manageable, and you get the specialized depth each tool provides. Don't fix what isn't broken.

Consolidation wins when the bottleneck is throughput and handoffs

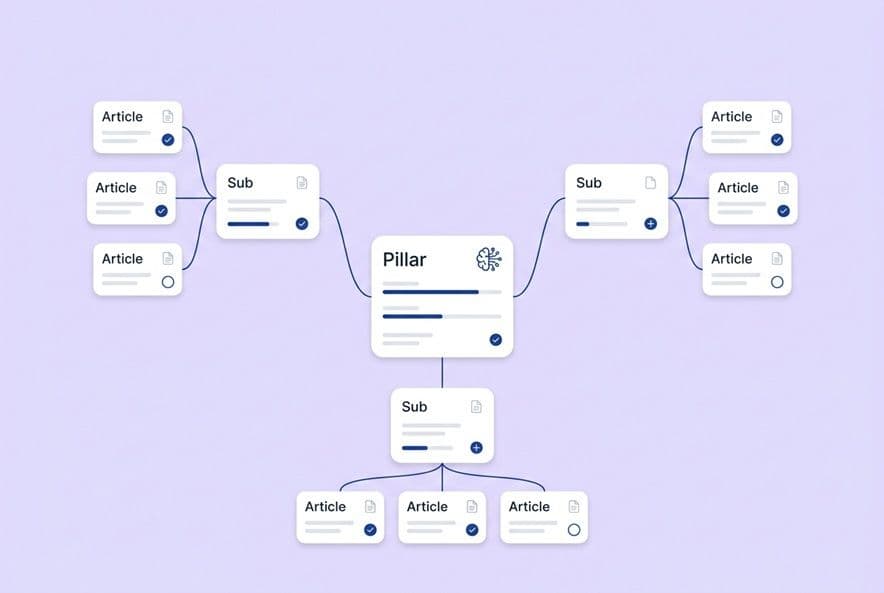

However, if you can't publish consistently because articles are always stalled, if internal linking gets skipped, or if distribution is just a pipe dream, your bottleneck is production. This is the exact moment consolidation starts to pay for itself by eliminating handoffs, context switching, and delays. Some teams I know use features like DeepSmith's Autowrite to schedule content generation on a cadence. This ensures the pipeline keeps moving even when the team is buried, with a human still governing the final approval.

The migration/transition tax (it's real)

Switching tools is a pain. You lose institutional knowledge, your team has to learn new interfaces, and publishing might slow down for a bit. Budget for this. You can reduce the pain by running a parallel pilot, migrating one content type at a time, and making sure you have exports from your old tools.

A practical note on integrated pipelines

The real case for consolidation isn't about having more features in one place; it's about eliminating the costly gaps between the tools. Platforms that connect briefing, drafting, SEO, linking, and publishing into a single workflow can compress a 10-hour manual process into 3–4 hours of human review. For example, DeepSmith's Content Studio acts like a multi-agent pipeline handling the whole process. This turns the team's job from manual assembly into strategic review and approval, which is where we should have been all along.

How do AEO/GEO and AI visibility tracking change the cost model (and the ROI model)?

And just when you thought you had a handle on things, AI search visibility becomes the new fire to put out. The work required to get your content cited in ChatGPT, Perplexity, and Google's AI results is real, and it is not free.

The new recurring tasks: prompt discovery, monitoring, and content updates

An AEO (Answer Engine Optimization) workflow adds new tasks to your plate:

- Prompt discovery: Researching the actual questions your buyers are asking these new AI platforms.

- Citation monitoring: Regularly checking which of your pages (and your competitors' pages) are being cited as sources.

- Content updates: When a competitor wins a key citation, you need to update your content or create something new to compete.

If you're not budgeting time for this, you're already falling behind.

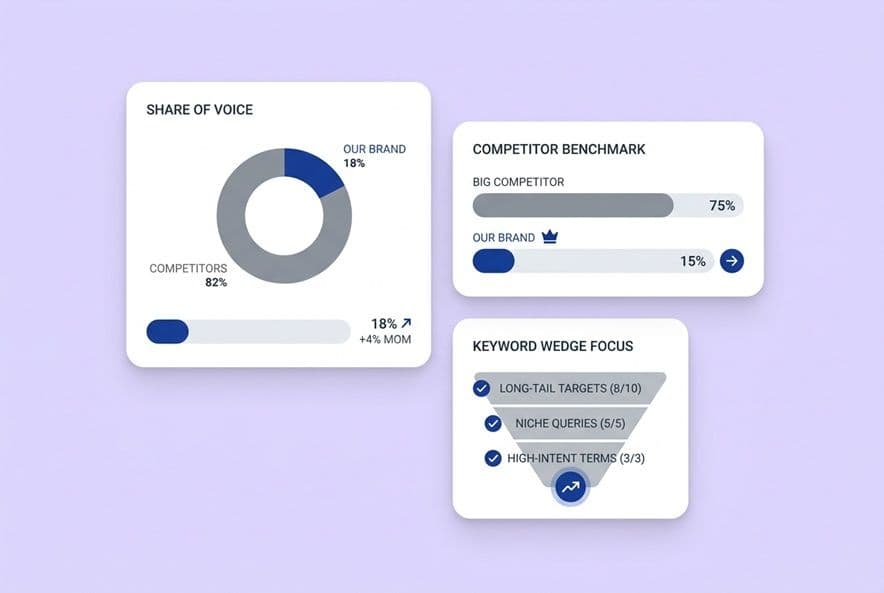

What to measure: mentions vs citations vs page attribution

Track three things: your mention rate (how often your brand name appears in an answer), your citation rate (how often your page is linked as a source), and page-level attribution (which specific pages drive those citations). A high mention rate with a low citation rate means the AI knows your brand but isn't confident in your content, which is a content strategy problem.
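If you're logging answers by hand before buying a tracking tool, the three metrics can be computed with a few lines. This is a sketch under assumptions: the `Answer` log format is hypothetical, the domains are placeholders, and the substring match on the domain is deliberately crude.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One AI answer to a tracked prompt (hypothetical log format)."""
    prompt: str
    mentions_brand: bool
    cited_urls: list[str] = field(default_factory=list)


def visibility_metrics(answers: list[Answer], domain: str) -> dict:
    """Mention rate, citation rate, and per-page citation counts."""
    total = len(answers)
    if total == 0:
        raise ValueError("no answers logged")
    mentions = sum(a.mentions_brand for a in answers)
    # An answer counts as a citation if any cited URL is on our domain.
    cited = sum(any(domain in url for url in a.cited_urls) for a in answers)
    # Count each of our pages at most once per answer.
    page_counts = Counter(
        url for a in answers for url in set(a.cited_urls) if domain in url
    )
    return {
        "mention_rate": mentions / total,
        "citation_rate": cited / total,
        "pages": page_counts.most_common(),
    }


log = [
    Answer("best content tools", True, ["https://yourbrand.example/guide"]),
    Answer("content tco", True),
    Answer("ai writing costs", True, ["https://rival.example/post"]),
    Answer("seo stack pricing", False),
]
metrics = visibility_metrics(log, "yourbrand.example")
print(metrics)
```

In this made-up log the brand is mentioned in 75% of answers but cited in only 25%, which is exactly the "known but not trusted" pattern.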

Competitive benchmarking: knowing who wins citations tells you what to write next

This is incredibly actionable intelligence. If a competitor's page is consistently cited for a key topic, you have a clear content gap and a proven format to model. This insight should be driving your editorial roadmap. Platforms are emerging to help with this, like DeepSmith's AI Search Readiness, which can replace manual spot-checking with systematic tracking.

What should you ask vendors before you commit?

I've learned to ask some very pointed questions after getting burned a few times. Here's my list.

Pricing model questions that expose the real costs

- "What happens when I go over my monthly article limit? Is there an overage fee, or does everything just stop?"

- "If I bring on a freelancer for a project, does that require a new seat at full price?"

- "Are key features like internal linking or AI visibility only on the highest tiers?"

- "What is the actual unit of cost here? Show me what three months of our estimated volume would really cost, all in."

Workflow fit questions

- "Walk me through the entire workflow, from a topic idea to a live article. How many different tools or tabs does my team need to have open?"

- "Is internal linking automatic, suggested, or fully manual?"

- "Show me exactly what the approval and review flow looks like."

- "Which CMS platforms do you integrate with, and what does the 'publish' button actually do?"

Governance and brand safety questions

- "How does the platform prevent brand voice drift when we're publishing at scale?"

- "How does the system prevent inaccurate product claims from being generated?"

This is where a platform's context infrastructure really matters. For instance, DeepSmith's Deep IQ works by storing your company positioning, product details, and brand voice as a structured source of truth. This shapes every output, which dramatically reduces rework and QA overhead.

Commitment and exit questions

- "If I sign an annual contract and the tool stops serving our needs in month four, what are my options?"

- "Can I export all of my content, including briefs, in a standard format?"

- "How long has your core feature set been stable?"

Monthly billing costs a bit more, but it buys you flexibility. In a market moving this fast, that flexibility has real value.

Pilot plan: how to get a go/no-go decision in 4 weeks

- Week 1: Produce three articles using the new tool. Log your editing time and compare it to your TCO baseline.

- Week 2: Test the internal linking, metadata, and publishing workflow. Does it actually work?

- Week 3: Evaluate the output quality against your "publish-ready" checklist.

- Week 4: Calculate the TCO per article from your trial and compare it to your baseline. If the tool cuts your total hours by 30% or more without a drop in quality, the math supports the switch.

Commit only after you have your own numbers, not just good vibes from a sales demo.
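The Week 4 rule is easy to write down so nobody relitigates it later. A sketch, assuming you score quality with a single number (say, an average rubric score); the inputs are hypothetical.

```python
def pilot_decision(
    baseline_hours: float,
    pilot_hours: float,
    baseline_quality: float,
    pilot_quality: float,
    min_hours_saved: float = 0.30,
) -> str:
    """Go/no-go based on hours saved and whether quality held."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    hours_saved = (baseline_hours - pilot_hours) / baseline_hours
    if hours_saved >= min_hours_saved and pilot_quality >= baseline_quality:
        return "go"
    return "no-go"


# Hypothetical pilots against a 10-hour baseline, quality unchanged.
print(pilot_decision(10, 6, 3.5, 3.5))  # go
print(pilot_decision(10, 8, 3.5, 3.5))  # no-go
```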

Build your TCO baseline, then evaluate tools with numbers, not vibes.

The decision to change your AI stack shouldn't be based on a slick sales demo. It should be driven by your actual cost per publish-ready, distribution-ready article.

So build that baseline first. Map your real workflow, assign honest hours and roles, and add up the costs for tools, rework, and all the new AEO tasks. Get to a real number.

Then, and only then, run a short pilot against an alternative. Measure the exact same metric: your total cost to get a finished, distributed article out the door. If the new approach cuts that number in a meaningful way without sacrificing quality, the math will make the decision for you. If it doesn't, the math tells you that, too.

The lean content team that wins isn't the one with the cheapest subscriptions. It's the one that optimizes the entire system to ship the most value, end to end.