You publish a blog post. Then you spend the next two hours manually adding internal links, reformatting it in WordPress, and making a mental note to write the LinkedIn post "later." Later never comes. The article goes live, sits there, and you're already behind on the next brief.

If that sounds like your Tuesday, you don't have a content score problem. You have a workflow problem. And that's exactly why searching for a "Surfer SEO alternative" can send you down the wrong path. You end up looking at another point solution that makes one slice of your process a little better while the rest of it stays just as painful.

I'm arguing for a different frame here. The real question isn't which tool gives you better content scores. It's whether your next tool can take over enough of the pipeline (research, briefing, drafting, optimization, linking, publishing, distribution, measurement) that your team stops being the human glue holding everything together. That's the difference between a simple optimizer and a platform that actually changes how your team produces content.

What's the best Surfer SEO alternative for your team: another optimizer, or a unified AI SEO platform?

Before you open up another comparison spreadsheet, let's get clear on what you're actually trying to buy. A "Surfer SEO alternative" can mean three very different things: a better content editor, an AI writing tool that also scores drafts, or a platform that manages your entire content operation. Those are not the same thing at all.

What "Surfer SEO alternative" should mean (and what it shouldn't)

The typical roundup post treats this like a simple feature comparison, but it's not. It's a fundamental workflow decision. Surfer does one set of things well. It analyzes the SERP, grades your content against top-ranking pages, and guides a writer through optimization. That's genuinely useful. An "alternative" could be a different optimizer, a better NLP scoring engine, or a totally different category of tool that makes the scoring workflow less critical because drafts show up already optimized.

Most teams I see searching for this need to decide which of those paths solves their real bottleneck, not just which brand name has the best marketing.

The litmus test: where does your team lose time from brief to publish?

Take five minutes and map out your current workflow. Seriously, grab a napkin if you have to. Where do things get stuck?

If your drafts are well-structured but just need keyword tuning, a content optimization tool (maybe even Surfer itself) solves your problem. But if the draft needs a full rewrite because it's generic, misses your angle, and has zero internal links, that's a creation problem. And if the draft is fine but it takes 90 minutes to get it from a Google Doc into WordPress with the right formatting and metadata… that's an operations problem.

Most teams putting out 15 to 40 articles a month are dealing with all three. One of them is the real constraint, though. Start there.

When Surfer still works (and the signals you've outgrown it)

Let's be honest. Tearing out Surfer isn't always the right move. Part of building a repeatable system is knowing which parts are working just fine.

Keep Surfer if your problem is primarily on-page tuning and writer guidance

Surfer earns its keep when you have reliable writers who produce solid drafts. If your main challenge is just making sure those drafts hit the right keyword density, heading structure, and NLP terms before they go live, you're in good shape. When the bottleneck is truly "our content scores are too low," Surfer's editor is purpose-built for that. Clearscope is another strong choice in this lane. Both tools give writers live feedback that builds good optimization habits. If your operation is clean from brief to draft and the only friction is that final SEO polish, don't fix what isn't broken.

You've outgrown Surfer if the 'last 40%' is killing throughput

I see this pattern constantly. A team uses Surfer for the optimization layer, but every single article still requires a manually written brief, a draft from a writer that needs heavy structural edits, an SEO review, 30 to 60 minutes of internal linking, image sourcing, CMS formatting, and a vague promise to "do the social post later."

That last 40%, everything that happens after the draft is written, rarely gets automated. It just keeps eating your time. When that operational overhead is what's actually capping your monthly output, a better content grader won't change your capacity. You need a different kind of tool entirely.

You need more than Surfer if leadership is asking about AI search visibility

This is the new pressure point, isn't it? You search your main use case in ChatGPT or Perplexity, a competitor shows up, and suddenly you're in a meeting explaining why your brand isn't in the answer. Surfer is built for traditional Google search results. It doesn't track how often you're mentioned in AI answers, show which of your competitors' pages are winning citations, or tell you what prompts your buyers are typing into Gemini. That's not a knock on the tool. It's just a different job. If AI search visibility has become a real deliverable for you (not just a vague goal), your tool stack has to grow beyond content scoring.

What to look for in a modern Surfer SEO alternative (capability checklist)

This is the section to keep open when you're sitting through vendor demos. These seven capabilities are what separate tools that solve one tiny problem from platforms that change how your team operates.

Creation quality: how the platform produces drafts you want to edit (not rewrite)

The biggest risk with AI content tools is trading one kind of work (writing) for another (rewriting generic AI garbage). A good platform should give you drafts where you're making strategic edits. You're adding a sharper example, adjusting a claim, or tightening a section, not rebuilding the whole thing from the ground up because it reads like a fifth-grade book report.

You'll know you have strong draft quality when the structure reflects actual search intent, the intro doesn't start with "In today's fast-paced digital landscape," and the tone sounds human enough that you aren't spending an hour just on the voice pass. Ask vendors to show you a real draft from their system in your category. Read the first three paragraphs. If you'd publish them with light edits, that's a huge signal.

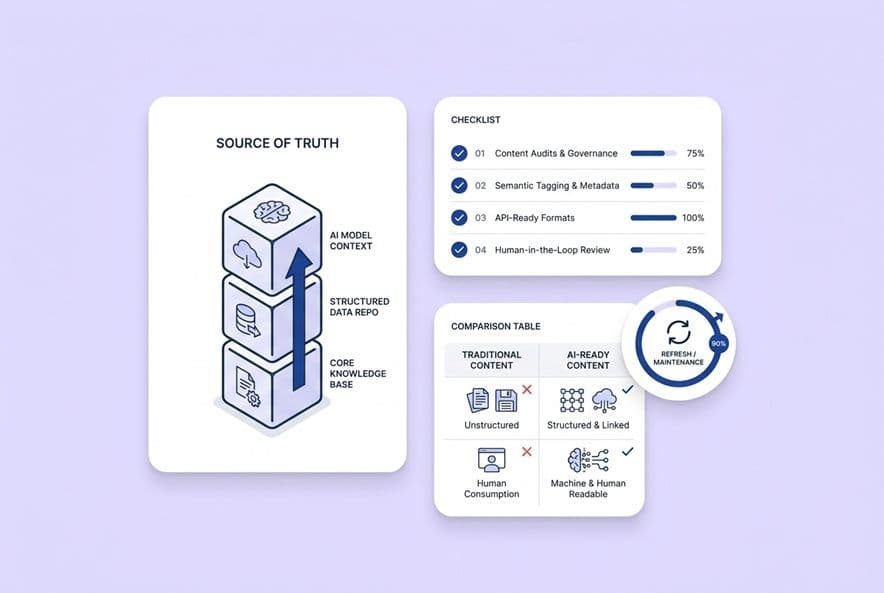

Platforms built on multi-agent pipelines (where separate agents handle research, drafting, QA, and voice in sequence) tend to produce much better output than systems running a single, massive prompt. DeepSmith, for example, runs this full pipeline, including a dedicated voice pass to get rid of that "AI smell." That architectural choice matters more than any feature on a pricing page.

Optimization depth: SERP analysis, intent mapping, and content grading without over-optimization

You still need solid SEO capabilities. That means SERP analysis that shows you what's actually ranking and why, keyword guidance, heading recommendations, and metadata generation. But there's a real tradeoff here. Many optimization tools can push writers or AI systems toward keyword stuffing that makes the content unreadable. Ask vendors how their system avoids over-optimization and whether it has any built-in readability checks.

Intent mapping is the unsung hero. A platform should identify whether a query is informational, navigational, commercial, or transactional, and the draft should match that intent. A high content score on an article that answers the wrong question is a total waste.

Content lifecycle: audits, updates, and keeping wins from decaying

Rankings decay. It happens. The articles that drove tons of traffic 18 months ago are quietly sliding down the SERPs. A modern platform should have content audit features that surface which pages are losing rank or traffic and make it easy to refresh them. "Publish and forget" is how great content programs slowly lose all their ROI. If a platform only supports creating new content, that's a major gap to ask about.
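
If you want to picture what a decay audit does under the hood, here's a minimal sketch. It assumes you've exported clicks per URL from Search Console for two equal-length periods. The function name, the 30% drop threshold, the minimum-traffic cutoff, and the sample numbers are all made up for illustration, not taken from any specific platform.

```python
def find_decaying_pages(previous, current, drop_threshold=0.30, min_clicks=50):
    """Return (url, drop_fraction) pairs for pages whose clicks fell sharply.

    previous / current: dicts mapping URL -> clicks for two equal-length periods.
    drop_threshold and min_clicks are illustrative defaults, not industry standards.
    """
    flagged = []
    for url, before in previous.items():
        if before < min_clicks:
            continue  # too little traffic to judge a trend
        after = current.get(url, 0)  # a page missing from the new export counts as zero clicks
        drop = (before - after) / before
        if drop >= drop_threshold:
            flagged.append((url, round(drop, 2)))
    # Biggest drops first, so the refresh queue starts with the worst decay
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical exports: clicks per URL for the prior and the latest period
previous = {"/blog/a": 1200, "/blog/b": 400, "/blog/c": 30}
current = {"/blog/a": 700, "/blog/b": 390, "/blog/c": 5}

decaying = find_decaying_pages(previous, current)
print(decaying)
```

With these sample numbers, only `/blog/a` gets flagged. `/blog/b` barely moved, and `/blog/c` never had enough traffic to count. A real platform would layer rank position and seasonality on top, but the core question is the same: which pages are sliding, and by how much?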

Workflow + collaboration: briefs, approvals, versioning, and handoffs

This sounds like boring administrative stuff, but it's where most tools fail. If a platform forces your whole team to abandon their Google Docs workflow, learn a complex new editor, and route approvals through some clunky interface, they'll just stop using it in 60 days. Look for brief templates writers can actually use, version tracking that's easy to follow, and approval flows that don't bring work to a halt.

Integrations: CMS, Google Search Console, and editorial workflows

Two integrations matter more than all the others: your CMS and Google Search Console. A direct CMS integration (with WordPress or Webflow, for example) means you can publish without copy-pasting, which can save 20 to 40 minutes per article and kills formatting errors. GSC integration means the platform can use your actual search query data to find topic ideas instead of relying on third-party estimates. Without these, you're just buying another workflow island.

AI visibility/AEO: prompt tracking, citation mapping, and competitive benchmarking

If AI visibility is on your roadmap, this is non-negotiable. Useful tracking means defining the specific prompts your buyers actually ask, tracking how often your brand is mentioned versus cited across the major AI platforms (ChatGPT, Gemini, Perplexity, etc.), and seeing which of your specific pages are earning those citations. Platforms that just tell you "your brand was mentioned X times" without page-level data give you anxiety, not a plan.

Scalable pricing: how costs behave when volume and seats grow

This is where you can get burned. A subscription model is predictable but can get pricey if you need to add seats. A credit-based model is flexible but can lead to surprise bills when your volume doubles. The question isn't which one is cheaper today. It's which one stays manageable when you're doing 40 articles a month with 4 users versus 80 articles with 8 users. Ask vendors to price both of those scenarios for you before you sign anything.
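
To see why you need both scenarios priced, here's a rough sketch of how the two models can flip as you grow. Every number in it is hypothetical (the base fee, the included seats, the per-seat fee, the per-article credit price). Swap in the real quotes you get from vendors.

```python
def subscription_cost(seats, base_fee=500, included_seats=3, extra_seat_fee=100):
    """Monthly cost of a flat subscription that charges for seats beyond the included ones."""
    extra_seats = max(0, seats - included_seats)
    return base_fee + extra_seats * extra_seat_fee


def credit_cost(articles, price_per_article=14):
    """Monthly cost of a credit model, simplified to a flat price per article."""
    return articles * price_per_article


# The two scenarios from the text: (articles per month, users)
scenarios = [(40, 4), (80, 8)]

for articles, seats in scenarios:
    sub = subscription_cost(seats)
    cred = credit_cost(articles)
    cheaper = "subscription" if sub < cred else "credits"
    print(f"{articles} articles, {seats} users: subscription ${sub}, credits ${cred} -> {cheaper}")
```

With these made-up prices, credits win at 40 articles and 4 users, and the subscription wins at 80 articles and 8 users. That flip is exactly what a single-scenario quote hides.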

How unified AI platforms remove the 'last 40%' of manual work (and what to demand in a demo)

Every vendor claims to be "all-in-one." Here's how to call their bluff.

Brief creation that doesn't depend on one person's brain

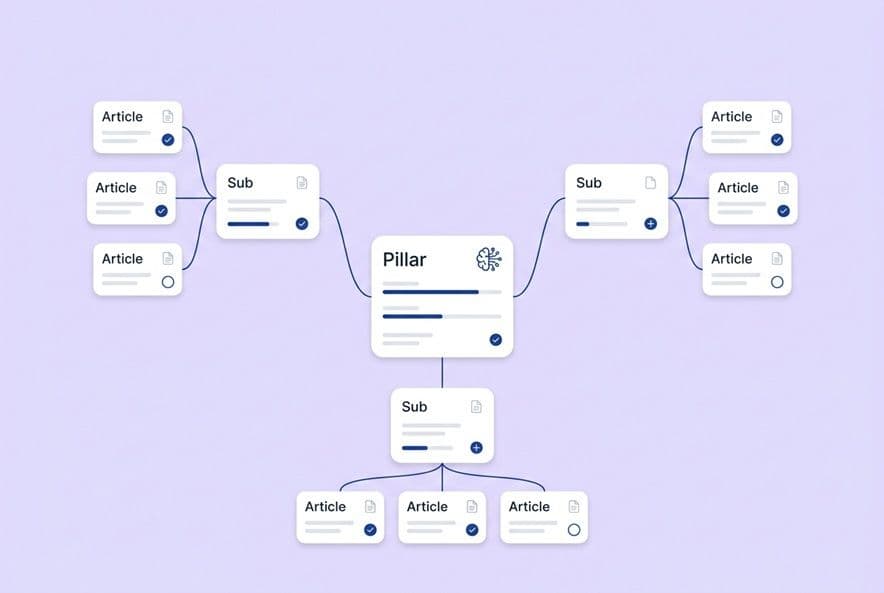

Good briefing automation is not a blank template. It's a system that analyzes the keyword and SERP, identifies the right search intent, proposes a smart structure, and even surfaces internal linking opportunities before a human writes a single word. If generating a brief still requires heavy manual input, you've just moved the bottleneck, not removed it.

SEO + structure built in during drafting (not bolted on in review)

The rewrite cycle is where editorial time goes to die. A unified pipeline builds the heading structure, keyword coverage, and internal links into the drafting stage. This means your review pass is focused on strategic quality, not fixing basic structural problems. Fewer rewrites mean faster approvals and a faster time to publish.

Internal linking at scale: what "automatic internal linking" should actually do

Internal linking is the task most teams either do poorly or just skip when things get busy. When it's done right, automatic internal linking means the system scans your sitemap, identifies topically relevant pages, and inserts links with sensible anchor text during draft generation. It's not random and it's not excessive. The things to watch out for are links to irrelevant pages, so many links that the article reads like a directory, and generic anchor text like "click here."

DeepSmith handles this by scanning an enriched sitemap during the writing process and inserting relevant links contextually. When you're evaluating a platform, ask to see a draft with internal links already included and check them. Are the links actually helpful?
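
You can run part of that check yourself. The sketch below audits an HTML draft for two of the warning signs above: generic anchor text and too many links. It uses only Python's standard library. The list of generic anchors and the link limit are assumptions for illustration, and it can't judge topical relevance, which still needs a human read.

```python
from html.parser import HTMLParser

# Illustrative list of anchors that say nothing about the target page
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this"}


class LinkCollector(HTMLParser):
    """Collects (href, anchor text) pairs from <a> tags in an HTML draft."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:  # only collect text while inside a link
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def audit_links(html, max_links=10):
    """Return a list of human-readable issues found in the draft's links."""
    collector = LinkCollector()
    collector.feed(html)
    issues = []
    for href, text in collector.links:
        if text.lower() in GENERIC_ANCHORS:
            issues.append(f"generic anchor '{text}' -> {href}")
    if len(collector.links) > max_links:
        issues.append(f"{len(collector.links)} links exceeds limit of {max_links}")
    return issues


# Hypothetical draft snippet
draft = (
    '<p>See our <a href="/blog/content-briefs">guide to content briefs</a> '
    'or <a href="/pricing">click here</a>.</p>'
)
issues = audit_links(draft)
print(issues)
```

On this sample, the descriptive anchor passes and the "click here" link gets flagged.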

Publishing and formatting: why "export to Google Doc" isn't automation

"Export to Google Doc" is vendor-speak for "this is where our job ends and yours begins." Real publishing automation is a direct push to your CMS (WordPress, Webflow, etc.) with formatting preserved, metadata populated, and the cover image attached. The article should be ready for a final review in your editor, not sitting in a new document you have to copy-paste manually. At 20 articles a month, this one feature can reclaim hours of tedious work.

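For a sense of what a "direct push" involves, here's a minimal sketch against the WordPress REST API, which accepts new posts at `/wp-json/wp/v2/posts` and supports Basic auth with application passwords. The site URL, credentials, and media ID are placeholders, and the helper name is mine. The sketch only builds the request, so it runs offline. How any particular platform does this internally is not something I'm claiming here.

```python
import base64
import json
import urllib.request


def build_wordpress_draft_request(site_url, username, app_password,
                                  title, html_body, excerpt, featured_media_id):
    """Build (but do not send) a request that creates a draft post in WordPress."""
    payload = {
        "title": title,
        "content": html_body,                 # HTML body, so headings and links survive
        "excerpt": excerpt,
        "featured_media": featured_media_id,  # ID of an image already in the media library
        "status": "draft",                    # lands in the editor for a final human review
    }
    token = base64.b64encode(f"{username}:{app_password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{site_url.rstrip('/')}/wp-json/wp/v2/posts",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
        method="POST",
    )


# Placeholder values for illustration only
request = build_wordpress_draft_request(
    site_url="https://example.com/",
    username="editor",
    app_password="xxxx xxxx xxxx xxxx",
    title="Surfer SEO alternatives",
    html_body="<h2>Intro</h2><p>Draft body goes here.</p>",
    excerpt="A short summary for listings.",
    featured_media_id=123,
)
print(request.get_method(), request.full_url)
# urllib.request.urlopen(request) would actually send it; left out so the sketch runs offline.
```

Note the `status` of `draft`: the article shows up in your editor ready for review instead of going live unreviewed. SEO-plugin fields such as meta descriptions are plugin-specific and not covered by this sketch.
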
Distribution: turning "article published" into "article shipped everywhere"

When deadlines are tight, distribution is always the first thing to get dropped. By the time an article is live, the next brief is already late and that LinkedIn post never gets written. Platforms that treat distribution as an output, not a separate project, will generate channel-specific assets like social threads or newsletter sections as part of the main workflow. That's how distribution finally stops being an afterthought.

If AI search visibility matters, what should your Surfer alternative do?

This is the capability most content leaders are shopping for and are least prepared to evaluate. Here's a simple framework.

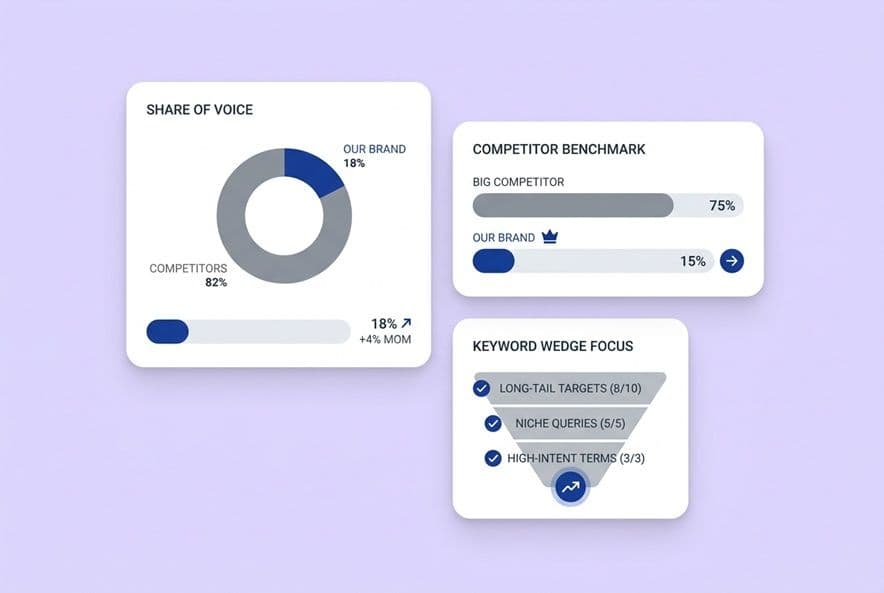

The two tracking layers you need: prompt-level and page-level

Prompt-level tracking answers the question: when a buyer asks a specific question in an AI chat, does my brand show up? This means you have to define those prompts, not just guess at them. Then you track your mention and citation rates across different platforms.

Page-level tracking tells you which specific pages on your site are earning those citations and how the numbers are trending. You need both. Prompt-level data tells you where you're winning the conversation, and page-level data tells you which content is doing the heavy lifting.
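
Here's a small sketch of how the two layers fall out of the same raw data. It assumes each record is one AI answer you checked for one prompt on one platform, with a flag for whether your brand was mentioned and the URL that was cited, if any. The record shape and the sample data are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical observations: one AI answer per prompt per platform
records = [
    {"prompt": "best ai seo platform", "platform": "ChatGPT",
     "mentioned": True, "cited_url": "/blog/ai-seo-guide"},
    {"prompt": "best ai seo platform", "platform": "Perplexity",
     "mentioned": True, "cited_url": None},
    {"prompt": "best ai seo platform", "platform": "Gemini",
     "mentioned": False, "cited_url": None},
    {"prompt": "surfer seo alternative", "platform": "ChatGPT",
     "mentioned": True, "cited_url": "/blog/ai-seo-guide"},
]


def prompt_level(records):
    """Mention rate and citation rate per prompt, across all platforms checked."""
    totals = defaultdict(lambda: {"answers": 0, "mentions": 0, "citations": 0})
    for record in records:
        stats = totals[record["prompt"]]
        stats["answers"] += 1
        stats["mentions"] += int(record["mentioned"])
        stats["citations"] += int(record["cited_url"] is not None)
    return {
        prompt: {
            "mention_rate": stats["mentions"] / stats["answers"],
            "citation_rate": stats["citations"] / stats["answers"],
        }
        for prompt, stats in totals.items()
    }


def page_level(records):
    """How many citations each of your URLs earned."""
    return Counter(r["cited_url"] for r in records if r["cited_url"])


by_prompt = prompt_level(records)
by_page = page_level(records)
print(by_prompt)
print(by_page)
```

In this sample, the brand is mentioned in two of three answers for the first prompt but cited in only one. That gap between mentions and citations is the thing to watch, and the page counts show which URL is earning the citations.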

DeepSmith's AI Visibility module covers both. The Prompts view tracks queries across ChatGPT, Gemini, Perplexity, Claude, and Google AI Mode, while the Pages view shows which of your URLs are getting cited.

Competitive benchmarking that leads to a plan (not just anxiety)

Knowing a competitor gets cited more often is only useful if it tells you what to do about it. Good competitive benchmarking shows you which of their pages are winning and why (structure, depth, authority), so you can build a plan to compete. A tool that just shows you "Competitor X is ahead" without any context is just giving you a new thing to worry about.

Platform coverage and geography: what "good enough" monitoring looks like

No system can track every AI surface. In 2025 and 2026, the emerging standard is consistent tracking across ChatGPT, Gemini, Perplexity, Claude, and Google AI Mode. Together, these cover the vast majority of AI search queries in English-speaking markets. If your audience is global, make sure you confirm which regions a platform can monitor.

How to turn visibility insights into an editorial backlog

This is the final, crucial step. Visibility data is only valuable if it fuels your content production. The workflow should be simple: find prompts where competitors are winning, figure out why, generate briefs for content that can beat them, and then publish consistently. If your tracking tool doesn't connect to your content queue, you'll just have another dashboard you check once in a while.

How to compare Surfer alternatives quickly (a decision table you can reuse)

Comparison table: point optimizer vs briefing-first vs AI writer vs unified AI SEO platform

| Category | Best For | Strengths | Limitations |
|---|---|---|---|
| Point optimizer (Surfer, Clearscope) | Freelancers, small teams needing draft QA | Fast scoring, strong SERP analysis, writer guidance | Doesn't write, doesn't link, doesn't publish, no AI visibility |
| Briefing-first platform (MarketMuse, Frase) | Teams with strong writers needing structured briefs | Topic modeling, content gaps, brief quality | Still requires a separate writing and publishing workflow |
| AI writing tool (Jasper, Copy.ai) | Teams needing volume at low cost | Fast drafting, multiple formats | Low SEO specificity, no linking, no publishing, high rewrite rate |
| Unified AI SEO platform (DeepSmith) | In-house teams needing end-to-end pipeline | Research → brief → draft → SEO → links → publish → distribute → track | Higher setup investment; requires structured onboarding |

The "buyer persona fit" lens: freelancer vs in-house team vs agency

A freelancer doing a few articles a month for one client is probably fine with a point optimizer. An agency managing tons of clients needs multi-client workspaces and white-labeling, which is a whole other set of requirements.

But for in-house content teams at Series A or B SaaS companies (that's who this article is for), a unified platform is almost always the right fit. You have a consistent brand to encode, a known audience, one CMS to publish to, and a leadership team that's starting to ask about AI visibility. The workflow benefits compound fast because you're building a system for one brand that you run over and over.

Run a 30-day evaluation without breaking your content calendar

Pick one cluster, one workflow, one success definition

Don't try to test a new platform across your entire content operation. You'll never figure out what's actually working. Instead, pick one topic cluster (5 to 10 related keywords) and run it through the full pipeline of the platform you're evaluating. Define what success looks like before you start. In DeepSmith, you can use the Topics feature to identify a cluster, push it into production, and use Autowrite to generate the articles so the pilot doesn't become a manual chore.

Metrics that prove ROI: cycle time, cost per article, publish consistency, and visibility signals

Here are four metrics to track during a 30-day pilot:

- Cycle time: How many hours does it take to get from brief to published? Compare your baseline to the platform's performance.

- Cost per article: Calculate the total hours (yours and your writer's) plus the platform cost.

- Publish consistency: Did you hit your target volume each week, or did things slip?

- Early visibility signals: Are the articles indexed? Are they getting impressions in GSC? Any AI citations yet?

You won't see ranking results in 30 days. That's not how search works. But you can absolutely measure whether your workflow got faster, whether you published more consistently, and whether the drafts you got needed heavy rewrites or just light edits.
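
The first three metrics are simple arithmetic, so you can track them in a spreadsheet or a few lines of code. Here's a minimal sketch. The field names, hourly rate, platform cost, and weekly target are all assumptions, so plug in your own baseline and pilot numbers.

```python
from statistics import mean


def pilot_summary(articles, hourly_rate, platform_cost, weekly_target, weeks=4):
    """Summarize a pilot: average cycle time, cost per article, and weeks on target.

    articles: list of dicts with 'week' (1-based), 'cycle_hours' (elapsed time from
    brief to published), and 'labor_hours' (hands-on time from you and your writer).
    """
    labor_cost = sum(a["labor_hours"] for a in articles) * hourly_rate
    per_week = [sum(1 for a in articles if a["week"] == w) for w in range(1, weeks + 1)]
    return {
        "avg_cycle_hours": mean(a["cycle_hours"] for a in articles),
        "cost_per_article": (labor_cost + platform_cost) / len(articles),
        "weeks_on_target": sum(1 for count in per_week if count >= weekly_target),
    }


# Hypothetical pilot log: six articles over four weeks
articles = [
    {"week": 1, "cycle_hours": 24, "labor_hours": 4},
    {"week": 1, "cycle_hours": 30, "labor_hours": 6},
    {"week": 2, "cycle_hours": 20, "labor_hours": 5},
    {"week": 3, "cycle_hours": 26, "labor_hours": 5},
    {"week": 3, "cycle_hours": 22, "labor_hours": 4},
    {"week": 4, "cycle_hours": 28, "labor_hours": 6},
]

summary = pilot_summary(articles, hourly_rate=50, platform_cost=300, weekly_target=2)
print(summary)
```

Run the same function on your pre-pilot baseline and compare the two summaries side by side. The fourth metric, early visibility signals, comes from GSC and your AI tracking rather than from a formula.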

Vendor questions to ask: privacy, claim boundaries, integrations, and pricing scale

Get clear answers in these four areas before you move forward with any vendor:

- Data privacy: Is our company and product information used to train your shared models? Who can access it?

- Claim boundaries: How does your system stop the AI from making inaccurate claims about our product? Can you show me how brand context is enforced?

- Integration depth: Which CMS platforms do you integrate with natively? Does the content publish with formatting, metadata, and images intact?

- Pricing at scale: What does pricing look like at 20 articles a month with 3 users, and at 60 a month with 6 users? Are there overage fees?

If a vendor gets vague on any of these, that's a red flag. Most stories of tool regret trace back to one of these four gaps.

Build your Surfer alternative short list (and pressure-test it in 30 days)

You've probably known what your real bottleneck is for a while. You just needed permission to name it. The choice between keeping Surfer, switching optimizers, or moving to a unified platform isn't about which brand is coolest. It's about figuring out where your content operation actually breaks down.

If the break is in your throughput, your workflow, or your ability to get seen in AI search, the playbook above gives you a way to build a short list and run a pilot that produces real data, not just good vibes from a demo.

Use the 30-day pilot framework: one cluster, one workflow, four metrics. Ask the tough vendor questions before you sign. And define what "success" looks like before you even start.

That's how you avoid buying another tool your team abandons in a month. And it's how you finally stop being the human glue holding the whole messy operation together.