Let's be honest: you're the bottleneck. You know it's true, and I've been there. Every single article that goes out has your fingerprints on the brief, the SEO review, the internal linking, and the final push to the CMS. Now leadership is asking about AI search visibility, and you're nodding along like you have a plan. Really, you're just hoping to buy a few weeks to figure it all out.

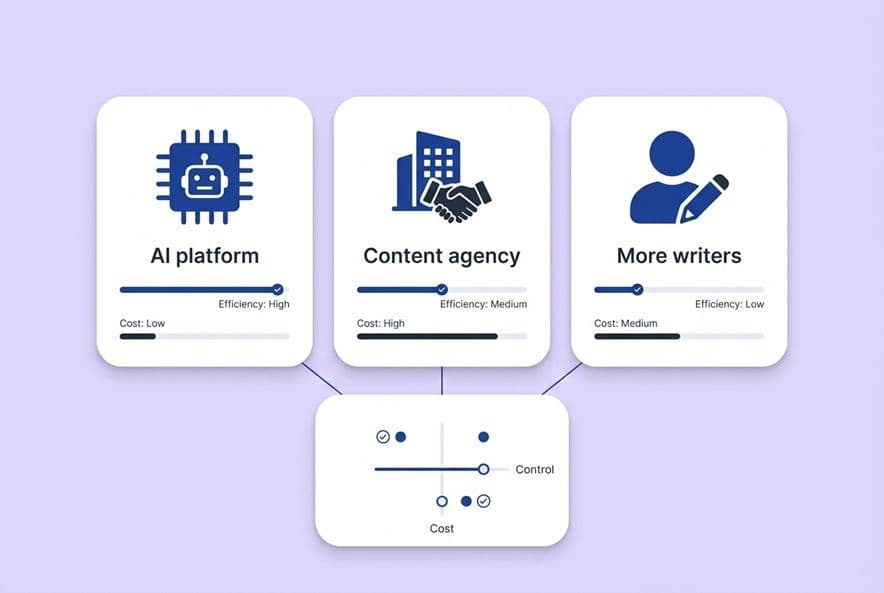

Choosing between an AI content platform, a content agency, or more writers feels like just another vendor decision. It's not. This is a decision about your entire operating model. It determines where quality control lives, who owns strategy, and what part of the system will shatter when you try to double or triple your output. Get it wrong and you'll either burn budget on a tool no one uses or hand your brand voice to an agency that has no idea what your product actually does.

This isn't another generic comparison guide. This is a framework for looking at total cost, control, the burden of governance, and readiness for AI search. I'm mapping these options to the specific, messy situations that real Series A and B SaaS teams face every day.

How to Decide: AI Platform, Agency, or More Writers?

This decision is hard, not because there's a lack of information, but because most of it compares the wrong things. Price per article, feature lists, vague promises about scale. None of that helps.

I learned to treat this as an operating-model question. Start by asking these things: Who owns our strategy? Who is actually enforcing our standards? And what's the first thing that will break when we go from 4 articles a month to 12?

The 5 variables that actually matter

When you cut through the noise, these are the only things you should be debating.

1. True cost (not subscription price): Your cost includes your time. It's the hours you spend writing briefs, doing SEO QA, managing revisions, chasing down freelancers, and wrestling with the CMS. For most of us, that's 3 to 5 hours per article we're not even counting. You have to add that to any option's "price" before you can compare them.

2. Control surface area: Control isn't just a yes or no. You can have strong control over strategy but weak control over execution (hello, most agency relationships). The real question is: which parts of the process do you need to control tightly right now for your brand?

3. Speed-to-publish: This isn't about how fast a first draft gets written. It's the full cycle time, from a brief to a live URL. Agencies have ramp-up time. New hires take weeks to get up to speed. AI platforms can generate drafts in minutes, but your review process is still a factor. Measure the whole cycle.

4. Scalability risk: What really happens when you need to go from 4 articles a month to 10? Which option bends without breaking, and which one just snaps? The answer usually depends on whether your quality is baked into a system or dependent on a single person.

5. Compounding distribution: Content that gets published but never distributed might as well not exist. You have to factor in whether an option helps get the content onto LinkedIn, into your newsletter, and in front of AI engines. Or is that still your job after the article is "done"?

A 60-minute decision process for your team

Block an hour. Seriously, put it on the calendar. Pull up your content production data from the last three months and answer these four questions with your team.

- Where did our hours go? Map out every step: brief, research, draft, SEO review, linking, images, CMS, distribution. Be honest about the hours for each. This is your baseline reality.

- Where did quality break? Make a list of every article that needed major rework. Was it because the voice was off? Did it have the wrong product claims? Was the SEO a mess? This tells you which control points are failing.

- What's our 6-month volume target? If you're aiming for 2x your current output, you're optimizing. If it's 3x or more, you're rebuilding the whole machine.

- What's our real governance capacity? How many hours per week can a human (probably you) realistically spend on QA? This is your ceiling. Any model that requires more time than you have is doomed to fail.

Once you have these answers, map them to the five variables above. The biggest pain point you identify is what should drive your decision.
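If you'd rather run the governance-ceiling question as arithmetic than as a gut check, here's a minimal Python sketch. The step names and hours are hypothetical placeholders, and treating anything under 3x as "optimizing" is my assumption (the article only names the 2x and 3x points). Swap in your own numbers from the last three months.

```python
from __future__ import annotations

# Average weeks in a month, used to convert a monthly QA load into a weekly one.
WEEKS_PER_MONTH = 52 / 12


def biggest_time_sink(step_hours: dict[str, float]) -> str:
    """Return the production step that consumed the most hours per article."""
    return max(step_hours, key=step_hours.get)


def volume_mode(current_per_month: int, target_per_month: int) -> str:
    """Classify the 6-month target. Assumption: anything below 3x counts as optimizing."""
    ratio = target_per_month / current_per_month
    return "rebuilding" if ratio >= 3 else "optimizing"


def fits_governance_ceiling(qa_hours_per_article: float,
                            articles_per_month: int,
                            qa_hours_per_week: float) -> bool:
    """True if the QA load a model needs fits inside your weekly QA capacity."""
    weekly_load = qa_hours_per_article * articles_per_month / WEEKS_PER_MONTH
    return weekly_load <= qa_hours_per_week


# Hypothetical baseline hours per article from the "where did our hours go?" exercise.
baseline = {"brief": 1.0, "research": 1.5, "draft": 3.0, "seo_review": 1.0,
            "linking": 0.75, "images": 0.5, "cms": 0.5, "distribution": 1.0}
print(biggest_time_sink(baseline))        # draft
print(volume_mode(4, 12))                 # rebuilding
print(fits_governance_ceiling(3, 12, 6))  # False: ~8.3 QA hours/week needed
print(fits_governance_ceiling(1, 12, 6))  # True: ~2.8 QA hours/week needed
```

The last two lines are the ones to bring to the meeting: a model that needs three QA hours per article can't run at 12 articles a month if you only have six hours a week to give it.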

The "True Cost" of Each Option (and why price-per-article is a lie)

I once got a $500/article quote from an agency and thought I was a genius. I wasn't. That reasonable quote didn't include the four revision cycles, two scope conversations, a monthly briefing call, and the three hours I spent rewriting the final version because it missed all the product nuances. It was actually a $900 article that cost me five hours of my own time.

The hidden costs most teams forget

- Briefing time: Who writes the brief? For agencies and freelancers, it's usually you. A proper brief takes 45 to 90 minutes.

- SEO QA: If the writer or agency doesn't hit your standards, you're the one redoing keyword density and heading structures.

- Internal linking: Almost no one outside your company does this well. Budget 30 to 60 minutes per article if you have to handle it yourself.

- CMS operations: Formatting, uploading images, adding metadata, scheduling. It's low-skill work, but it eats up time.

- Distribution: That LinkedIn post and newsletter snippet take another hour if you do them right. And usually, they just don't get done.

- Revision cycles: Every round of feedback costs time for everyone. Two rounds of revisions are optimistic for a new agency relationship.

- Management overhead: The time you spend coordinating freelancers, reviewing work, and tracking deliverables is invisible until it's eating up 20% of your week.

A TCO worksheet (you don't need perfect data)

You're looking for directional accuracy, not a perfect spreadsheet.

| Cost element | AI platform | Agency | Hire (FT writer) |
|---|---|---|---|
| Tool/salary | $300–800/mo | $4–15K/mo | $75–110K/yr + benefits |
| Briefing (your time) | Low (template/stored context) | High (custom per article) | Medium (internal process) |
| SEO QA (your time) | Low–Medium (built-in or partial) | High (usually manual) | Medium (depends on skill) |
| Internal linking | Low (can be automated) | High (usually skipped) | High (manual) |
| Revision cycles | 1–2 rounds | 2–4 rounds | 1–2 rounds |
| Distribution | Low (can be systematized) | Rarely included | Rarely included |
| Ramp time to value | 2–4 weeks | 4–12 weeks | 8–16 weeks |

Now, multiply your hourly rate by the hours spent on each step. Run the numbers for 4 articles per month, then run them again for 10. The cheapest option at 4 articles often looks very different at 10.
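Here's that worksheet as a minimal Python sketch. Every input (the $80 hourly rate, the fees, the hours you spend per article) is a hypothetical placeholder, not a benchmark; the point is the shape of the calculation: fixed cost plus, per article, the vendor fee and the value of your own hours.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Option:
    name: str
    fixed_monthly: float           # subscription, retainer, or loaded salary / 12
    per_article_fee: float         # vendor fee per article (0 if none)
    your_hours_per_article: float  # briefing, SEO QA, linking, revisions, CMS, distribution

    def monthly_tco(self, articles: int, hourly_rate: float) -> float:
        """Cash cost plus the value of your own hours for one month."""
        per_article = self.per_article_fee + self.your_hours_per_article * hourly_rate
        return self.fixed_monthly + articles * per_article


HOURLY_RATE = 80  # hypothetical loaded cost of one hour of your time

# Hypothetical inputs for illustration only; replace with your own quotes and hours.
options = [
    Option("AI platform", fixed_monthly=600, per_article_fee=0, your_hours_per_article=2),
    Option("Agency", fixed_monthly=0, per_article_fee=500, your_hours_per_article=5),
    Option("FT hire", fixed_monthly=8000, per_article_fee=0, your_hours_per_article=3),
]

for volume in (4, 10):
    for opt in options:
        total = opt.monthly_tco(volume, HOURLY_RATE)
        print(f"{volume:>2} articles | {opt.name:<11} | "
              f"${total:>8,.0f}/mo | ${total / volume:>6,.0f}/article")
```

With these made-up inputs, the hire drops from $2,240 to $1,040 per article between 4 and 10 articles, while the agency stays flat at $900 because none of its cost is fixed. Your numbers will tell a different story; what matters is that you run both volumes.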

Where ROI actually comes from

Forget per-article cost. It's a vanity metric. What matters is the cost to get a published piece of content that actually does its job: ranks, gets cited, and converts. The way to drive that cost down is through three levers:

- Throughput: More articles per month without increasing your team's hours.

- Quality stability: Fewer articles that need a complete overhaul before you can hit publish.

- Rework reduction: Fewer revision cycles burning your time.

An AI platform that cuts your QA time from three hours to one per article gives you back two hours of capacity on every piece. When you talk to your CMO or CFO, frame it as operating leverage. You're not just saving hours; you're increasing the company's strategic capacity. Tie higher throughput to pipeline, quality stability to brand risk, and faster publishing to beating competitors to market.

Where Does Control Live? (And what breaks when you scale)

I used to think of "control" as a simple on/off switch. That's wrong. You have to think in layers.

Control: Strategy vs. Execution vs. Distribution

"Control" means different things at different stages of the process.

- Strategy control (what gets written and why): You should own this in all three models, unless you deliberately hand it off.

- Execution control (brand voice, product accuracy, SEO): This is where the models really differ. With a hire, you train one person and trust them. With an agency, you're trusting a team you can't see. With an AI platform, you are encoding your standards into the system itself.

- Distribution control (getting content to the right channels): This almost always falls back on you, no matter which model you choose, unless you build a specific workflow for it.

Common breaking points at 2x scale

When you try to double your volume, here's what I've seen break.

- Agencies: The voice starts to drift, and product mistakes creep in. Your briefs become the only thing guaranteeing quality output, so the time you spend writing them becomes the new bottleneck.

- Freelancers/hires: Your output hits a ceiling set by headcount. You can't just add more writers and expect it to work, because every writer you add increases your review burden proportionally.

- AI platforms: If you haven't properly configured the brand voice, product rules, and persona knowledge, the output quickly becomes generic. It's a classic garbage-in-garbage-out problem, but at scale.

You'll know it's happening when you see a spike in revision rounds, a stall in your publishing cadence, or a batch of articles that just feel off-brand. These are all governance failures, not vendor failures.

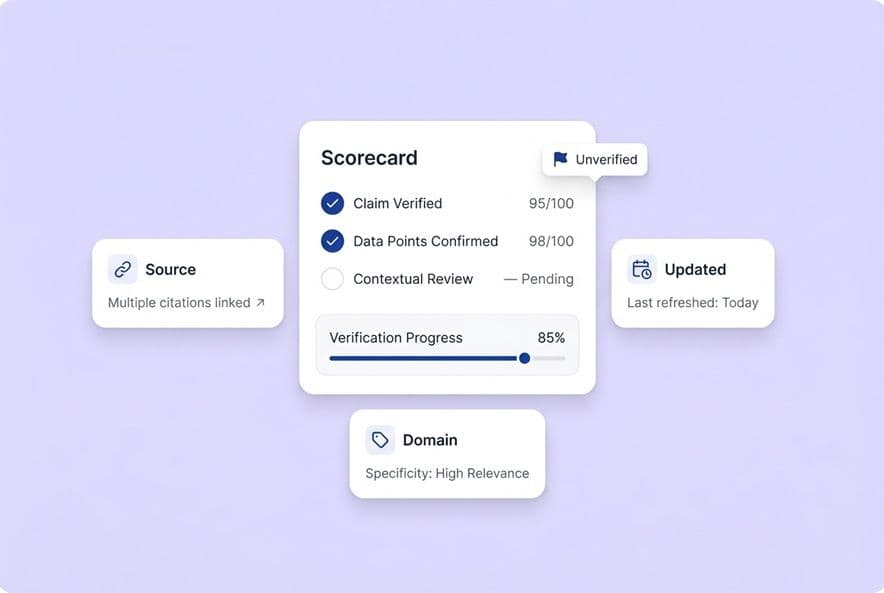

The governance question: who owns "definition of done"?

Every content system needs a "definition of done": a shared standard that says when a piece is ready to publish. Without it, "done" just means "whenever someone stops editing," and that someone is always you.

Your definition of done doesn't have to be complicated. It could be this: keyword target confirmed, SEO score above 85, at least 3 internal links, product claims verified, intro reviewed for angle, meta description written. That's it. Five or six checks, owned by specific people.
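Because a definition of done is just a list of pass/fail checks with owners, it's easy to encode. Here's a minimal Python sketch built on the example above. The field names, the owners, and the assumption that your SEO tool produces a single 0 to 100 score are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Article:
    keyword_confirmed: bool
    seo_score: int          # assumed 0-100 score from whatever SEO tool you use
    internal_links: int
    claims_verified: bool
    intro_reviewed: bool
    meta_description: str


# (check name, hypothetical owner, pass/fail test) for each item in the definition of done.
CHECKS: list[tuple[str, str, Callable[[Article], bool]]] = [
    ("keyword target confirmed", "SEO specialist", lambda a: a.keyword_confirmed),
    ("SEO score above 85", "SEO specialist", lambda a: a.seo_score > 85),
    ("at least 3 internal links", "SEO specialist", lambda a: a.internal_links >= 3),
    ("product claims verified", "Content director", lambda a: a.claims_verified),
    ("intro reviewed for angle", "Content director", lambda a: a.intro_reviewed),
    ("meta description written", "CMS owner", lambda a: bool(a.meta_description.strip())),
]


def unmet_checks(article: Article) -> list[tuple[str, str]]:
    """Return (check, owner) pairs that still block publishing."""
    return [(name, owner) for name, owner, check in CHECKS if not check(article)]


draft = Article(keyword_confirmed=True, seo_score=82, internal_links=4,
                claims_verified=True, intro_reviewed=False, meta_description="")
for name, owner in unmet_checks(draft):
    print(f"NOT DONE: {name} (owner: {owner})")
```

An empty list means the piece is done. Anything else names exactly who has to act, which is the whole point of assigning owners to the checks.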

AI Platform vs. Content Agency vs. More Writers: The Trade-Offs

Let's put it all side-by-side.

Comparison table: cost, control, speed, and risk

| Dimension | AI content platform | Content agency | Hire (FT writer) |
|---|---|---|---|
| True cost/month (4 articles) | $500–1,200 total | $4,000–8,000+ | $7,000–9,000+ with overhead |
| Strategy control | High | Medium | High |
| Voice/accuracy control | High (if configured) | Low–Medium | Medium |
| Speed-to-first-draft | Hours | Days–weeks | Days |
| Speed-to-publish | 2–5 days | 2–6 weeks (ramp) | 1–3 weeks (ramp) |
| Quality at scale | Stable (systematic) | Variable (brief-dependent) | Variable (person-dependent) |
| Distribution support | Often included | Rarely included | Rarely included |
| AEO/AI visibility | Native (some platforms) | Unlikely | Unlikely |
| Governance burden | Low (encode once) | High (ongoing briefing) | Medium (training + feedback) |
| Vendor/talent risk | Platform dependency | Contract/quality drift | Turnover |

When each option is the wrong choice

Don't choose an AI platform if: You have absolutely no time to configure it and you think it's a magic button. It's a system, not a miracle. It needs a human to guide it, especially for content that relies on original research or interviews.

Don't choose an agency if: Your brand voice is what makes you special and you don't have the time to write incredibly detailed briefs for every single article. Agencies produce what they're given. Vague briefs will get you generic content every time.

Don't choose a hire if: You need to increase your output in the next 60 days. A new writer won't be fully productive for 8 to 12 weeks, and you'll spend that entire time training and reviewing their work.

Building a Governance Model for Humans and AI

Most guides tell you to "maintain quality" but never explain what that actually means. Let's fix that.

The minimum viable governance stack

Forget a 40-step checklist. You need six checkpoints. That's it.

- The Brief: It's required before any draft starts. It must include the keyword target, search intent, editorial angle, key claims, product references, and persona. If the brief is incomplete, the draft will be wrong.

- Source and Claim Verification: Who checks the product claims against your internal source of truth? This is a 10-minute step that can prevent massive brand damage.

- SEO Structural QA: Heading hierarchy, keyword coverage, internal links. This can be systematized and doesn't have to be you.

- SME Review (when needed): For highly technical or regulated content, route it to the subject matter expert. Not every article needs this, only the ones where a mistake has real consequences.

- Editorial QA: Voice, angle, opening paragraph, transitions. This is where your judgment is critical. Keep this part for yourself, but narrow the scope of what you're checking.

- Publish Checklist: Meta description, alt text, canonical tag, scheduling. This is a simple checklist for whoever is in the CMS.
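The six checkpoints work as a gate followed by a route: an incomplete brief stops work before drafting, and SME review applies only to some articles. Here's a minimal Python sketch of that logic. The snake_case field and step names are hypothetical labels for the items listed above, and the `needs_sme` flag is something you'd set yourself for technical or regulated pieces.

```python
from __future__ import annotations

# Hypothetical snake_case labels for the brief fields listed above.
REQUIRED_BRIEF_FIELDS = ("keyword_target", "search_intent", "editorial_angle",
                         "key_claims", "product_references", "persona")


def missing_brief_fields(brief: dict) -> list[str]:
    """Return required fields that are absent or empty."""
    return [f for f in REQUIRED_BRIEF_FIELDS if not brief.get(f)]


def checkpoints_for(brief: dict, needs_sme: bool) -> list[str]:
    """Gate on the brief, then list the remaining checkpoints in order."""
    missing = missing_brief_fields(brief)
    if missing:
        raise ValueError(f"Brief incomplete, do not start the draft. Missing: {missing}")
    steps = ["source_and_claim_verification", "seo_structural_qa"]
    if needs_sme:  # only for highly technical or regulated content
        steps.append("sme_review")
    steps += ["editorial_qa", "publish_checklist"]
    return steps


# Hypothetical brief for illustration.
brief = {
    "keyword_target": "ai content platform vs agency",
    "search_intent": "commercial comparison",
    "editorial_angle": "operating model, not vendor choice",
    "key_claims": ["agencies rarely handle internal linking"],
    "product_references": ["brand context setup"],
    "persona": "Series B content lead",
}
print(checkpoints_for(brief, needs_sme=False))
```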

A practical RACI for a lean SaaS team

| Task | Content Director | Writer/AI | Freelancer | SEO Specialist |
|---|---|---|---|---|
| Topic strategy | R/A | C | — | C |
| Brief creation | A | R (AI) | C | C |
| Draft production | — | R | R | — |
| SEO structure QA | A | — | — | R |
| Voice/claims QA | R/A | — | — | — |
| Internal linking | A | R (AI) | — | C |
| Publish/distribute | A | R (AI) | R | — |

The goal here is simple: you should be Accountable for strategy and voice, not Responsible for every little execution step. Every "R" that's currently on your plate and could be moved to an AI system or a trained human is capacity you get back.
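To count the "R"s still on your plate, you can encode the RACI table and query it. Here's a minimal Python sketch that treats the table above as the target state. To measure the gap, build a second dictionary describing who actually does what today and run the same query against it; the parsing of cells like "R (AI)" is my own convention.

```python
from __future__ import annotations

# The RACI table above; roles missing from a row are blank cells in the table.
RACI: dict[str, dict[str, str]] = {
    "Topic strategy":     {"Content Director": "R/A", "Writer/AI": "C", "SEO Specialist": "C"},
    "Brief creation":     {"Content Director": "A", "Writer/AI": "R (AI)",
                           "Freelancer": "C", "SEO Specialist": "C"},
    "Draft production":   {"Writer/AI": "R", "Freelancer": "R"},
    "SEO structure QA":   {"Content Director": "A", "SEO Specialist": "R"},
    "Voice/claims QA":    {"Content Director": "R/A"},
    "Internal linking":   {"Content Director": "A", "Writer/AI": "R (AI)", "SEO Specialist": "C"},
    "Publish/distribute": {"Content Director": "A", "Writer/AI": "R (AI)", "Freelancer": "R"},
}


def codes(cell: str) -> set[str]:
    """Turn a cell like 'R/A' or 'R (AI)' into {'R', 'A'} or {'R'}."""
    return set(cell.replace("(AI)", "").replace("/", " ").split())


def responsible_tasks(raci: dict[str, dict[str, str]], role: str) -> list[str]:
    """Tasks where `role` does the work (R), in table order."""
    return [task for task, row in raci.items() if "R" in codes(row.get(role, ""))]


print(responsible_tasks(RACI, "Content Director"))  # ['Topic strategy', 'Voice/claims QA']
```

In the target state, your only "R"s are strategy and voice. Every extra task that shows up when you run the query against today's assignments is a candidate to move.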

How to use freelancers and AI without losing your brand

This is where I see teams mess up all the time. They bring in freelancers, give them AI tools for speed, and six months later their content sounds like it was written by a committee of robots. The brand standards never made it out of the marketing director's head and onto the page.

The fix is a shared context document. This is your bible. It contains your brand voice rules, product claim boundaries, and persona descriptions. It's a source of truth that everyone (and every system) pulls from. Some AI platforms have this built in. We use DeepSmith's "Deep IQ" for this. It's basically a central brain for our brand that keeps every AI-generated draft on the same page. It means even our freelancers are working from our standards, not guessing.
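A shared context document is most useful when it's structured enough for a machine to check drafts against it. The sketch below is a hypothetical structure, not DeepSmith's Deep IQ format or any platform's API. It stores voice rules, claim boundaries, canonical product names, and personas, then flags drafts that break them using simple substring and word-boundary matching. The product "Acme Flow" is made up.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


@dataclass
class SharedContext:
    banned_phrases: list[str]          # voice rules: phrases we never use
    restricted_claims: list[str]       # claim boundaries: things we must not promise
    product_names: dict[str, str]      # incorrect variant -> canonical product name
    personas: dict[str, str] = field(default_factory=dict)  # persona id -> description


def lint_draft(text: str, ctx: SharedContext) -> list[str]:
    """Return human-readable issues where a draft breaks the shared context."""
    issues = []
    lowered = text.lower()
    for phrase in ctx.banned_phrases:
        if phrase.lower() in lowered:
            issues.append(f"voice: banned phrase {phrase!r}")
    for claim in ctx.restricted_claims:
        if claim.lower() in lowered:
            issues.append(f"claim outside boundary: {claim!r}")
    for variant, canonical in ctx.product_names.items():
        if re.search(rf"\b{re.escape(variant)}\b", text):
            issues.append(f"naming: use {canonical!r}, not {variant!r}")
    return issues


# Hypothetical context for a made-up product called "Acme Flow".
ctx = SharedContext(
    banned_phrases=["in today's fast-paced world"],
    restricted_claims=["guaranteed roi"],
    product_names={"AcmeFlow": "Acme Flow"},
    personas={"ops_lead": "RevOps lead at a Series B SaaS company"},
)
for issue in lint_draft("In today's fast-paced world, AcmeFlow delivers guaranteed ROI.", ctx):
    print(issue)
```

A real platform would do far more than string matching, but even a check this crude turns "nobody wrote the standards down" into a failing test.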

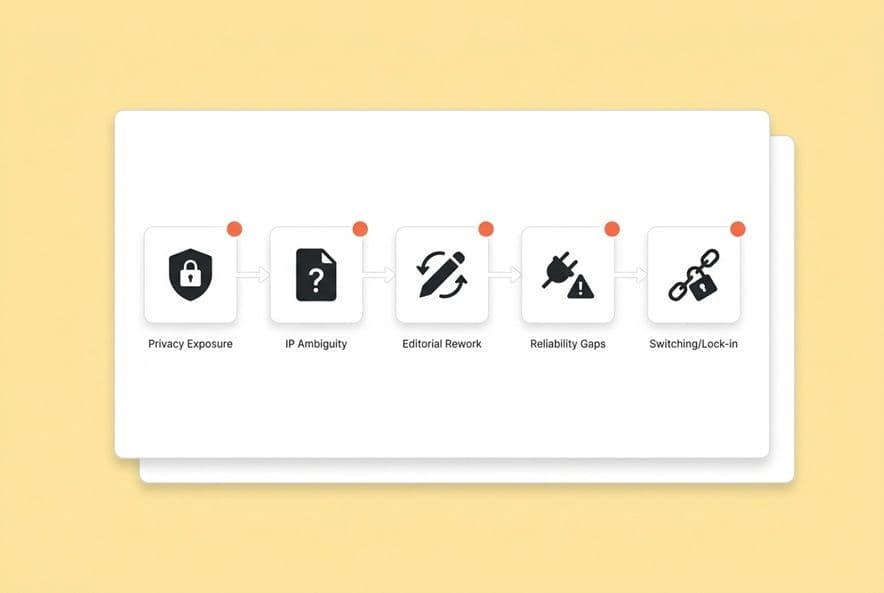

How to Manage Risk: Security, Legal, and Lock-in

This is the boring stuff that can save you from a disaster later. Don't skip it.

Security & privacy

When you use an AI platform, your data is processed by their systems. Before you upload a sensitive roadmap, you need to know where that data goes and if it's used for model training. Agencies are a human risk. NDAs are standard, but they are hard to enforce. An in-house team is the most secure but also carries the most operational overhead.

Contract and legal questions to ask

For AI platforms: Do you use my inputs to train your models? Who owns the final output? What happens to my data if I cancel? Can you share a current SOC 2 report?

For agencies: Who owns the copyright on the deliverables? What are the confidentiality and exit clauses if the quality drops?

For hires: Make sure your employment agreements have explicit work-for-hire provisions, so that anything created on company time with company tools is unambiguously company property.

Vendor lock-in

The biggest lock-in risk isn't your content; it's your process. If your entire workflow depends on one vendor's system, you're trapped. When you leave, you don't just lose files; you lose all the institutional knowledge baked into their process. Before you commit, ask: can I export everything in a standard format? Are my briefs portable?

How to Avoid "AI Detection" Problems and Use AI to Scale

Forget "detection risk." The real risk is publishing thin, generic, useless content that no one would ever cite. The detection tools are just a clumsy proxy for that quality signal. If you fix the quality, you don't have to worry about the detectors.

A playbook for using AI without sounding like it

- Add an original angle to every brief. Generic prompts create generic output. You have to specify the unique take before the draft starts.

- A human must own the intro and conclusion. This is non-negotiable. This is where your voice lives. Rewrite them yourself or give them to your best writer.

- Use your own data. Cite internal data, customer language, and product specifics. An AI can't invent your proprietary insights.

- Be disciplined about claims. Every factual claim should be traced to a source you can verify. This protects you from both misinformation and the appearance of AI hallucination.
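One cheap way to enforce claim discipline is to flag every sentence that contains a number but no citation. This is a heuristic, not a fact-checker. It assumes your drafts cite sources with bracketed markers like [1], and it will miss claims that don't contain digits. The sample sentences are made up. A minimal Python sketch:

```python
from __future__ import annotations

import re

CITATION = re.compile(r"\[\d+\]")             # assumed citation style: [1], [2], ...
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")   # split after ., !, or ? followed by whitespace


def unsourced_numeric_claims(text: str) -> list[str]:
    """Heuristic: sentences that contain a digit but no citation marker."""
    flagged = []
    for sentence in SENTENCE_END.split(text.strip()):
        if CITATION.search(sentence):
            continue  # has a source marker; verify the source itself separately
        if re.search(r"\d", sentence):
            flagged.append(sentence)
    return flagged


draft = ("Teams cut QA time by 40% last year. "
         "Our median onboarding takes 14 days [1]. "
         "Good writing still matters.")
print(unsourced_numeric_claims(draft))  # ['Teams cut QA time by 40% last year.']
```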

Where AI helps most (and where it hurts)

AI is fantastic at research synthesis, structural drafting, SEO optimization, internal linking, and repurposing. These are structured, repeatable tasks.

It struggles with original thought, genuine perspective, expert interviews, and the specific nuances of your brand. Don't automate the part of the process that makes your content worth reading.

If Leadership Is Asking About AEO, What's the Right Move?

Your CEO didn't ask about AEO (answer engine optimization) because they read a blog post. They saw a competitor show up in a ChatGPT answer. That's a signal that the entire game of discovery is changing. The investments you make in content now will have consequences far beyond Google rankings.

What "AI visibility" actually requires

AEO isn't just a new content format. It's a measurement and optimization loop. To do it right, you need to:

- Define the prompts your buyers use in ChatGPT, Perplexity, and Google's AI Overviews. These are full questions, not keywords.

- Track your mention and citation rate for those prompts. Are you mentioned? Do they link to you? Is a competitor always showing up instead?

- Attribute citations to specific pages. Which of your articles are earning these citations? What's different about them?

- Monitor what your competitors are doing. Which of their pages are winning citations and why?

Without this measurement, you're just guessing.
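Once you've logged the results, the first three steps reduce to a few ratios. Here's a minimal Python sketch. It doesn't query any AI engine (you'd collect the answers manually or with a tracking tool), and the prompts, URLs, and competitor names in the sample data are made up.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptCheck:
    prompt: str                      # the full buyer question, not a keyword
    engine: str                      # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    we_are_mentioned: bool
    our_cited_url: Optional[str]     # our page the answer linked to, if any
    competitors_mentioned: tuple[str, ...] = ()


def rates(checks: list[PromptCheck]) -> tuple[float, float]:
    """(mention rate, citation rate) across all logged prompt/engine pairs."""
    n = len(checks)
    mentioned = sum(c.we_are_mentioned for c in checks)
    cited = sum(c.our_cited_url is not None for c in checks)
    return mentioned / n, cited / n


def citations_by_page(checks: list[PromptCheck]) -> Counter:
    """Which of our URLs are earning citations, and how often."""
    return Counter(c.our_cited_url for c in checks if c.our_cited_url)


def competitor_only_prompts(checks: list[PromptCheck]) -> list[str]:
    """Prompts where a competitor appeared in an answer that left us out."""
    return sorted({c.prompt for c in checks
                   if c.competitors_mentioned and not c.we_are_mentioned})


# Made-up sample data; collect real answers manually or with a tracking tool.
checks = [
    PromptCheck("best content platform for series b saas", "ChatGPT", True,
                "https://example.com/blog/ai-vs-agency", ("CompetitorA",)),
    PromptCheck("best content platform for series b saas", "Perplexity", False,
                None, ("CompetitorA",)),
    PromptCheck("how to scale seo content without hiring", "ChatGPT", True, None),
    PromptCheck("how to scale seo content without hiring", "Google AI Overviews", True,
                "https://example.com/blog/ai-vs-agency"),
]
mention_rate, citation_rate = rates(checks)
print(f"mentioned {mention_rate:.0%}, cited {citation_rate:.0%}")  # mentioned 75%, cited 50%
print(citations_by_page(checks).most_common())
print(competitor_only_prompts(checks))
```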

Platforms like DeepSmith AI Visibility are built for this. It's what we use. It lets us track who's getting cited for the prompts our buyers use across all the major AI engines. This gives me something real to show the CMO instead of just saying, "I think we have a problem."

Choosing with AEO in mind

Regardless of which model you choose, your content needs to answer specific questions directly, include verifiable sources, use consistent language for your products and categories, and be published frequently. Agencies rarely think about this. Hires need to be trained on it. AI platforms with AEO built-in have a structural advantage.

How to use AEO insights

This is how you make it a system. Every month, review the prompts you're missing from. Identify the content gaps. Add briefs to your queue to target those specific prompts. Then, after you publish, track whether your citation rate improves over the next 4 to 8 weeks. That's a repeatable process, and it rolls up into a quarterly report your CMO will actually understand.
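The monthly loop can be sketched in a few lines of Python: turn uncited prompts into brief requests, wait at least four weeks after publishing, then compare citation rates over the same prompt set. The prompt labels, dates, and the `status` field on queue items are hypothetical; the 4-week minimum comes from the 4-to-8-week window above.

```python
from __future__ import annotations

from datetime import date, timedelta


def gap_prompts(cited: dict[str, bool]) -> list[str]:
    """Prompts we were not cited for this month; each one becomes a brief."""
    return sorted(p for p, ok in cited.items() if not ok)


def ready_to_evaluate(published: date, today: date, min_weeks: int = 4) -> bool:
    """Only judge a new page once the 4-to-8-week window has started."""
    return today - published >= timedelta(weeks=min_weeks)


def citation_lift(before: dict[str, bool], after: dict[str, bool]) -> float:
    """Change in citation rate over the prompts tracked in both periods."""
    shared = before.keys() & after.keys()
    if not shared:
        raise ValueError("no prompts were tracked in both periods")

    def rate(results: dict[str, bool]) -> float:
        return sum(results[p] for p in shared) / len(shared)

    return rate(after) - rate(before)


# Hypothetical prompt results: True means one of our pages was cited.
before = {"prompt A": False, "prompt B": True, "prompt C": False, "prompt D": False}
queue = [{"target_prompt": p, "status": "brief needed"} for p in gap_prompts(before)]
print(queue)
print(ready_to_evaluate(date(2024, 4, 1), date(2024, 4, 29)))  # True: exactly 4 weeks
after = {"prompt A": True, "prompt B": True, "prompt C": False, "prompt D": True}
print(citation_lift(before, after))  # 0.5
```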

The Best Default Operating Model for Series A–B SaaS

Here are four common scenarios I see and the path I'd recommend for each.

Scenario 1: "Our SEO is working, but I'm the bottleneck and we're stuck at 4 articles a month." → AI content generation platform. You have the strategy. You need production capacity without adding more management work. Configure the platform with your brand rules and then focus on reviewing, not producing.

Scenario 2: "Leadership is asking about AEO, and I have no idea where to start." → An AI platform with native AEO measurement. You need visibility first. You need the infrastructure to see where you stand before you can create content to fix it. An agency won't build this for you, and a new hire won't know the tools.

Scenario 3: "We're in a regulated space and every single claim has to be reviewed." → A hybrid model: AI-assisted drafting with a strict SME review. Use AI for research, structure, and the first draft. Then, route it through your legal or SME review process. Don't hire an agency that won't respect your process.

Scenario 4: "We lost a key freelancer and our output dropped by half last quarter." → AI platform as your production floor, freelancers for the editorial layer. Stop being dependent on individuals for volume. Make the AI system your reliable producer, and use talented freelancers for their editorial judgment, not their raw output.

For most Series A–B teams I talk to, the best path is AI-assisted production with internal strategy ownership and selective input from freelancers or SMEs. An AI platform like DeepSmith Content Studio can handle the entire assembly line: research, briefs, drafting, SEO, internal linking, and even publishing to the CMS. This frees your team to review and ship, not manage a production line. Features like Autowrite keep things moving, and the Agent Library can handle distribution so those LinkedIn posts actually get written.

Your 30-day rollout plan

- Week 1: Establish your baseline. Document your current hours per article, publish cadence, and revision cycles. Set a target.

- Week 2: Configure the system. If you chose a platform, set up your brand context and rules. If you chose an agency, get them onboarded. If you're hiring, post the job description.

- Week 3: Run a pilot with 2 articles. Use the full workflow. Every time your governance checklist catches something, that's a point where the system needs to be tuned.

- Week 4: Review and calibrate. Did the pilot articles require more or less work than your baseline? What broke? Adjust the process.

Your success metrics at 90 days are simple: did you hit your publish cadence? Did your time-per-article go down? Are your revision rounds stable or decreasing? And are you tracking at least one AEO metric?
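If you want the 90-day review to be a yes/no conversation, the four questions map directly onto a comparison with the baseline you documented in week 1. A minimal Python sketch; the field names and sample numbers are hypothetical, and "stable" is treated strictly as "no higher than baseline."

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Snapshot:
    articles_per_month: int
    hours_per_article: float
    revision_rounds: float      # average rounds per article
    aeo_metrics_tracked: int


def day90_scorecard(baseline: Snapshot, day90: Snapshot, target_cadence: int) -> dict[str, bool]:
    """The four 90-day questions as pass/fail checks against the week-1 baseline."""
    return {
        "hit publish cadence": day90.articles_per_month >= target_cadence,
        "time per article went down": day90.hours_per_article < baseline.hours_per_article,
        "revision rounds stable or decreasing": day90.revision_rounds <= baseline.revision_rounds,
        "tracking at least one AEO metric": day90.aeo_metrics_tracked >= 1,
    }


# Hypothetical numbers for illustration.
baseline = Snapshot(articles_per_month=4, hours_per_article=6.0, revision_rounds=2.5,
                    aeo_metrics_tracked=0)
day90 = Snapshot(articles_per_month=10, hours_per_article=3.5, revision_rounds=2.0,
                 aeo_metrics_tracked=2)
for question, passed in day90_scorecard(baseline, day90, target_cadence=10).items():
    print(f"{'PASS' if passed else 'FAIL'}  {question}")
```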

Questions to bring to your next call

For AI platforms:

- How do you enforce brand voice? Is it just a doc I upload, or is it structured data the system actually uses?

- What happens to my data? Do you use it for training?

- What does your publishing integration actually do?

- How is AEO measurement built in?

For agencies:

- Show me a sample brief. Who fills that out, you or me?

- What's your revision policy? When do you start charging more?

- How do you ensure product accuracy for a technical product like ours?

- What's the plan if the quality drops after three months?

For hiring:

- Can you write SEO-ready content without a separate SEO review?

- What tools are you used to, and how fast do you learn new ones?

- Show me a piece where you had to adopt a strict brand voice that wasn't your own.

Build Your Scorecard and Pick a Model

You have the variables. The next step is to apply them to your actual situation, not wait for a perfect dataset.

This week, run that 60-minute session with your team. Document your current hours per article. Calculate your baseline cost, then stress-test it at a higher volume. Map out where quality is breaking down. Go to ChatGPT and ask it the three questions your buyers are most likely to ask, and see if your content shows up.

Then, pick one model and pilot it for 30 days.

The teams that solve this production bottleneck are the ones who stop endlessly evaluating and start running a structured experiment. The governance and the cadence all follow from the commitment to try something systematically. You don't find the perfect vendor first; you build the right system.

If you want to see how an AI content production and visibility platform handles this from end to end, DeepSmith is worth a look. Bring your current workflow and your AEO questions. You should stress-test any potential partner before you decide.