I've seen this happen a dozen times. You're up against a deadline, you open up ChatGPT, and you feed it a topic. Forty-five seconds later, a 1,200-word draft appears. For a second, you feel like a genius.

Then you start reading.

The intro is mush. The structure is all wrong. The voice sounds like a robot from the 1980s. And buried in paragraph four is a "fact" that's just… not true. So you spend the next two hours fixing it.

That's the real cost of "free" AI. It isn't the zero dollars you paid. It's your time. It's the hours that vanish into editing drafts that are almost good enough.

Now, this isn't another one of those "AI is coming for your job" articles. Using AI to help create content is a smart move and a legitimate production strategy. My argument is simpler and, I think, more useful: those "free" AI tools have hidden costs that pile up fast.

We're talking about wasted time, exposed data, a brand voice that gets watered down with every article, and the massive headache of switching tools when you finally hit a wall.

I want to give you a framework for making a better decision. We'll walk through five specific costs, look at the legal reality, build a quick cost model you can actually use, and map out a plan to switch that won't blow up your content calendar.

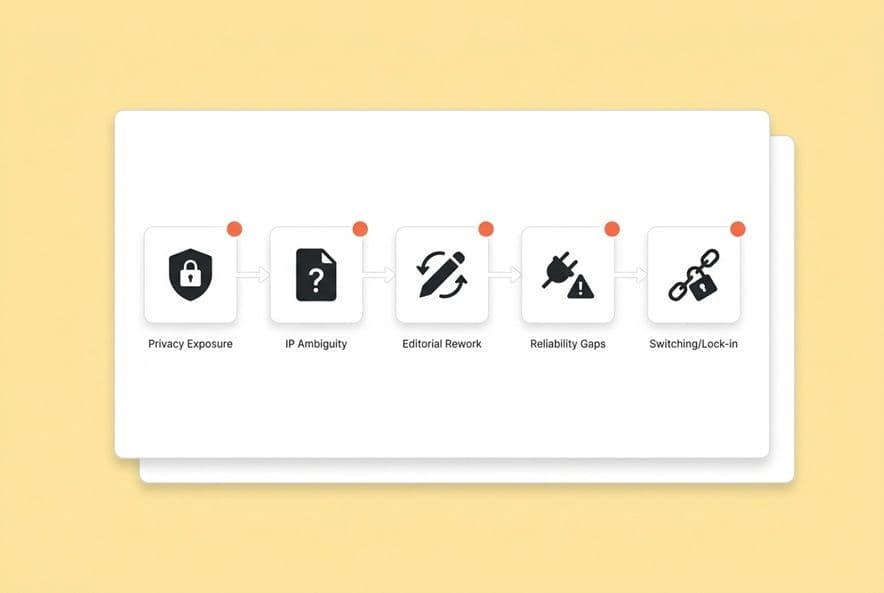

What are the 5 hidden costs of "free" AI content creation tools (and why do they show up fast)?

Let's just get right to it. You don't need a 500-word preamble to name five things.

Hidden cost #1: Data monetization and privacy exposure

Look, free-tier platforms have server bills to pay, and they aren't covering them out of the goodness of their hearts. The price you're paying is often your data. Every time your team pastes a brief, a new product positioning doc, or an internal competitive analysis into that free tool, you're rolling the dice. You have to assume that input could be saved, reviewed by a human, and used to train their model. The user interface (and let's be honest, those endless terms of service) rarely makes this clear.

Hidden cost #2: IP and content ownership ambiguity

This one is a legal minefield. Most free tools have terms that are foggy at best about who owns the words on the screen. Some even give the platform rights to use your prompts. For content that's supposed to be a core part of your brand, your SEO strategy, and your sales funnel, "we'll figure out the legal stuff later" is a recipe for disaster. I've seen it happen.

Hidden cost #3: Editorial rework and quality inconsistency

A generic draft that takes three hours to fix wasn't free. The cost of that article is your team's time. All the restructuring, rewriting, SEO work, voice fixing, internal linking, and fact-checking is real labor that just gets buried in your "editing" budget.

Hidden cost #4: Reliability and support gaps that break workflows

Free tools are notorious for going down at the worst possible moment. They get slow during peak hours. They cap your usage right when you're trying to hit a big deadline. And when one breaks? There's no one to call. Your whole content pipeline just stops. The cost is a missed launch or a backlog that haunts you for the rest of the quarter.

Hidden cost #5: Scaling + lock-in costs when you outgrow the free tier

All those amazing prompts you wrote, the templates you perfected, the specific workflow your team finally got used to… none of it moves with you. When you eventually need to upgrade to a real tool, you're not just paying to switch software. You're paying to rebuild your entire process from scratch, probably in the middle of a busy quarter when you can least afford it.

The fastest way to get ahead of these costs is to think about your content as a repeatable production line, not a series of one-off prompts. You want a pipeline where research, briefing, drafting, and publishing are connected. That's what platforms like DeepSmith's Content Studio are designed for. Your team's job becomes reviewing and publishing, not duct-taping the process together for every single article.

How do free AI writing tools monetize your data—and what should you assume is happening to your inputs?

This is the cost that sneaks up on everyone. It's not because we're naive; it's because the real risk isn't obvious until you see what your team is actually pasting into those little text boxes.

The common monetization models behind no-cost AI platforms

Free AI platforms have to make money somehow. Some state right in their terms that they use your inputs to train their models. Others use the free tier as a giant sales funnel, watching your usage data to decide what to build next. Some sell aggregated data. And some are just burning venture capital, hoping to figure out monetization later.

What do all these models have in common? The platform's goals and your privacy are not automatically aligned. You're making a trade every time you hit "generate," and most teams don't even realize they're at the negotiating table.

What content teams should treat as sensitive (even if it doesn't look like PII)

When I talk to other founders about sensitive data, they immediately think about PII: customer credit cards or Social Security numbers. But for a content team, the real danger is different. Just think about what gets pasted into a free AI tool on a random Tuesday:

- An unreleased feature announcement.

- Your honest assessment of a competitor's weaknesses.

- Customer quotes from a case study that haven't been approved yet.

- The entire messaging strategy for next quarter's launch.

- A draft with product claims that legal is still reviewing.

None of that is PII. All of it is incredibly valuable to your competition. Once you put it in that free tool, you're just hoping it disappears. Hope is not a strategy.

A practical mitigation checklist for using free AI tools safely

If you absolutely have to use free-tier tools for a bit longer, here are some guardrails that can reduce your risk.

- Redact before you paste. This is non-negotiable. Strip out product names, feature details, pricing, and customer names. Describe things generically.

- Use public-only inputs. If the information is already on your website or in a press release, go for it. The risk is low. If it's not public, it doesn't go in the prompt.

- Set a clear team rule on sensitive topics. Make a list of what's off-limits: internal metrics, customer data, M&A talk, legal drafts. Write it down.

- Log what was used where. A simple spreadsheet is fine. Just track which tool was used for which draft. If a problem comes up later, you'll know where to look.

- Require manager approval for anything with a client name. "Just for a draft" is how accidents happen. This is a hard-and-fast rule.
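If "redact before you paste" sounds tedious, here is a minimal sketch of how a team could automate the first pass. Everything in it is an assumption for illustration: the names in `SENSITIVE_TERMS` are made up, the price pattern only catches simple dollar amounts, and a script like this is a safety net, not a substitute for someone actually reading the prompt.

```python
import re

# Hypothetical blocklist: swap in your own product, feature, and customer names.
SENSITIVE_TERMS = {
    "Acme Analytics": "[PRODUCT]",
    "Project Falcon": "[FEATURE]",
    "Globex": "[CUSTOMER]",
}

# Matches simple dollar amounts such as $49, $1200, or $1,200.50.
PRICE_PATTERN = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?")


def redact(text: str) -> str:
    """Replace blocklisted names and dollar amounts with generic placeholders."""
    for term, placeholder in SENSITIVE_TERMS.items():
        text = re.sub(re.escape(term), placeholder, text, flags=re.IGNORECASE)
    return PRICE_PATTERN.sub("[PRICE]", text)


def is_safe_to_paste(text: str) -> bool:
    """Return True only if no blocklisted term or dollar amount remains."""
    lowered = text.lower()
    has_term = any(term.lower() in lowered for term in SENSITIVE_TERMS)
    return not has_term and PRICE_PATTERN.search(text) is None


draft_prompt = "Acme Analytics ships Project Falcon to Globex at $1,200.50 per seat"
clean_prompt = redact(draft_prompt)
```

A blocklist only catches what you thought to list, so treat a passing `is_safe_to_paste` check as "nothing obvious leaked," not as approval.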

What legal protections exist for AI-generated content—and how do you reduce IP risk without being a lawyer?

The short answer is, you have fewer protections than you think, and it's a lot more complicated than the simple headline "AI can't own copyright." (For the latest on how copyright law applies to AI-generated works, the U.S. Copyright Office's AI initiative is the most authoritative reference.)

The three IP questions to answer before you publish AI-assisted content

Before a single piece of AI-assisted content goes out under your brand, you need clear answers to three questions:

- Who owns the output? Most platforms say they don't own your output, but they give you a "license." A license is not the same as ownership. You need to know if the platform keeps any rights to the content you create.

- Can the platform use your inputs to train its models? This is the big one. If your prompts contain your secret sauce and those prompts get used for training, you've just donated your company's IP to a public resource.

- Does commercial use require a paid plan? Some free tools have terms that restrict "commercial use." If you're publishing content to get leads and drive revenue, that's commercial use.

What to look for in terms: training use, retention windows, and commercial rights

I'm not a lawyer, and this isn't legal advice. It's a reading list from one founder to another. When you scan a platform's terms, search for these things:

- Training language: Look for phrases like "we may use your inputs to improve our models." Compare it to "we do not train on user data" or "opt-out available." These are huge differences. (The NIST AI Risk Management Framework offers a useful lens for evaluating how platforms handle data governance and transparency.)

- Retention window: How long do they keep your data? Is there a way to delete it?

- Commercial rights: Do they explicitly allow commercial use on the free plan?

- Red flags: Watch out for broad language that gives them rights to "your content and data," makes deletion difficult, or gives them the right to sublicense your stuff. If you see that, it's time to talk to a real lawyer.

My point is to know what you're signing up for before you've built half your blog on a tool you don't understand.
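If you'd rather not search a terms page by hand, the reading list above can be sketched as a simple phrase scan. This is a rough illustration, not a legal review tool: the phrases in `WATCH_PHRASES` are my own picks based on the list above, real terms are worded in many different ways, and a hit only tells you which paragraph to read closely.

```python
# Illustrative phrases to search for, grouped by the question they relate to.
# The list is an assumption, not an exhaustive or authoritative set.
WATCH_PHRASES = {
    "training": ["improve our models", "do not train", "opt-out"],
    "retention": ["retention", "delete"],
    "commercial": ["commercial use"],
    "red_flags": ["your content and data", "sublicense"],
}


def scan_terms(terms_text: str) -> dict[str, list[str]]:
    """Return the watch phrases found in the terms, grouped by category."""
    lowered = terms_text.lower()
    hits: dict[str, list[str]] = {}
    for category, phrases in WATCH_PHRASES.items():
        found = [phrase for phrase in phrases if phrase in lowered]
        if found:
            hits[category] = found
    return hits


sample_terms = (
    "We may use your inputs to improve our models. "
    "You grant us the right to sublicense your content and data."
)
findings = scan_terms(sample_terms)
```

An empty result doesn't mean the terms are safe. It just means none of these exact phrases appeared, so you still need to read the sections on data use.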

Team-level governance: what your content ops policy should say (in plain English)

We don't have a 20-page policy. We have a one-pager, and honestly, that's all you need. It covers our ethical rules and our practical ones.

- Allowed vs. Prohibited Inputs: List what's safe (public info, generic topics) and what's banned (unreleased features, customer data). Be explicit.

- Review and Accountability: Every AI-assisted article must have a human's name on it. That person is accountable for the accuracy and quality before it's published. The AI is a tool, not a teammate.

- Attribution and Disclosure: Decide on your team's policy. Do you disclose AI use internally? To readers? In what situations? Make a consistent rule to avoid a thousand one-off debates.

- Approved Tools: Keep a short, approved list of tools. It's much easier to enforce a positive list ("use these") than a negative one ("don't use anything else").

Why "free" content creating AI tools increase your cost per article (and how to estimate it)

The draft is free. The article is not. I learned this the hard way.

Where the rework actually happens: brief → draft → SEO → internal links → CMS

Let's walk through what a real workflow with a "free" tool looks like. I bet this sounds familiar.

- Brief: You still have to write it. The free tool has no idea what your keyword strategy is or who you're trying to talk to.

- Draft: You get a generic first pass. Now the real work begins: rewriting the intro, fixing the structure, changing the voice, and hunting for made-up "facts."

- SEO: The free tool doesn't know your keyword targets or how you structure your headings. So you go back and manually rework it all.

- Internal links: The tool doesn't know your site's content map. So you have to manually find and add every single internal link.

- CMS publishing: You're still stuck with copy-pasting, cleaning up formatting, and finding images.

Every single one of those bullet points is your team's time and labor. And that has a cost.

A simple total cost of ownership model (with variables you can measure)

This isn't about creating a perfect P&L statement. It's about making the hidden costs visible. For one article made with a "free" tool, you can estimate your cost like this:

TCO per article = (Writer time [prompting + editing] + Editor time [rework + SEO + linking]) x Fully-loaded hourly rate + Tool overhead [e.g., Surfer/Clearscope] + Compliance risk [a weighted guess]

When I first ran this calculation for my own team, I was shocked. We found that the "free" draft was adding several hours of work per article. Use this formula, and replace your assumptions with measured numbers.
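To make the formula concrete, here is a small sketch you could adapt. The field names are my own labels for the variables in the formula, and the numbers in the example are placeholders I made up, not benchmarks. Plug in what your team actually measures.

```python
from dataclasses import dataclass


@dataclass
class ArticleCost:
    writer_hours: float      # prompting + editing
    editor_hours: float      # rework + SEO + linking
    hourly_rate: float       # fully-loaded hourly rate
    tool_overhead: float     # per-article share of tools such as Surfer/Clearscope
    compliance_risk: float   # a weighted guess, expressed in the same currency

    def tco(self) -> float:
        """Total cost of ownership for one article, following the formula above."""
        labor = (self.writer_hours + self.editor_hours) * self.hourly_rate
        return labor + self.tool_overhead + self.compliance_risk


# Placeholder inputs for illustration only.
example = ArticleCost(
    writer_hours=1.5,
    editor_hours=2.0,
    hourly_rate=60.0,
    tool_overhead=10.0,
    compliance_risk=5.0,
)
example_tco = example.tco()  # (1.5 + 2.0) * 60 + 10 + 5 = 225.0
```

Run it once with your "free" workflow numbers and once with the numbers from a paid pilot. The gap between the two is what you're really deciding about.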

If you want a deeper breakdown, use a dedicated TCO per article framework that separates labor, tooling, workflow overhead, and rework so you can compare "free" vs paid apples-to-apples.

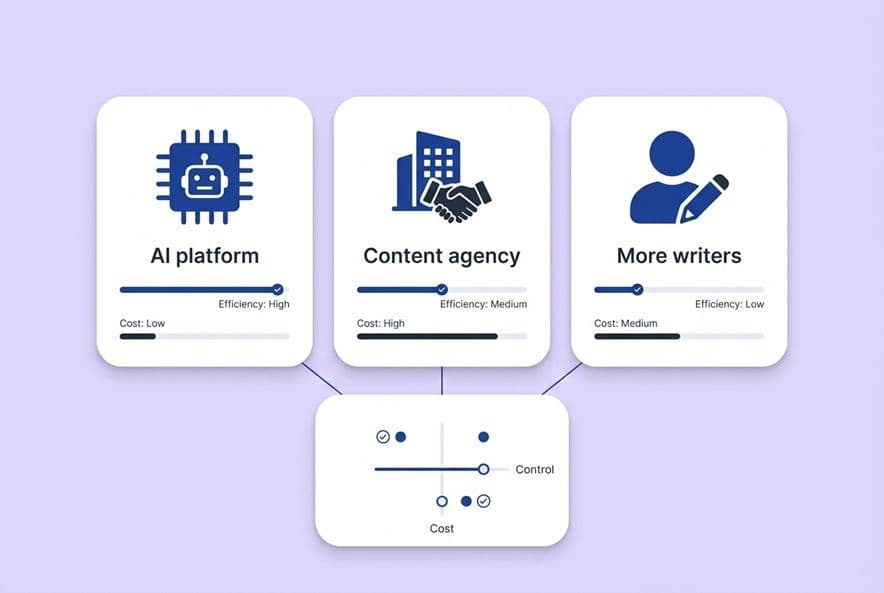

Free vs paid: what "ROI" should include for content teams

ROI isn't just about cranking out more drafts per hour. A real ROI calculation for content ops should include:

- Throughput consistency: Can you actually hit your publishing goals every single week?

- Rework reduction: How many drafts get to "published" without three rounds of heavy edits?

- Governance risk reduction: What's the potential cost of a data leak or an IP fight?

- Distribution follow-through: Are you publishing the content and then actually promoting it, or does that always fall off the to-do list?

- AI search visibility: Is your content being cited by tools like ChatGPT and Perplexity?

That last one is the new frontier. It's where the best teams are being measured now. To track it, you need to know what questions your buyers are asking AI, which of your pages get cited, and which competitors are eating your lunch. We built the AI Visibility module in DeepSmith to answer exactly that. It turns the "are we showing up?" question into a real metric you can track, not just a gut feeling.

When do free AI content generation tools fail at scale (and what are the early warning signs)?

You'll feel the system breaking before you can name the problem. The signs are small at first. The tool feels a little slower. You hit a usage cap at a bad time. A draft comes back and it's just... worse than it was last week. By the time the whole pipeline grinds to a halt, you're already playing catch-up.

Reliability failure modes: caps, throttling, outages, and no escalation path

Free tiers are designed to get you to upgrade, so the platform has no real incentive to make them reliable. When they throttle free users during busy hours, you lose a day. When there's an outage and no support email to write to, you just have to wait. If you run your whole content operation on free tools, your editorial calendar is completely at the mercy of a vendor who has no obligation to you.

One way around this is scheduling. For example, DeepSmith Autowrite lets you schedule article generation in advance based on a cadence you set. The pipeline keeps moving, even when your team is swamped.

Brand control limits: tone drift, inconsistent claims, and "AI-sounding" outputs

Free AI tools don't know your brand voice. They don't know your positioning. Every draft they create starts from a generic understanding of the world, learned from public text rather than from your company. The result is what I call "tone drift." Each article sounds a little bit different, and slowly your brand voice gets diluted into a generic sludge. Fixing that on every single article is a tax on your team's time and creativity.

If you're seeing this, it's usually a sign you need a structured source of truth for brand voice consistency, one that your whole team (and your AI workflow) can actually enforce.

Workflow scalability: collaboration, repeatability, and handoff problems

One person using ChatGPT can make it work. But add a second writer, a freelancer, and a stakeholder who needs to review everything, and suddenly you have four people using four different prompt styles, getting four different kinds of output, with no version control. Free tools are single-player games. Content operations is a team sport. The mismatch is invisible when you're small, but it becomes a huge problem as you scale.

How do you migrate from free AI tools to a paid, enterprise-grade workflow without losing momentum?

The number one reason I see teams stick with free tools for too long isn't the cost. It's the fear of breaking the fragile process they have. But you can make the switch without blowing everything up. Here's how.

Step 1: Audit your current AI usage (inputs, prompts, workflows, risk)

Before you can fix it, you have to see it. For one week, have your team log everything: which tools they use, what they're pasting into them, which prompts work well, and where the biggest time-sinks are. This audit will show you exactly what needs to be fixed first.

Step 2: Define "production-grade" requirements (security, workflow, voice, SEO/AEO)

Turn your team's complaints into a list of requirements. "The drafts sound generic" becomes a requirement: The platform must support our specific brand voice. "Internal linking takes forever" becomes: The platform must automatically suggest internal links. "We have no idea if we're in AI answers" becomes: The platform must track our AI citation rate. When your requirements are based on real problems, it's much easier to judge different vendors.

If you want a concrete comparison of the duct-taped stack vs a real system, see the ChatGPT + GDocs + Surfer breakdown and how integrated platforms remove the handoff friction.

Step 3: Run a parallel pilot and set pass/fail criteria

Don't just flip a switch. Pick a candidate platform and run it in parallel with your old system for 4-6 weeks on a few articles. Before you start, define what "success" looks like. Is it reducing rework time to under an hour per draft? Is it passing a blind review for brand voice? Can you get an article from brief to published in less than three hours? A pilot lets you make a decision based on data, not a sales pitch.
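Writing the criteria down before the pilot starts is easier if they're literally a checklist you can run. Here's a minimal sketch using the three example criteria above. The thresholds for rework and brief-to-publish time come from those examples. The 80% blind-review pass rate, the choice to judge averages across the pilot, and all the names are my assumptions, so set your own.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PilotArticle:
    rework_hours: float
    brief_to_publish_hours: float
    passed_blind_voice_review: bool


MAX_AVG_REWORK_HOURS = 1.0            # "under an hour per draft"
MAX_AVG_BRIEF_TO_PUBLISH_HOURS = 3.0  # "less than three hours"
MIN_VOICE_PASS_RATE = 0.8             # assumption: pick your own bar


def evaluate_pilot(articles: list[PilotArticle]) -> dict[str, bool]:
    """Return a pass/fail verdict for each criterion, averaged over the pilot."""
    if not articles:
        raise ValueError("The pilot needs at least one article to evaluate.")
    voice_pass_rate = mean(
        [1.0 if a.passed_blind_voice_review else 0.0 for a in articles]
    )
    return {
        "rework": mean([a.rework_hours for a in articles]) < MAX_AVG_REWORK_HOURS,
        "speed": mean([a.brief_to_publish_hours for a in articles])
        < MAX_AVG_BRIEF_TO_PUBLISH_HOURS,
        "voice": voice_pass_rate >= MIN_VOICE_PASS_RATE,
    }


# Made-up pilot results for illustration.
pilot = [
    PilotArticle(0.5, 2.5, True),
    PilotArticle(1.0, 2.0, True),
    PilotArticle(0.75, 3.0, False),
]
verdict = evaluate_pilot(pilot)
pilot_passed = all(verdict.values())
```

In this made-up run the platform clears the time criteria but fails on voice, which is exactly the kind of result a sales demo won't show you.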

Step 4: Cut over with templates, training, and governance

When you make the move, do it with structure. Migrate your best prompts into the new system. Publish your one-page content policy. And most importantly, train your team. The training shouldn't just be about the new tool. It should be about how to think critically about AI. Teach them how models work, how to write great prompts, and how to spot a subtle error or a logical flaw. This makes your team more resilient, not just more efficient.

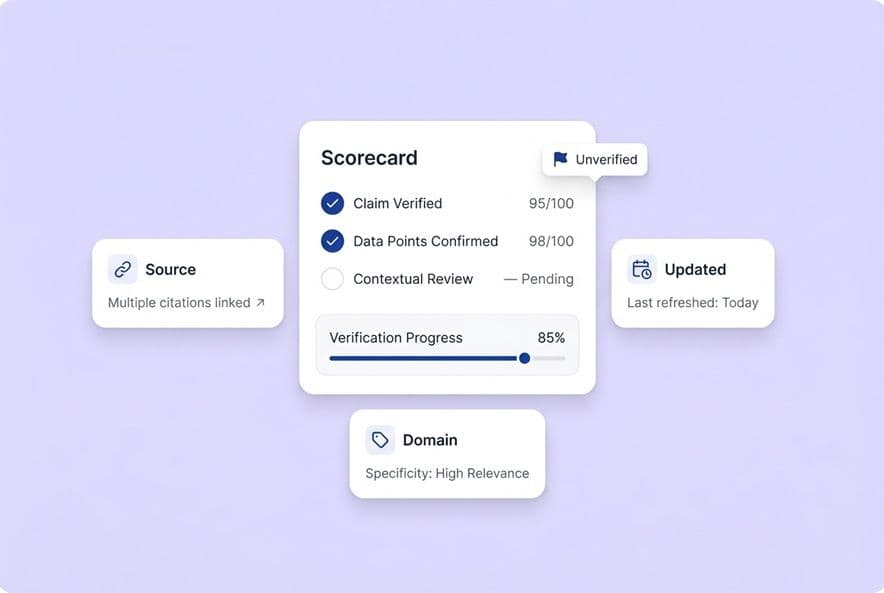

What should you look for in an AI content platform if your goal is SEO + AI visibility (AEO), not just faster drafts?

If your only metric is draft speed, you're solving yesterday's problem. The real goal is to create a consistent stream of high-quality content that performs well in both Google search and in AI-generated answers.

Evaluation criteria checklist (the non-negotiables vs nice-to-haves)

Non-negotiables:

- A clear policy on data retention and training (you want "no training" by default).

- Explicit rights for you to use the content commercially.

- The ability to enforce your brand voice and product context.

- SEO features that are part of the writing process, not an afterthought.

- Real support from a human being when things go wrong.

Nice-to-haves:

- Direct integration with your CMS.

- AEO / AI citation tracking.

- Tools for content gap analysis.

- Scheduled content generation.

How to evaluate "AI visibility" capability in a practical way

Don't just ask vendors, "Do you support AEO?" Everyone will say yes. Ask a better question: Can you show me which specific prompts our buyers are using, which of our pages are getting cited in AI answers, and how our citation rate compares to our top three competitors? That is a concrete, measurable outcome. If a vendor can show you a report with a prompt-level citation rate, you know they're serious.
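If it helps to picture what a "prompt-level citation rate" is, here's one way it could be calculated. This is my own simplified definition, not any vendor's method: for each buyer prompt, it's the share of sampled AI answers that cite a given domain. The prompts and domains below are invented, and how you collect the answers (by hand or from a tool's export) is up to you.

```python
from collections import defaultdict


def citation_rate_by_prompt(
    answers: list[tuple[str, set[str]]], domain: str
) -> dict[str, float]:
    """For each prompt, return the fraction of sampled answers citing `domain`.

    `answers` holds one (prompt, cited_domains) pair per AI answer you checked.
    """
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for prompt, cited_domains in answers:
        totals[prompt] += 1
        if domain in cited_domains:
            cited[prompt] += 1
    return {prompt: cited[prompt] / totals[prompt] for prompt in totals}


# Invented sample: three answers checked across two buyer prompts.
sampled_answers = [
    ("best crm for startups", {"ours.example", "rival.example"}),
    ("best crm for startups", {"rival.example"}),
    ("crm pricing comparison", {"ours.example"}),
]
our_rates = citation_rate_by_prompt(sampled_answers, "ours.example")
rival_rates = citation_rate_by_prompt(sampled_answers, "rival.example")
```

Running the same function for your domain and each competitor gives you the side-by-side comparison to ask a vendor for.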

If you need a practical framework for what "AEO" actually means in execution, start with the Answer Engine Optimization (AEO) pillars (answer structure, machine-readable signals, authority, and measurement).

Questions to ask vendors before you commit

- What is your data retention policy? How long do you store my inputs?

- Do you train your models on my data by default? How do I opt out?

- Who owns the content I generate? Are there any commercial use restrictions?

- If your platform goes down, what is your SLA and how do I get help?

- Can I export all of my data, prompts, and content if I decide to leave?

- How does your platform keep our brand voice consistent across our entire team?

Build a content workflow that's actually cheaper than "free"

A free AI writing tool is fine for a one-off experiment. It is a terrible production system. That gap is where all the hidden costs live: the rework, the data leaks, the brand confusion, and the fragile pipeline that's always one bad day away from breaking.

The teams that are winning right now aren't the ones with the biggest budgets. They're the ones who treat content like a manufacturing process and pick tools that support a real system. They use tools with clear data policies, real IP protection, and the reliability to keep their content calendar on track without anyone having to be a hero.

If you're tired of paying those hidden costs, the next step is to take a hard, honest look at your current workflow. Start there. Then build a list of your real requirements, and run a pilot before you buy anything. That's how you build a content operation that is genuinely cheaper than free.