You searched for your company's core use case in ChatGPT last week. A competitor showed up. You didn't. And now your CMO wants to talk about "AI search strategy" at the next all-hands.

Deep breaths. We've all had that pit-in-your-stomach moment.

Here's the uncomfortable truth I had to learn: this isn't a content problem, it's a systems problem. And it's about to become a competitive one. The shift from SEO to AEO (answer engine optimization) isn't a new checklist you can just tack on. It's a different operating model, a whole new way of working. It demands new roles, new ways to measure success, and a new definition of what "good content" even means.

I'm going to lay out the operating model that worked for us. We'll cover which roles change (and how), what a real AI-plus-human workflow looks like, how to govern quality when you're moving fast, and how to prove ROI to your boss beyond just "we published more." If you're a content director at a Series A or B SaaS company trying to compete without tripling your headcount, this is your blueprint.

What actually changes when you move from SEO to AEO (and why your org chart has to change with it)

How AEO changes what "winning" content looks like (answers, citations, extractable claims)

For years, winning in SEO was simple. You found a keyword, wrote a long article, and earned traffic. The page itself was the prize. In AEO, winning means something different: your page gets cited. An AI engine doesn't send users to browse your article. It reads it, pulls out the most useful claim, and serves it up as the answer, sometimes with a link back to you and, let's be honest, sometimes without.

This changes the job of your content entirely. To get cited, a piece of content needs:

- A clear, extractable answer right at the top. No more five-hundred-word intros that bury the lede in paragraph three.

- Structured formatting like headers, bullets, and defined terms that language models can easily parse.

- Attributable claims that feel credible. These are specific, sourced, or clearly framed as your company's official position.

- Contextual authority, which means your content signals deep expertise on this specific question, not just broad coverage of a topic.

The fundamental unit of work is different. In SEO, our world revolved around the keyword. In AEO, the unit is the prompt, the actual question a buyer types into an AI chat box. This means you have to think differently at the brief stage, not just when you're editing.

SEO vs. AEO deliverables (table): what you produce, what you measure, what you optimize

| Dimension | SEO | AEO |
|---|---|---|
| Primary work unit | Keyword / keyword cluster | Buyer prompt / question |
| Content goal | Rank for a term, drive traffic | Get cited as an answer |
| Content format | Long-form, keyword-rich article | Citation-ready, structured answer content |
| Optimization signal | Rankings, impressions, CTR | Mention rate, citation rate, prompt coverage |
| Measurement tool | Ahrefs, Semrush, GSC | AI visibility tracking (per platform) |
| Quality signal | Page authority, backlinks, dwell time | Extractability, claim specificity, structured formatting |
| Distribution goal | Indexed and ranked | Indexed, cited, and attributed |

To be clear, both still matter. This isn't an either-or situation. The best teams learn to do both at the same time. But they require different systems. You can't just bolt AEO onto your old SEO checklist and expect it to work.

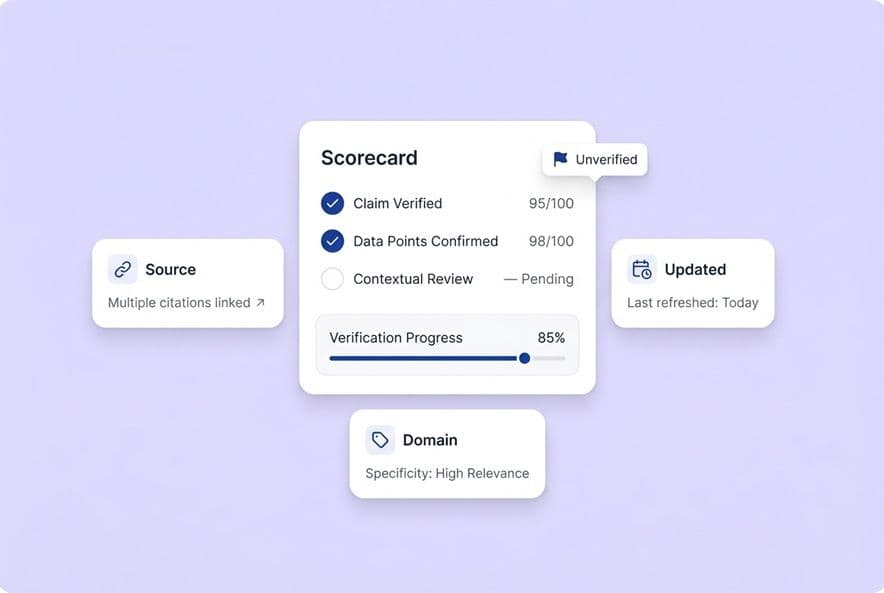

Newer platforms are finally making this measurable. You can define the prompts your buyers are asking, see where you show up across ChatGPT, Gemini, Perplexity, and others, and figure out which pages are earning you citations. That's how AEO stops being a guessing game and becomes a real feedback loop. You define prompts, see what gets cited, and prioritize your work accordingly.

The biggest misconception: adding AEO tasks onto your current process

This is where I see most teams stumble. They read an article like this, get the idea, and then just add "AEO optimization" to the SEO specialist's to-do list. The problem is that your team is already drowning. Adding a whole new class of work to the same people running a keyword-focused assembly line is a recipe for burnout and mediocre results.

The real problem isn't the workload. It's the design of the work. Your current roles were built for a world where you turn keywords into pages. AEO needs prompt-level thinking, someone verifying claims, a disciplined approach to formatting, and a new measurement loop. If you don't redesign the workflow, you're setting your team up to fail at both SEO and AEO.

What roles your content team needs in an answer-driven world (even if it's still a small team)

The new responsibilities no one owns today (AEO research, claim QA, distribution systems)

Take an honest look at your team. Who owns these things right now?

- Prompt mapping: Finding the actual questions your buyers are asking AI tools.

- Citation monitoring: Tracking which of your pages get cited and for which prompts.

- Claim QA: Making sure every factual claim in a draft is accurate, attributable, and doesn't promise something your product can't do.

- Distribution execution: Turning every published article into LinkedIn posts, newsletter copy, and social assets.

- Voice governance: Making sure all this AI-assisted content actually sounds like your brand.

In most teams I talk to, the answer is "nobody," or "the content director tries to do all of it at 11 PM on a Tuesday." Sound familiar? These aren't just nice-to-haves. They're the jobs that determine whether your AEO efforts actually drive the business forward or just create more content nobody sees.

3 practical team models (lean / balanced / scaled) you can adopt (table)

| Model | Headcount | Who Owns AEO | Key Trade-off |
|---|---|---|---|
| Lean (1–2 people) | Content lead + 1 writer | Content lead doubles as AEO lead; use AI systems to absorb production work | Requires strong tooling; content lead must protect strategy time |
| Balanced (3–4 people) | Content lead + 2 writers + SEO specialist | SEO specialist expands scope to include AEO tracking and prompt research | Most realistic for Series A–B; SEO role needs formal upskilling |
| Scaled (5+ people) | Adds dedicated editor + distribution specialist | Editor owns claim QA governance; distribution specialist owns multi-channel output | Enables true specialization; still benefits from connected AI tooling |

None of these models require a hiring spree. What they do require is formal ownership. Someone has to be accountable for prompt coverage and citation rates, even if it's just one part of their job.

How existing roles change (writers, editors, SEO) instead of being replaced

I hear this a lot: "Is AI going to replace my writers?" No. But their jobs are about to get a lot more interesting. According to the World Economic Forum's Future of Jobs Report, AI adoption works best when you plan to reskill your team, not replace them.

Writers shift from just drafting to strategic validation. We need to upskill them to:

- Validate AI drafts against the brief, focusing on the strategic angle and whether the claims are accurate.

- Strengthen the narrative, injecting the kind of real insight and perspective that AI can't fake.

- Master the brand voice, making sure the final piece sounds recognizably human and not like a generic edit.

Editors evolve from line checkers to QA architects. We need to train them to:

- Design review systems by creating checklists and review tiers so not every piece gets the same level of scrutiny.

- Implement governance by translating brand rules into the AI platform itself.

- Prevent errors at the source by building a system that stops them, not just catching them one by one.

SEO specialists expand their scope from keywords to prompts. We need to reskill them to:

- Own the AEO loop by using AI visibility tools to map buyer prompts and track citation rates.

- Translate AEO insights into briefs, closing the gap between what buyers ask and what you answer.

- Become prompt engineers, learning how to get citation-ready drafts from the start.

The "AEO lead" function: what it is and who can own it right now

You don't need to post a job for an "AEO Specialist" tomorrow. You just need someone on your team to formally own the AEO loop. This person defines the prompts your ideal customer uses, tracks citation rates, figures out what winning content has in common, and feeds that insight back to the team.

At most Series A or B companies, this falls to the SEO specialist or the content director. The title doesn't matter. What matters is the commitment. Someone needs to be running a weekly prompt review and connecting performance data back to content decisions.

How to build an AI + human workflow that produces high-quality, citation-ready content (not generic drafts)

The AEO-first content workflow (steps from topic → brief → draft → QA → publish → distribution)

Here's an end-to-end workflow you can actually use:

- Topic selection: Use prompt research alongside your keyword research. What are buyers asking AI tools? Prioritize topics where those two worlds overlap.

- Brief creation: Build briefs that are centered on the prompt. They should specify the exact claims to support and the formats (like FAQs or numbered lists) that will make the content easy to cite.

- AI-assisted drafting: Use a multi-agent pipeline, not a single "write me a blog post" prompt. Each step, like research, drafting, and an initial QA pass, should be a discrete stage with its own quality check.

- Human QA: A writer or editor reviews the draft for strategic alignment, claim accuracy, voice, and extractability. This shouldn't be a full rewrite. If this step takes more than 30–40 minutes, your AI output needs work.

- Optimization layer: Things like internal links and metadata should be built into the draft, not tacked on at the end. That last-minute scramble is the bottleneck you're trying to kill.

- CMS publishing: Push directly to your CMS. No more copy-paste cycles that break formatting.

- Distribution: Turning the article into social posts and newsletter copy should be a standard part of the workflow, not a separate project that gets skipped when you're busy.

This works because the steps are connected. Research feeds briefs, briefs feed drafts, and performance data from your last piece of content informs your next topic. A connected pipeline is how you scale without burning out your team.
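To make "discrete stages, each with its own quality check" concrete, here's a minimal Python sketch of a gated pipeline. Everything in it is an assumption for illustration: the `Draft` and `Stage` shapes, the stage names, and the placeholder functions are hypothetical, and a real setup would call your actual research and drafting tools at each step.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    """Working state handed from stage to stage (hypothetical shape)."""
    prompt: str                                   # the buyer question this piece answers
    body: str = ""
    notes: list[str] = field(default_factory=list)


@dataclass
class Stage:
    name: str
    run: Callable[[Draft], Draft]     # does the work for this stage
    check: Callable[[Draft], bool]    # quality gate for this stage


def run_pipeline(draft: Draft, stages: list[Stage]) -> Draft:
    """Run stages in order and stop at the first failed quality gate."""
    for stage in stages:
        draft = stage.run(draft)
        if not stage.check(draft):
            raise ValueError(f"quality gate failed at stage: {stage.name}")
        draft.notes.append(f"{stage.name}: passed")
    return draft


# Placeholder stage functions; real ones would call research and drafting tools.
def research(d: Draft) -> Draft:
    d.notes.append(f"sources gathered for: {d.prompt}")
    return d


def write_draft(d: Draft) -> Draft:
    d.body = f"Short answer: ...\n\nDetails for '{d.prompt}' ..."
    return d


stages = [
    Stage("research", research, lambda d: len(d.notes) > 0),
    # Gate: the draft must open with the extractable answer.
    Stage("draft", write_draft, lambda d: d.body.startswith("Short answer")),
]

result = run_pipeline(Draft(prompt="What is AEO?"), stages)
print(result.notes)
```

The point of the design is that a bad research pass fails loudly at the research gate instead of surfacing as a bad draft during human QA.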

Platforms like DeepSmith are built this way. They run a pipeline that handles research, drafting, voice humanization, internal linking, and even CMS publishing. Your team's job shifts from running an assembly line to being quality judges.

Where AI agents help most (and where they hurt): a responsibility map

| Task | Best Owner | Why |
|---|---|---|
| Research aggregation | AI | It's fast, exhaustive, and consistent. |
| Brief creation | AI + human review | AI can draft, but a human has to validate the angle. |
| First draft | AI | It beats the blank page and gives you something to react to. |
| Editorial angle / POV | Human | AI is a great summarizer; it's a terrible storyteller. |
| Claim verification | Human (required) | AI hallucinates with confidence. This part is non-negotiable. |
| Internal linking | AI | This is pattern matching, not a judgment call. |
| Metadata + formatting | AI | It's a structural, rule-based task. |
| Distribution asset generation | AI | Repurposing is a transformation task, not a creative one. |
| Strategy and prioritization | Human | This requires business context and competitive insight. |

The most common failure I see is teams asking AI to handle claim verification and come up with a unique editorial angle. You get generic, inaccurate content every time. Use AI for the mechanical work so your people can focus on strategy and accuracy.

Editorial QA as a system: defining review gates, checklists, and escalation rules

Stop reviewing everything with the same level of intensity. It's a waste of your best people. Not every piece of content carries the same risk.

I recommend three review tiers:

- Tier 1 (Quick pass, 15 min): For repurposed social content or newsletter intros. Check the voice, make sure there are no fake claims, and see that the formatting is right.

- Tier 2 (Standard review, 30–40 min): For blog posts and pillar pages. This is a full claim QA, a check of the editorial angle, and a review of internal links.

- Tier 3 (Deep review, 60+ min): For product-heavy content, case studies, or comparison pages. This requires a full accuracy audit and maybe even a sign-off from legal or compliance.

Define your escalation rules. For example, any claim about the product that can't be verified internally gets escalated to Tier 3. Anything that mentions a competitor gets escalated. This way, your best editors spend their time on the things that actually matter, not line-editing a LinkedIn post.
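If it helps to see the tiers and escalation rules as logic rather than a doc, here's a rough Python sketch. The content-type keys, the `Piece` fields, and the choice to send competitor mentions straight to Tier 3 are my assumptions for the example; adjust them to your own rules.

```python
from dataclasses import dataclass

# Base tier per content type (keys are hypothetical labels).
BASE_TIER = {
    "social_post": 1,
    "newsletter_intro": 1,
    "blog_post": 2,
    "pillar_page": 2,
    "product_page": 3,
    "case_study": 3,
    "comparison_page": 3,
}


@dataclass
class Piece:
    content_type: str
    has_unverified_product_claim: bool = False
    mentions_competitor: bool = False


def review_tier(piece: Piece) -> int:
    """Return the review tier (1-3) for a piece of content."""
    # Unknown content types fall back to the standard review.
    tier = BASE_TIER.get(piece.content_type, 2)
    # Escalation rules override the base tier.
    if piece.has_unverified_product_claim or piece.mentions_competitor:
        tier = 3
    return tier


print(review_tier(Piece("social_post")))                            # quick pass
print(review_tier(Piece("social_post", mentions_competitor=True)))  # escalated
```

Keeping the rules in one small function means nobody has to remember them at 11 PM; the tier is assigned when the piece enters the queue.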

How to operationalize internal linking, metadata, and formatting without slowing down production

This is the "last 40%" of the work that eats up your entire day. I used to spend hours every week just hunting for internal linking opportunities. It's soul-crushing and a terrible use of a strategist's time.

These are rule-based tasks, perfect for AI.

Set the expectation that drafts come out of the AI pipeline with internal links, metadata, and structured formatting already done. When they arrive pre-done, your review time shrinks from an hour to a quick judgment pass on quality. That's the structural change that unlocks real speed.

How to standardize brand voice and accuracy across AI-generated outputs (without turning editors into hall monitors)

Define "claim boundaries": what the content can and can't assert (and how to enforce it)

Claim boundaries are rules about what your content can and cannot say, especially about your product, customer results, and competitors. This is the part that kept me up at night. Without explicit boundaries, AI will invent plausible-sounding "facts" that simply aren't true. This is the single biggest risk when you operate at scale.

We defined four categories:

- Approved claims: Specific product capabilities you can state anywhere.

- Contextual claims: Things that are true only in certain situations and must be qualified.

- Prohibited claims: Fake statistics, unverifiable customer outcomes, and any "best in class" superlatives.

- Escalation claims: Any claim about pricing, legal, or a competitor that requires another set of eyes.

Write these down. More importantly, build them into your AI system's context, not just a style guide that lives forgotten in a Google Doc.
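Here's one way the four categories could look as machine-readable rules, sketched in Python. The phrases and patterns are made-up examples, not real product claims, and simple keyword matching is an assumption I'm using to keep the sketch short. Treat it as a first-pass flag that routes sentences to a human, not a replacement for claim QA.

```python
import re
from enum import Enum


class ClaimCategory(Enum):
    APPROVED = "approved"
    CONTEXTUAL = "contextual"
    PROHIBITED = "prohibited"
    ESCALATE = "escalate"
    UNREVIEWED = "unreviewed"   # matched no rule; a human decides


# All phrases and patterns below are hypothetical examples.
APPROVED_PHRASES = ["publishes directly to your cms"]
CONTEXTUAL_PHRASES = ["cuts review time"]   # only true with a qualifier
PROHIBITED_PATTERNS = [r"\bbest[- ]in[- ]class\b", r"\bguaranteed?\b"]
ESCALATION_PATTERNS = [
    r"\bpric(?:e|es|ing)\b",
    r"\blegal(?:ly)?\b",
    r"\bcompetitors?\b",
]


def classify_claim(sentence: str) -> ClaimCategory:
    """Map one sentence to a claim category using the rule lists above."""
    text = sentence.lower()
    # Check the riskiest categories first so they win when rules overlap.
    if any(re.search(p, text) for p in PROHIBITED_PATTERNS):
        return ClaimCategory.PROHIBITED
    if any(re.search(p, text) for p in ESCALATION_PATTERNS):
        return ClaimCategory.ESCALATE
    if any(phrase in text for phrase in CONTEXTUAL_PHRASES):
        return ClaimCategory.CONTEXTUAL
    if any(phrase in text for phrase in APPROVED_PHRASES):
        return ClaimCategory.APPROVED
    return ClaimCategory.UNREVIEWED


draft_sentences = [
    "Our platform publishes directly to your CMS.",
    "It cuts review time for every team.",
    "We are the best-in-class option.",
    "Our pricing beats every competitor.",
]
for s in draft_sentences:
    print(f"{classify_claim(s).value:>11}  {s}")
```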

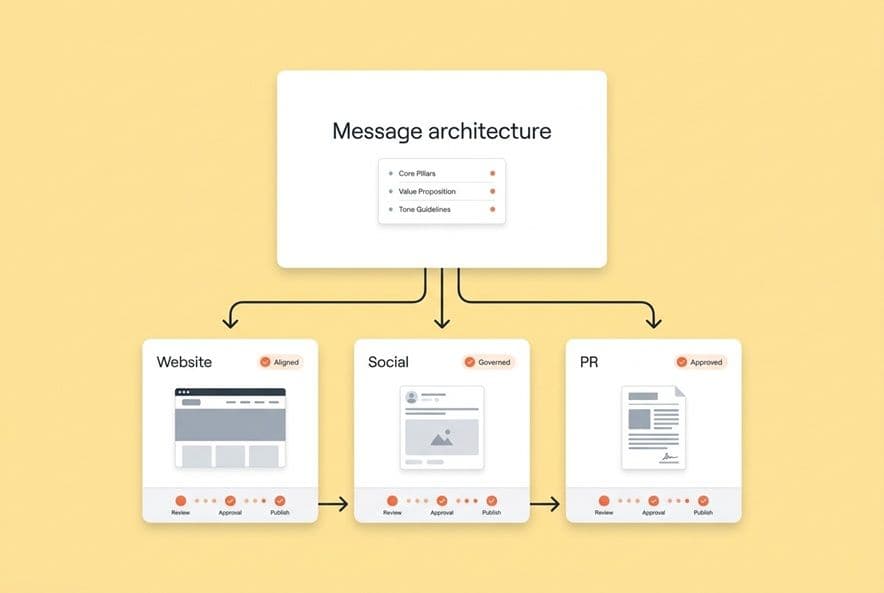

Voice consistency across formats: blog vs. LinkedIn vs. newsletter (and why drift happens)

Voice drift isn't a writing problem, it's a context problem. When you ask an AI to write a blog post, then a LinkedIn caption, then a newsletter, you're using different prompts and getting three outputs that sound like they came from different companies.

More editing isn't the fix. The fix is a structured source of truth. You need your positioning language, product descriptions, and tone guidance injected automatically into every single output.

This is what a system like DeepSmith's Deep IQ does. It stores your company facts, voice, and content templates as structured context that shapes every draft, no matter the format. The context travels with the system, so you're not re-briefing the AI every time.

The minimum governance toolkit: style rules, source rules, examples library, approval thresholds

Forget the 40-page brand bible nobody reads. You need a minimum viable toolkit:

- Style rules: 3–5 core principles for your voice (e.g., "pragmatic, not academic").

- Source rules: A list of approved sources for stats and a rule for disclosing AI-assisted facts.

- Examples library: 5–10 "this is what we sound like" samples, one for each format.

- Approval thresholds: A simple doc explaining which content type gets which review tier.

This toolkit should live inside your AI system, not in a PDF.
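As a rough illustration of "inside the system, not in a PDF," the toolkit can be stored as structured data and turned into a context block that gets prepended to every generation request. This Python sketch is an assumption about shape only: the `GovernanceToolkit` class, its field names, and the sample values are hypothetical, and it doesn't describe how any particular platform stores this.

```python
from dataclasses import dataclass


@dataclass
class GovernanceToolkit:
    style_rules: list[str]
    approved_sources: list[str]
    examples: dict[str, str]        # format name -> "this is what we sound like" sample
    review_tiers: dict[str, int]    # content type -> review tier

    def context_for(self, fmt: str) -> str:
        """Build the shared context block for one output format."""
        lines = ["Voice rules:"]
        lines += [f"- {rule}" for rule in self.style_rules]
        lines.append("Approved sources for statistics:")
        lines += [f"- {source}" for source in self.approved_sources]
        example = self.examples.get(fmt)
        if example:
            lines.append(f"Example of our voice ({fmt}):")
            lines.append(example)
        return "\n".join(lines)


# Sample values are placeholders, not recommendations.
toolkit = GovernanceToolkit(
    style_rules=["pragmatic, not academic", "lead with the answer"],
    approved_sources=["internal product analytics", "published customer case studies"],
    examples={"linkedin": "We cut our review cycle in half. Here's the one change that did it."},
    review_tiers={"social_post": 1, "blog_post": 2, "case_study": 3},
)

print(toolkit.context_for("linkedin"))
```

Because the same object feeds the blog, LinkedIn, and newsletter requests, the voice rules can't quietly diverge between formats.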

Collaborative workflows that make writers better (templates, exemplars, reusable modules)

Here's the counterintuitive part about governance. When it's done right, it frees up your writers instead of constraining them. A writer who starts with a structured brief and a brand-consistent draft can focus on what humans do best: the angle, the insight, the story. That's way more engaging than reformatting headers and chasing down internal links.

Templates and reusable content modules, like a standard way to open a post or frame a product mention, reduce the mental load of trying to apply brand standards from scratch every single time. Your team ships better work, faster, and feels less like they are on an assembly line.

How to mitigate AI content detection risk and protect search performance (without fear-based rules)

What "detection risk" really means in practice (quality signals, trust, and consistency)

Everyone's worried about AI detection, but they're often worried about the wrong thing. The real risk isn't that a tool flags your content and you get penalized. The risk is quality. It's producing content that reads like every other AI-generated article, offers no unique perspective, and signals to both readers and search engines that it's a cheap commodity.

Search engines are getting better and better at rewarding genuine helpfulness and penalizing thin, unhelpful content, no matter how it was made.

The risk you need to manage is that ungoverned AI content tends to be generic, full of hedged language, and completely surface-level. That pattern is what tanks performance, not the fact that an AI was involved.

A process-based mitigation checklist (originality, sourcing, editing depth, formatting, helpfulness)

- Originality: Does this piece have a clear point of view? Does it include specific examples or a unique way of framing the problem?

- Sourcing: Are your factual claims backed by real sources or clearly framed as your company's position?

- Editing depth: Did a human review this for substance, not just for typos?

- Formatting: Is the piece structured for a human reader? Do the headers and bullets actually help someone understand the content?

- Helpfulness: Does this piece honestly answer the question better than what's already out there? If not, don't publish it.

When AI is the wrong tool for the job (and what to do instead)

AI is the wrong choice for original research, genuine expert opinion, or anything where a specific author's voice is the main attraction. For thought leadership from your CEO or deeply technical customer stories, AI can be a research assistant, but it shouldn't be the author.

Knowing when not to use AI is just as important as knowing when to use it.

How to measure ROI from AI content generation platforms beyond "we shipped more"

KPI map: efficiency metrics vs. performance metrics vs. business outcome metrics (table)

| Category | KPI | What It Measures |
|---|---|---|
| Efficiency | Articles published per month | Production velocity |
| Efficiency | Time per article (brief to publish) | Workflow compression |
| Efficiency | Cost per published article | Budget efficiency |
| Performance | Organic traffic per published piece | SEO effectiveness |
| Performance | AI mention rate / citation rate | AEO effectiveness |
| Performance | Prompt coverage % | How much of the buyer question space you own |
| Performance | Content engagement (time on page, scroll depth) | Audience resonance |
| Business Outcome | Leads attributed to content | Pipeline contribution |
| Business Outcome | MQL → SQL conversion from content-sourced leads | Revenue quality |
| Business Outcome | Content-influenced pipeline | Downstream revenue impact |

Efficiency metrics will get the tool approved. Performance metrics prove your channel is working. Business outcome metrics will protect your budget when things get tight. You need to track all three, but always lead with business outcomes when you talk to leadership.
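A few of these KPIs are simple ratios, so here's a small Python sketch of the arithmetic. The field names and the sample numbers are invented purely for illustration; plug in your own monthly figures.

```python
from dataclasses import dataclass


@dataclass
class MonthlyContentStats:
    articles_published: int
    total_hours: float      # brief-to-publish hours across all articles
    total_cost: float       # tooling plus people cost for the month
    mqls: int               # content-sourced marketing-qualified leads
    sqls: int               # of those, how many became sales-qualified


def kpi_summary(s: MonthlyContentStats) -> dict[str, float]:
    """Compute per-article efficiency and MQL-to-SQL conversion."""
    n = s.articles_published
    return {
        "hours_per_article": s.total_hours / n if n else 0.0,
        "cost_per_article": s.total_cost / n if n else 0.0,
        "mql_to_sql_rate": s.sqls / s.mqls if s.mqls else 0.0,
    }


# Illustrative numbers only.
stats = MonthlyContentStats(
    articles_published=12, total_hours=60, total_cost=6000, mqls=40, sqls=10
)
print(kpi_summary(stats))
# {'hours_per_article': 5.0, 'cost_per_article': 500.0, 'mql_to_sql_rate': 0.25}
```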

Measuring AEO: prompts, citations, pages, and competitor benchmarks

Measuring AEO requires a different toolkit. You need to know which prompts your customers are using, whether you show up in the answer, which of your pages got cited, and how you stack up against the competition.

If you want the nuts and bolts, start with how to measure AI search citations so you can separate mentions from citations and build a prompt library you can actually track.
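To show what separating mentions from citations can look like in practice, here's a minimal Python sketch. The definitions are my working assumptions, not an industry standard: a mention means your brand is named in the answer, a citation means the answer links to one of your pages, and prompt coverage is the share of tracked prompts where you're cited on at least one platform. The prompts and results are placeholder data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    prompt: str
    platform: str
    mentioned: bool   # brand named in the answer
    cited: bool       # answer links to one of your pages


def aeo_metrics(observations: list[Observation]) -> dict[str, float]:
    """Compute mention rate, citation rate, and prompt coverage."""
    total = len(observations)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "prompt_coverage": 0.0}
    prompts = {o.prompt for o in observations}
    covered = {o.prompt for o in observations if o.cited}
    return {
        "mention_rate": sum(o.mentioned for o in observations) / total,
        "citation_rate": sum(o.cited for o in observations) / total,
        "prompt_coverage": len(covered) / len(prompts),
    }


# Placeholder tracking data for two prompts across platforms.
observations = [
    Observation("best crm for startups", "chatgpt", mentioned=True, cited=True),
    Observation("best crm for startups", "perplexity", mentioned=True, cited=False),
    Observation("how to migrate crm data", "chatgpt", mentioned=False, cited=False),
    Observation("how to migrate crm data", "gemini", mentioned=False, cited=False),
]

print(aeo_metrics(observations))
# {'mention_rate': 0.5, 'citation_rate': 0.25, 'prompt_coverage': 0.5}
```

Tracking per observation (one prompt on one platform) keeps the per-platform breakdown available later without changing the data model.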

Setting expectations: where ROI shows up fast vs. where it's lagging

Efficiency ROI shows up almost immediately. You'll feel the workflow get faster within weeks. SEO performance will compound over months, just like it always has. AEO has the longest horizon. Citation rates build slowly as you produce more citable content and the AI platforms index it. You have to set this expectation with leadership now.

What moves fast? Your team's morale when they get to stop doing the boring stuff, and your total output when the pipeline runs without you manually pushing every single step forward.

How to evaluate an AI content generation platform for SEO + AEO (questions to ask before you buy)

Platform evaluation criteria (table): workflow depth, governance, integrations, AEO measurement, repurposing

| Criterion | What Good Looks Like | Red Flag |
|---|---|---|
| Workflow depth | A connected pipeline from research to CMS. | "Export to Google Docs, then publish manually." |
| Governance | Structured brand context that automatically shapes every output. | A style guide uploader that's applied inconsistently. |
| AEO measurement | Per-prompt citation tracking with competitor benchmarks. | "We're working on AEO features." |
| Integrations | Native CMS publishing and GSC data integration. | API-only with no out-of-the-box connections. |
| Repurposing | Built-in tools to generate distribution assets. | Manual export and separate repurposing tools. |
| Internal linking | Happens automatically during the draft generation. | You get a manual suggestion list at the end. |

Red flags that predict failed adoption (and how to spot them in a demo)

Watch out for demos that only show perfect outputs and never show the review workflow. Be wary of tools that require massive upfront configuration. And if a tool looks great on generic topics but falls apart when you test it on your actual niche, walk away.

Here's my favorite question to ask in every demo: "Show me what happens when the AI makes a product claim that's wrong." Their answer will tell you everything you need to know about their governance model.

The rollout plan: 30/60/90 days to implement without stalling production

Days 1–30: Start with governance. Before you generate a single article, load your company facts, voice rules, and claim boundaries into your AI system's structured context (in DeepSmith, that's Deep IQ). Pick one content type (like your blog) and run 3–5 articles through the full pipeline. Measure the time it takes.

Days 31–60: Expand to a second content type. Start generating distribution assets for everything you publish. Set up AI visibility tracking for your top 10-15 buyer prompts and get a baseline. Find your top three citation gaps and add them to the content queue.

Days 61–90: Formalize the AEO lead function. Have that person run a weekly prompt review. Set a quarterly target for improving your citation rate. And present a real ROI dashboard to leadership showing both efficiency gains and performance trends. By day 90, you'll know if the tool is working. Don't let a failing rollout drag on because of sunk costs.
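For the "find your top three citation gaps" step in days 31–60, here's a rough Python sketch of one way to rank them from your baseline. It assumes a gap is any tracked prompt where you aren't cited, and that the AEO lead assigns each prompt a 1-to-5 business priority; both the scoring and the sample prompts are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptBaseline:
    prompt: str
    you_cited: bool
    competitors_cited: int   # how many competitors the answers cite
    priority: int            # 1 (low) to 5 (high), set by the AEO lead


def top_citation_gaps(baseline: list[PromptBaseline], limit: int = 3) -> list[PromptBaseline]:
    """Return the highest-priority prompts where you are not cited."""
    gaps = [p for p in baseline if not p.you_cited]
    # Rank by business priority first, then by how crowded the answer already is.
    ranked = sorted(gaps, key=lambda p: (p.priority, p.competitors_cited), reverse=True)
    return ranked[:limit]


# Placeholder baseline data.
baseline = [
    PromptBaseline("best onboarding software", False, 3, 5),
    PromptBaseline("how to reduce churn", True, 2, 5),
    PromptBaseline("onboarding checklist template", False, 1, 3),
    PromptBaseline("what is product-led growth", False, 2, 3),
    PromptBaseline("saas onboarding benchmarks", False, 0, 4),
]

for gap in top_citation_gaps(baseline):
    print(gap.prompt)
```

Those three prompts are what go into the content queue, and the same list is a natural agenda for the weekly prompt review.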

Build your AEO-ready content pipeline (without adding headcount)

The content directors who are winning right now aren't the ones who just added more tasks to their team's plate. They're the ones who redesigned how the work flows, with structured governance, connected pipelines, and measurement that ties AI output to real business outcomes.

If you're still manually managing briefs, chasing internal links, and watching distribution slip every single week, changing your operating model isn't optional anymore.

DeepSmith connects research, drafting, QA, brand governance, publishing, distribution, and AI visibility tracking into a single pipeline. Your team stops running an assembly line and starts doing the strategic work that actually moves the needle.

See how it maps to your current workflow and what could change on day one.